Applying neuroscience to robot vision

May 17, 2011

Researchers at the Robotic Intelligence Laboratory of the Universitat Jaume I (UJI) in Spain have developed robots that attempt to replicate human behavior related to vision, gripping objects, and spatial perception, as part of a major European study.

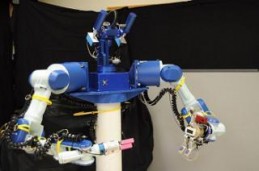

A robot head with moving eyes was integrated into a torso with articulated arms, built using computer models derived from animal and human biology.

The robot head uses an advanced 3-D visual system, synchronized with the robotic arms, that allows the robot to observe and be aware of its surroundings and to remember the contents of the images it captures in order to act accordingly.

The research included recording the activity of monkey neurons engaged in visual-motor coordination. Saccadic eye movement, the rapid shift of gaze that accompanies a change of attention, was the first feature implemented in the vision system.

Using the neural data collected from the monkeys, the researchers developed computer models of the section of the brain that integrates images with movements of eyes and arms.

They then developed a neural network that allowed the robots to learn how to look, construct a representation of the environment, preserve the appropriate images, and use their memory to reach for objects, even if these are out of their sight at that moment.

The EYESHOTS (Heterogeneous 3-D Perception Across Visual Fragments) project was funded by the European Union through the Seventh Framework Programme and coordinated by the University of Genoa (Italy).