DARPA seeks probabilistic inference-based intel/recon sensor processing system to minimize energy requirements

August 27, 2012

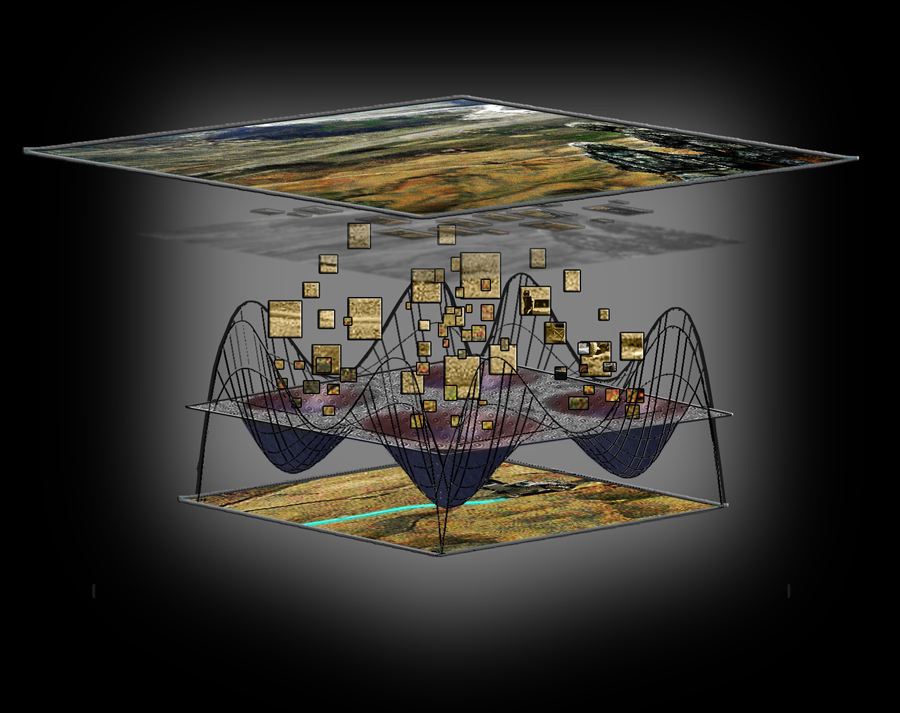

Artist’s concept. Through the UPSIDE program, intelligence, surveillance and reconnaissance sensor data is analyzed by an array of self-organizing devices. The array processes the data using inference, where elements of the image are automatically sorted based on similarities and dissimilarities, generating target identification and tracking as the output. (Credit: DARPA)

DARPA is looking for a new, ultra-low-power processing method that may enable faster, mission-critical analysis of intelligence, surveillance and reconnaissance (ISR) data.

Today’s Defense missions rely on a massive amount of sensor data collected by ISR platforms, says the agency. “Not only has the volume of sensor data increased exponentially, there has also been a dramatic increase in the complexity of analysis required for applications such as target identification and tracking.”

The problem: the digital processors used for ISR data analysis are constrained by their power requirements, which may limit the speed and the types of analysis that can be performed.

Unconventional Processing of Signals for Intelligent Data Exploitation (UPSIDE)

The Unconventional Processing of Signals for Intelligent Data Exploitation (UPSIDE) program seeks to break with the status quo of digital processing by developing methods of video and imagery analysis based on the physics of nanoscale devices.

UPSIDE processing will be non-digital, fundamentally different from current digital processors, and intended to avoid the power and speed limitations associated with them.

Instead of traditional complementary metal oxide semiconductor (CMOS) electronics, UPSIDE envisions arrays of physics-based devices (nanoscale oscillators may be one example) performing the processing. These arrays would self-organize and adapt to their inputs, meaning that they would not need to be programmed the way digital processors are.
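To make "self-organize and adapt to inputs" concrete, here is a minimal software analogy using competitive (winner-take-all) learning, a standard self-organization technique. It is not the UPSIDE design: the unit count, learning rate, and sample data are hypothetical. Each unit holds a weight vector; for every input, only the closest unit moves toward it, so the units end up sorting inputs by similarity without any explicit rules being programmed.

```python
def sq_dist(a, b):
    # Squared Euclidean distance between two feature vectors.
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def init_units(samples, n_units):
    # Farthest-point initialization: start from the first sample, then
    # repeatedly add the sample farthest from every unit chosen so far.
    units = [list(samples[0])]
    while len(units) < n_units:
        far = max(samples, key=lambda s: min(sq_dist(s, u) for u in units))
        units.append(list(far))
    return units


def self_organize(samples, n_units=2, lr=0.1, epochs=20):
    # Hypothetical parameters: n_units, lr (learning rate), epochs.
    units = init_units(samples, n_units)
    for _ in range(epochs):
        for x in samples:
            # The winner is the unit whose weights best match the input.
            winner = min(range(n_units), key=lambda i: sq_dist(units[i], x))
            # Only the winner adapts, moving a fraction lr toward the input.
            units[winner] = [u + lr * (xi - u) for u, xi in zip(units[winner], x)]
    return units


def assign(units, x):
    # Index of the unit that responds most strongly (is closest) to x.
    return min(range(len(units)), key=lambda i: sq_dist(units[i], x))


# Hypothetical 2-D features drawn from two well-separated groups.
samples = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.2),
           (5.0, 5.1), (5.2, 4.9), (4.9, 5.0)]
units = self_organize(samples)
print(assign(units, (0.1, 0.1)), assign(units, (5.0, 5.0)))
```

The units are never told which group a point belongs to; the grouping emerges from repeated adaptation to the data, loosely mirroring the similarity-based sorting described in the figure caption.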

Probabilistic inference model

Unlike traditional digital processors, which compute by executing specific instructions, the UPSIDE arrays are envisioned to rely on a higher-level computational element based on probabilistic inference, embedded within a digital system.

Probabilistic inference is the fundamental computational model for the UPSIDE program. An inference process uses energy minimization to determine a probability distribution over possible interpretations of the sensor data and thereby find the object that is the most likely interpretation. It can be implemented directly, with approximate precision, in traditional semiconductors as well as in new kinds of emerging devices.
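One common way to link energy minimization to a probability distribution is the Boltzmann (Gibbs) form p(x) = exp(-E(x)/T) / Z, where E(x) is the energy of interpretation x, T > 0 is a temperature, and Z normalizes the probabilities. The lowest-energy interpretation is then the most probable one. The sketch below assumes this form; the object templates, the squared-mismatch energy function, and the temperature are all hypothetical choices for illustration and are not taken from the UPSIDE solicitation.

```python
import math

# Hypothetical object templates: each candidate interpretation is a feature vector.
TEMPLATES = {
    "vehicle": [1.0, 0.2, 0.0],
    "person": [0.1, 0.9, 0.3],
    "background": [0.0, 0.0, 0.0],
}


def energy(observation, template):
    # Hypothetical energy: squared mismatch between the data and a template.
    return sum((o - t) ** 2 for o, t in zip(observation, template))


def posterior(observation, temperature=1.0):
    # Boltzmann distribution p(x) = exp(-E(x)/T) / Z over the candidates.
    energies = {name: energy(observation, t) for name, t in TEMPLATES.items()}
    e_min = min(energies.values())  # subtracted for numerical stability; cancels in Z
    weights = {name: math.exp(-(e - e_min) / temperature)
               for name, e in energies.items()}
    z = sum(weights.values())  # partition function (normalizer)
    return {name: w / z for name, w in weights.items()}


def most_likely(observation, temperature=1.0):
    # The maximum-probability interpretation is the minimum-energy one.
    probs = posterior(observation, temperature)
    return max(probs, key=probs.get), probs


label, probs = most_likely([0.9, 0.25, 0.05])
print(label, {k: round(v, 3) for k, v in probs.items()})
```

Because exp(-E/T) decreases monotonically in E, the winning label does not depend on T; the temperature only controls how sharply probability concentrates on it. That tolerance of imprecise intermediate values is one reason inference of this kind is a plausible fit for approximate-precision hardware.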

“Redefining the fundamental computation as inference could unlock processing speeds and power efficiency for visual data sets that are not currently possible,” explained Dan Hammerstrom, DARPA program manager. “DARPA hopes that this type of technology will not only yield faster video and image analysis, but also lend itself to being scaled for increasingly smaller platforms.”

UPSIDE solicitation

An interdisciplinary approach is expected, since interested performer teams must address all three tasks set forth in the UPSIDE solicitation. Task 1 forms the foundation for the program and involves developing the computational model and the image processing application that will be used for demonstration and benchmarking. Tasks 2 and 3 will build on the results of Task 1 to demonstrate the inference module implemented in mixed-signal CMOS (Task 2) and with non-CMOS emerging nanoscale devices (Task 3). Successfully addressing all three tasks will require close collaboration within the proposer’s team, which will be an important aspect of any successful UPSIDE effort.

“Leveraging the physics of devices to perform computations is not a new idea, but it is one that has never been fully realized,” added Hammerstrom. “However, digital processors can no longer keep up with the requirements of the Defense mission. We are reaching a critical mass in terms of our understanding of the required algorithms, of probabilistic inference and its role in sensor data processing, and the sophistication of new kinds of emerging devices. At DARPA, we believe that the time has come to fund the development of systems based on these ideas and take computational capabilities to the next level.”