Developing robots that collaborate with people

October 29, 2013

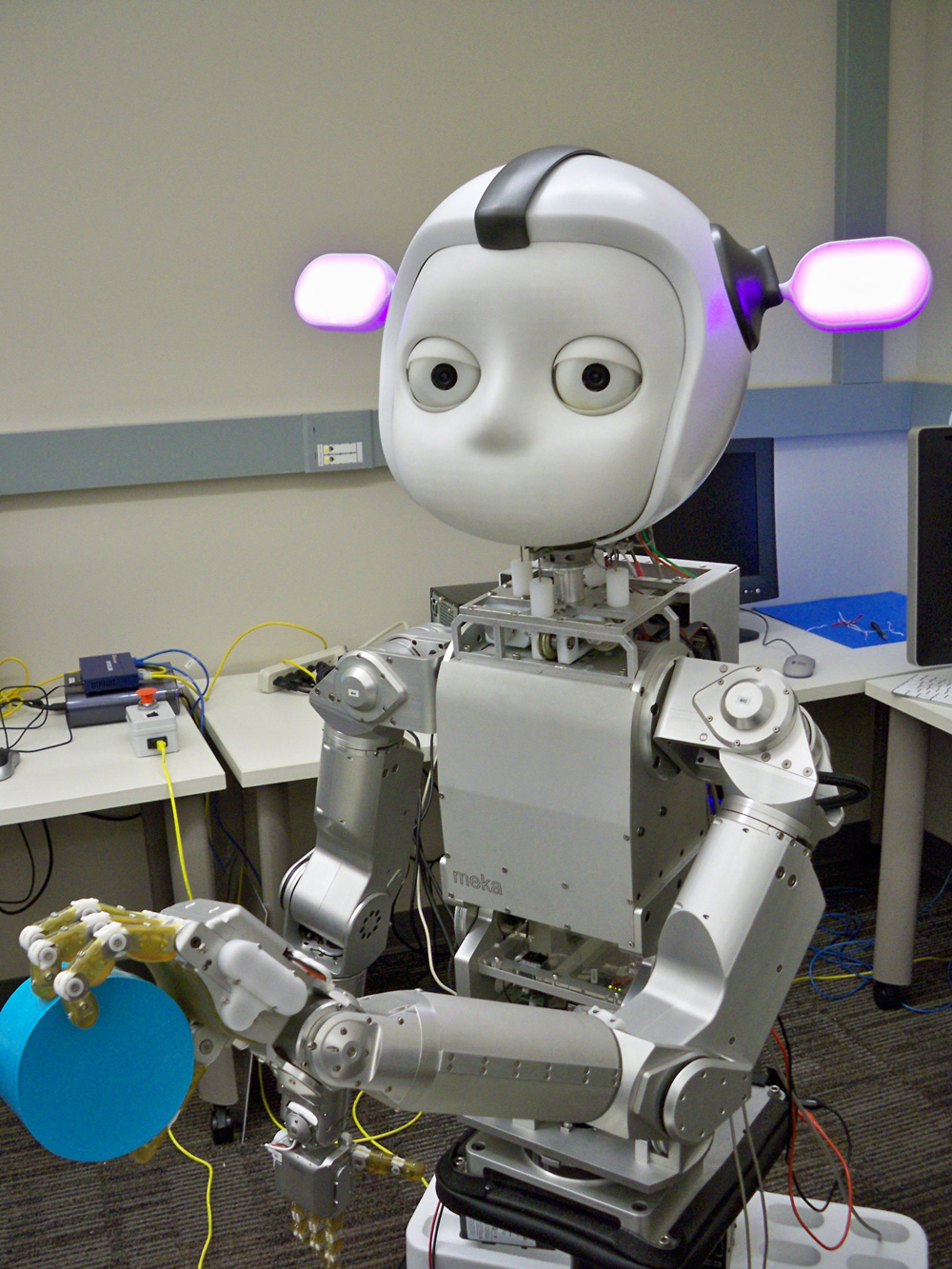

Simon the robot was developed by Georgia Tech researcher Andrea Thomaz, whose research is funded by NSF. Using Simon as her student, Thomaz is redefining how robots and humans interact. She sees a future where any “naive user” (or nonprogrammer) could buy a robot, take it home and instruct it to do almost anything. But for this to work, robots need to think like naive users. So Thomaz invites folks from off the street to teach Simon at her Georgia Tech lab. Lessons include everything from clearing the dinner table to sorting objects by color. Based on the results, Thomaz tweaks Simon’s algorithms to make him a more efficient communicator and learner. (Credit: Georgia Tech)

The National Science Foundation (NSF), in partnership with NIH, USDA and NASA, has announced about $38 million in investment for developing robots that cooperatively work with people to enhance individual human capabilities, performance and safety.

Co-robots

The 30 newly funded projects target the creation of next-generation collaborative robots (co-robots) for advanced manufacturing; civil and environmental infrastructure; health care and rehabilitation; military and homeland security; space and undersea exploration; food production, processing and distribution; independence and quality-of-life improvement; and driver safety.

This year’s projects include research to improve robotic motion (advancing bipedal movement, dexterity and manipulation of robots and prostheses) and robotic sensing (advancing theories, models and algorithms to share and analyze data so that robots can perform collective behaviors with humans and with other robots).

Small, autonomous rotorcraft such as this would be used to inspect bridges and other critical infrastructure in a research project led by roboticists at Carnegie Mellon University (credit: Luke Yoder, The Robotics Institute, Carnegie Mellon University)

The projects also aim to enhance 3-D printing, develop co-robot mediators, improve the training of robots, advance the capabilities of surgical robotics, provide assistive robots for people with disabilities, and improve the capability of robots for lifting and transporting heavy objects and for dangerous and complex tasks like search and rescue during disaster response.

These awards mark the second round of funding made through the National Robotics Initiative (NRI), launched with NSF as the lead federal agency just over two years ago as part of President Obama’s Advanced Manufacturing Partnership Initiative. A full listing of the NRI investments made by NSF is available on NSF’s NRI Program Page.

NIH announced investments in three projects totaling approximately $2.4 million over the next five years (see Robots designed to assist people with disabilities, aid doctors).

USDA announced five grants totaling $4.5 million to spur the development and use of robots in American agricultural production. These awards include research projects to develop robotics for fruit harvesting, early disease and stress detection in fruits and vegetables, and water sampling in remote areas.

NASA has awarded grants to eight American universities to advance the state of the art in America’s robotics capabilities. Research topics range from avatar robots for co-exploration of hazardous environments to active skin for simplified tactile feedback in robotics. These projects will help enable NASA’s future missions while benefiting future co-robotic technology applications here on Earth. A new solicitation for proposals has recently been announced.

Examples of three projects

Matthias Scheutz, Linda Tickle-Degnen, Tufts University; Ronald Arkin, Georgia Institute of Technology

This research will assist people with Parkinson’s Disease (PD). People with PD often experience facial masking, a reduced ability to signal emotion, pain, personality and intentions to caregivers and health care providers. Those observers often misinterpret the lack of emotional expression as disinterest and an inability to adhere to a treatment regimen, which results in stigmatization.

This project will develop a robotic architecture endowed with moral emotional control mechanisms, abstract moral reasoning and a theory of mind to allow co-robots (such as Simon) to be sensitive to human affective and ethical demands. The long-term goal of this work is to develop co-robot mediators for people with facial masking due to PD.

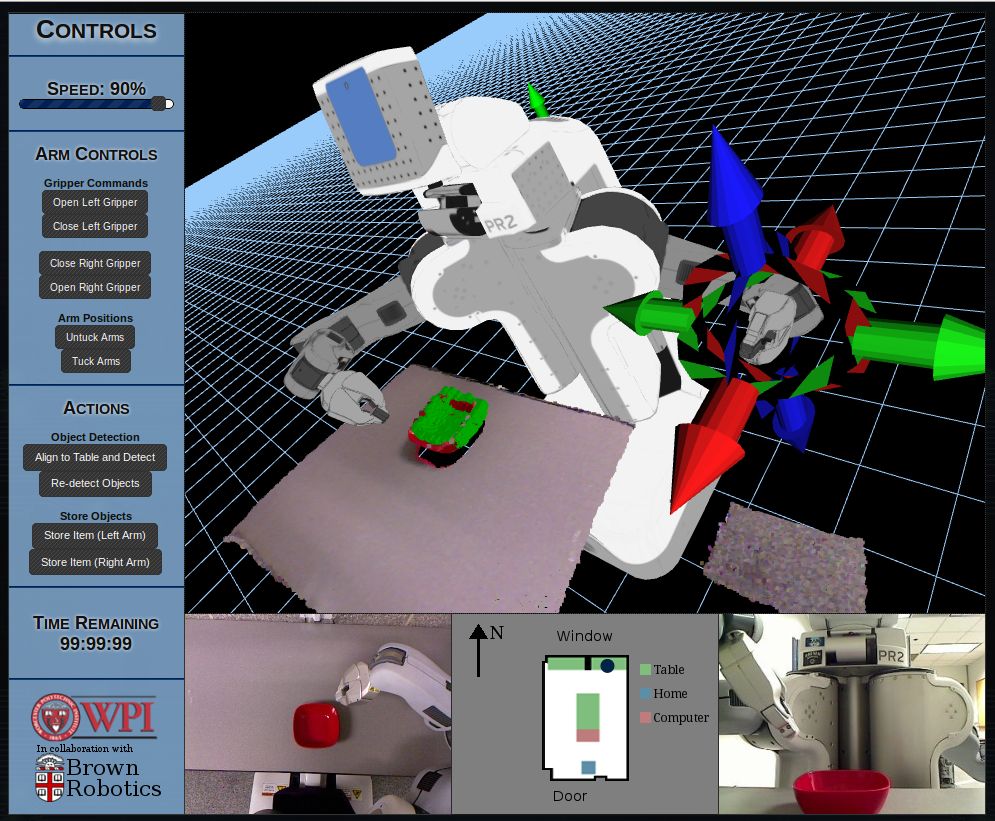

Example of the web browser interface for RobotsFor.Me (credit: Sonia Chernova, Worcester Polytechnic Institute)

Learning from demonstration for cloud robotics

Sonia Chernova, Worcester Polytechnic Institute; Andrea Thomaz, Georgia Institute of Technology

This work seeks to leverage cloud computing to enable robots to efficiently learn from remote human domain experts.

This project builds on RobotsFor.Me, a remote robotics research lab, and will unite learning from demonstration and cloud robotics to enable anyone with Internet access to teach a robot household tasks.

Collaborative planning for human-robot science teams

Gaurav Sukhatme, University of Southern California

This research combines scientists’ specialized knowledge and experience with the efficiency of autonomous systems to develop a collaborative system capable of guiding scientific exploration and data collection by integrating input from scientists into an autonomous learning and planning framework.

The project team is validating the approach in the challenging domain of autonomous underwater ocean monitoring, which is particularly well suited to testing human-robot collaboration because of the limited communication available underwater and the supervised capabilities it requires.