Originally published on MolecularAssembler.com January 12, 2004. Published on KurzweilAI.net January 27, 2006.

This is the complete original document describing the “Freitas process” to the level of detail that was known on 12 January 2004, following its initial conception on 1 November 2003. The actual Provisional Patent Application, prepared subsequently with the assistance of legal counsel, was abstracted from (and thus differs in some particulars from) this complete original document. A full utility patent on this process (containing numerous claims and some additional material, running a total of 133 pages in length) was subsequently filed on 11 February 2005. This patent is now pending before the USPTO. It is the first known patent ever filed on positional mechanosynthesis, and the first known patent ever filed on positional diamond mechanosynthesis.

Note: Philip Moriarty at the University of Nottingham (U.K.) has posted online several technical objections to one of the two proposed toolbuilding pathways, which Freitas says he is currently working through, point by point, with Moriarty via private correspondence in the manner of a friendly collaboration.

Abstract. A method is described for building a mechanosynthesis tool intended to be used for the molecularly precise fabrication of physical structures, such as diamond structures. The exemplar tool consists of a bulk-synthesized dimer-capped triadamantane tooltip molecule which is initially attached to a deposition surface in tip-down orientation, whereupon CVD or equivalent bulk diamond deposition processes are used to grow a large crystalline handle structure around the tooltip molecule. The large handle with its attached tooltip can then be mechanically separated from the deposition surface, yielding an integral finished tool that can subsequently be used to perform diamond mechanosynthesis in vacuo. The present disclosure is the first description of a complete tool for positional diamond mechanosynthesis, along with its method of manufacture. The same toolbuilding process may be extended to other classes of tooltip molecules, other handle materials, and to mechanosynthetic processes and structures other than those involving diamond.

OUTLINE

Abstract

1. Background of the Invention

1.1 Conventional Diamond Manufacturing

1.2 Diamond Manufacturing via Positional Diamond Mechanosynthesis

2. Description of the Invention

2.1 STEP 1: Synthesis of Capped Tooltip Molecule

2.2 STEP 2: Attach Tooltip Molecule to Deposition Surface in Preferred Orientation

2.2.1 Surface Nucleation and Choice of Deposition Substrate

2.2.2 Tooltip Attachment Method A: Ion Bombardment in Vacuo

2.2.3 Tooltip Attachment Method B: Surface Decapping in Vacuo

2.2.4 Tooltip Attachment Method C: Solution Chemistry

2.3 STEP 3: Attach Handle Structure to Tooltip Molecule

2.3.1 Handle Attachment Method A: Nanocrystal Growth

2.3.2 Handle Attachment Method B: Direct Handle Bonding

2.4 STEP 4: Separate Finished Tool from Deposition Surface

References

1. Background of the Invention

The properties of diamond, such as its extraordinary hardness, low coefficient of friction, tensile strength and low compressibility, electrical resistivity, electrical carrier (electron and hole) mobility, high energy bandgap and saturation velocity, dielectric breakdown strength, low neutron cross-section (radiation-hardness), thermal conductivity, low thermal expansion, optical transmittance and refractive index, and chemical inertness, allow this material to serve a vital role in a wide variety of industrial and technical applications.

The present invention relates generally to methods for the manufacture of synthetic diamond. More particularly, the invention is concerned with the physical structure and method of manufacture of a tool, which can itself subsequently be employed in the mechanosynthetic manufacture of other molecularly precise diamond structures. However, the same toolbuilding process is readily extended to other classes of tooltip molecules, handle materials, and mechanosynthetic processes and structures other than diamond.

1.1 Conventional Diamond Manufacturing

All prior art methods of manufacturing diamond are bulk processes in which the diamond crystal structure is manufactured by statistical processes. In such processes, new atoms of carbon arrive at the growing diamond crystal structure having random positions, energies, and timing. Growth extends outward from initial nucleation centers having uncontrolled size, shape, orientation and location. Existing bulk processes can be divided into three principal methods – high pressure, low pressure hydrogenic, and low pressure nonhydrogenic.

(A) In the first or high pressure bulk method of producing diamond artificially, powders of graphite, diamond, or other carbon-containing substances are subjected to high temperature and high pressure to form crystalline diamond. High pressure processes are of several types [1]:

(1) Impact Process. The starting powder is instantaneously brought under high pressure by an impact generated, for example, by the detonation of explosives or by the collision of a body accelerated to high speed. This produces granular diamond by directly converting the starting powder material having a graphite structure into a powder composed of grains having a diamond structure. This process has the advantage that no press is required, unlike the two other processes, but it is difficult to control the size of the resulting diamond products. Nongraphite organic compounds can also be shock-compressed to produce diamond [2].

(2) Direct Conversion Process. The starting powder is held under a high static pressure of 13-16 GPa and a high temperature of 3,000-4,000 °C in a sealed high pressure vessel. This establishes stability conditions for diamond, so the powder material undergoes direct phase transition from graphite into diamond, through graphite decomposition and structural reorganization into diamond. In both direct conversion and flux processes, a press is widely used and enables single crystal diamonds to be grown as large as several millimeters in size.

(3) Flux Process. As in direct conversion, a static pressure and high temperature are applied to the starting material, but here fluxes such as Ni and Fe are added to allow the reaction to occur under lower pressure and temperature conditions, accelerating the atomic rearrangement which occurs during the conversion process. For example, high-purity graphite powder is heated to 1500-2000 °C under 4-6 GPa of pressure in the presence of iron catalyst, and under this extreme, but equilibrium, condition of pressure and temperature, graphite is converted to diamond: the flux becomes a saturated solution of solvated graphite, and because the pressure inside the high pressure vessel is maintained in the stability range for diamond, the solubility for graphite far exceeds that for diamond, leading to diamond precipitation and dissolution of graphite into the flux. Every year about 75 tons of diamond are produced industrially this way [14].

(B) In the second or low pressure hydrogenic bulk method of producing diamond artificially, widely known as CVD or Chemical Vapor Deposition, hydrogen (H2) gas mixed with a few percent of methane (CH4) is passed over a hot filament or through a microwave discharge, dissociating the methane molecule to form the methyl radical (CH3) and dissociating the hydrogen molecule into atomic hydrogen (H). Acetylene (C2H2) can also be used in a similar manner as a carbon source in CVD. Diamond or diamond-like carbon films can be grown by CVD epitaxially on diamond nuclei, but such films invariably contain small contaminating amounts (0.1-1%) of hydrogen which gives rise to a variety of structural, electronic and chemical defects relative to pure bulk diamond. Currently, diamond synthesis from CVD is routinely achieved by more than 10 different methods [163].

As noted by McCune and Baird [3], a diamond particle is a special cubic lattice grown from a single nucleus of four-coordinated carbon atoms. The diamond-cubic lattice consists of two interpenetrating face-centered cubic lattices, displaced by one quarter of the cube diagonal. Each carbon atom is tetrahedrally coordinated, making strong, directed sp3 bonds to its neighbors using hybrid atomic orbitals. The lattice can also be visualized as planes of six-membered saturated carbon rings stacked in an ABC ABC ABC sequence along <111> directions. Each ring is in the “chair” conformation and all carbon-carbon bonds are staggered. A lattice with hexagonal symmetry, lonsdaleite, can be constructed with the same tetrahedral nearest neighbor configuration. In lonsdaleite, however, the planes of chairs are stacked in an AB AB AB sequence, and the carbon-carbon bonds normal to these planes are eclipsed. In simple organic molecules, the eclipsed conformation is usually less stable than the staggered because steric interactions are greater. Thermodynamically, diamond is slightly unstable with respect to crystalline graphite. At 298 K and 1 atm the free energy difference is 0.026 eV per atom, only slightly greater than kBT, where kB is the Boltzmann constant and T is the absolute temperature in kelvins.
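The lattice description and the energy comparison above can both be checked numerically. The sketch below builds a small block of the diamond-cubic lattice as two FCC lattices offset by a quarter of the cube diagonal, then counts the nearest neighbours of one atom. It assumes the commonly cited lattice constant a = 3.567 angstroms, a value not given in this document. Each atom should have four neighbours at a·sqrt(3)/4, about 1.545 angstroms. The sketch also evaluates kBT at 298 K (about 0.0257 eV), which shows that the quoted 0.026 eV per atom is indeed comparable to kBT.

```python
import itertools
import math

A_DIAMOND = 3.567        # assumed cubic lattice constant of diamond, angstroms
K_B_EV = 8.617333262e-5  # Boltzmann constant, eV per kelvin


def diamond_cubic_sites(a, n=2):
    """Sites of an n x n x n block of the diamond-cubic lattice, built as two
    interpenetrating FCC lattices offset by one quarter of the cube diagonal."""
    fcc_basis = [(0.0, 0.0, 0.0), (0.0, 0.5, 0.5), (0.5, 0.0, 0.5), (0.5, 0.5, 0.0)]
    offsets = [(0.0, 0.0, 0.0), (0.25, 0.25, 0.25)]
    sites = []
    for i, j, k in itertools.product(range(n), repeat=3):
        for bx, by, bz in fcc_basis:
            for ox, oy, oz in offsets:
                sites.append((a * (i + bx + ox), a * (j + by + oy), a * (k + bz + oz)))
    return sites


def nearest_neighbours(sites, centre, tol=1e-6):
    """Return the nearest-neighbour distance of `centre` and the number of
    sites found at that distance."""
    dists = sorted(math.dist(centre, s) for s in sites if s != centre)
    return dists[0], sum(1 for d in dists if abs(d - dists[0]) < tol)


def thermal_energy_ev(temperature_k):
    """kB*T in electron-volts."""
    return K_B_EV * temperature_k


sites = diamond_cubic_sites(A_DIAMOND)
# The atom at (a/4, a/4, a/4) sits on the offset FCC lattice, fully inside the block.
d_nn, n_nn = nearest_neighbours(sites, (0.25 * A_DIAMOND,) * 3)
print(f"{n_nn} neighbours at {d_nn:.3f} angstroms")  # 4 neighbours at 1.545 angstroms
print(f"kB*T at 298 K = {thermal_energy_ev(298):.4f} eV")  # kB*T at 298 K = 0.0257 eV
```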

The basic obstacle to crystallization of diamond at low pressures is the difficulty in avoiding co-deposition of graphite and/or amorphous carbon when operating in the thermodynamically stable region of graphite [3]. In general, the possibility of forming different bonding networks of carbon atoms is understandable from their ability to form different electronic configurations of the valence electrons. These bond types are classified as sp3 (tetrahedral), sp2 (planar), and sp1 (linear), and are related to the various carbon allotropes including cubic diamond and hexagonal diamond or lonsdaleite (sp3), graphite (sp2), and carbynes (sp1), respectively.

Hydrogen is generally regarded as an essential part of the reaction steps in forming diamond film during CVD, and atomic hydrogen must be present during low pressure diamond growth to: (1) stabilize the diamond surface, (2) reduce the size of the critical nucleus, (3) “dissolve” the carbon in the feedstock gas, (4) produce a carbon solubility minimum, (5) generate condensable carbon radicals in the feedstock gas, (6) abstract hydrogen from hydrocarbons attached to the surface, (7) produce vacant surface sites, (8) etch (regasify) graphite, hence suppressing unwanted graphite formation, and (9) terminate carbon dangling bonds [4, 6]. Both diamond and graphite are etched by atomic hydrogen, but for diamond, the deposition rate exceeds the etch rate during CVD, leading to diamond (tetrahedral sp3 bonding) growth and the suppression of graphite (planar sp2 bonding) formation. (Note that most potential atomic hydrogen substitutes such as atomic halogens etch graphite at much higher rates than atomic hydrogen [4].)

Low pressure or CVD hydrogenic metastable diamond growth processes are of several types [3–5]:

(1) Hot Filament Chemical Vapor Deposition (HFCVD). Filament deposition involves the use of a dilute (0.1-2.5%) mixture of hydrocarbon gas (typically methane) and hydrogen gas (H2) at 50-1000 torr which is introduced via a quartz tube located just above a hot tungsten filament or foil which is electrically heated to a temperature ranging from 1750-2800 °C. The gas mixture dissociates at the filament surface, yielding dissociation products consisting mainly of radicals including CH3, CH2, C2H, and CH, acetylene, and atomic hydrogen, as well as unreacted CH4 and H2. A heated deposition substrate placed just below the hot tungsten filament is held in a resistance heated boat (often molybdenum) and maintained at a temperature of 500-1100 °C, whereupon diamonds are condensed onto the heated substrate. Filaments of W, Ta, and Mo have been used to produce diamond. The filament is typically placed within 1 cm of the substrate surface to minimize thermalization and radical recombination, but radiation heating can produce excessive substrate temperatures leading to nonuniformity and even graphitic deposits. Withdrawing the filament slightly and biasing it negatively to pass an electron current to the substrate assists in preventing excessive radiation heating.
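The HFCVD operating windows listed above can be expressed as a simple recipe check that flags any set point outside the reported ranges. This is a minimal sketch. `HfcvdRecipe` and `out_of_window` are hypothetical names, and the sketch treats the 1 cm filament gap as a hard limit even though the text describes it only as typical.

```python
from dataclasses import dataclass

# Operating windows reported above for HFCVD, as (low, high).
HFCVD_WINDOWS = {
    "hydrocarbon_percent": (0.1, 2.5),
    "pressure_torr": (50.0, 1000.0),
    "filament_c": (1750.0, 2800.0),
    "substrate_c": (500.0, 1100.0),
}
MAX_FILAMENT_GAP_CM = 1.0  # "typically" within 1 cm; treated here as a hard limit


@dataclass
class HfcvdRecipe:
    """Hypothetical container for one HFCVD run's set points."""
    hydrocarbon_percent: float
    pressure_torr: float
    filament_c: float
    substrate_c: float
    filament_gap_cm: float


def out_of_window(recipe):
    """List every set point that falls outside the reported windows."""
    problems = []
    for name, (low, high) in HFCVD_WINDOWS.items():
        value = getattr(recipe, name)
        if not low <= value <= high:
            problems.append(f"{name}={value} outside [{low}, {high}]")
    if recipe.filament_gap_cm > MAX_FILAMENT_GAP_CM:
        problems.append(f"filament_gap_cm={recipe.filament_gap_cm} > {MAX_FILAMENT_GAP_CM}")
    return problems


print(out_of_window(HfcvdRecipe(1.0, 100.0, 2200.0, 850.0, 0.8)))  # []
# Flags both the 5% hydrocarbon fraction and the 1200 degree substrate:
print(out_of_window(HfcvdRecipe(5.0, 100.0, 2200.0, 1200.0, 0.8)))
```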

(2) High Frequency Plasma-Assisted Chemical Vapor Deposition (PACVD). Plasma deposition involves the addition of a plasma discharge to the foregoing filament process. The plasma discharge increases the nucleation density and growth rate, and is believed to enhance diamond film formation as opposed to discrete diamond particles. There are three basic plasma systems in common use: a microwave plasma system, a radio frequency or RF (inductively or capacitively coupled) plasma system, and a direct current or DC plasma system. The RF and microwave plasma systems use relatively complex and expensive equipment which usually requires complex tuning or matching networks to electrically couple electrical energy to the generated plasma. The diamond growth rate offered by these two systems can be quite modest, on the order of ~1 micron/hour. Diamonds can also be grown in microwave discharges in a magnetic field, under conditions where electron cyclotron resonance is considerably modified by collisions. These “magneto-microwave” plasmas can have significantly higher densities and electron energies than isotropic plasmas and can be used to deposit diamond over large areas.

(3) Oxyacetylene Flame-Assisted Chemical Vapor Deposition. In flame deposition, diamond is deposited directly from a hydrocarbon-rich oxyacetylene flame. In this technique, conducted at atmospheric pressure, a specific part of the flame (in which both atomic hydrogen (H) and carbon dimers (C2) are present [19]) is played on a substrate on which diamond grows at rates exceeding 100 microns/hour [7].

(C) In the third or low pressure nonhydrogenic bulk method of producing diamond artificially [8–17], a nonhydrogenic fullerene (e.g., C60) vapor suspended in a noble gas stream or a vapor of mixed fullerenes (e.g., C60, C70) is passed into a microwave chamber, forming a plasma in the chamber and breaking down the fullerenes into smaller fragments including isolated carbon dimer radicals (C2) [6]. (Often a small amount of H2, e.g., ~1%, is added to the feedstock gas.) These fragments deposit onto a single-crystal silicon wafer substrate, forming a layer of good-quality smooth nanocrystalline diamond (15 nm average grain size, range 10-30 nm crystallites [8–10]) or ultrananocrystalline diamond (UNCD) films with intergranular boundaries free from graphitic contamination [9], even when examined by high resolution TEM [16] at atomic resolution [10]. Fullerenes are allotropes of carbon, containing no hydrogen, so diamonds produced from fullerene precursors are hydrogen-defect free [11]. Indeed, the Ar/C60 film is close in both smoothness and hardness to a cleaved single crystal diamond sample [10]. The growth rate of diamond film is ~1.2 microns/hour, comparable to the deposition rate observed using 1% methane in hydrogen under similar system deposition conditions [9, 10]. Diamond films can, using this process, be grown at relatively low temperatures (<500 °C) [10] as opposed to conventional diamond growth processes which require substrate temperatures of 800-1000 °C.
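To put the quoted growth rates side by side, the sketch below computes how long each process would take to deposit a 10 micron film. It assumes a constant growth rate and ignores nucleation delay. The 10 micron thickness is an illustrative choice. The flame rate is quoted only as exceeding 100 microns/hour, so the time computed for it is an upper bound.

```python
# Approximate growth rates quoted in Section 1.1, microns per hour.
GROWTH_RATE_UM_PER_H = {
    "RF/microwave PACVD": 1.0,    # "on the order of ~1 micron/hour"
    "Ar/C60 nonhydrogenic": 1.2,  # "~1.2 microns/hour"
    "oxyacetylene flame": 100.0,  # quoted as ">100", so this is a lower bound on rate
}


def hours_to_grow(thickness_um, rate_um_per_h):
    """Deposition time assuming a constant growth rate and no nucleation delay."""
    if rate_um_per_h <= 0:
        raise ValueError("growth rate must be positive")
    return thickness_um / rate_um_per_h


for process, rate in GROWTH_RATE_UM_PER_H.items():
    print(f"{process}: {hours_to_grow(10.0, rate):.2f} h for a 10 micron film")
# RF/microwave PACVD: 10.00 h, Ar/C60 nonhydrogenic: 8.33 h, oxyacetylene flame: 0.10 h
```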

Ab initio calculations indicate that C2 insertion into carbon-hydrogen bonds is energetically favorable with small activation barriers, and that C2 insertion into carbon-carbon bonds is also energetically favorable with low activation barriers [15]. A mechanism for growth on the diamond C(100) (2×1):H reconstructed surface with C2 has been proposed [16]. A C2 molecule impinges on the surface and inserts into a surface carbon-carbon dimer bond, after which the C2 then inserts into an adjacent carbon-carbon bond to form a new surface carbon dimer. By the same process, a second C2 molecule forms a new surface dimer on an adjacent row. Then a third C2 molecule inserts into the trough between the two new surface dimers, so that the three C2 molecules incorporated into the diamond surface form a new surface dimer row running perpendicular to the previous dimer row. This C2 growth mechanism requires no hydrogen abstraction reactions from the surface and in principle should proceed in the absence of gas phase atomic hydrogen.

The UNCD films were grown on silicon (Si) substrates polished with 100 nm diamond grit particles to enhance nucleation [16]. Deposition of UNCD on a sacrificial release layer of SiO2 substrate is very difficult because the nucleation density is 6 orders of magnitude smaller on SiO2 than on Si [18]. However, the carbon dimer growth species in the UNCD process can insert directly into either the Si or SiO2 surface, and the lack of atomic hydrogen in the UNCD fabrication process permits both a higher nucleation density and a higher renucleation rate than the conventional H2/CH4 plasma chemistry [18], so it is therefore possible to grow UNCD directly on SiO2.
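One way to see why a six-orders-of-magnitude drop in nucleation density matters is to estimate the spacing between nuclei. This sketch assumes that the characteristic spacing scales as 1/sqrt(density). The absolute Si density used here, 10^10 nuclei per cm^2, is a hypothetical illustrative value and is not taken from the cited work. Whatever absolute value is chosen, the spacing ratio is sqrt(10^6) = 1000. In this example, that corresponds to a spacing of roughly 100 nm on seeded Si versus roughly 100 microns on SiO2.

```python
import math


def characteristic_spacing_nm(density_per_cm2):
    """Rough inter-nucleus spacing, taken as 1/sqrt(density), in nanometres."""
    return 1e7 / math.sqrt(density_per_cm2)  # 1 cm = 1e7 nm


si_density = 1e10                # hypothetical nuclei per cm^2 on seeded Si
sio2_density = si_density / 1e6  # "6 orders of magnitude smaller" on SiO2
print(characteristic_spacing_nm(si_density))    # 100.0
print(characteristic_spacing_nm(sio2_density))  # 100000.0
```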

Besides fullerenes, it has been proposed that “diamondoids” or polymantanes, small hydrocarbons made of one or more fused cages of adamantane (C10H16, the smallest cage unit of hydrogen-terminated crystalline diamond), could be used as the carbon source in nonhydrogenic diamond CVD [20–22]. Dahl, Carlson and Liu [22] suggest that the injection of diamondoids could facilitate growth of CVD-grown diamond film by allowing carbon atoms to be deposited about 10-100 or more at a time, unlike conventional plasma CVD in which carbons are added to the growing film one atom at a time, possibly increasing diamond growth rates by an order of magnitude or better. However, Plaisted and Sinnott [23] used atomistic simulations to study thin-film growth via the deposition of very hot (119-204 eV/molecule; 13-17 km/sec) beams of adamantane molecules on hydrogen-terminated diamond (111) surfaces, with forces on the atoms in the simulations calculated using a many-body reactive empirical potential for hydrocarbons. During the deposition process the adamantane molecules react with one another and the surface to form hydrocarbon thin films that are primarily polymeric, with the amount of adhesion depending strongly on incident energy. Although the carbon atoms in the adamantane molecules are fully sp3 hybridized, the films contain primarily sp2 hybridized carbon, with the percentage of sp2 hybridization increasing as the incident velocity goes up. However, cooler beams might allow more consistent sp3 diamond deposition, and other techniques [24] have deposited diamond-like carbon (DLC) films with a higher percentage of sp3 hybridization from adamantane.
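The beam energies and speeds quoted for the adamantane simulations are consistent with each other, as the kinetic energy KE = ½mv² shows. The sketch below uses standard atomic weights (C = 12.011, H = 1.008) and SI/CODATA constants, none of which are stated in the original text. With these inputs, one C10H16 molecule at 13 and 17 km/s carries about 119 and 204 eV respectively, matching the quoted range. Each such molecule would deliver 10 carbon atoms in a single impact.

```python
AMU_KG = 1.66053906660e-27  # atomic mass unit, kg
EV_J = 1.602176634e-19      # electron-volt, J
ADAMANTANE_AMU = 10 * 12.011 + 16 * 1.008  # C10H16, about 136.24 u


def kinetic_energy_ev(mass_amu, speed_m_per_s):
    """Translational kinetic energy 1/2 m v^2 of one molecule, in eV."""
    mass_kg = mass_amu * AMU_KG
    return 0.5 * mass_kg * speed_m_per_s ** 2 / EV_J


for v_km_s in (13, 17):
    ke = kinetic_energy_ev(ADAMANTANE_AMU, v_km_s * 1e3)
    print(f"{v_km_s} km/s -> {ke:.0f} eV")
# 13 km/s -> 119 eV
# 17 km/s -> 204 eV
```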

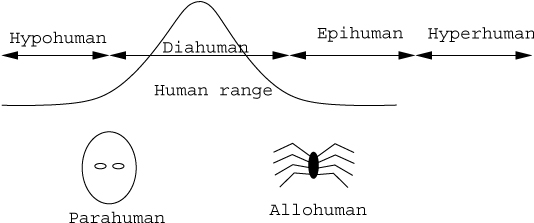

1.2 Diamond Manufacturing via Positional Diamond Mechanosynthesis

A new non-bulk non-statistical method of manufacturing diamond, called positional diamond mechanosynthesis, was proposed theoretically by Drexler in 1992 [32]. In this method, positionally controlled carbon deposition tools are manipulated to sub-Angstrom tolerances via SPM (Scanning Probe Microscopy) or similar atomic-resolution manipulator mechanisms to build diamond in vacuo. Each carbon deposition tool includes a tooltip molecule attached to a larger handle structure which is grasped by the atomic-resolution manipulator mechanism. One or more carbon atoms having one or more dangling bonds are relatively loosely bound to the tip of the tooltip molecule. When the tip is brought into contact with the substrate surface at a specific location and sufficient mechanical forces (compression, torsion, etc.) are applied, a stronger covalent bond is formed between the tip-bound carbon atom(s) and the surface, via the dangling bonds, than previously existed between the tip-bound carbon atom(s) and the tooltip structure. As a result, the tool may subsequently be retracted from the substrate and the tip-bound carbon atom(s) will be left behind on the substrate surface at the specific location and orientation desired. By repeating this process of positionally-constrained chemistry or mechanosynthesis, using a succession of similar tools, a large variety of molecularly precise diamond structures can be fabricated, placing one or a few atoms at a time on the growing workpiece.
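The deposition cycle described above (approach, bond, retract) can be summarized by its transfer criterion. The payload stays on the workpiece only if the new surface bond is stronger than its bond to the tooltip. The sketch below is a deliberately simplified model of that rule. It compares bond strengths only and ignores activation barriers, applied forces, and temperature. `DepositionTool`, `Workpiece`, and `deposit` are hypothetical names, and all bond energies are illustrative placeholders rather than computed values.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DepositionTool:
    """Hypothetical model of a loaded tool: payload atoms held by a tip bond."""
    payload_atoms: int
    tip_bond_ev: float  # hypothetical tip-to-payload bond strength, eV


@dataclass
class Workpiece:
    placed: dict = field(default_factory=dict)  # site -> atoms deposited there


def deposit(workpiece, tool, site, surface_bond_ev):
    """One approach/bond/retract cycle. The payload is left behind only if the
    bond it forms with the surface is stronger than its bond to the tooltip."""
    if surface_bond_ev > tool.tip_bond_ev:
        workpiece.placed[site] = workpiece.placed.get(site, 0) + tool.payload_atoms
        return True
    return False  # payload is retracted with the tool


# A succession of similar freshly loaded dimer tools, one per target site.
workpiece = Workpiece()
plan = [((0, 0, 0), 3.5), ((0, 1, 0), 3.5), ((1, 0, 0), 1.5)]  # hypothetical bond energies
results = [deposit(workpiece, DepositionTool(2, 2.0), site, e) for site, e in plan]
print(results, sum(workpiece.placed.values()))  # [True, True, False] 4
```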

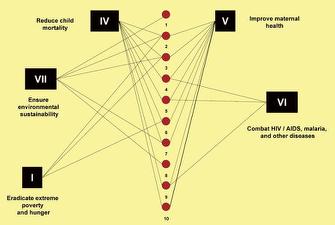

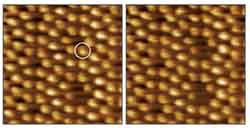

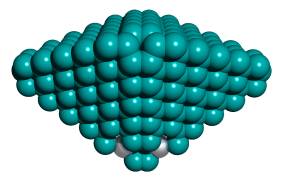

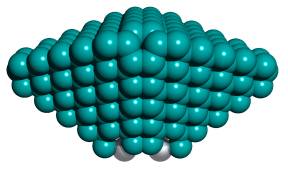

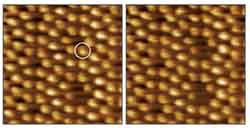

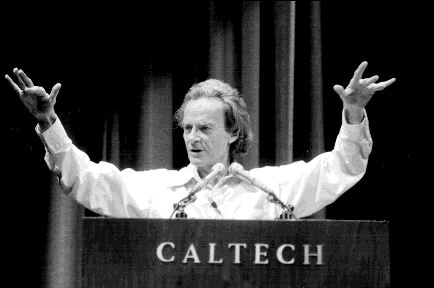

Several analyses using the increasingly accurate methods of computational chemistry have confirmed the theoretical validity of the proposed process of positional diamond mechanosynthesis for the abstraction [25–33] and donation [32, 33] of surface-passivating hydrogen atoms, and for carbon deposition [32–38] on diamond surfaces and in the body of diamond nanostructures. While positional diamond mechanosynthesis has not yet been demonstrated experimentally, early experiments [39] have demonstrated single-molecule positional covalent bond formation on surfaces via SPM, though in these cases bond formation was not purely mechanochemical but included electrochemical or other means. Mechanosynthesis of the Si(111) lattice has been studied theoretically [40, 41], and the first laboratory demonstration of nonelectrical, purely mechanical positional covalent bond formation on a silicon surface using a simple SPM tip was reported in 2003 [42]. In this demonstration, Osaka University researchers lowered a silicon AFM tip toward the Si(111)-(7×7) surface and pushed down on a single atom. The focused pressure forced the atom free of its bonds to neighboring atoms, which allowed it to bind to the AFM tip. When the tip was lifted and the surface imaged, a hole remained where the atom had been (Figure 1). Pressing the tip back into the vacancy redeposited the tip-bound selected single atom, this time using the pressure to break the bond with the tip. These manipulation processes were purely mechanical since neither bias voltage nor voltage pulse was applied between probe and sample [42].

Figure 1. Mechanosynthesis of a single silicon atom on the silicon Si(111)-(7×7) surface

Phys. Rev. Lett. 90, 176102 (2003)

Existing mechanosynthetic tools can be used only at ultralow temperatures near absolute zero, hold the atom or molecule to be deposited only very weakly, and can be employed only very slowly (minutes or hours per mechanosynthetic operation). These tools include the simple diamond stylus [43] and other crude tools such as nanocrystalline diamond grown (a) on standard silicon [44, 48] AFM tips with a 30 nm radius [48], (b) on silicon cantilever tips [46, 47], (c) on tungsten STM tips [45], or (d) on 12 nm radius doped-diamond STM tips [49], using CVD [44–49] including HFCVD [44, 46] or PACVD [45] diamond deposition processes. There is a need for improved mechanosynthetic tools that have a molecularly precise tip radius of <0.3 nm, can operate at liquid nitrogen or even room temperatures, can perform mechanosynthetic operations with cycle times of seconds or less, and can conveniently be manipulated to sub-Angstrom positional accuracy using conventional SPM instruments.
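The importance of cycle time becomes clear from a simple estimate of serial build time. The sketch below assumes a hypothetical structure of one million carbon atoms, built two atoms (one dimer) per operation by a single tool, with no downtime and no failed operations. On these assumptions, the build takes about 20,833 days at one hour per operation, about 347 days at one minute, and under 6 days at one second.

```python
SECONDS_PER_DAY = 86_400


def days_to_build(n_atoms, atoms_per_op, seconds_per_op):
    """Serial build time with one tool, no downtime, and no failed operations."""
    operations = -(-n_atoms // atoms_per_op)  # ceiling division
    return operations * seconds_per_op / SECONDS_PER_DAY


N_ATOMS = 1_000_000  # hypothetical structure size (carbon atoms)
for label, cycle_s in (("1 hour/op", 3600), ("1 minute/op", 60), ("1 second/op", 1)):
    print(f"{label}: {days_to_build(N_ATOMS, 2, cycle_s):,.1f} days")
# 1 hour/op: 20,833.3 days
# 1 minute/op: 347.2 days
# 1 second/op: 5.8 days
```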

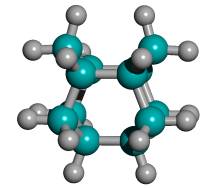

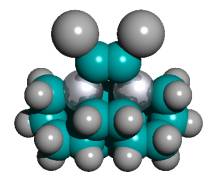

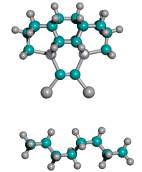

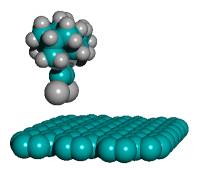

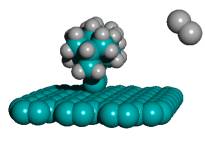

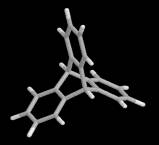

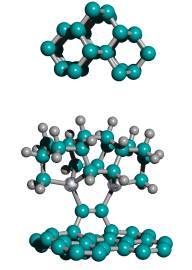

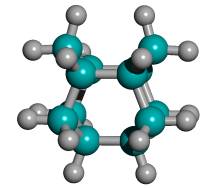

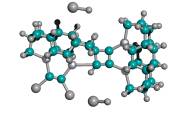

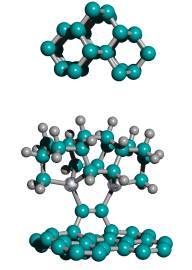

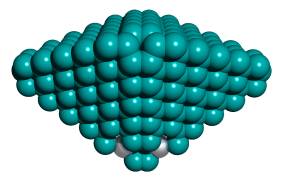

In 2002, Merkle and Freitas [36] proposed the first design for a class of precision tooltip molecules intended to positionally deposit individual carbon dimers on a growing diamond substrate via diamond mechanosynthesis (Figure 2), and subsequent theoretical analysis [37, 38, 235] has verified that this class of tooltip molecules should be useful for depositing carbon dimers on a dehydrogenated diamond C(110) crystal surface, for the purpose of building additional C(110) surface or other molecularly precise structures at liquid nitrogen or room temperatures.

Figure 2. DCB6-Si dimer placement tooltip molecule [36]

(A) Wire frame view of tooltip molecule

(B) Overlapping spheres view of (A)

(C) Iceane

No specific proposals for attaching tooltip molecules such as the one illustrated in Figure 2 A/B to larger tool handles, or complete tools for positional diamond mechanosynthesis, have previously been reported in the scientific, engineering or patent literature. While others have previously noted the need for a handle structure to manipulate the active mechanosynthetic tooltip [32, 33, 36, 38], this invention is the first practical description of how to manufacture and to attach tooltips to such a handle structure, and thus to manufacture a complete mechanosynthetic tool.

The present invention is not limited to a method for the manufacture of a complete tool which can be used for diamond mechanosynthesis. The same toolbuilding process is readily extended to other classes of tooltip molecules, handle materials, and mechanosynthetic processes and structures other than diamond. As examples, which in no way limit or exhaust the possible applications of this invention, the same method as described herein can be used to build complete mechanosynthetic tools and attach handles to: (1) other possible C2 dimer deposition tooltips proposed by Drexler [32] and Merkle [33, 34] for the building of molecularly precise diamond structures; (2) other possible carbon deposition tooltips, including but not limited to carbene tooltips as proposed by Drexler [32] and Merkle [33, 34] and monoradical methylene tooltips as proposed by Freitas [234], for the deposition of carbon or hydrocarbon moieties during the building of molecularly precise diamond structures, or other tooltips that may be used for the removal of individual carbon atoms, C2 dimers [38], or other hydrocarbon moieties from a growing diamond surface; (3) tooltips for the abstraction [25–33] and donation [32, 33] of hydrogen atoms, for the purpose of positional surface passivation or depassivation during the building of molecularly precise diamond structures, or during the building of molecularly precise structures other than diamond, or of other atoms similarly employed for passivation purposes; or (4) tooltips for the deposition or abstraction of atoms, dimers, or other moieties, to or from materials including, but not limited to, covalent solids other than diamond, silicon, germanium or other semiconductors, intermetallics, ceramics, and metals.

2. Description of the Invention

The present invention is concerned with the physical structure and method of manufacture of a complete tool for positional diamond mechanosynthesis, which can subsequently be employed in the mechanosynthetic manufacture of other molecularly precise diamond structures, including other tools for positional diamond mechanosynthesis.

The present invention is the first description of a complete tool for positional diamond mechanosynthesis, along with its method of manufacture. The subject mechanosynthetic tool is constructed using only bulk chemical and mechanical processes, and yet, once fabricated, is capable of molecularly precise carbon dimer deposition to produce molecularly precise diamond structures. The present invention provides a tool by which the trajectory and timing of each new carbon atom added to a growing diamond nanostructure can be precisely controlled, thus allowing the manufacture of molecularly precise three-dimensional diamond structures of specified size, shape, orientation, location, and chemical composition, a significant improvement over all known bulk methods for fabricating synthetic diamond and a significant improvement over all existing mechanosynthetic SPM tips or styluses.

The positional diamond mechanosynthesis tool described herein enables the convenient manufacture of large numbers and varieties of diamond mechanosynthesis tools of similar or improved types, and also enables the convenient manufacture of a wide variety of molecularly precise nanoscale, microscale, and other diamond structures that cannot be fabricated by any known bulk process, including, but not limited to, molecularly-sharp scanning probe tips, shaped nanopores and custom binding sites, complex nanosensors, interleaved nanomechanical structures, compact mechanical nanocomputer components, nanoelectronic and quantum computational devices, aperiodically nanostructured optical materials, and many other complex nanodevices, nanomachines, and nanorobots. The tool can also be used in the fabrication of additional tools for the positional mechanosynthetic manufacture of molecularly precise structures made of materials other than diamond, employing either carbon (e.g., nanotubes and other graphene sheet structures) or carbon together with elements other than carbon, such as nanostructured nondiamond hydrocarbons, nanostructured fluorocarbons, nanostructured sapphire/alumina, and even DNA and other organic polymeric materials.

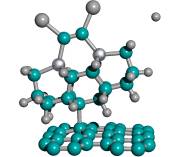

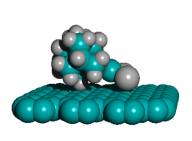

The positional diamond mechanosynthesis tool consists of two distinct parts which are covalently joined.

The first part of the positional diamond mechanosynthesis tool is the tooltip molecule (Figure 2). In the preferred embodiment the tooltip molecule consists of one or more adamantane molecules arranged in a polymantane or lonsdaleite (iceane; Figure 2C) configuration making a triadamantane base molecule. One or more dimerholder atoms (most preferably the Group IV elements Si, Ge, Sn, and Pb with three bonds into the base, but Group V elements N, P, As, Sb and Bi and Group III elements B, Al, Ga, In, and Tl with two bonds into the base may also be used [36]) are substituted into each of the adamantane molecules composing the triadamantane base molecule. A single carbon dimer (C2) molecule is bonded to two dimerholder atoms integral to the triadamantane base molecule; the carbon dimer is held by the tooltip but is later mechanically released during a mechanosynthetic dimer placement operation. Finally, a capping group is temporarily bonded to the two dangling bonds of the carbon dimer, passivating the dangling bonds and chemically stabilizing the tooltip molecule for a solution-phase environment. The capping group must be removed from the tooltip, exposing the dimer dangling bonds and activating the tooltip molecule, prior to use in a diamond mechanosynthesis operation.
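The composition rules in the paragraph above can be captured in a small bookkeeping sketch. Group IV dimerholders make three bonds into the base, and the listed Group III and Group V elements make two. The tooltip also stays inactive until its capping group is removed. `TooltipMolecule` and its methods are hypothetical names introduced only for illustration. The sketch tracks state and does not model chemistry.

```python
from dataclasses import dataclass

# Dimerholder elements listed in the text and their bonds into the base.
GROUP_IV = ("Si", "Ge", "Sn", "Pb")        # most preferred, three bonds
GROUP_V = ("N", "P", "As", "Sb", "Bi")     # two bonds
GROUP_III = ("B", "Al", "Ga", "In", "Tl")  # two bonds


def bonds_into_base(element):
    """Bonds a dimerholder atom makes into the triadamantane base."""
    if element in GROUP_IV:
        return 3
    if element in GROUP_V or element in GROUP_III:
        return 2
    raise ValueError(f"{element} is not a listed dimerholder element")


@dataclass
class TooltipMolecule:
    """Hypothetical bookkeeping for a capped triadamantane dimer tooltip."""
    dimerholder: str
    capped: bool = True  # capping group passivates the dimer after synthesis

    def __post_init__(self):
        self.holder_bonds = bonds_into_base(self.dimerholder)  # validates element

    def remove_cap(self):
        """Expose the dimer dangling bonds (performed in vacuo)."""
        self.capped = False

    def ready_for_dimer_placement(self):
        return not self.capped


tip = TooltipMolecule("Ge")
print(tip.holder_bonds, tip.ready_for_dimer_placement())  # 3 False
tip.remove_cap()
print(tip.ready_for_dimer_placement())  # True
```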

The second part of the positional diamond mechanosynthesis tool is the handle structure (e.g., Figure 17). The handle structure may be a large rigid molecule, consisting in the preferred embodiment of a regular crystal, or a rod, or a cone, of pure hydrogen-terminated diamond, thus providing the greatest possible mechanical rigidity and thermal stability. At the base of the handle, the handle structure is sufficiently wide (0.1-10 microns in diameter) to be securely grasped by, or bonded to, a conventional SPM tip, a MEMS robotic end-effector, or other similarly rigid and well-controlled microscale manipulator device. Near the apex of the handle structure, the tooltip molecule is covalently bonded to the handle structure, forming an intimate and permanent connection thereto. The tooltip molecule is oriented coaxially with the handle structure, with the carbon dimer (whether capped or uncapped) of the tooltip molecule occupying the location most distal from the base of the handle structure, just as the writing tip of a sharpened pencil is most distal from the pencil eraser end.

The manufacture of the complete positional diamond mechanosynthesis tool requires four distinct steps: (1) synthesis of the capped tooltip molecule (Section 2.1), (2) attachment of the tooltip molecule to a deposition surface in a preferred orientation (Section 2.2), (3) attachment of handle structures onto the tooltip molecules (Section 2.3), and finally (4) separation of the finished tools from the deposition surface (Section 2.4). The concept of seeded growth of a useful nanoscale tool has previously been employed in the CVD growth of carbon nanotube tips for AFM [50–52].
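The four steps must be carried out in a fixed order. For example, a handle cannot be grown before the tooltip is attached to the deposition surface. The sketch below is a hypothetical process tracker that enforces this ordering. `Step` and `ToolBuild` are illustrative names, and the tracker records only sequence, not process conditions.

```python
from enum import IntEnum


class Step(IntEnum):
    SYNTHESIZE_CAPPED_TOOLTIP = 1     # Section 2.1
    ATTACH_TO_DEPOSITION_SURFACE = 2  # Section 2.2
    ATTACH_HANDLE = 3                 # Section 2.3
    SEPARATE_FROM_SURFACE = 4         # Section 2.4


class ToolBuild:
    """Hypothetical tracker that only accepts the four steps in order."""

    def __init__(self):
        self.completed = []

    @property
    def finished(self):
        return len(self.completed) == len(Step)

    def perform(self, step):
        if self.finished:
            raise RuntimeError("tool is already finished")
        expected = Step(len(self.completed) + 1)
        if step != expected:
            raise RuntimeError(f"expected {expected.name}, got {step.name}")
        self.completed.append(step)


build = ToolBuild()
for step in Step:
    build.perform(step)
print(build.finished)  # True
```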

2.1 STEP 1: Synthesis of Capped Tooltip Molecule

STEP 1. Synthesize the triadamantane tooltip molecule, with its active C2 dimer tip appropriately capped, using methods of bulk chemical synthesis derived from known synthesis pathways for functionalized polyadamantanes as found in the existing chemical literature.

While an explicit synthesis of the exact DCB6-X (X = Si, Ge, Sn, Pb) capped tooltip molecule has not yet been located in the chemical literature, the sila-adamantanes have been investigated since at least the early 1970s [53–55] and multiply-substituted adamantanes such as 1,3,5,7-tetramethyl-tetrasilaadamantane [53, 56] and other 1,3,5,7-tetrasilaadamantanes [57] have been synthesized. Adamantanes are readily functionalized with alkene C=C bonds, e.g., 2,2-divinyladamantane, a colorless liquid at room temperature [161]. Polymantanes as a class of molecules can be functionalized [58, 60] and assembled to a limited extent, including biadamantanes [63], diadamantanes [64–66] and diamantanes [67], triamantanes [68, 69], and tetramantanes [70, 71]. The Beilstein database lists over 20,000 adamantane variants and there are several excellent literature reviews of adamantane chemistry [59–63]. The molecular geometries of diamantane, triamantane, and isotetramantane have been investigated theoretically using molecular mechanics, semiempirical and ab initio approaches [72]. The core of the DCB6-X (X = Si, Ge, Sn, Pb) class of adamantane-based tooltip molecules is a single iceane molecule (Figure 2C), the smallest unit cage of lonsdaleite or hexagonal diamond (the counterpart to adamantane which is the unit cage for the more common cubic diamond lattice). The iceane molecule was first synthesized experimentally in 1974 [73–75] and more recently has been studied using the customary methods of computational chemistry [77–80]; commercial sources for hexagonal diamond (lonsdaleite) powder already exist [76].

A crucial decision to be made in a particular application of this invention is the choice of capping group to be used to passivate the two dangling bonds of the C2 dimer that is held by the tooltip molecule. The presence of the capping group converts the otherwise highly reactive C2 dimer radical into a chemically stable moiety in solution phase for the duration of the synthesis process. Only when the capping group is later removed (Section 2.2), in vacuo, does the C2 dimer resume its status as a chemically active radical. Note that for some choices of capping group it may be simpler to synthesize the capped tooltip molecule in the configuration of a double-capped single-bonded C-C dimer, then employ a subsequent process to convert the dimer bond to C=C, which would include removing half of the capping groups.

Many possible capping groups could in principle provide electronic closed-shell termination of the C2 dangling bonds, thus maximizing tooltip molecule chemical stability during conventional solution synthesis in Step 1 and during tooltip molecule attachment in Step 2 (Section 2.2). In some procedures, attachment is facilitated if the chemical structure of the capping group is highly dissimilar to the adamantane structure of the tooltip molecule, so that the capping group may be conveniently removed, e.g., by selective bond resonance excitation, during the tooltip attachment process. (Thus purely hydrocarbon-based and some other organic radicals may be problematic as capping groups.) For simplicity of analysis, ease of tooltip molecule synthesis, and ease of capping group removal, the capping group should have as few atoms as possible, all else equal. An enumeration of 400 potentially useful capping groups fulfilling the above requirements is given in Table 1, though the present invention is not limited to this partial list of illustrative exemplar moieties. As the number of atoms in the capping group increases, the combinatoric possibilities expand enormously. Some of the groups listed in Table 1 may yield tooltip molecules that are stable only at very low temperatures or only in particular chemical environments, and a few may not yet have been verified as experimentally available or even chemically stable.

Table 1. Possible capping groups for the C2 dimer tooltip molecule

| Type of Capping Group | Capping Group Atoms or Multi-atom Moieties |
|---|---|
| Single-atom, single-element (=C-cap) | -H, -F, -Cl, -Br, -I; -Li, -Na, -K, -Rb, -Cs |
| Bridge-atoms, single-element (=C-cap-C=) | -O-, -O-O-, -S-, -S-S-, -Se-, -Se-Se-, -Te-, -Te-Te-; -Be-, -Be-Be-, -Mg-, -Mg-Mg-, -Ca-, -Ca-Ca-, -Sr-, -Sr-Sr-, -Ba-, -Ba-Ba- |
| Two-atom, two-element (=C-cap) | -OH, -OF, -OCl, -OBr, -OI, -OLi, -ONa, -OK, -ORb, -OCs; -SH, -SF, -SCl, -SBr, -SI, -SLi, -SNa, -SK, -SRb, -SCs; -SeH, -SeF, -SeCl, -SeBr, -SeI, -SeLi, -SeNa, -SeK, -SeRb, -SeCs; -TeH, -TeF, -TeCl, -TeBr, -TeI, -TeLi, -TeNa, -TeK, -TeRb, -TeCs; -BeH, -BeF, -BeCl, -BeBr, -BeI; -MgH, -MgF, -MgCl, -MgBr, -MgI; -CaH, -CaF, -CaCl, -CaBr, -CaI; -SrH, -SrF, -SrCl, -SrBr, -SrI; -BaH, -BaF, -BaCl, -BaBr, -BaI |
| Bridge-atoms, two-element (=C-cap-C=) | -NH-, -NHHN-, -PH-, -PHHP-, -AsH-, -AsHHAs-, -SbH-, -SbHHSb-, -BiH-, -BiHHBi-, -BH-, -BHHB-, -AlH-, -AlHHAl-, -GaH-, -GaHHGa-, -InH-, -InHHIn-, -TlH-, -TlHHTl-; -NLi-, -NLiLiN-, -PLi-, -PLiLiP-, -AsLi-, -AsLiLiAs-, -SbLi-, -SbLiLiSb-, -BiLi-, -BiLiLiBi-, -BLi-, -BLiLiB-, -AlLi-, -AlLiLiAl-, -GaLi-, -GaLiLiGa-, -InLi-, -InLiLiIn-, -TlLi-, -TlLiLiTl-; -NF-, -NFFN-, -PF-, -PFFP-, -AsF-, -AsFFAs-, -SbF-, -SbFFSb-, -BiF-, -BiFFBi-, -BF-, -BFFB-, -AlF-, -AlFFAl-, -GaF-, -GaFFGa-, -InF-, -InFFIn-, -TlF-, -TlFFTl-; -NNa-, -NNaNaN-, -PNa-, -PNaNaP-, -AsNa-, -AsNaNaAs-, -SbNa-, -SbNaNaSb-, -BiNa-, -BiNaNaBi-, -BNa-, -BNaNaB-, -AlNa-, -AlNaNaAl-, -GaNa-, -GaNaNaGa-, -InNa-, -InNaNaIn-, -TlNa-, -TlNaNaTl-; -NCl-, -NClClN-, -PCl-, -PClClP-, -AsCl-, -AsClClAs-, -SbCl-, -SbClClSb-, -BiCl-, -BiClClBi-, -BCl-, -BClClB-, -AlCl-, -AlClClAl-, -GaCl-, -GaClClGa-, -InCl-, -InClClIn-, -TlCl-, -TlClClTl-; -NK-, -NKKN-, -PK-, -PKKP-, -AsK-, -AsKKAs-, -SbK-, -SbKKSb-, -BiK-, -BiKKBi-, -BK-, -BKKB-, -AlK-, -AlKKAl-, -GaK-, -GaKKGa-, -InK-, -InKKIn-, -TlK-, -TlKKTl-; -NBr-, -NBrBrN-, -PBr-, -PBrBrP-, -AsBr-, -AsBrBrAs-, -SbBr-, -SbBrBrSb-, -BiBr-, -BiBrBrBi-, -BBr-, -BBrBrB-, -AlBr-, -AlBrBrAl-, -GaBr-, -GaBrBrGa-, -InBr-, -InBrBrIn-, -TlBr-, -TlBrBrTl-; -NRb-, -NRbRbN-, -PRb-, -PRbRbP-, -AsRb-, -AsRbRbAs-, -SbRb-, -SbRbRbSb-, -BiRb-, -BiRbRbBi-, -BRb-, -BRbRbB-, -AlRb-, -AlRbRbAl-, -GaRb-, -GaRbRbGa-, -InRb-, -InRbRbIn-, -TlRb-, -TlRbRbTl-; -NI-, -NIIN-, -PI-, -PIIP-, -AsI-, -AsIIAs-, -SbI-, -SbIISb-, -BiI-, -BiIIBi-, -BI-, -BIIB-, -AlI-, -AlIIAl-, -GaI-, -GaIIGa-, -InI-, -InIIIn-, -TlI-, -TlIITl-; -NCs-, -NCsCsN-, -PCs-, -PCsCsP-, -AsCs-, -AsCsCsAs-, -SbCs-, -SbCsCsSb-, -BiCs-, -BiCsCsBi-, -BCs-, -BCsCsB-, -AlCs-, -AlCsCsAl-, -GaCs-, -GaCsCsGa-, -InCs-, -InCsCsIn-, -TlCs-, -TlCsCsTl- |
| Three-atom, two-element (=C-cap) | -NH2, -PH2, -AsH2, -SbH2, -BiH2, -BH2, -AlH2, -GaH2, -InH2, -TlH2; -NLi2, -PLi2, -AsLi2, -SbLi2, -BiLi2, -BLi2, -AlLi2, -GaLi2, -InLi2, -TlLi2; -NF2, -PF2, -AsF2, -SbF2, -BiF2, -BF2, -AlF2, -GaF2, -InF2, -TlF2; -NNa2, -PNa2, -AsNa2, -SbNa2, -BiNa2, -BNa2, -AlNa2, -GaNa2, -InNa2, -TlNa2; -NCl2, -PCl2, -AsCl2, -SbCl2, -BiCl2, -BCl2, -AlCl2, -GaCl2, -InCl2, -TlCl2; -NK2, -PK2, -AsK2, -SbK2, -BiK2, -BK2, -AlK2, -GaK2, -InK2, -TlK2; -NBr2, -PBr2, -AsBr2, -SbBr2, -BiBr2, -BBr2, -AlBr2, -GaBr2, -InBr2, -TlBr2; -NRb2, -PRb2, -AsRb2, -SbRb2, -BiRb2, -BRb2, -AlRb2, -GaRb2, -InRb2, -TlRb2; -NI2, -PI2, -AsI2, -SbI2, -BiI2, -BI2, -AlI2, -GaI2, -InI2, -TlI2; -NCs2, -PCs2, -AsCs2, -SbCs2, -BiCs2, -BCs2, -AlCs2, -GaCs2, -InCs2, -TlCs2 |
| Organic radicals (=C-cap) | methyl (-CH3), vinyl (-CH=CH2), ethyl (-CH2CH3), etc.; carboxyl (-COOH), methoxy (-OCH3), etc.; formyl (-CHO), acetyl (-COCH3), etc.; phenyl (-C6H5), etc. |
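Apart from the organic radicals, the inorganic entries of Table 1 follow a regular combinatorial pattern, which is why the list grows so quickly as capping groups gain atoms. The minimal Python sketch below regenerates those entries from small element sets read off the table and counts them per row. The element-set names and the function `table1_inorganic_caps` are illustrative assumptions, not part of the original process description.

```python
from itertools import product

# Element sets read off Table 1 (the grouping names are illustrative only).
MONOVALENT = ["H", "F", "Cl", "Br", "I", "Li", "Na", "K", "Rb", "Cs"]
HYDROGEN_AND_HALOGENS = ["H", "F", "Cl", "Br", "I"]
CHALCOGENS = ["O", "S", "Se", "Te"]
ALKALINE_EARTHS = ["Be", "Mg", "Ca", "Sr", "Ba"]
GROUP_III_AND_V = ["N", "P", "As", "Sb", "Bi", "B", "Al", "Ga", "In", "Tl"]


def table1_inorganic_caps() -> dict[str, list[str]]:
    """Rebuild the inorganic rows of Table 1 as condensed formula strings."""
    bridge_centers = CHALCOGENS + ALKALINE_EARTHS
    pairs = list(product(GROUP_III_AND_V, MONOVALENT))
    return {
        "single-atom (=C-cap)": [f"-{x}" for x in MONOVALENT],
        # one bridging atom (-O-) or a doubled bridge (-O-O-)
        "bridge, single-element (=C-cap-C=)": [f"-{b}-" for b in bridge_centers]
        + [f"-{b}-{b}-" for b in bridge_centers],
        # chalcogens take all ten partners; alkaline earths only H and halogens
        "two-atom, two-element (=C-cap)": [f"-{c}{x}" for c, x in product(CHALCOGENS, MONOVALENT)]
        + [f"-{e}{x}" for e, x in product(ALKALINE_EARTHS, HYDROGEN_AND_HALOGENS)],
        # e.g. -NH- and its doubled form -NHHN-
        "bridge, two-element (=C-cap-C=)": [f"-{c}{x}-" for c, x in pairs]
        + [f"-{c}{x}{x}{c}-" for c, x in pairs],
        # e.g. -NH2
        "three-atom, two-element (=C-cap)": [f"-{c}{x}2" for c, x in pairs],
    }


if __name__ == "__main__":
    caps = table1_inorganic_caps()
    for row, groups in caps.items():
        print(f"{row}: {len(groups)}")
    print("total inorganic:", sum(len(g) for g in caps.values()))
```

Run as a script, this reports 393 inorganic entries; adding the eight named organic radicals brings the total close to the figure of 400 quoted in the text.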

The precise choice of capping group is determined by the desired interactions of tooltip molecules with the selected deposition surface (as described in Step 2 (Section 2.2) and Step 4 (Section 2.4)), but also by the desired interactions of tooltip molecules with themselves, e.g., during synthesis. There are at least four relevant factors which must be considered.

First, from the standpoint of basic utility the ideal capping group: (1) should be loosely bound to the dimer, thus easily released in order to uncap (and activate) the tooltip; (2) should form only a single bond with carbon; and (3) should be very simple, hence relatively easy to synthesize in a polymantane system. A few capping atoms that meet these criteria are given in Table 2.

Table 2. Bonding energies between capping group and carbon or diamond (modified from [4])

| Possible Tooltip Molecule Capping Atoms | Bond Energy to Carbon (kcal/mole) | Bond Energy to Diamond* (kcal/mole) |
|---|---|---|
| Iodine (I) | 52 | 49.5 |
| Sulfur (S) | 65 | – |
| Bromine (Br) | 68 | 63 |
| Silicon (Si) | 72 | – |
| Nitrogen (N) | 73 | – |
| Methoxy (OCH3) | – | 78 |
| Chlorine (Cl) | 81 | 78.5 |
| Carbon (C) | 83 | 80 |
| Oxygen (O) | 86 | – |
| Hydroxyl (OH) | – | 90.5 |
| Hydrogen (H) | 99 | 91 |
| Fluorine (F) | 116 | 103 |

Note: * values given are the binding energies of tertiary carbon atoms to the capping atoms, i.e., the bonding energy between capping atoms and a carbon atom which is bound to three other carbon atoms.

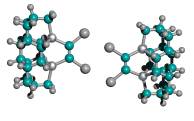

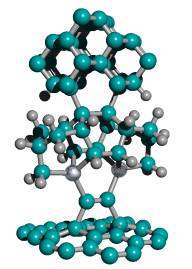

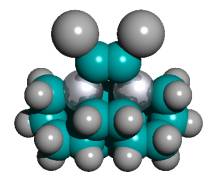

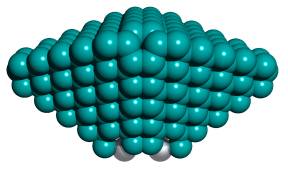

For ease of release alone, Table 2 implies that a preferred embodiment is to use two iodine atoms as the C2 dimer capping group of the tooltip molecule, as shown in Figure 3 below, right, though other capping groups may also serve in this capacity.
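The "ease of release" reading of Table 2 amounts to choosing the cap with the weakest bond to carbon. The sketch below encodes the carbon column of Table 2 for the monovalent caps only; treating S, Si, N, C, and O as failing criterion (2) above (single bond to carbon) is an assumption of this sketch, and the OCH3 and OH entries are left out because the table gives no carbon-column value for them. The kcal/mol-to-eV factor is the standard conversion; the function name is hypothetical.

```python
# Bond energy to carbon (kcal/mol) from Table 2, monovalent caps only.
BOND_TO_CARBON_KCAL = {"I": 52, "Br": 68, "Cl": 81, "H": 99, "F": 116}
EV_PER_KCAL_MOL = 0.0433641  # 1 kcal/mol per bond is about 0.04336 eV per bond


def rank_caps_by_release_ease(energies):
    """Return (cap, kcal/mol, eV) tuples, weakest bond (easiest release) first."""
    ranked = sorted(energies.items(), key=lambda item: item[1])
    return [(cap, kcal, round(kcal * EV_PER_KCAL_MOL, 2)) for cap, kcal in ranked]


if __name__ == "__main__":
    for cap, kcal, ev in rank_caps_by_release_ease(BOND_TO_CARBON_KCAL):
        print(f"{cap:>2}: {kcal:>3} kcal/mol ~ {ev:.2f} eV")
```

Iodine comes out first, at roughly 2.25 eV per C-I bond, which is the basis for the iodine-capped preferred embodiment.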

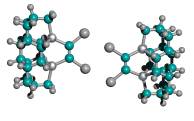

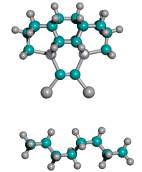

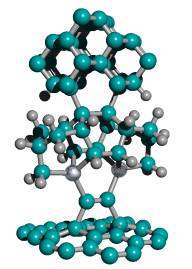

Figure 3. DCB6-Ge tooltip molecule, uncapped (left), and capped (right) with iodine atoms

(A) uncapped

(B) capped with iodine atoms

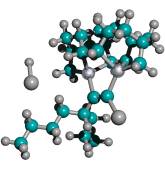

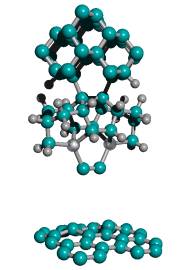

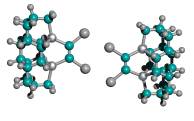

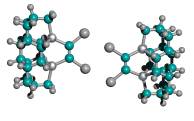

Second, during bulk chemical synthesis using conventional techniques in solution phase, the capped tooltip molecule should not spontaneously dimerize across the C2 working tips. Dimerization can occur between two tooltip molecules across one bond or two bonds, as shown in Figure 4. Table 3 shows the results of geometry-optimized energy-minimization calculations, using the semi-empirical AM1 method, for the DCB6-Ge capped tooltip molecule [235] in various stages of “tip-on-tip” dimerization, for a variety of capping groups, in vacuo.

With no protective capping group in place, tip-to-tip dimerization is very energetically favorable. Tooltip molecule dimerization is energetically unfavorable to varying degrees for 1-atom capping groups consisting of, for example, -I, -Cl, -F, -Na, and -Li, and also for several 2-atom capping groups including hydroxyl (-OH), amine (-NH2), oxylithyl (-OLi), oxyiodinyl (-OI), and sulfiodinyl (-SI). In the case of some 2-atom oxyl (-OF), sulfyl (-SS-, -SH, -SF), and selenyl (-SeH) capping groups, dimerization is energetically unfavorable for direct =C-C= bonds linking the two tooltip molecules but appears likely to occur if dimerization occurs through an oxygen, sulfur (e.g., =C-S-C= or =C-S-S-C=) or selenium atom in the dimerization bond(s) linking the two tooltip molecules. Single-bond dimerization of an H-capped tooltip molecule with release of H2 is also energetically favorable, though double-bond dimerization for H-capped tooltips with the release of 2H2 appears unfavorable.

These analyses should be repeated using ab initio techniques, and should be extended to include a calculation of activation energy barriers (which could be substantial), weak ionic forces that could lead to crystallization (in the case of capping groups containing metal or semi-metal atoms), and solvent effects, all of which could affect the results. As a limited example of one such study, Mann et al [38] found that the dimerization reaction enthalpies of uncapped DCB6-Si and DCB6-Ge tooltip molecules were -1.64 eV and -1.84 eV, respectively, but that the energy barriers to the dimerization reaction were 1.93 eV and 1.86 eV, respectively. Therefore the dimerization of uncapped DCB6-Si and DCB6-Ge tooltip molecules “is thermodynamically favored but not kinetically favored. Due to the electron correlation errors in DFT these barrier heights may be considerably overestimated, therefore both reactions may be kinetically accessible at room temperature.” Subsequent work [235] appears to have confirmed that both tooltips work well, as expected, on the diamond C(110) surface, with the DCB6-Ge structure emerging as the preferred dimer placement tooltip molecule.
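The distinction between "thermodynamically favored" and "kinetically favored" can be made concrete with an Arrhenius estimate, k = A exp(-E_a / k_B T). The sketch below treats each barrier as a simple first-order process with a generic attempt frequency A = 10¹³ s⁻¹ at T = 300 K; both values, and the first-order simplification of what is really a bimolecular reaction, are assumptions of this sketch rather than inputs from Mann et al. It also inverts the expression to find the barrier height that would give a one-hour half-life, which indicates how far the DFT barriers would need to be overestimated before dimerization became a practical concern. All function names are hypothetical.

```python
import math

K_B_EV_PER_K = 8.617333262e-5  # Boltzmann constant (eV/K)
ASSUMED_PREFACTOR_HZ = 1e13    # generic attempt frequency; an assumption


def rate_per_s(barrier_ev, temp_k=300.0, prefactor_hz=ASSUMED_PREFACTOR_HZ):
    """First-order Arrhenius rate constant for crossing a barrier of barrier_ev."""
    return prefactor_hz * math.exp(-barrier_ev / (K_B_EV_PER_K * temp_k))


def half_life_s(barrier_ev, temp_k=300.0, prefactor_hz=ASSUMED_PREFACTOR_HZ):
    """Half-life (s) of the reactant under the first-order approximation."""
    return math.log(2) / rate_per_s(barrier_ev, temp_k, prefactor_hz)


def barrier_for_half_life_ev(target_s, temp_k=300.0, prefactor_hz=ASSUMED_PREFACTOR_HZ):
    """Barrier height (eV) at which the half-life equals target_s."""
    return K_B_EV_PER_K * temp_k * math.log(prefactor_hz * target_s / math.log(2))


if __name__ == "__main__":
    # Dimerization barriers for uncapped tooltips, as reported by Mann et al [38].
    for name, barrier in [("DCB6-Si", 1.93), ("DCB6-Ge", 1.86)]:
        print(f"{name}: Ea = {barrier} eV, half-life ~ {half_life_s(barrier):.1e} s")
    print(f"barrier for a 1-hour half-life: {barrier_for_half_life_ev(3600.0):.2f} eV")
```

Under these assumptions the reported barriers correspond to half-lives of order 10¹⁸ s or longer, whereas a barrier of roughly 1 eV would give a half-life of about an hour at room temperature; that gap is why the quoted caveat about DFT overestimating the barriers matters.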

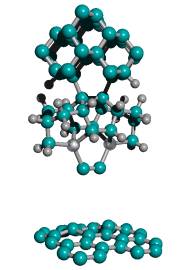

Figure 4. Progressive stages of possible “tip-on-tip” dimerization of capped tooltip molecules

(A) undimerized

(B) dimerized (1-bond)

(C) dimerized (2-bond)

Table 3. Energy minimization calculations for DCB6-Ge capped tooltip molecule “tip-on-tip” dimerization, using semi-empirical AM1 (0 eV = lowest-energy configuration)

| Tooltip Molecule Capping Group | Undimerized Tooltip Molecule (eV) | Lowest-E Dimerized Tooltip Molecule, 1-bond (eV) | Lowest-E Dimerized Tooltip Molecule, 2-bond (eV) |
|---|---|---|---|
| Dioxyl (=C-O-O-C=) | forms unstable cyclic peroxides (ozonides) | | |
| Diberyl (=C-Be-Be-C=), Be in dimerizing bond(s) | +11.256 | +5.013 | 0 |
| Diberyl, no Be in dimerizing bond(s) | +11.256 | +12.874 | – |
| Oxygen (=C-O-C=), including ozonides | +9.214 | +7.520 | 0 |
| Oxygen, excluding ozonides | +9.214 | +10.775 | +0.492 |
| Oxygen, O in dimerizing bond(s) | +9.214 | +7.520 | 0 |
| Oxygen, no O in dimerizing bond(s) | +9.214 | – | +5.466 |
| Beryllium (=C-Be-C=) | +7.293 | +2.472 | 0 |
| Sulfur (=C-S-C=), S in dimerizing bond(s) | +7.089 | +2.843 | 0 |
| Sulfur, no S in dimerizing bond(s) | +7.089 | – | +6.661 |
| Imide (=C-NH-C=) | +7.015 | +5.173 | 0 |
| Diselenyl (=C-Se-Se-C=)*, Se in dimerizing bond(s) | +6.563 | +2.141 | +1.969 |
| Diselenyl*, no Se in dimerizing bond(s) | +6.563 | +5.870 | 0 |
| Diamine (=C-NHHN-C=), N in dimerizing bond(s) | +6.004 | +1.438 | 0 |
| Diamine, no N in dimerizing bond(s) | +6.004 | +0.923 | +6.315 |
| Selenium (=C-Se-C=)*, Se in dimerizing bond(s) | +6.346 | +3.565 | 0 |
| Selenium*, no Se in dimerizing bond(s) | +6.346 | – | +6.173 |
| NO CAPPING GROUP | +4.585 | – | 0 |
| Nitrodiiodinyl (I2N-C=C-NI2), N in dimerizing bond(s) | +3.702 | +4.881 | +3.594 |
| Nitrodiiodinyl, no N in dimerizing bond(s) | +3.702 | 0 | +1.471 |
| Disulfyl (=C-S-S-C=), S in dimerizing bond(s) | +3.545 | +0.612 | 0 |
| Disulfyl, no S in dimerizing bond(s) | +3.545 | +3.871 | +4.799 |
| Selenohydryl (H-Se-C=C-Se-H)*, Se in dimerizing bond(s) | +3.320 | +1.545 | 0 |
| Selenohydryl*, no Se in dimerizing bond(s) | +3.320 | +5.463 | +10.295 |
| Magnesium (=C-Mg-C=)*, Mg in dimerizing bond(s) | +2.886 | +1.544 | 0 |
| Magnesium*, no Mg in dimerizing bond(s) | +2.886 | – | +2.012 |
| Oxybromyl (Br-O-C=C-O-Br), O in dimerizing bond(s) | +2.271 | 0 | +0.771 |
| Oxybromyl, no O in dimerizing bond(s) | +2.271 | +5.662 | +10.001 |
| Phosphohydryl (H2P-C=C-PH2), P in dimerizing bond(s) | +1.322 | +1.398 | +0.936 |
| Phosphohydryl, no P in dimerizing bond(s) | +1.322 | 0 | +1.926 |
| Oxyfluoryl (F-O-C=C-O-F), O in dimerizing bond(s) | +1.242 | +0.786 | 0 |
| Oxyfluoryl, no O in dimerizing bond(s) | +1.242 | +2.479 | +6.467 |
| Dimagnesyl (=C-Mg-Mg-C=)*, Mg in dimerizing bond(s) | +1.206 | – | 0 |
| Dimagnesyl*, no Mg in dimerizing bond(s) | +1.206 | +1.229 | +3.204 |
| Nitrodifluoryl (F2N-C=C-NF2), N in dimerizing bond(s) | +1.160 | +0.642 | 0 |
| Nitrodifluoryl, no N in dimerizing bond(s) | +1.160 | +2.023 | +6.597 |
| Fluorosulfyl (F-S-C=C-S-F), S in dimerizing bond(s) | +0.648 | +0.593 | 0 |
| Fluorosulfyl, no S in dimerizing bond(s) | +0.648 | +1.349 | +5.509 |
| Sulfobromyl (Br-S-C=C-S-Br), S in dimerizing bond(s) | +0.425 | 0 | +0.742 |
| Sulfobromyl, no S in dimerizing bond(s) | +0.425 | +0.426 | +5.733 |
| Hydrogen (H-C=C-H) | +0.379 | 0 | +3.193 |
| Bromine (Br-C=C-Br) | +0.070 | 0 | +3.426 |
| Sulfhydryl (H-S-C=C-S-H), S in dimerizing bond(s) | +0.075 | +0.317 | 0 |
| Sulfhydryl, no S in dimerizing bond(s) | +0.075 | +0.856 | +5.415 |
| Amine (H2N-C=C-NH2), N in dimerizing bond(s) | 0 | +0.166 | +0.512 |
| Amine, no N in dimerizing bond(s) | 0 | +0.969 | +5.598 |
| Iodine (I-C=C-I) | 0 | +0.171 | +3.621 |
| Chlorine (Cl-C=C-Cl) | 0 | +0.236 | +4.089 |
| Sulfiodinyl (I-S-C=C-S-I), S in dimerizing bond(s) | 0 | +0.212 | +0.166 |
| Sulfiodinyl, no S in dimerizing bond(s) | 0 | +0.525 | +5.175 |
| Borohydryl (H2B-C=C-BH2), B in dimerizing bond(s) | 0 | +0.239 | +0.926 |
| Borohydryl, no B in dimerizing bond(s) | 0 | +0.270 | +4.153 |
| Oxyiodinyl (I-O-C=C-O-I), O in dimerizing bond(s) | 0 | +0.631 | +0.467 |
| Oxyiodinyl, no O in dimerizing bond(s) | 0 | +2.705 | +5.475 |
| Hydroxyl (H-O-C=C-O-H), O in dimerizing bond(s) | 0 | +0.607 | +0.576 |
| Hydroxyl, no O in dimerizing bond(s) | 0 | +2.839 | +6.830 |
| Berylfluoryl (F-Be-C=C-Be-F), Be in dimerizing bond(s) | 0 | +1.417 | +2.680 |
| Berylfluoryl, no Be in dimerizing bond(s) | 0 | +1.092 | +4.375 |
| Seleniodinyl (I-Se-C=C-Se-I)*, Se in dimerizing bond(s) | 0 | +1.418 | +7.364 |
| Seleniodinyl*, no Se in dimerizing bond(s) | 0 | +7.294 | +9.901 |
| Berylchloryl (Cl-Be-C=C-Be-Cl), Be in dimerizing bond(s) | 0 | +1.524 | +2.625 |
| Berylchloryl, no Be in dimerizing bond(s) | 0 | +1.633 | +5.260 |
| Oxylithyl (Li-O-C=C-O-Li), O in dimerizing bond(s) | 0 | +1.705 | +3.803 |
| Oxylithyl, no O in dimerizing bond(s) | 0 | +4.539 | +11.752 |
| Selenobromyl (Br-Se-C=C-Se-Br)*, Se in dimerizing bond(s) | 0 | +2.077 | +6.670 |
| Selenobromyl*, no Se in dimerizing bond(s) | 0 | +4.826 | +8.683 |
| Fluorine (F-C=C-F) | 0 | +3.048 | +9.682 |
| Sodium (Na-C=C-Na)** | 0 | +3.753 | +11.766 |
| Lithium (Li-C=C-Li) | 0 | +10.941 | +23.698 |

Notes: * energy minimization computed using PM3 instead of AM1; ** energy minimization computed using MNDO/d instead of AM1.

In the case of bromine, and to a lesser extent in several other cases, the undimerized and 1-bond dimerized forms appear energetically almost equivalent, although 2-bond dimerization is energetically unlikely. Application of the process described in Step 2 using a capping group having this characteristic could result in a mixture of undimerized and 1-bond dimerized tooltips attached to the deposition surface. In the event that some 1-bond dimerizations occur and that a few dimerized tooltip molecules are subsequently inserted into the deposition surface during Step 2, the distinctive two-lobed geometric signature of these dimerized nucleation seeds can be detected and mapped via SPM scan prior to Step 3, and subsequently avoided during tool detachment in Step 4. Surface editing is another approach. Due to the low surface nucleation density (Section 2.2.1), after the aforementioned mapping procedure it may be possible to selectively detach and remove from the surface all attached dimerized tooltip molecules that are detected, e.g., using focused ion beam, electron beam, or NSOM photoionization, subtractively editing the deposition surface prior to commencing CVD in Step 3. An alternative to subtractive editing is additive editing, wherein FIB deposition of new substrate atoms on and around the dimerized tooltip molecule can effectively bury it under a smooth mound of fresh substrate, again preventing nucleation of diamond at that site during Step 3.
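The reading of Table 3 used above (compare the undimerized energy with the lowest dimerized energy in the same row, and flag rows where the two are close) can be written down directly. In this sketch only a few rows of Table 3 are transcribed, and the 0.1 eV "near-degenerate" tolerance is a hypothetical choice, not a value from the source; the function name is likewise illustrative.

```python
# (undimerized, 1-bond dimer, 2-bond dimer) energies in eV from Table 3
# (0 eV = lowest-energy configuration); None marks a configuration with no value.
TABLE3_ROWS = {
    "no capping group": (4.585, None, 0.0),
    "hydrogen (-H)": (0.379, 0.0, 3.193),
    "bromine (-Br)": (0.070, 0.0, 3.426),
    "iodine (-I)": (0.0, 0.171, 3.621),
    "chlorine (-Cl)": (0.0, 0.236, 4.089),
}
NEAR_DEGENERATE_EV = 0.1  # hypothetical tolerance, not from the source


def classify_dimerization(row, tol_ev=NEAR_DEGENERATE_EV):
    """Label a row by comparing the best dimerized state with the undimerized one."""
    undimerized, *dimerized = row
    best_dimer = min(e for e in dimerized if e is not None)
    margin = best_dimer - undimerized  # > 0 means dimerizing costs energy
    if abs(margin) <= tol_ev:
        return "near-degenerate"
    return "unfavorable" if margin > 0 else "favorable"


if __name__ == "__main__":
    for cap, row in TABLE3_ROWS.items():
        print(f"{cap}: dimerization {classify_dimerization(row)}")
```

With this tolerance, bromine falls into the near-degenerate band, matching the observation above that its undimerized and 1-bond dimerized forms are almost equivalent, while the uncapped and H-capped molecules are flagged as dimerization-prone.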

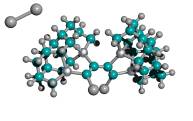

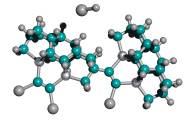

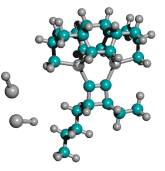

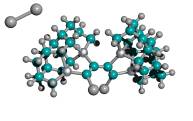

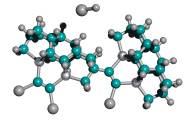

Third, the capped-C2 tip of the capped tooltip molecule should not spontaneously recombine into the side or the bottom of the adamantane base of neighboring tooltip molecules, during synthesis or storage, as illustrated in Figure 5 for a side-bonding event. Recombination can occur between two tooltip molecules across one bond or two bonds. Table 4 shows the results of semi-empirical energy calculations using AM1 for the DCB6-Ge capped tooltip molecule in two particular cases of “tip-on-base” side-bonding recombination, for a variety of capping groups, in vacuo.

With no protective capping group, tip-on-base recombination is very energetically preferred, with 1-bond recombination preferred over 2-bond when the H atom released from the adamantane base during formation of the 1-bond link becomes bonded with the remaining dangling bond of the tip-held C2 dimer. Mann et al [38] showed that intermolecular dehydrogenation from the bottom of the adamantane base by a neighboring uncapped tooltip molecule is exothermic and kinetically accessible (against a 0.48 eV reaction energy barrier) at room temperature. However, with an appropriate cap in place, tooltip molecule recombination is energetically unfavorable to varying degrees, e.g., for 1-atom capping groups consisting of -I, -Br, -Na, and -Li, and also for several 2-atom capping groups including hydroxyl (-OH), amine (-NH2), oxylithyl (-OLi), seleniodinyl (-SeI), several sulfyl groups including sulfhydryl (-SH), sulfiodinyl (-SI), and sulfalithyl (-SLi), and dimagnesyl (-MgMg-). There may be some tip-to-tip ionic bonding for beryllium (-Be-), lithium, oxylithyl, seleniodinyl, selenobromyl (-SeBr), berylfluoryl (-BeF) and berylchloryl (-BeCl) capping groups, and the imide (-NH-) cap appears to twist the tooltip dimer out of horizontal alignment. In the case of some 2-atom sulfyl (-SF, -SBr) and selenyl (-SeH) capping groups, recombination is energetically unfavorable for direct =C-C= bonds linking the two tooltip molecules but appears likely to occur if recombination proceeds through a sulfur (e.g., =C-S-C= or =C-S-S-C=) or selenium atom in the recombination bond(s) linking the two tooltip molecules. Single-bond recombination of an H-capped tooltip molecule with release of H2 is slightly energetically favorable, though double-bond recombination for H-capped tooltips with release of 2H2 appears very unfavorable energetically.
These analyses should be repeated using ab initio techniques, and should be extended to include a calculation of activation energy barriers (which could be substantial), weak ionic forces that could lead to crystallization (in the case of capping groups containing metal atoms), and solvent effects, all of which could affect the results.

Figure 5. Progressive stages of possible “tip-on-base” recombination of capped tooltip molecules

(A) unrecombined

(B) 1-bond recombination

(C) 2-bond recombination

Table 4. Energy minimization calculations for DCB6-Ge capped tooltip molecule “tip-on-base” recombination with adamantane base of tooltip molecule, using semi-empirical AM1 (0 eV = lowest-energy configuration)

| Tooltip Molecule Capping Group | Unrecombined (eV) | Recombined, 1 bond (eV) | Recombined, 2 bonds (eV) |
|---|---|---|---|
| Oxyfluoryl (F-O-C=C-O-F), O in recombining bond(s) | +8.306 | +4.557 | 0 |
| Oxyfluoryl, no O in recombining bond(s) | +8.306 | +7.973 | +10.788 |
| Oxygen (=C-O-C=) | +4.622 | 0 | +2.997 |
| Nitrodifluoryl (F2N-C=C-NF2), N in recombining bond(s) | +4.228 | +2.779 | 0 |
| Nitrodifluoryl, no N in recombining bond(s) | +4.228 | +4.011 | +6.015 |
| Beryllium (=C-Be-C=) | +3.544 | 0 | +4.335 |
| Diselenyl (=C-Se-Se-C=)*, Se in recombining bond(s) | +3.306 | +2.765 | 0 |
| Diselenyl*, no Se in recombining bond(s) | +3.306 | +2.563 | +6.508 |
| NO CAPPING GROUP | +3.207 | 0 | +1.333 |
| Diamine (=C-NHHN-C=), N in recombining bond(s) | +3.118 | +0.014 | 0 |
| Diamine, no N in recombining bond(s) | +3.118 | +0.622 | +3.238 |
| Sulfur (=C-S-C=) | +3.106 | 0 | +3.859 |
| Imide (=C-NH-C=) | +2.883 | 0 | +2.729 |
| Diberyl (=C-Be-Be-C=), Be in recombining bond(s) | +2.147 | 0 | +0.663 |
| Diberyl, no Be in recombining bond(s) | +2.147 | +0.154 | +3.393 |
| Oxybromyl (Br-O-C=C-O-Br), O in recombining bond(s) | +2.027 | +1.815 | 0 |
| Oxybromyl, no O in recombining bond(s) | +2.027 | +2.004 | +5.019 |
| Selenium (=C-Se-C=)* | +1.788 | 0 | +3.680 |
| Fluorosulfyl (F-S-C=C-S-F), S in recombining bond(s) | +1.583 | +1.312 | 0 |
| Fluorosulfyl, no S in recombining bond(s) | +1.583 | +2.365 | +6.057 |
| Fluorine (F-C=C-F) | +0.771 | 0 | +2.620 |
| Selenohydryl (H-Se-C=C-Se-H)*, Se in recombining bond(s) | +0.668 | +1.544 | 0 |
| Selenohydryl*, no Se in recombining bond(s) | +0.668 | +4.596 | +8.318 |
| Oxyiodinyl (I-O-C=C-O-I), O in recombining bond(s) | +0.353 | +0.502 | 0 |
| Oxyiodinyl, no O in recombining bond(s) | +0.353 | +0.257 | +3.334 |
| Sulfobromyl (Br-S-C=C-S-Br), S in recombining bond(s) | +0.351 | +0.531 | 0 |
| Sulfobromyl, no S in recombining bond(s) | +0.351 | +0.879 | +5.087 |
| Magnesium (=C-Mg-C=)* | +0.258 | 0 | +3.352 |
| Borohydryl (H2B-C=C-BH2), B in recombining bond(s) | +0.209 | +0.237 | 0 |
| Borohydryl, no B in recombining bond(s) | +0.209 | +1.073 | +4.215 |
| Chlorine (Cl-C=C-Cl) | +0.111 | 0 | +3.121 |
| Nitrodiiodinyl (I2N-C=C-NI2), N in recombining bond(s) | +0.068 | +1.086 | 0 |
| Nitrodiiodinyl, no N in recombining bond(s) | +0.068 | +1.469 | +3.632 |
| Hydrogen (H-C=C-H) | 0 | +0.117 | +2.679 |
| Hydroxyl (H-O-C=C-O-H), O in recombining bond(s) | 0 | +1.304 | +1.570 |
| Hydroxyl, no O in recombining bond(s) | 0 | +0.143 | +3.235 |
| Bromine (Br-C=C-Br) | 0 | +0.276 | +3.538 |
| Phosphohydryl (H2P-C=C-PH2), P in recombining bond(s) | 0 | +0.662 | +0.615 |
| Phosphohydryl, no P in recombining bond(s) | 0 | +0.399 | +2.607 |
| Amine (H2N-C=C-NH2), N in recombining side bond(s) | 0 | +1.066 | +0.992 |
| Amine, N in recombining bottom bond(s) | 0 | +1.043 | +1.854 |
| Amine, no N in recombining side bond(s) | 0 | +0.423 | +3.025 |
| Amine, no N in recombining bottom bond(s) | 0 | +0.744 | +2.444 |
| Dimagnesyl (=C-Mg-Mg-C=)*, Mg in recombining bond(s) | 0 | +0.731 | +1.196 |
| Dimagnesyl*, no Mg in recombining bond(s) | 0 | +1.294 | +3.229 |
| Iodine (I-C=C-I) | 0 | +0.785 | +4.256 |
| Sulfhydryl (H-S-C=C-S-H), S in recombining bond(s) | 0 | +0.799 | +0.379 |
| Sulfhydryl, no S in recombining bond(s) | 0 | +0.890 | +4.701 |
| Sulfiodinyl (I-S-C=C-S-I), S in recombining bond(s) | 0 | +0.833 | +0.425 |
| Sulfiodinyl, no S in recombining bond(s) | 0 | +0.921 | +5.383 |
| Oxylithyl (Li-O-C=C-O-Li), O in recombining bond(s) | 0 | +2.218 | +0.089 |
| Oxylithyl, no O in recombining bond(s) | 0 | +1.148 | +4.156 |
| Sodium (Na-C=C-Na)** | 0 | +1.225 | +4.813 |
| Berylfluoryl (F-Be-C=C-Be-F), Be in recombining bond(s) | 0 | +1.842 | +2.665 |
| Berylfluoryl, no Be in recombining bond(s) | 0 | +1.635 | +5.569 |
| Sulfalithyl (Li-S-C=C-S-Li), S in recombining bond(s) | 0 | +3.018 | +0.973 |
| Sulfalithyl, no S in recombining bond(s) | 0 | +2.032 | +7.264 |
| Berylchloryl (Cl-Be-C=C-Be-Cl), Be in recombining bond(s) | 0 | +3.430 | +5.542 |
| Berylchloryl, no Be in recombining bond(s) | 0 | +2.057 | +6.162 |
| Lithium (Li-C=C-Li) | 0 | +3.700 | +7.444 |
| Selenobromyl (Br-Se-C=C-Se-Br)*, Se in recombining bond(s) | 0 | +5.340 | +5.145 |
| Selenobromyl*, no Se in recombining bond(s) | 0 | +7.749 | +10.775 |
| Seleniodinyl (I-Se-C=C-Se-I)*, Se in recombining bond(s) | 0 | +8.123 | +11.421 |
| Seleniodinyl*, no Se in recombining bond(s) | 0 | +10.503 | +14.970 |

Notes: * energy minimization computed using PM3 instead of AM1; ** energy minimization computed using MNDO/d instead of AM1.

In the case of chlorine, and to a lesser extent in several other cases, the unrecombined and 1-bond recombined forms appear energetically almost equivalent, although 2-bond recombination is energetically unlikely. Application of the process described in Step 2 using a capping group having this characteristic could result in a mixture of unrecombined and 1-bond recombined tooltips attached to the deposition surface. In the event that some 1-bond recombinations occur and that a few recombined tooltip molecules are subsequently inserted into the deposition surface during Step 2, the distinctive two-lobed geometric signature of these recombined nucleation seeds can be detected and mapped via SPM scan prior to Step 3, and subsequently avoided during tool detachment in Step 4. Surface editing is another approach. Due to the low surface nucleation density (Section 2.2.1), after the aforementioned mapping procedure it may be possible to selectively detach and remove from the surface all attached recombined tooltip molecules that are detected, e.g., using focused ion beam, electron beam, or NSOM photoionization, subtractively editing the deposition surface prior to commencing CVD in Step 3. An alternative to subtractive editing is additive editing, wherein FIB deposition of new substrate atoms on and around the recombined tooltip molecule can effectively bury it under a smooth mound of fresh substrate, again preventing nucleation of diamond at that site during Step 3.

Fourth, the capped-C2 tip of the capped tooltip molecule should not spontaneously react with solvent, feedstock, or catalyst molecules that are employed during conventional techniques for the bulk chemical synthesis of functionalized adamantanes in solution phase. A definitive result regarding this capping-group selection factor depends critically upon the exact synthesis pathways required.

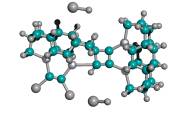

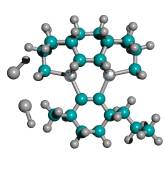

As a proxy for these many pathways, it has been shown that even straight-chain hydrocarbons, upon exposure to the customary aluminum halide catalysts at high temperature, readily produce mixtures of various polymethyladamantanes [81]. The simplest-case recombination event illustrated in Figure 6 was analyzed via semi-empirical energy calculations using AM1 for the DCB6-Ge tooltip molecule, with each of the capping groups listed in Table 5, in the specific instances of 1-bond and 2-bond side-bonding recombination with a simple straight-chain hydrocarbon molecule (n-octane). The 2-bond analysis includes one event in which the second bond occurs adjacent to the first, producing a 4-carbon ring with the octane molecule, and a second alternative event in which the second bond occurs with an octane chain carbon atom three positions down the chain, producing a more stable 6-carbon ring with the octane molecule. Since solvent effects, temperature, reverse reaction rates, and so forth will determine whether the reaction can occur, and will also determine the relative yields of various products and reactants, the thermodynamic results indicate primarily the relative ease or difficulty of maintaining the given capped tooltip molecule stably in solution with liquid n-octane. The data in Table 5 show that iodine (-I), hydrogen (-H), amine (-NH2), and perhaps bromine (-Br) capped tooltip molecules should be the most stable in hydrocarbon media, as should seleniodinyl (-SeI) and several sulfyl-capped molecules including sulfhydryl (-SH), sulfiodinyl (-SI), and sulfobromyl (-SBr). Fluorine- and oxygen-containing capping groups may be (relatively) less stable.
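One simple way to condense Table 5 into a single number per capping group is a stability margin: the lowest recombined energy minus the not-recombined energy, so that a positive value means recombination with n-octane is uphill in these semi-empirical calculations. The sketch below transcribes a subset of Table 5 rows; the margin metric and function names are illustrative assumptions, the metric ignores kinetics and solvent effects exactly as the text cautions, and margins of a few tenths of an eV are likely within the uncertainty of semi-empirical methods.

```python
# Energies in eV from Table 5 (0 eV = lowest-energy configuration):
# (not recombined, 1 bond, 2 bonds with 4-carbon ring, 2 bonds with 6-carbon ring)
TABLE5_ROWS = {
    "Iodine (-I)": (0.0, 0.147, 2.041, 0.120),
    "Hydrogen (-H)": (0.081, 0.069, 1.939, 0.0),
    "Amine (-NH2)": (0.0, 0.148, 2.263, 0.301),
    "Bromine (-Br)": (0.346, 0.214, 1.946, 0.0),
    "Sulfhydryl (-SH)": (0.0, 0.465, 2.346, 0.759),
    "Sulfiodinyl (-SI)": (0.0, 0.478, 2.579, 0.832),
    "Sulfobromyl (-SBr)": (0.0, 0.526, 1.678, 1.082),
    "Seleniodinyl (-SeI)": (0.0, 1.474, 0.834, 1.498),
    "Hydroxyl (-OH)": (0.948, 0.472, 1.987, 0.0),
    "Fluorine (-F)": (1.989, 1.029, 1.999, 0.0),
}


def stability_margin_ev(row):
    """Lowest recombined energy minus not-recombined energy (> 0: recombination uphill)."""
    not_recombined, *recombined = row
    return min(recombined) - not_recombined


def rank_caps(rows):
    """Capping groups sorted from largest to smallest stability margin."""
    margins = [(cap, round(stability_margin_ev(r), 3)) for cap, r in rows.items()]
    return sorted(margins, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for cap, margin in rank_caps(TABLE5_ROWS):
        print(f"{cap:<22} {margin:+.3f} eV")
```

Under this metric the iodine-, hydrogen-, amine-, and bromine-capped molecules all sit within about 0.35 eV of zero, while the hydroxyl and fluorine caps come out clearly negative, in line with the closing remark about fluorine- and oxygen-containing capping groups.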

Figure 6. Progressive stages of possible side-bonding recombination reaction between an iodine-capped DCB6-Ge tooltip molecule (above) and a molecule of n-octane (below)

(A) unrecombined

(B) 1-bond recombination

(C) 2-bond recombination (4-carbon ring)

(D) 2-bond recombination (6-carbon ring)

| Table 5. Energy minimization calculations for DCB6-Ge capped tooltip molecule side-bonding recombination reaction with a molecule of n-octane, using semi-empirical AM1 (0 eV = lowest-energy configuration) |

|

| Tooltip Molecule Capping Group |

Not Recombined (eV)

|

Recombined (1 bond) (eV) |

Recombined (2 bonds, 4-carbon ring) (eV)

|

Recombined (2 bonds, 4-carbon ring) (eV) |

|

Imide (-NH-)

Sulfur (=C-S-C=)

NO CAP

Diamine (-NHHN-)

Fluorine (-F)

Lithium (-Li)

Oxylithyl (-OLi)

Selenobromyl (-SeBr)*

Oxybromyl (OBr)

Oxyiodinyl (-OI)

Hydroxyl (-OH)

Nitrodifluoryl (-NF2)

Disulfyl (=C-S-S-C=)

Chlorine (-Cl)

Borohydryl (-BH2)

Sulfalithyl (-SLi)

Bromine (-Br)

Hydrogen (-H)

Phosphohydryl (-PH2)

Iodine (-I)

Amine (-NH2)

Nitrodiiodinyl (-NI2)

Sulfhydryl (-SH)

Sulfiodinyl (-SI)

Sulfobromyl (-SBr)

Berylfluoryl (-BeF)

Berylchloryl (-BeCl)

Dimagnesyl (-Mg2-)*

Seleniodinyl (-SeI)*

|

+ 4.075

+ 3.397

+ 3.347

+ 2.838

+ 1.989

+ 1.744

+ 1.194

+ 1.099

+ 0.979

+ 0.967

+ 0.948 – + 0.885

+ 0.841

+ 0.765 – + 0.690

+ 0.484

+ 0.346

+ 0.081 – + 0.043

0 – 0 – 0

0

0

0

0

0 – 0

0

|

0

0

—-

+ 2.949

+ 1.029

+ 2.439

+ 1.189

+ 1.612

+ 0.503

+ 0.575

+ 0.472 – + 0.421

0

+ 0.429 – + 1.370

+ 1.276

+ 0.214

+ 0.069 – + 0.072

+ 0.147 – + 0.148 – + 0.239

+ 0.465

+ 0.478

+ 0.526

+ 0.562

+ 0.725 – + 0.956

+ 1.474

|

+ 2.148

+ 2.391

+ 1.935

+ 1.939

+ 1.999

+ 1.806

+ 2.379

+ 2.465

+ 1.963

+ 1.968

+ 1.987 – +1.961

+ 2.137

+ 2.044 – + 4.003

+ 1.859

+ 1.946

+ 1.939 – + 1.906

+ 2.041 – + 2.263 – + 2.261

+ 2.346

+ 2.579

+ 1.678

+ 2.263

+ 3.114 – + 2.399

+ 0.834

|

+ 0.200

+ 0.446

0

0

0

0

0

0

0

0

0 – 0

+ 0.380

0 – 0

0

0

0 – 0

+ 0.120 – + 0.301 – + 0.346

+ 0.759

+ 0.832

+ 1.082

+ 0.876

+ 1.191 – + 0.802

+ 1.498

|

|

* energy minimization computed using PM3 instead of AM1

|
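Each row of Table 5 is normalized so that 0 eV marks its lowest-energy configuration. A reader may therefore want to check two things per capping group: which configuration is lowest, and how far the unrecombined state sits above or below the best recombined state.

The sketch below does this for a handful of rows transcribed from the table. The names `TABLE5`, `lowest_config` and `unrecombined_margin` are hypothetical helpers, not part of the original work. Rows marked * were computed with PM3 rather than AM1, so their energies are not strictly comparable with the AM1 rows.

```python
# Column order used in Table 5.
CONFIGS = ("not recombined", "1 bond", "2 bonds, 4-ring", "2 bonds, 6-ring")

# A few rows transcribed from Table 5 (eV, 0 = lowest-energy configuration).
# None stands for the n/a entry; Seleniodinyl (*) was computed with PM3, not AM1.
TABLE5 = {
    "Imide (-NH-)": (4.075, 0.0, 2.148, 0.200),
    "NO CAP": (3.347, None, 1.935, 0.0),
    "Disulfyl (=C-S-S-C=)": (0.841, 0.0, 2.137, 0.380),
    "Hydrogen (-H)": (0.081, 0.069, 1.939, 0.0),
    "Iodine (-I)": (0.0, 0.147, 2.041, 0.120),
    "Seleniodinyl (-SeI)*": (0.0, 1.474, 0.834, 1.498),
}


def lowest_config(energies):
    """Name of the configuration with the lowest listed energy (None entries skipped)."""
    listed = [(e, name) for e, name in zip(energies, CONFIGS) if e is not None]
    return min(listed)[1]


def unrecombined_margin(energies):
    """Lowest recombined energy minus the unrecombined energy, in eV.

    Positive: the unrecombined state is lower than every recombined state by
    this margin. Negative: at least one recombined state lies lower.
    """
    recombined = [e for e in energies[1:] if e is not None]
    return min(recombined) - energies[0]


for cap, energies in TABLE5.items():
    print(f"{cap:24s} lowest: {lowest_config(energies):16s} "
          f"margin: {unrecombined_margin(energies):+.3f} eV")
```

For these rows, iodine and seleniodinyl are the two caps whose unrecombined state is lowest. Their positive margins are +0.120 eV and +0.834 eV respectively.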

2.2 STEP 2: Attach Tooltip Molecule to Deposition Surface in Preferred Orientation

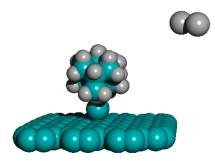

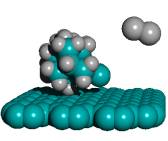

STEP 2. Attach a small number of tooltip molecules to an appropriate deposition surface in tip-down orientation, so that the tooltip-bound dimer is bonded to the deposition surface.

The appropriate deposition surface material (Section 2.2.1) is one that is not readily amenable to bulk diamond deposition under the thermal and chemical conditions that will prevail during the diamond deposition processes described in Step 3. In Attachment Method A (Section 2.2.2), tooltip molecules may be bonded to the deposition surface in the desired orientation via low-energy ion bombardment of the deposition surface in vacuo, creating a low density of preferred diamond nucleation sites. In Attachment Method B (Section 2.2.3), tooltip molecules may be bonded to the deposition surface in the desired orientation by non-impact dispersal and weak physisorption on the deposition surface. This is followed by decapping of the tooltip molecules via targeted energy input, which produces dangling bonds at the C2 dimer that can then bond to the deposition surface in vacuo, also creating a low density of preferred diamond nucleation sites. In Attachment Method C (Section 2.2.4), the techniques of conventional solution-phase chemical synthesis are used to attach tooltip molecules to a deposition surface in the preferred orientation, again creating diamond nucleation sites.

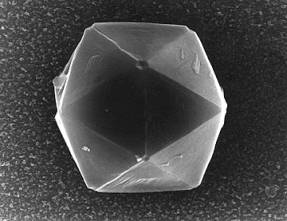

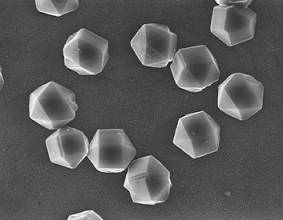

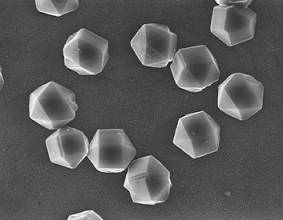

2.2.1 Surface Nucleation and Choice of Deposition Substrate

The intention of this invention is to grow a handle molecule as a single crystal of bulk diamond large enough to permit convenient physical manipulation of the attached C2 dimer-bearing tooltip. Since this single crystal will be in the size range of 0.1-10 microns, and since sufficient room must be allowed around each single crystal to afford access to a MEMS-scale gripping mechanism, the maximum surface nucleation density appropriate for this process in the preferred embodiment will be ~10⁵ cm⁻², giving a mean separation between handle molecule crystals of ~32 microns on the deposition surface. In other embodiments in which much smaller 100 nm handle molecule crystals can be employed with narrower attachment clearances for the external gripping mechanism, the maximum surface nucleation density could be as high as ~10⁹ cm⁻², giving a mean separation between surface-grown handle molecule crystals of ~320 nm.
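The separations quoted above follow from the simple square-grid estimate d ≈ 1/√N for an areal nucleation density N. The minimal sketch below reproduces them (1 cm = 10⁴ µm). The helper names are hypothetical.

For comparison, the sketch also gives the mean nearest-neighbour distance for randomly (Poisson) placed nuclei, which is 0.5/√N. That is half the grid value, so the clearance around a randomly seeded crystal is on average tighter than the grid figure suggests.

```python
import math

UM_PER_CM = 1.0e4  # 1 cm = 10^4 micrometres


def grid_spacing_um(density_per_cm2):
    """Centre-to-centre spacing if nuclei sat on a square grid: d = 1/sqrt(N)."""
    return UM_PER_CM / math.sqrt(density_per_cm2)


def poisson_nearest_neighbour_um(density_per_cm2):
    """Mean nearest-neighbour distance for randomly placed nuclei: 0.5/sqrt(N)."""
    return 0.5 * UM_PER_CM / math.sqrt(density_per_cm2)


for n in (1e5, 1e9):  # preferred and small-handle embodiments from the text
    print(f"N = {n:.0e} cm^-2: grid {grid_spacing_um(n):.3g} um, "
          f"random {poisson_nearest_neighbour_um(n):.3g} um")
```

For 10⁵ cm⁻² this gives 31.6 µm (grid) and 15.8 µm (random). For 10⁹ cm⁻² it gives 0.316 µm and 0.158 µm.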

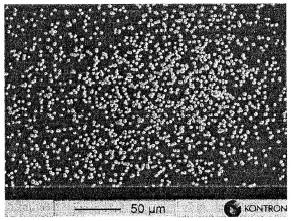

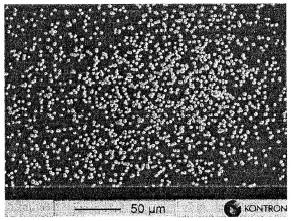

Conventional diamond films grown by CVD on smooth nondiamond substrates are characterized by very low nucleation densities, typically <10⁴ cm⁻² when diamond is deposited on a polished silicon wafer surface, which is many orders of magnitude less than that exhibited by most materials [127]. (Interestingly, the CVD nucleation density of diamond nanocrystals on an SiO2 substrate is 6 orders of magnitude smaller than on pure silicon [18].) Commercial preparation of a continuous diamond film requires separately nucleated diamond crystals eventually to grow together into a single sheet, and hence is most efficient under conditions of high nucleation density. Diamond film growth procedures therefore often include preliminary substrate preparation steps intended to raise the nucleation density to a practicable level. These steps typically introduce surface discontinuities by scratching or abrading the substrate surface with a fine diamond grit powder or paste. The discontinuities may create preferential geometric sites for diamond crystal nucleation. More probably, they leave embedded residues of the abrading powder that serve as nucleation sites from which diamond can grow by accumulation. The presence of carbon particles on the surface of a substrate can provide a high density of nucleation sites for subsequent diamond growth [82]. As shown in Table 6, even after abrasive surface preparation the nucleation densities of diamond films prepared by such techniques remain relatively low, on the order of ~10⁸ cm⁻² (~1 µm⁻²), versus ~10¹⁵ cm⁻² available atomic sites. The surface structure of such films is also unpredictable and typically exhibits very disordered surface patterns [127]. Nucleation has also been enhanced by coating substrate surfaces with a thin (10-20 nm) layer of hydrocarbon oil [83].
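The unit conversions behind the figures above are 1 cm² = 10⁸ µm² and nuclei per available atomic site. Together they show how sparse even an abraded surface's nucleation is: roughly one nucleus per 10⁷ surface sites. The minimal sketch below takes the ~10¹⁵ cm⁻² site density as the order-of-magnitude value quoted in the text. The helper names are hypothetical.

```python
UM2_PER_CM2 = 1.0e8            # 1 cm^2 = 10^8 square micrometres
ATOMIC_SITES_PER_CM2 = 1.0e15  # order-of-magnitude figure quoted in the text


def per_um2(density_per_cm2):
    """Convert an areal density from cm^-2 to um^-2."""
    return density_per_cm2 / UM2_PER_CM2


def site_fraction(density_per_cm2, sites_per_cm2=ATOMIC_SITES_PER_CM2):
    """Fraction of available surface atomic sites that carry a nucleus."""
    return density_per_cm2 / sites_per_cm2


for label, n in (("polished Si (upper bound)", 1e4),
                 ("abraded surface", 1e8)):
    print(f"{label}: {per_um2(n):.0e} um^-2, "
          f"{site_fraction(n):.0e} of atomic sites")
```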

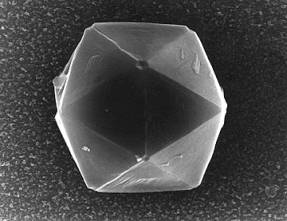

Table 6. Typical surface nucleation densities of diamond on polished silicon after various surface pretreatments (modified from Liu and Dandy [84])

| Pretreatment Method | Typical Nucleation Density (nuclei/cm²) |
|---|---|
| No pretreatment | 10³–10⁵ |
| Covering/coating with Fe film | 5 × 10⁵ |
| As+ ion implantation on Si | 10⁵–10⁶ |
| Covering/coating with graphite film | 10⁶ |
| Manual scratching with diamond grit | 10⁶–10¹⁰ |
| Seeding | 10⁶–10¹⁰ |
| Ultrasonic scratching with diamond grit | 10⁷–10¹¹ |
| Biasing (voltage) | 10⁸–10¹¹ |
| Covering/coating with graphite fiber | >10⁹ |
| C70 clusters + biasing | 3 × 10¹⁰ |

Since the purpose of this invention is to grow isolated micron-scale diamond single crystals over tooltip molecule nucleation sites, rather than a continuous diamond film, the deposition surface ideally is chosen so as to minimize the number of natural (non-tooltip molecule) nucleation sites. Suppose tooltip molecules are attached at a number density of ~10⁵ cm⁻² to a surface of polished silicon that has otherwise received no pretreatment. The number density of naturally occurring nucleation sites should then be no more than ~10³–10⁵ cm⁻² (Table 6). This implies that from 50% (for 10⁵ cm⁻² natural sites) to 99% (for 10³ cm⁻²) of the isolated micron-scale diamond single crystals grown during Step 3 (Section 2.3) will be correctly nucleated by surface-bound undimerized tooltip molecules. An SPM scan of the deposition surface, following the completion of Step 2 but prior to the commencement of Step 3, can identify and map the positions of all of the undimerized surface-bound tooltip molecules. The isolated micron-scale diamond single crystals that are later grown and properly nucleated by surface-bound tooltip molecules can then be identified prior to selection and detachment in Step 4 (Section 2.4).
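The 50% to 99% range follows from the ratio N_tip / (N_tip + N_natural). The minimal sketch below assumes that every tooltip molecule and every natural site seeds exactly one crystal, and that the two populations do not overlap. Those are simplifying assumptions, not statements from the text. The helper name is hypothetical.

```python
def tooltip_nucleated_fraction(tooltip_density_per_cm2, natural_density_per_cm2):
    """Expected fraction of grown crystals seeded by tooltip molecules.

    Assumes one crystal per nucleation site and no overlap between
    tooltip-seeded and naturally nucleated crystals.
    """
    total = tooltip_density_per_cm2 + natural_density_per_cm2
    return tooltip_density_per_cm2 / total


TOOLTIP_DENSITY = 1e5  # cm^-2, preferred-embodiment value from the text

for natural in (1e3, 1e4, 1e5):  # "No pretreatment" range from Table 6
    frac = tooltip_nucleated_fraction(TOOLTIP_DENSITY, natural)
    print(f"natural sites {natural:.0e} cm^-2 -> {frac:.1%} tooltip-nucleated")
```

This prints 99.0%, 90.9% and 50.0% for natural-site densities of 10³, 10⁴ and 10⁵ cm⁻².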

As noted by May [85], most of the CVD diamond films reported to date have been grown on single-crystal Si wafers, mainly due to the availability, low cost, and favorable properties of Si wafers. But Si is not the only possible substrate material. Candidate substrates for diamond handle molecule crystal growth must satisfy five important basic criteria [85], the first four of which are summarized quantitatively in Table 7.

First, the substrate must have a melting point (at the process pressure) higher than the temperature required for diamond growth (at least 300-500 °C, but normally greater than 700 °C). This precludes the use of low-melting-point materials such as plastics, aluminum, certain glasses and some electronic materials such as GaAs as a deposition substrate, when hydrogenic diamond CVD techniques are employed in Step 3 (Section 2.3).

Second, for growing diamond films the substrate material should have a thermal expansion coefficient comparable with that of diamond. At the high growth temperatures currently used, a substrate tends to expand, so the diamond coating is grown upon and bonded directly to an expanded substrate. Upon cooling, the substrate contracts back to its room-temperature size, whereas the diamond coating, with its very small expansion coefficient, is relatively unaffected by the temperature change. The diamond film therefore experiences significant compressive stresses from the shrinking substrate, leading to bowing of the sample and/or cracking, flaking or even delamination of the entire film [85]. However, a nondiamond deposition surface for growing diamond tool handle molecules, starting from surface-bound tooltip molecule nuclei, should have the maximum possible thermal expansion mismatch with diamond. The resulting thermal stresses upon cooling can facilitate tool separation from the nondiamond deposition surface in Step 4 (Section 2.4).
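To see why a larger expansion mismatch helps separation, one can use the standard thin-film thermal-mismatch estimate for equal-biaxial stress:

σ = E_f / (1 − ν_f) · (α_s − α_f) · (T_final − T_growth)

Here f denotes the film (diamond) and s the substrate; negative values are compressive. The minimal sketch below applies it. Every numerical input is an illustrative assumption, not a value from this document, and the substrate is hypothetical.

Treating a micron-scale isolated crystal as a thin film, and using temperature-independent expansion coefficients, makes this an order-of-magnitude indication only. It will overstate real stresses, which are also relieved by substrate yielding.

```python
def thermal_mismatch_stress_pa(e_film_pa, nu_film, alpha_film_per_k,
                               alpha_sub_per_k, t_growth_c, t_final_c):
    """Equal-biaxial thermal stress in a thin film bonded to a thick substrate.

    sigma = E_f / (1 - nu_f) * (alpha_s - alpha_f) * (T_final - T_growth)
    Negative values are compressive. Expansion coefficients are treated as
    temperature-independent, which is a simplification.
    """
    delta_t = t_final_c - t_growth_c  # negative when cooling after growth
    mismatch_strain = (alpha_sub_per_k - alpha_film_per_k) * delta_t
    return e_film_pa / (1.0 - nu_film) * mismatch_strain


# Illustrative, assumed inputs (not values from this document):
E_DIAMOND = 1.05e12      # Pa, approximate Young's modulus of diamond
NU_DIAMOND = 0.1         # approximate Poisson's ratio of diamond
ALPHA_DIAMOND = 1.0e-6   # 1/K, near room temperature
ALPHA_SUBSTRATE = 17e-6  # 1/K, a hypothetical high-expansion substrate

sigma = thermal_mismatch_stress_pa(E_DIAMOND, NU_DIAMOND, ALPHA_DIAMOND,
                                   ALPHA_SUBSTRATE, t_growth_c=800, t_final_c=25)
print(f"{sigma / 1e9:.1f} GPa")
```

With these assumed inputs the estimate is about -14.5 GPa (compressive). The stress scales linearly with the mismatch α_s − α_f and vanishes when the two coefficients are equal.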

Third, a mismatch in the crystal lattice constant [86, 87] between the diamond comprising the tool handle molecule and the nondiamond substrate greatly reduces the opportunities for bonding between the handle molecule and the substrate during handle molecule growth (Section 2.3). An extensive interfacial misfit also facilitates tool separation from the nondiamond deposition surface in Step 4 (Section 2.4).
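A common first measure of lattice mismatch is the fractional misfit f = (a_s − a_f) / a_f between the substrate and film lattice constants. The minimal sketch below computes it for two substrates named in this section, using room-temperature cubic lattice constants (diamond 3.567 Å, Si 5.431 Å, Ge 5.658 Å). The helper name is hypothetical.

A plain lattice-constant ratio ignores coincidence-site matching between multiples of the two lattices, so it is only a first screen of how much bonding the interface can support.

```python
# Room-temperature cubic lattice constants, in angstroms.
A_DIAMOND = 3.567
A_SUBSTRATE = {"Si": 5.431, "Ge": 5.658}


def lattice_misfit(a_substrate, a_film=A_DIAMOND):
    """Fractional misfit f = (a_s - a_f) / a_f relative to the film lattice."""
    return (a_substrate - a_film) / a_film


for name, a in A_SUBSTRATE.items():
    print(f"{name}: misfit = {lattice_misfit(a):+.1%}")
```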

Fourth, forming adherent diamond films customarily requires that the substrate material be capable of forming a carbide layer to some extent, since diamond CVD on nondiamond substrates usually involves the formation of a thin carbide interfacial layer upon which the diamond then grows. The carbide layer is viewed as a “glue” that promotes diamond growth and aids adhesion by (partially) relieving interfacial stresses caused by lattice mismatch and substrate contraction [85]. However, the ideal nondiamond deposition surface for growing diamond tool handle molecules, starting from surface-bound tooltip molecule nuclei, is a substrate that resists or prohibits carbide formation. The absence of carbide on the nondiamond deposition surface (a) discourages downgrowth of the tool handle molecule into the substrate, (b) helps maintain the isolation of the finished tooltip apex, and (c) facilitates tool separation from the nondiamond deposition surface in Step 4 (Section 2.4). On the basis of carbide exclusion, potential substrate materials, including metals, alloys and pure elements, can be subdivided into three broad classes [85, 88], listed here in descending order of preference for the present invention:

(1) Carbide Exclusion. Metals such as Cu, Sn, Pb, Ag and Au, as well as non-metals such as Ge and sapphire/alumina (Al2O3), have little or no solubility in, or reaction with, C. These materials do not form a carbide layer, so any diamond layer that might try to form will not adhere well to the surface. This is a known route to free-standing diamond films, since such films often delaminate readily after deposition. These are the best materials for a deposition surface upon which to grow detachable diamond tool handle molecules nucleated by surface-bound tooltip molecules. Unwanted natural nucleation centers are unlikely to arise on polished, non-pretreated surfaces of these materials. These surfaces will also resist downgrowth toward the substrate from the tooltip molecule seed or the growing tool handle structure.

(2) Carbon Solvation. Metals such as Pt, Pd, Rh, Ni, Ti and Fe exhibit substantial mutual solubility or reaction with C (none of the industrially important ferrous materials, such as iron and stainless steel, can be diamond-coated using simple CVD methods) [85]. During CVD, a substrate composed of these metals acts as a carbon sink: deposited carbon dissolves into the surface, forming a solid solution. This dissolution transports large quantities of C into the bulk instead of leaving it at the surface, where it could promote diamond nucleation [85]. Often diamond growth on the surface begins only after the substrate is completely saturated with carbon and carbide finally appears on the surface. By that time the tool handle molecule may already have grown sufficiently large as a single diamond crystal atop a surface-bound tooltip molecule.