Glasses-free 3D projector

May 20, 2014

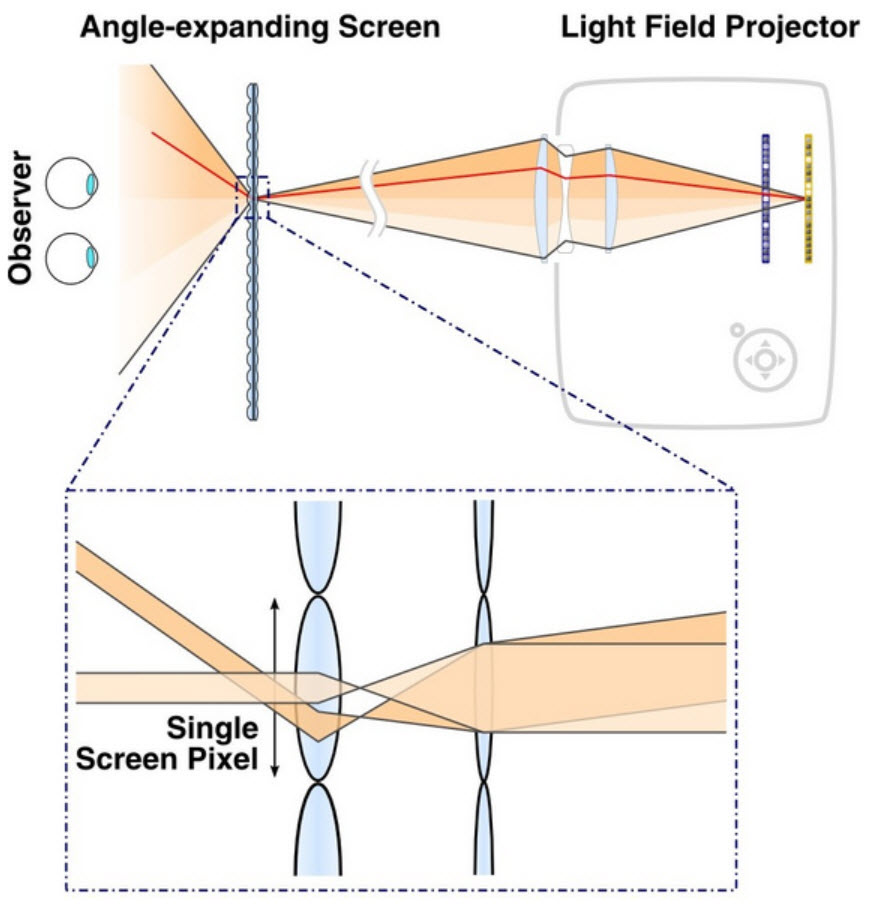

Overview of light field projection system. Two spatial light modulators synthesize a light field inside a projector (top right). The projection screen is composed of an array of angle-expanding pixels (bottom). Inspired by Keplerian telescopes, these pixels expand the field of view of the emitted light field for an observer on the other side of the screen. (Credit: MIT Media Lab, Camera Culture Group)

Over the past three years, researchers in the Camera Culture group at the MIT Media Lab have steadily refined a design for a glasses-free, multiperspective, 3D video screen, which they hope could provide a cheaper, more practical alternative to holographic video in the short term.

Now they’ve designed a projector that exploits this technology, which they’ll unveil at this year’s Siggraph, the major conference in computer graphics.

The projector can also improve the resolution and contrast of conventional video, which could make it an attractive transitional technology as content producers gradually learn to harness the potential of multiperspective 3D.

Multiperspective 3D differs from the stereoscopic 3D now common in movie theaters in that the depicted objects disclose new perspectives as the viewer moves about them, just as real objects would.

This means it might have applications in areas like collaborative design and medical imaging, as well as entertainment — an alternative to head-mounted virtual-reality devices.

The MIT researchers — research scientist Gordon Wetzstein, graduate student Matthew Hirsch, and Ramesh Raskar, the NEC Career Development Associate Professor of Media Arts and Sciences and head of the Camera Culture group — built a prototype of their system using off-the-shelf components.

The heart of the projector is a pair of liquid-crystal modulators — which are like tiny liquid-crystal displays (LCDs) — positioned between the light source and the lens. Patterns of light and dark on the first modulator effectively turn it into a bank of slightly angled light emitters — that is, light passing through it reaches the second modulator only at particular angles. The combinations of the patterns displayed by the two modulators thus ensure that the viewer will see slightly different images from different angles.
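A toy model can make this concrete. The sketch below is a simplified one-dimensional illustration, not the researchers' code. It assumes each ray is identified by the pixel it crosses on each modulator and that its intensity is the product of the two transmittances. The function names and pixel values are hypothetical.

```python
import numpy as np

def emitted_rays(first_pattern, second_pattern):
    """Intensity of ray (i, j): transmittance of pixel i on the first
    modulator times transmittance of pixel j on the second (assumed model)."""
    return np.outer(first_pattern, second_pattern)

def view_from_offset(rays, offset):
    """Rays with the same pixel offset j - i leave at the same angle,
    so one diagonal of the ray matrix is the image seen from one direction."""
    return np.diagonal(rays, offset=offset)

# Hypothetical 4-pixel patterns: stripes on the first modulator,
# a brightness ramp on the second.
first_pattern = np.array([1.0, 0.0, 1.0, 0.0])
second_pattern = np.array([0.2, 0.4, 0.6, 0.8])

rays = emitted_rays(first_pattern, second_pattern)
straight_view = view_from_offset(rays, 0)  # viewer looking head-on
oblique_view = view_from_offset(rays, 1)   # viewer one step to the side
```

In this model the head-on and oblique viewers receive different pixel values from the same pair of patterns, which is the effect the paragraph above describes.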

The researchers also built a prototype of a new type of screen that widens the angle from which their projector’s images can be viewed. The screen combines two lenticular lenses — the type of striated transparent sheets used to create crude 3D effects in, say, old children’s books.

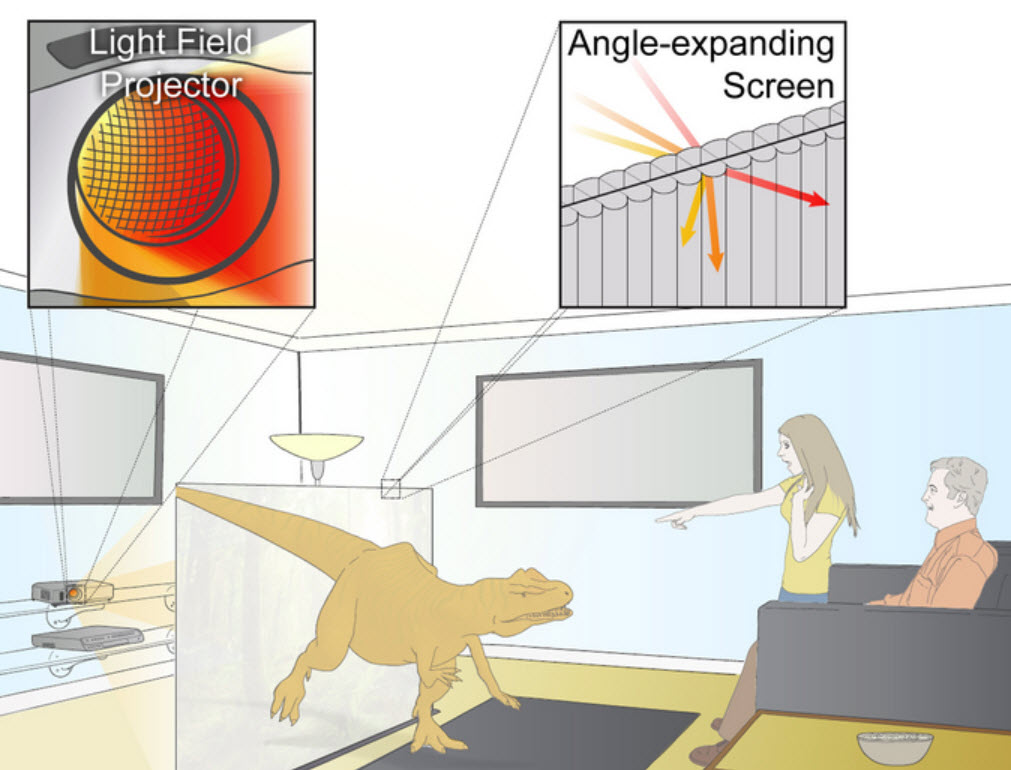

Illustration of concept. A light field projector, built using readily available optics and electronics, emits a 4D light field onto a screen that expands the field of view so that observers on the other side of the screen can enjoy glasses-free 3D entertainment. No mechanically moving parts are used in either the projector or the screen. Additionally, the screen is completely passive, potentially allowing for the system to be scaled to significantly larger dimensions. (Credit: MIT Media Lab, Camera Culture Group)

Exploiting redundancy

For every frame of video, each modulator displays six different patterns, which together produce eight different viewing angles: At high enough display rates, the human visual system will automatically combine information from different images. The modulators can refresh their patterns at 240 hertz, or 240 times a second, so even at six patterns per frame, the system could play video at a rate of 40 hertz, which, while below the 60-hertz refresh rate common in today’s TVs, is still higher than the 24 frames per second standard in film.
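The frame-rate arithmetic above can be checked in a few lines. This is only a worked example of the figures quoted in the article; the function name is hypothetical.

```python
def video_frame_rate(refresh_hz, patterns_per_frame):
    """Video frames per second when every frame needs several
    modulator patterns shown one after another."""
    if patterns_per_frame <= 0:
        raise ValueError("patterns_per_frame must be positive")
    return refresh_hz / patterns_per_frame

# Figures from the article: 240-hertz modulators, six patterns per frame.
rate = video_frame_rate(240, 6)

# Comparison points from the article: 24 fps film and 60-hertz TVs.
faster_than_film = rate > 24
slower_than_tv = rate < 60
```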

With the technology that has historically been used to produce glasses-free 3D images — known as a parallax barrier — simultaneously projecting eight different viewing angles would mean allotting each angle one-eighth of the light emitted by the projector, which would make for a dim movie.

But like the researchers’ prototype monitors, the projector takes advantage of the fact that, as you move around an object, most of the visual change takes place at the edges. If, for instance, you were looking at a blue mailbox as you walked past it, from one step to the next, much of your visual field would be taken up by a blue of approximately the same shade, even though different objects were coming into view behind it.

Algorithmically, the key to the researchers’ system is a technique for calculating how much information can be preserved between viewing angles and how much needs to be varied. Preserving as much information as possible enables the projector to produce a brighter image.

The resulting set of light angles and intensities then has to be encoded into the patterns displayed by the modulators. That’s a tall computational order, but by tailoring their algorithm to the architecture of the graphics processing units (GPUs) designed for video games, the MIT researchers have gotten it to run almost in real time. Their system can receive data in the form of eight images per frame of video and translate it into modulator patterns with very little lag.
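The article does not name the algorithm, so the sketch below uses nonnegative matrix factorization with multiplicative updates as an illustrative stand-in. It assumes a toy light field stored as a matrix of ray intensities, indexed by the pixel crossed on each modulator, and looks for six pattern pairs whose summed products approximate it. All names and sizes are hypothetical, and the GPU tailoring described above is not attempted.

```python
import numpy as np

def factor_light_field(target, rank=6, iterations=200, seed=0):
    """Approximate target (n x m, nonnegative) as first @ second.T.

    Column k of `first` and column k of `second` play the role of the
    k-th pattern shown on each modulator. Rapidly alternating the rank
    patterns lets the eye average them; the constant 1/rank brightness
    factor from that averaging is omitted here.
    """
    rng = np.random.default_rng(seed)
    n, m = target.shape
    first = rng.uniform(0.1, 1.0, size=(n, rank))
    second = rng.uniform(0.1, 1.0, size=(m, rank))
    eps = 1e-9  # avoids division by zero
    for _ in range(iterations):
        # Multiplicative updates keep every entry nonnegative.
        first *= (target @ second) / (first @ (second.T @ second) + eps)
        second *= (target.T @ first) / (second @ (first.T @ first) + eps)
    return first, second

# Hypothetical toy light field with plenty of redundancy (low rank).
rng = np.random.default_rng(1)
target = rng.random((16, 3)) @ rng.random((3, 16))

first, second = factor_light_field(target)
approx = first @ second.T
relative_error = np.linalg.norm(target - approx) / np.linalg.norm(target)
```

A practical version would also have to keep the pattern values within the range a modulator can display, which this sketch leaves out.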

Bridge technology

Passing light through two modulators can also heighten the contrast of ordinary 2D video. One of the problems with LCD screens is that they don’t enable “true black”: a little light always leaks through even the darkest regions of the display. The researchers have developed an algorithm that can calculate patterns on the fly to enable true black.

As content creators move to “quad HD” (video with four times the resolution of today’s high-definition video), the combination of higher contrast and higher resolution could make a commercial version of the researchers’ technology appealing to theater owners, which in turn could smooth the way for the adoption of multiperspective 3D.

“One thing you could do — and this is what actual projector manufacturers have done in the recent past — is take four 1080p modulators and put them next to each other and build some very complicated optics to tile them all seamlessly and then get a much nicer lens because you have to project a much smaller spot and bundle that all up together,” Hirsch says.

“We’re saying you could take two 1080p modulators, stick them in your projector one after the other, then take your same old 1080p lens and project through it and use this software algorithm, and you end up with a 4k image. But not only that, it’s got even higher contrast.”

Spreading pixels

Oliver Cossairt, an assistant professor of electrical engineering and computer science at Northwestern University, once worked for a company that was attempting to commercialize glasses-free 3D projectors. “What I consider the novelty of [the MIT researchers’] approach involves two things,” Cossairt says. The first, he says, is “playing around with the parallax-barrier idea so that you can make it so that it (a) doesn’t block as much light and (b) gets better resolution.”

The second, he says, is the prototype screen. “There is this invariant of optical systems that says that if you take the area of the plane and the solid angle of light coming out from that plane, that is fixed,” Cossairt says. “What that means is that if you take the 3D image size and stretch it out to be, let’s say, 10 times as large, then the field of view will decrease by a factor of 10. That’s what we ran into. We couldn’t figure out a way around that.”
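The trade-off Cossairt describes can be put in numbers. The sketch below works in one dimension and assumes small angles, so that image width times angular field of view stays constant; this is the cross-section of the area-times-solid-angle rule he cites. The function names and the example dimensions are hypothetical.

```python
import math

def optical_invariant(width_m, fov_deg):
    """Image width times angular field of view (in radians).
    Under the small-angle assumption, ordinary projection optics
    cannot change this product."""
    return width_m * math.radians(fov_deg)

def stretch_image(width_m, fov_deg, magnification):
    """Magnify the image; the field of view shrinks by the same factor."""
    if magnification <= 0:
        raise ValueError("magnification must be positive")
    return width_m * magnification, fov_deg / magnification

# Hypothetical example: a 5 cm wide image with a 10-degree field of view,
# stretched to 10 times its width.
width_before, fov_before = 0.05, 10.0
width_after, fov_after = stretch_image(width_before, fov_before, 10)
```

With these numbers the image grows from 5 cm to 50 cm while the field of view falls from 10 degrees to 1 degree, leaving the product unchanged.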

“They came up with a screen that instead of stretching the image — which is what projection optics does — essentially moved the pixels away from each other,” Cossairt continues. “That allowed them to break this invariance.”

As striking as it is, the illusion of depth now routinely offered by 3D movies is a paltry facsimile of a true three-dimensional visual experience. In the real world, as you move around an object, your perspective on it changes. But in a movie theater showing a 3D movie, everyone in the audience has the same, fixed perspective — and has to wear cumbersome glasses, to boot.

Despite impressive recent advances, holographic television, which would present images that vary with varying perspectives, probably remains some distance in the future. But in a new paper featured as a research highlight at this summer’s Siggraph computer-graphics conference, the MIT Media Lab’s Camera Culture group offers a new approach to multiple-perspective, glasses-free 3D that could prove much more practical in the short term.

Instead of the complex hardware required to produce holograms, the Media Lab system uses several layers of liquid-crystal displays (LCDs), the technology currently found in most flat-panel TVs. To produce a convincing 3D illusion, the displays would need to refresh at a rate of about 360 times a second, or 360 hertz. Such displays may not be far off: LCD TVs that boast 240-hertz refresh rates have already appeared on the market, just a few years after 120-hertz TVs made their debut.