Google and NASA launch Quantum Artificial Intelligence Lab

May 17, 2013

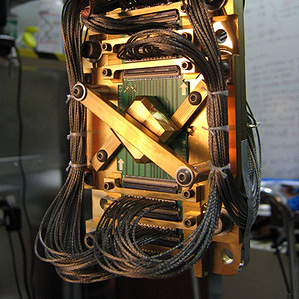

The chip at the heart of one of D-Wave’s computers (credit: D-Wave)

Google, in partnership with NASA and the Universities Space Research Association (USRA), has launched an initiative to investigate how quantum computing might lead to breakthroughs in machine learning, a branch of AI that focuses on the construction and study of systems that learn from data.

The new lab will use the D-Wave Two quantum computer. A recent study (see “Which is faster: conventional or quantum computer?”) confirmed that the D-Wave One quantum computer was much faster than conventional machines on specific problems.

The machine will be installed at the NASA Advanced Supercomputing Facility at the NASA Ames Research Center in Mountain View, California.

“We hope it helps researchers construct more efficient and more accurate models for everything from speech recognition, to web search, to protein folding,” said Hartmut Neven, Google director of engineering.

Hybrid solutions

“Machine learning is highly difficult. It’s what mathematicians call an ‘NP-hard’ problem,” he said. “Classical computers aren’t well suited to these types of creative problems. Solving such problems can be imagined as trying to find the lowest point on a surface covered in hills and valleys.

“Classical computing might use what’s called a ‘gradient descent’: start at a random spot on the surface, look around for a lower spot to walk down to, and repeat until you can’t walk downhill anymore. But all too often that gets you stuck in a ‘local minimum,’ a valley that isn’t the very lowest point on the surface.

“That’s where quantum computing comes in. It lets you cheat a little, giving you some chance to ‘tunnel’ through a ridge to see if there’s a lower valley hidden beyond it. This gives you a much better shot at finding the true lowest point — the optimal solution.”
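The hills-and-valleys picture Neven describes can be illustrated with a small classical sketch. The surface below (`height`), its starting points, and the step size are all hypothetical choices made for illustration; they do not come from Google or D-Wave. The curve has a shallow valley near x = 1.13 and a deeper one near x = -1.30, and the starting points are fixed rather than random so the result is repeatable. The sketch shows only the classical half of the story: plain gradient descent settling into whichever valley lies downhill from where it starts. It does not model quantum tunneling.

```python
# Illustrative one-dimensional "surface" with two valleys (an assumption
# chosen for this example, not taken from the article).
def height(x):
    return x**4 - 3 * x**2 + x


# Derivative of height(x): the local slope of the surface.
def slope(x):
    return 4 * x**3 - 6 * x + 1


def gradient_descent(start, step_size=0.01, steps=5000):
    """Repeatedly take a small step downhill from `start`."""
    x = start
    for _ in range(steps):
        x -= step_size * slope(x)
    return x


# Starting on the right-hand side, the walker settles in the shallow valley.
right_valley = gradient_descent(start=2.0)

# Starting on the left-hand side, it reaches the deeper valley.
left_valley = gradient_descent(start=-2.0)

print(f"from x=2.0:  x={right_valley:.3f}, height={height(right_valley):.3f}")
print(f"from x=-2.0: x={left_valley:.3f}, height={height(left_valley):.3f}")
```

The run that starts at x = 2.0 stops in the shallower valley even though a lower point exists on the other side of the ridge. That is the "local minimum" trap described in the quote, and it is the situation in which an ability to get past the ridge would help.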

Google has already developed some quantum machine-learning algorithms, Neven said. “One produces very compact, efficient recognizers — very useful when you’re short on power, as on a mobile device. Another can handle highly polluted training data, where a high percentage of the examples are mislabeled, as they often are in the real world. And we’ve learned some useful principles: e.g., you get the best results not with pure quantum computing, but by mixing quantum and classical computing.”