How our visual perception system unconsciously affects our preferences

May 24, 2012

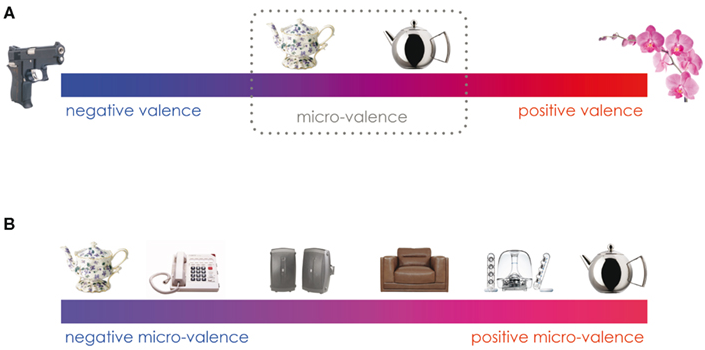

The valence continuum in (A) illustrates the dimension of valence, ranging from strongly positive (red) to strongly negative (blue). As indicated by the dashed gray line, objects perceived to have a valence close to the neutral point on the continuum are nonetheless regarded as having a micro-valence (a subtle affective valence). In (B), the portion of the continuum enclosed by the gray line in (A) has been expanded into a finer-grained continuum; the ordering of objects reflects this expanded continuum, albeit with smaller magnitudes. (S. Lebrecht et al./Frontiers in Perception Science)

The brain’s visual perception system automatically and unconsciously guides decision-making through valence perception, new research from Carnegie Mellon University’s Center for the Neural Basis of Cognition (CNBC) shows.

Valence, the positive or negative information automatically perceived in the majority of visual information, integrates visual features with associations learned from experience with similar objects or features. In other words, valence perception is what allows our brains to choose rapidly between similar objects.

The findings offer insights into consumer behavior that traditional marketing focus groups cannot provide. For example, asking individuals to react to package designs, ads or logos captures only what they consciously report, not the unconscious valence perception that also shapes their choices. Instead, companies can use this type of brain science to assess more effectively how unconscious visual valence perception contributes to consumer behavior.

To bring the research to the online video market, the CMU research team is founding a start-up company, neonlabs, with support from the National Science Foundation (NSF) Innovation Corps (I-Corps).

“This basic research into how visual object recognition interacts with and is influenced by affect paints a much richer picture of how we see objects,” said Michael J. Tarr, the George A. and Helen Dunham Cowan Professor of Cognitive Neuroscience and co-director of the CNBC. “What we now know is that common, household objects carry subtle positive or negative valences and that these valences have an impact on our day-to-day behavior.”

The researchers are launching neonlabs to apply their model of visual preference to online video, raising click rates by identifying the most visually appealing thumbnail in a video stream. The web-based software product selects a thumbnail based on neuroimaging data on object perception and valence, crowd-sourced behavioral data, and proprietary computational analyses of large amounts of video.

“Everything you see, you automatically dislike or like, prefer or don’t prefer, in part, because of valence perception,” said Sophie Lebrecht, lead author of the study and the entrepreneurial lead for the I-Corps grant. “Valence links what we see in the world to how we make decisions.

“Talking with companies such as YouTube and Hulu, we realized that they are looking for ways to keep users on their sites longer by clicking to watch more videos. Thumbnails are a huge problem for any online video publisher, and our research fits perfectly with this problem. Our approach streamlines the process and chooses the screenshot that is the most visually appealing based on science, which will in the end result in more user clicks.”

Ref.: Sophie Lebrecht, Moshe Bar, Lisa Feldman Barrett, Michael J. Tarr, Micro-valences: perceiving affective valence in everyday objects, Frontiers in Perception Science, 2012, DOI: 10.3389/fpsyg.2012.00107 (open access)