How to identify and predict human activities from video

October 30, 2012

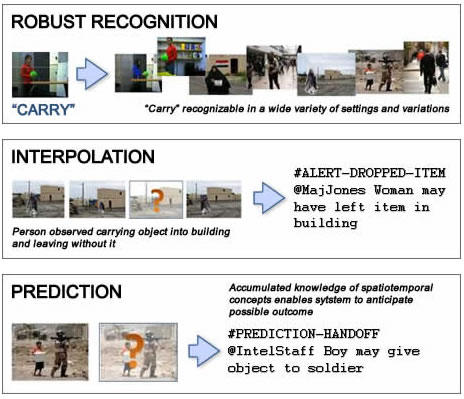

The Mind’s Eye program will automate video analysis — recognizing current behavior, interpolating actions that occur off-camera, and predicting future behavior (credit: Carnegie Mellon University)

A video shows a woman carrying a box into a building. Later, it shows her leaving the building without it. What was she doing?

Carnegie Mellon University’s (CMU) Mind’s Eye program is creating intelligent software that will recognize human activities in video and predict what might happen next. It will also flag unusual events and deduce actions that may be occurring off-camera.

Automating the time-consuming job of viewing and interpreting video images will speed intelligence-gathering, improve monitoring, and provide new tools for research. Autonomous systems could employ Mind’s Eye technologies in applications ranging from defense to medical and consumer robotics.

Recognizing and predicting human activity in video footage is a difficult problem. People do not all perform the same action in the same way. Different actions may look very similar on video. And videos of the same action can vary wildly in appearance due to lighting, perspective, background, the individuals involved, and more.

To minimize the effects of these variations, Carnegie Mellon’s Mind’s Eye software will generate 3D models of human activities and match these models to the person’s motion in the video. It will compare the video motion to actions it has already been trained to recognize (such as walk, jump, and stand) and identify patterns of actions (such as pick up and carry). The software will then examine these patterns to infer what the person in the video is doing. It will also predict what is likely to happen next and guess at activities that might be obscured or occur off-camera.
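To make the idea concrete, here is a toy Python sketch of the last two steps: spotting patterns in a sequence of recognized actions, and guessing at an action that happened off-camera. It is not CMU's method. It assumes the hard part (matching 3D models to video and labeling each segment with an action) has already been done, and every name in it (`Observation`, `PATTERNS`, `find_patterns`, `infer_off_camera`) is hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Observation:
    """One on-camera sighting of a person (hypothetical structure)."""
    actor: str
    action: str    # recognized action label, e.g. "walk" or "carry"
    holding: bool  # whether the person is visibly holding an object


# Assumed lookup table: pairs of consecutive actions mapped to an activity.
PATTERNS = {
    ("pick up", "carry"): "transport object",
    ("carry", "put down"): "deliver object",
}


def find_patterns(actions: List[str]) -> List[str]:
    """Return the activities matched by consecutive pairs of action labels."""
    found = []
    for first, second in zip(actions, actions[1:]):
        activity = PATTERNS.get((first, second))
        if activity is not None:
            found.append(activity)
    return found


def infer_off_camera(before: Observation, after: Observation) -> Optional[str]:
    """Guess what happened between two sightings of the same person."""
    if before.actor != after.actor:
        return None
    if before.holding and not after.holding:
        return "put down"
    if not before.holding and after.holding:
        return "pick up"
    return None


if __name__ == "__main__":
    print(find_patterns(["walk", "pick up", "carry", "put down"]))
    entering = Observation("woman", "carry", holding=True)
    leaving = Observation("woman", "walk", holding=False)
    print(infer_off_camera(entering, leaving))
```

Applied to the opening scenario, the woman is seen holding a box on the way in and empty-handed on the way out, so the sketch guesses that she put it down somewhere off-camera. A real system would have to reason over noisy, probabilistic detections rather than clean labels and a fixed lookup table.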

Carnegie Mellon is one of twelve research teams and three commercial integrators participating in this five-year program, which is sponsored by DARPA’s Information Innovation Office. The project kicked off in September 2010 and is currently in the early stages of software development.