How to learn things automatically

December 12, 2011 by Amara D. Angelica

OK, this one’s right out of The Matrix and The Manchurian Candidate.

Imagine watching a computer screen while lying down in a brain imaging machine and automatically learning how to play the guitar or lay up hoops like Shaq O’Neal, or even how to recuperate from a disease — without any conscious knowledge.

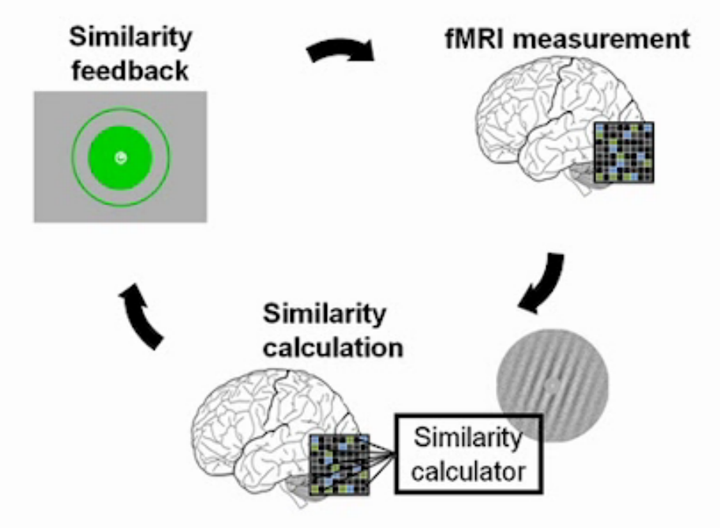

Researchers at Boston University (BU) and ATR Computational Neuroscience Laboratories in Kyoto, Japan used decoded functional magnetic resonance imaging (fMRI) neurofeedback to induce visual cortex activity patterns that matched a previously known target state, thereby improving performance on visual tasks.

“Adult early visual areas are sufficiently plastic to cause visual perceptual learning,” said lead author and BU neuroscientist Takeo Watanabe, director of BU’s Visual Science Laboratory.

Neuroscientists have previously found that pictures gradually build up inside a person’s brain, appearing first as lines, edges, shapes, colors and motion in early visual areas. The brain then fills in greater detail to make a red ball appear as a red ball, for example. Researchers studied the early visual areas for their ability to cause improvements in visual performance and learning.

“However, none of these studies directly addressed the question of whether early visual areas are sufficiently plastic to cause visual perceptual learning,” said Watanabe. So the team used decoded fMRI neurofeedback to induce, in targeted early visual areas, an activation pattern corresponding to the one evoked by a specific visual feature. The researchers found that repeatedly inducing this activation pattern caused long-lasting improvement in visual performance on that feature, without the subject’s active involvement. The method could be used for improving memory or motor (muscle) skills, the researchers suggest.

But that’s where it gets a bit scary. “In theory, hypnosis or a type of automated learning is a potential outcome,” said co-author Mitsuo Kawato, director of ATR Computational Neuroscience Laboratories. “However, in this study we confirmed the validity of our method only in visual perceptual learning. So we have to test if the method works in other types of learning in the future. At the same time, we have to be careful so that this method is not used in an unethical way.”

Uh, ya think?

Ref.: Kazuhisa Shibata et al., Perceptual Learning Incepted by Decoded fMRI Neurofeedback Without Stimulus Presentation, Science, 2011 [DOI: 10.1126/science.1212003]

Are virtual worlds better than the real world for learning?

Here’s another one that weirds me out just a little. It uses virtual worlds to help students learn.

Academics at Glasgow University and elsewhere have developed 3D virtual worlds to act as informal communities that allow students to learn by interacting in shared learning activities, such as film making and photography.

“We demonstrated that you can plan activities with kids and get them working in 3D worlds with commitment, energy and emotional involvement, over a significant period of time,” project lead researcher Professor Victor Lally said.

OK, but those things sound like fun to do anyway. Why do they need avatars and elaborate virtual worlds? Just give me a video cam and some software and get the hell out of my way! These kids are sitting there in front of monitors vegging out watching virtual worlds instead of shooting Occupy Edinburgh for YouTube.

My guess is that this is really intended as a tool to teach stuff that students have no interest in. In other words: more effective compulsory government-controlled education.

“It’s a highly engaging medium that could have a major impact in extending education and training beyond geographical locations. 3-D worlds seem to do this in a much more powerful way than many other social tools currently available on the Internet,” Lally insisted. “When appropriately configured, this virtual environment can offer safe spaces to experience new learning opportunities that seemed unfeasible only 15 years ago.”

“You can now create multiple science simulations of field trip locations, for example, using 3-D world ‘hyper-grids’ that allow participants to ‘teleport’ between a range of experiments or activities. This enables the students to share their learning by recording their activities, presenting graphs about their results, and using voting technologies to judge attitudes and opinions from others. It can offer new possibilities for designing exciting and engaging learning spaces. This kind of 3D technology could be used to simulate training environments, retail contexts, and interview situations.”

A mobile app is also in development. The research is part of the Inter-Life project in Scotland, funded by the Economic and Social Research Council (ESRC).

OK, maybe I was wrong. For some subjects (think: math, science), hanging out in (and building) virtual worlds is a hell of a lot more fun than sitting around listening to some boring teacher drone on and on. What do you think?