‘Mind reading’ technology identifies complex thoughts, using machine learning and fMRI

June 30, 2017

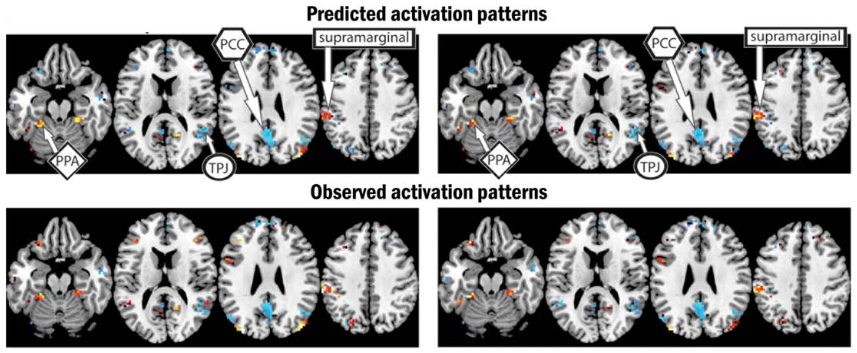

(Top) Predicted brain activation patterns and semantic features (colors) for two pairs of sentences. (Left) “The flood damaged the hospital”; (Right) “The storm destroyed the theater.” (Bottom) The observed activation patterns and semantic features, which are similar to the predicted ones. (credit: Jing Wang et al./Human Brain Mapping)

By combining machine-learning algorithms with fMRI brain imaging technology, Carnegie Mellon University (CMU) scientists have discovered, in essence, how to “read minds.”

The researchers used functional magnetic resonance imaging (fMRI), which tracks blood-flow patterns in the brain, to view how the brain encodes various thoughts. They discovered that the mind’s building blocks for constructing complex thoughts are formed not by words but by specific combinations of the brain’s various sub-systems.

Following up on previous research, the findings, published in Human Brain Mapping (an open-access preprint is also available) and funded by the U.S. Intelligence Advanced Research Projects Activity (IARPA), provide new evidence that the neural dimensions of concept representation are universal across people and languages.

“One of the big advances of the human brain was the ability to combine individual concepts into complex thoughts, to think not just of ‘bananas,’ but ‘I like to eat bananas in evening with my friends,’” said CMU’s Marcel Just, the D.O. Hebb University Professor of Psychology in the Dietrich College of Humanities and Social Sciences. “We have finally developed a way to see thoughts of that complexity in the fMRI signal. The discovery of this correspondence between thoughts and brain activation patterns tells us what the thoughts are built of.”

Goal: A brain map of all types of knowledge

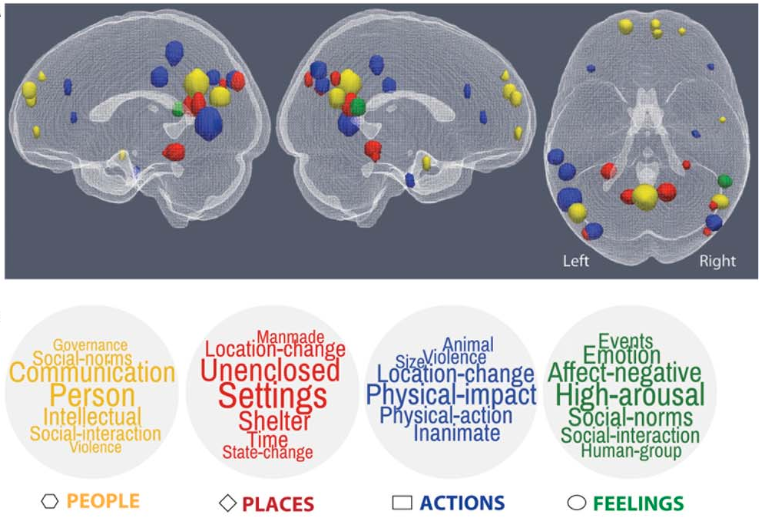

(Top) Specific brain regions associated with the four large-scale semantic factors: people (yellow), places (red), actions and their consequences (blue), and feelings (green). (Bottom) Word clouds associated with each large-scale semantic factor underlying sentence representations. These word clouds comprise the seven “neurally plausible semantic features” (such as “high-arousal”) most associated with each of the four semantic factors. (credit: Jing Wang et al./Human Brain Mapping)

The researchers used 240 specific events (described by sentences such as “The storm destroyed the theater”) in the study, with seven adult participants. They measured the brain’s coding of these events using 42 “neurally plausible semantic features” — such as person, setting, size, social interaction, and physical action (as shown in the word clouds in the illustration above). By measuring the specific activation of each of these 42 features in a person’s brain system, the program could tell what types of thoughts that person was focused on.

The researchers used a computational model to assess how the detected brain activation patterns (shown in the top illustration, for example) for 239 of the event sentences corresponded to the neurally plausible semantic features that characterized each sentence. The program was then able to decode the features of the 240th, left-out sentence. (For “cross-validation,” they repeated the procedure, leaving out each of the other 239 sentences in turn.)

The model was able to predict the features of the left-out sentence with 87 percent accuracy, despite never being exposed to its activation before. It was also able to work in the other direction: to predict the activation pattern of a previously unseen sentence, knowing only its semantic features.
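The leave-one-out procedure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers’ code: the data are synthetic, the sizes (40 sentences, 6 features, 30 voxels) are made up for speed, ridge regression stands in for whatever regression the study used, and the function name `loo_rank_accuracy` and its rank-based scoring are hypothetical choices made for this example.

```python
import numpy as np

def loo_rank_accuracy(inputs, targets, ridge=1.0):
    """Leave-one-out evaluation of a linear map from inputs to targets.

    For each sentence, fit ridge regression on all the other sentences,
    predict the held-out target vector, then rank every candidate target
    by cosine similarity to that prediction. A score of 1.0 means the
    true target was always ranked first.
    """
    n = inputs.shape[0]
    target_norms = np.linalg.norm(targets, axis=1)
    scores = []
    for held_out in range(n):
        train = np.arange(n) != held_out
        X, Y = inputs[train], targets[train]
        # Closed-form ridge solution: W = (X^T X + ridge * I)^-1 X^T Y
        W = np.linalg.solve(X.T @ X + ridge * np.eye(X.shape[1]), X.T @ Y)
        predicted = inputs[held_out] @ W
        sims = targets @ predicted / (target_norms * np.linalg.norm(predicted) + 1e-12)
        better = int((sims > sims[held_out]).sum())  # candidates ranked above the truth
        scores.append(1.0 - better / (n - 1))
    return float(np.mean(scores))

# Synthetic stand-in data (assumed, not from the study).
rng = np.random.default_rng(0)
n_sentences, n_features, n_voxels = 40, 6, 30
features = rng.normal(size=(n_sentences, n_features))
true_map = rng.normal(size=(n_features, n_voxels))
activations = features @ true_map + 0.1 * rng.normal(size=(n_sentences, n_voxels))

# Decoding: activation pattern -> semantic features of the left-out sentence.
decode_acc = loo_rank_accuracy(activations, features)
# The other direction: semantic features -> predicted activation pattern.
encode_acc = loo_rank_accuracy(features, activations)
print(f"decoding: {decode_acc:.2f}  encoding: {encode_acc:.2f}")
```

The same function covers both directions because only the roles of `inputs` and `targets` are swapped. The scores it prints reflect the clean synthetic data and say nothing about the accuracy reported in the study.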

“Our method overcomes the unfortunate property of fMRI to smear together the signals emanating from brain events that occur close together in time, like the reading of two successive words in a sentence,” Just explained. “This advance makes it possible for the first time to decode thoughts containing several concepts. That’s what most human thoughts are composed of.”

“A next step might be to decode the general type of topic a person is thinking about, such as geology or skateboarding,” he added. “We are on the way to making a map of all the types of knowledge in the brain.”

Future possibilities

It’s conceivable that the CMU brain-mapping method might be combined one day with other “mind reading” methods, such as UC Berkeley’s method for using fMRI and computational models to decode and reconstruct people’s imagined visual experiences. Plus whatever Neuralink discovers.

Or, if the fMRI used in the CMU method could be replaced by noninvasive functional near-infrared spectroscopy (fNIRS), Facebook’s Building 8 research concept (proposed by former DARPA head Regina Dugan) might be incorporated: a filter for creating quasi-ballistic photons, which avoids diffusion and creates a narrow beam for precise targeting of brain areas, combined with a new method of detecting blood-oxygen levels.

Using fNIRS might also allow for adapting the method to infer thoughts of locked-in paralyzed patients, as in the Wyss Center for Bio and Neuroengineering research. It might even lead to ways to generally enhance human communication.

The CMU research is supported by the Office of the Director of National Intelligence (ODNI) via the Intelligence Advanced Research Projects Activity (IARPA) and the Air Force Research Laboratory (AFRL).

CMU has created some of the first cognitive tutors, helped to develop the Jeopardy-winning Watson, founded a groundbreaking doctoral program in neural computation, and is the birthplace of artificial intelligence and cognitive psychology. CMU also launched BrainHub, an initiative that focuses on how the structure and activity of the brain give rise to complex behaviors.

Abstract of Predicting the Brain Activation Pattern Associated With the Propositional Content of a Sentence: Modeling Neural Representations of Events and States

Even though much has recently been learned about the neural representation of individual concepts and categories, neuroimaging research is only beginning to reveal how more complex thoughts, such as event and state descriptions, are neurally represented. We present a predictive computational theory of the neural representations of individual events and states as they are described in 240 sentences. Regression models were trained to determine the mapping between 42 neurally plausible semantic features (NPSFs) and thematic roles of the concepts of a proposition and the fMRI activation patterns of various cortical regions that process different types of information. Given a semantic characterization of the content of a sentence that is new to the model, the model can reliably predict the resulting neural signature, or, given an observed neural signature of a new sentence, the model can predict its semantic content. The models were also reliably generalizable across participants. This computational model provides an account of the brain representation of a complex yet fundamental unit of thought, namely, the conceptual content of a proposition. In addition to characterizing a sentence representation at the level of the semantic and thematic features of its component concepts, factor analysis was used to develop a higher level characterization of a sentence, specifying the general type of event representation that the sentence evokes (e.g., a social interaction versus a change of physical state) and the voxel locations most strongly associated with each of the factors.