New brain-computer interface mobilizes patients, opens up new mind-control scenarios

June 20, 2011 by Amara D. Angelica

(Credit: Warner Brothers Entertainment)

In the Green Lantern movie, a ring takes orders from Jordan’s mind, enabling him to fly, take down multiple bad guys, and create wormholes through which he can travel thousands of light-years in minutes.

University of Michigan Center for Wireless Integrated Microsystems professor Euisik Yoon and colleagues are developing a brain-computer interface (BCI) that would handle the mind-to-ring communication part. DARPA is working on the other stuff, I’m guessing.

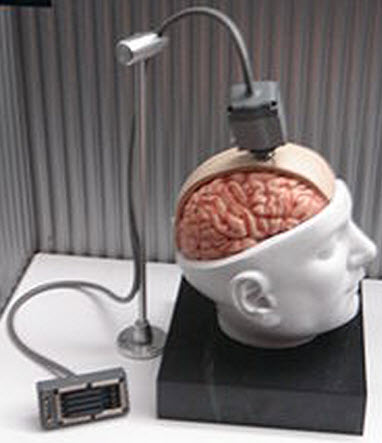

It’s a brain implant called BioBolt that communicates commands via the skin to objects on the body — or directly to muscles.

This patent-pending invention could someday allow some disabled patients to control an arm muscle (or other muscles) by just thinking about the movement — without wires keeping them immobilized in a chair.

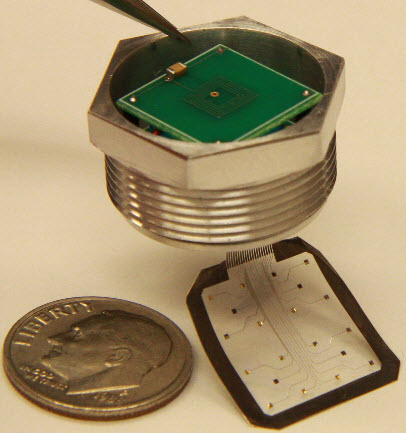

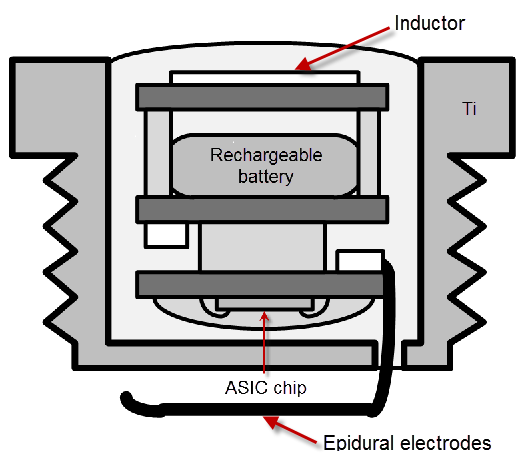

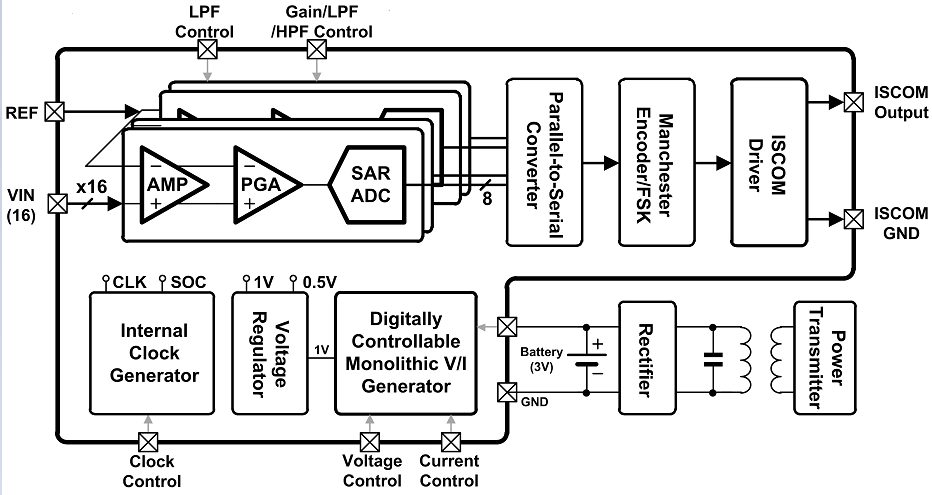

The bolt, implanted in the skull beneath the skin, would contain an ASIC (application-specific integrated circuit) microchip. The chip would pick up and process neural signals, then transmit them via the skin directly to a receiver located in or near the target muscle group (such as an arm or hand).

Human experiments: how BioBolt will work

Initial experiments will be with primates, but the researchers also plan human experiments in the future. Here’s how the BioBolt would work:

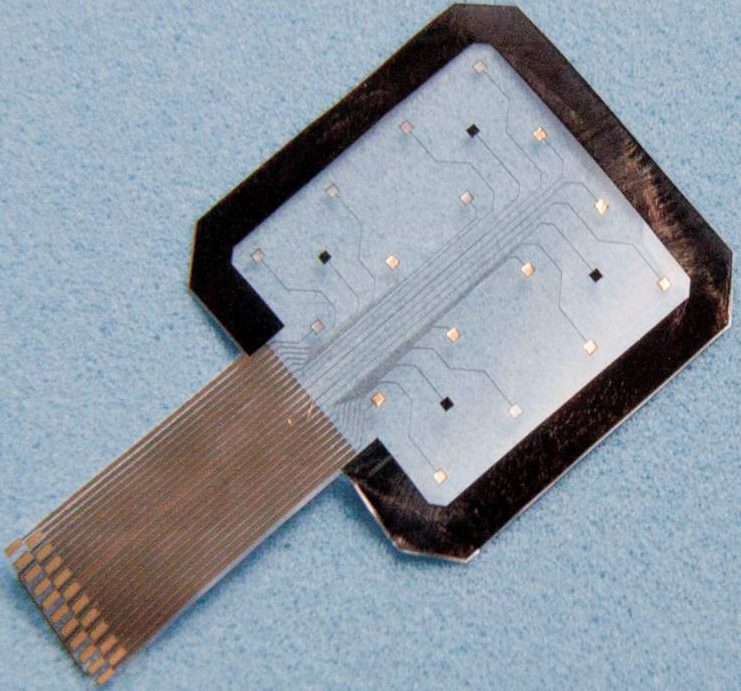

1. An array of microECoG (electrocorticography) electrodes senses epidural (between the skull and brain) signals generated by neurons in the motor cortex. These signals are picked up from “cortical control columns,” which have a diameter of a few mm. This allows for very precise control of specific muscle groups.

2. These analog signals are converted to digital signals, which modulate a miniature transmitter in the chip.

BioBolt cutaway. Power is inductively coupled (via coils) to the BioBolt to charge its internal battery (credit: adapted from illustration by E. Yoon et al./University of Michigan)

3. The transmitter then sends the signal via the skin of the body (a “Body Area Network,” or BAN) at a rate of 320 kb/s to a receiver located in a target muscle group to be controlled. The receiver stimulates efferent neurons in the target muscle to take the action desired by the patient. The signal from afferent neurons could also go back to the BioBolt as a feedback signal. There can be multiple BioBolts for different muscle groups.

(In the initial system, the receiver, which could be in an earring, for example, will include a miniature transmitter that wirelessly sends the signals to a computer via Bluetooth, for example, for processing, and then back to another receiver for activating the muscle.)

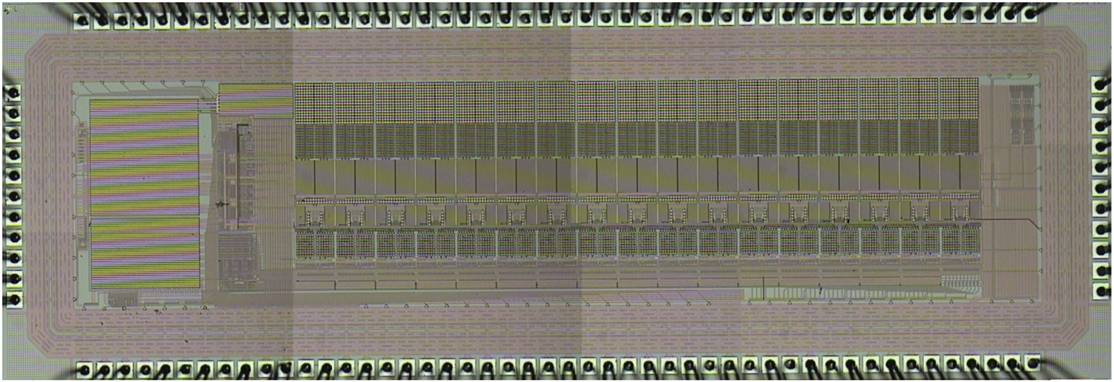

Photo of BioBolt ASIC chip (credit: E. Yoon et al./University of Michigan)

BioBolt block diagram (credit: E. Yoon et al./University of Michigan)

Why BioBolt is a breakthrough

“The primary goal is to acquire high signal-to-noise ratio signals with adequate spatial resolution so that the primate can perform experimental work such as moving cursors just by thinking, with visual feedback,” Yoon explained to me Thursday night, after returning from a presentation in Kyoto, Japan.

“The advantage of BioBolt, compared with the current electrode systems, is that it is minimally invasive (does not require open-skull surgery) and the wireless signal transmission can allow experimental work on freely-moving primates, which is not possible in the current system.” BioBolt is also sealed under the skin to avoid infection, he said.

With current experimental BCI systems, a doctor surgically implants wires into the brain. These connect portions of the motor cortex (which controls muscle movement) to an external computer, so the patient is immobilized.

The BrainGate brain-computer interface, which has been used for experiments with paraplegics, is one example of such a wired system. One workaround is to use a wireless transmitter, but that consumes a lot of power and increases the size of the implant. Using skin as a conductive medium, by contrast, digitized data can be transmitted at low power consumption (<0.2 mW), a paper by Yoon and colleagues points out.
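Combined with the 320 kb/s data rate quoted earlier, the <0.2 mW figure implies a rough upper bound on the energy spent per transmitted bit. Here is a back-of-the-envelope sketch. It assumes that "kb/s" means kilobits per second and that the power figure applies to transmission at that rate, neither of which this article spells out. The function name is illustrative only.

```python
def energy_per_bit_nj(power_mw, rate_kbps):
    """Upper bound on energy per bit, in nanojoules.

    Assumption: the whole power budget is spent while transmitting
    at the stated bit rate.
    """
    power_w = power_mw * 1e-3      # milliwatts -> watts
    rate_bps = rate_kbps * 1e3     # kilobits/s -> bits/s
    return power_w / rate_bps * 1e9  # joules/bit -> nanojoules/bit

# Figures quoted in this article: <0.2 mW at 320 kb/s
print(round(energy_per_bit_nj(0.2, 320), 3))  # 0.625
```

Under those assumptions, that works out to less than about 0.6 nanojoules per bit.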

And unlike EEG signals, which come from broad areas of the brain and are weaker (because they are recorded outside the skull, on the scalp), BioBolt connects with a tiny area of the brain that has neurons that control specific muscle groups.

Wireheading, anyone?

Could technology like BioBolt also enhance human abilities or experience, as portrayed in Snow Crash and elsewhere? Here’s one possible future scenario: BioBolt transmits a signal to the (next-generation) Eyez webcam you are wearing in your glasses, which in turn connects your motor neurons (say, the ones that control an index finger) directly to the Internet via WiFi or 4G.

You are now silently punching up data or apps in the cloud with just your mind, and watching the results on the built-in Eyez display connected to an iPhone/iPad/Android augmented reality system, as you zip along in your autonomous vehicle transmitting the scene live on Qik.

OK, who in their right mind would screw a bolt into their brain just to operate hands-free? How about DARPA’s super soldiers, or extreme-edge test pilots, astronauts, or race-car drivers who need to react in tens of milliseconds instead of hundreds*, or expand their multitasking?

Think of the many other possibilities….

* With human reaction time of about 200 milliseconds, at 100 mph, you travel 9 meters before your foot starts to move to hit the brake.
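The footnote's figure is easy to check. Below is a minimal sketch of the arithmetic, using the 200 ms reaction time and 100 mph speed given above. The function name and the 20 ms comparison value (standing in for "tens of milliseconds") are illustrative assumptions, not numbers from the researchers.

```python
MPH_TO_M_PER_S = 1609.344 / 3600.0  # one mile is exactly 1609.344 m

def distance_before_reacting(speed_mph, reaction_time_ms):
    """Meters traveled at constant speed during the reaction delay."""
    speed_m_per_s = speed_mph * MPH_TO_M_PER_S
    return speed_m_per_s * (reaction_time_ms / 1000.0)

# Typical human reaction time from the footnote
print(round(distance_before_reacting(100, 200), 2))  # 8.94

# Hypothetical reaction time in the "tens of milliseconds" range
print(round(distance_before_reacting(100, 20), 2))   # 0.89
```

At 100 mph, cutting the delay from 200 ms to a hypothetical 20 ms would shrink the distance covered before reacting from about 9 meters to under 1 meter.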

The BioBolt concept was presented June 16 at the Symposium on VLSI Circuits in Kyoto, Japan. Sun-Il Chang, a Ph.D. student in Yoon’s research group, was lead author on the presentation.

Ref.: S.-I. Chang, K. AlAshmouny, M. McCormick, Y.-C. Chen and E. Yoon, “BioBolt: A Minimally-Invasive Neural Interface for Wireless Epidural Recording by Intra-Skin Communication,” Symposium On VLSI Circuits, June 16, 2011, http://www.vlsisymposium.org/circuits/cir_pdf/11C_AdvanceProgram_May18-3.pdf

Carmena JM, Lebedev MA, Crist RE, O’Doherty JE, Santucci DM, et al. (2003) Learning to Control a Brain–Machine Interface for Reaching and Grasping by Primates. PLoS Biol 1(2): e42. doi:10.1371/journal.pbio.0000042 (open access)