Robot learns ‘self-awareness’

August 24, 2012

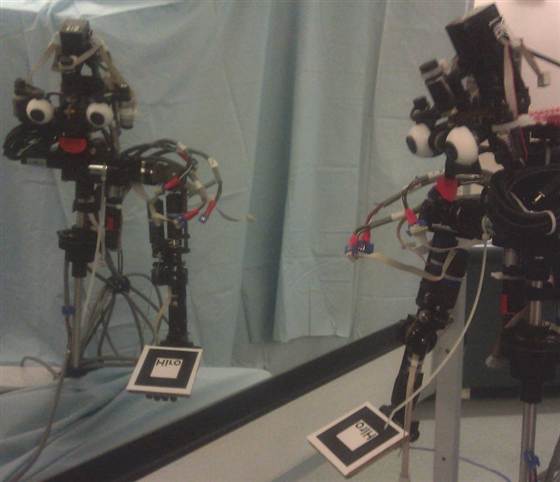

Who’s that good-looking guy? Nico examines itself and its surroundings in the mirror. (Credit: Justin Hart / Yale University)

Yale roboticists have programmed Nico, a robot, to be able to recognize itself in a mirror.

Using knowledge that it has learned about itself, Nico is able to use a mirror as an instrument for spatial reasoning, allowing it to accurately determine where objects are located in space based on their reflections, rather than naively believing them to exist behind the mirror.

Nico’s programmer, roboticist Justin Hart, a member of the Social Robotics Lab, focuses his thesis research primarily on “robots autonomously learning about their bodies and senses,” but he also explores human-robot interaction, “including projects on social presence, attributions of intentionality, and people’s perception of robots.”

Recently, the lab (along with MIT, Stanford, and USC) won a $10 million grant from the National Science Foundation to create “socially assistive” robots that can serve as companions for children with special needs. These robots will help with everything from cognitive skills to getting the right amount of exercise.

Hart’s specific goal in this program: enable Nico to interact with its environment by learning about itself and then using that self-model to reason about tasks, mainly ones for humans.

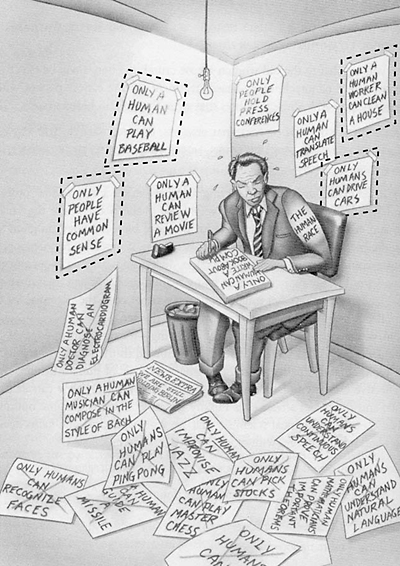

“Only humans can be self-aware” joins “Only humans can recognize faces” and other discarded myths. Quiz: which of the posters on the wall in this 2005 cartoon (from The Singularity Is Near) should now be removed?

Previous researchers have built robots that acquire knowledge of the external world through experience, but Nico is different from those that have preceded it. “Knowledge about the robot itself has generally been built in by the designer,” Hart says. “None of these representations offer the flexibility, robustness, and functionality that are present in people.”

For example, Nico is learning the relationship of its end-effectors (such as grippers) and sensors (stereoscopic cameras) to each other and to the environment. It combines models of its perceptual and motor capabilities to learn where its body parts are with respect to each other, and it will soon learn how those body parts can cause changes by interacting with objects in the environment.

Nico in the looking glass

An object reflected in a mirror is “a reflection of what actually exists in space,” Hart says. “If one were to naively reach towards these reflections, one’s hand would hit the glass of the mirror, rather than the object being reached for.

“By understanding this reflection, however, one is able to use the mirror as an instrument to make accurate inferences about the positions of objects in space based on their reflected appearances. When we check the rearview mirror on our car for approaching vehicles or use a bathroom mirror to aim a hairbrush, we make such instrumental use of these mirrors.”
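
The geometry behind this kind of inference can be illustrated with a short sketch. It is not Hart’s implementation; it only assumes a flat mirror whose position and orientation are already known, and the function name `reflect_across_mirror` and the example coordinates are hypothetical. An object seen in the mirror appears to sit behind the glass, and reflecting that apparent position back across the mirror plane gives where the object really is.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def reflect_across_mirror(point: Vec3, mirror_point: Vec3, mirror_normal: Vec3) -> Vec3:
    """Reflect a 3D point across a flat mirror plane.

    The plane is given by any point on it (mirror_point) and a normal
    vector (mirror_normal), which does not need to be unit length.
    """
    length = math.sqrt(sum(c * c for c in mirror_normal))
    if length == 0:
        raise ValueError("mirror_normal must be non-zero")
    n = tuple(c / length for c in mirror_normal)

    # Signed distance from the point to the mirror plane.
    d = sum((p - q) * c for p, q, c in zip(point, mirror_point, n))

    # Move the point twice that distance back through the plane.
    return tuple(p - 2 * d * c for p, c in zip(point, n))


# Hypothetical setup: the mirror is the plane z = 1.5, facing the robot.
# The object *appears* 0.5 m behind the glass, at z = 2.0.
apparent = (0.5, 0.2, 2.0)
real = reflect_across_mirror(apparent, (0.0, 0.0, 1.5), (0.0, 0.0, 1.0))
print(real)  # (0.5, 0.2, 1.0): the object is actually 0.5 m in front of the mirror
```

Reflection is its own inverse, so the same function maps a real position to its apparent one. A naive reach would aim at the apparent position and hit the glass.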

The classic mirror test has previously been done with animals to determine whether they understand that their reflections are actually images of themselves. The subject animals are allowed to familiarize themselves with a mirror. They are then sedated and a spot of dye is put on their faces. When they awaken, if they notice the new spot of color in their reflection and then touch the place on their face where the dye was put, they “pass” the mirror test.

“To our knowledge, this is the first robotic system to attempt to use a mirror in this way, representing a significant step towards a cohesive architecture that allows robots to learn about their bodies and appearances through self-observation, and an important capability required in order to pass the Mirror Test,” says Hart.

So far, no robot has successfully met this challenge. Hart and the Social Robotics Lab are working on it.

Reference (open access): Rajala AZ, Reininger KR, Lancaster KM, Populin LC (2010) Rhesus Monkeys (Macaca mulatta) Do Recognize Themselves in the Mirror: Implications for the Evolution of Self-Recognition. PLoS ONE 5(9): e12865. doi:10.1371/journal.pone.0012865