Secrets of human speech uncovered

March 1, 2013

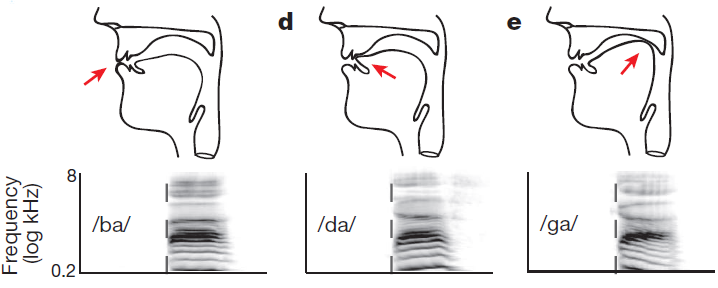

Top: vocal tract schematics for three consonants (/b/, /d/, /g/), produced by occlusion at the lips, tongue tip, and tongue body, respectively (red arrow). Bottom: corresponding spectrograms (frequency vs. time). (Credit: Kristofer E. Bouchard et al./Nature)

A team of researchers at UC San Francisco has uncovered the neurological basis of speech motor control, the complex coordinated activity of tiny brain regions that controls our lips, jaw, tongue and larynx as we speak.

The work has potential implications for developing brain-computer interfaces for artificial speech communication and for the treatment of speech disorders. It also sheds light on this ability, which is unique to humans among living creatures but poorly understood.

“Speaking is probably the most complex motor activity we do,” said senior author Edward Chang, MD, a neurosurgeon at the UCSF Epilepsy Center and a faculty member in the UCSF Center for Integrative Neuroscience.

That’s because spoken words require the coordinated efforts of numerous “articulators” in the vocal tract — the lips, tongue, jaw and larynx — but scientists have not understood how the movements of these distinct articulators are precisely coordinated in the brain.

The speech sensorimotor cortex

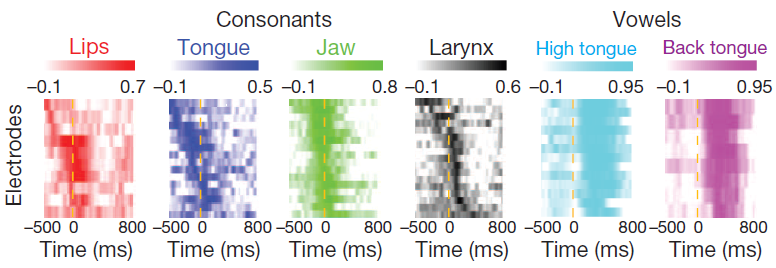

Timing of correlations between cortical activity and consonant and vowel articulator features (credit: Kristofer E. Bouchard et al./Nature)

To understand how speech articulation works, Chang and his colleagues recorded electrical activity directly from the brains of three people undergoing brain surgery at UCSF, and used this information to determine the spatial organization of the “speech sensorimotor cortex,” which controls the lips, tongue, jaw and larynx as a person speaks. This gave them a map of which parts of the brain control which parts of the vocal tract.

They then applied a sophisticated new method called “state-space” analysis to observe the complex spatial and temporal patterns of neural activity in the speech sensorimotor cortex that play out as someone speaks. This revealed a surprising sophistication in how the brain’s speech sensorimotor cortex works.

They found that this cortical area has a hierarchical and cyclical structure that exerts a split-second, symphony-like control over the tongue, jaw, larynx and lips.

“These properties may reflect cortical strategies to greatly simplify the complex coordination of articulators in fluent speech,” said Kristofer Bouchard, PhD, a postdoctoral fellow in the Chang lab who was the first author on the paper.

In the same way that a symphony relies upon all the players to coordinate their plucks, beats or blows to make music, speaking demands well-timed action of several brain regions within the speech sensorimotor cortex.

Brain mapping in epilepsy surgery

Surgical brain mapping records neural activity directly and faster than noninvasive methods, showing changes in electrical activity on the order of a few milliseconds.

Prior to this work, the majority of what scientists knew about this brain region was based on studies from the 1940s, which used electrical stimulation of single spots on the brain, causing a twitch in muscles of the face or throat. This focal-stimulation approach, however, could never evoke a meaningful speech sound.

Chang and colleagues took an entirely different approach, studying brain activity during natural speech using implanted electrode arrays. The patients read from a list of English syllables, such as bah, dee and goo. The researchers recorded the electrical activity within their speech-motor cortex and showed how distinct brain patterning accounts for different vowels and consonants in our speech.

“Even though we used English, we found the key patterns observed were ones that linguists have observed in languages around the world — perhaps suggesting universal principles for speaking across all cultures,” said Chang.

This work was funded by the National Institutes of Health and by the Esther A. and Joseph Klingenstein Foundation.