‘Seeing’ faces through touch

September 6, 2013

(Credit: Kazumichi Matsumiya)

Perceiving faces can be enhanced by touch, says researcher Kazumichi Matsumiya of Tohoku University in Japan.

The face aftereffect

In a series of studies, Matsumiya took advantage of a phenomenon called the “face aftereffect” to investigate whether our visual system responds to nonvisual signals for processing faces.

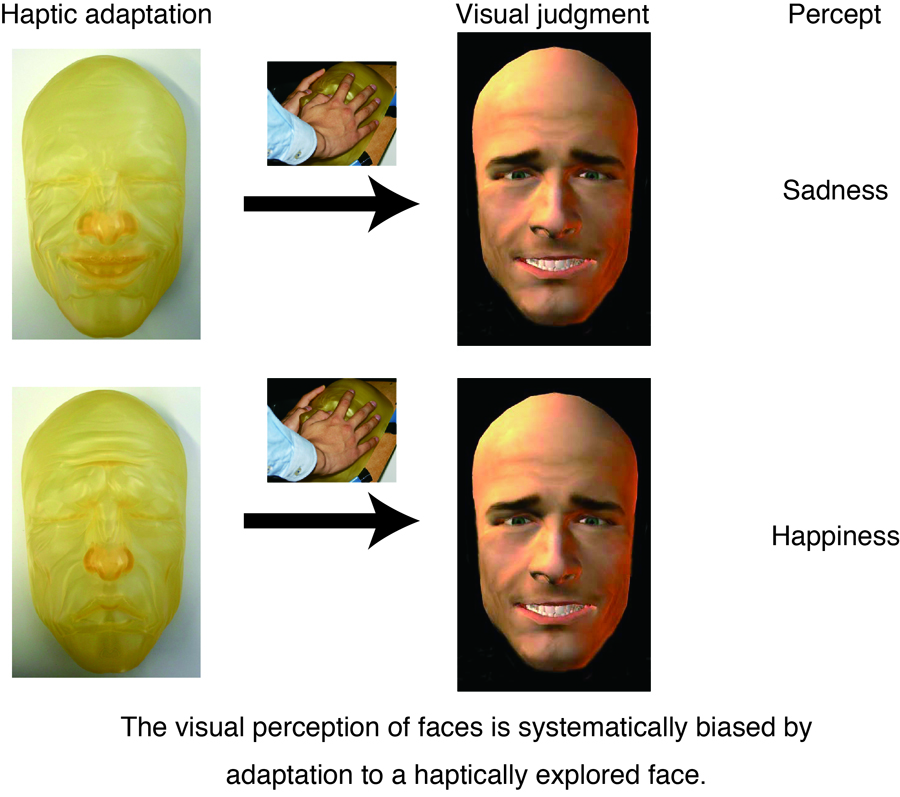

In the face aftereffect, we adapt to a face with a particular expression — happiness, for example — which causes us to perceive a subsequent neutral face as having the opposite facial expression (i.e., sadness).

Matsumiya hypothesized that if the visual system really does respond to signals from another modality, face aftereffects should transfer from one modality to the other. Adaptation to a face that is explored by touch should therefore produce visual face aftereffects.

The experiment

To test this, Matsumiya had participants explore face masks concealed below a mirror by touching them. After this adaptation period, the participants were visually presented with a series of faces that had varying expressions and were asked to classify the faces as happy or sad. The visual faces and the masks were created from the same exemplar.

In line with his hypothesis, Matsumiya found that exploring the face masks by touch shifted participants’ perception of the visually presented faces, relative to participants who had no adaptation period: the visual faces were perceived as having the opposite facial expression.

Further experiments ruled out other explanations for the results, including the possibility that the face aftereffects emerged because participants were intentionally imagining visual faces during the adaptation period.

And a fourth experiment revealed that the aftereffect also works the other way: Visual stimuli can influence how we perceive a face through touch.

Applications for the visually impaired

According to Matsumiya, current views on face processing assume that the visual system only receives facial signals from the visual modality — but these experiments suggest that face perception is truly crossmodal.

“These findings suggest that facial information may be coded in a shared representation between vision and haptics in the brain,” notes Matsumiya, adding that the results may have implications for vision and telecommunication applications and for the development of aids for the visually impaired.

This work was supported by a Grant-in-Aid for Scientific Research on Innovative Areas, “Face perception and recognition” from MEXT KAKENHI (23119704) and by the Research Institute of Electrical Communication, Tohoku University Original Research Support Program to K.M.

Future directions

The research results imply possible future directions in visual and telecommunication applications and in the development of aids for the visually impaired, Matsumiya said in an email to KurzweilAI.

“Previous research has developed optical-to-tactile substitution systems for aids for the visually impaired. One of the most famous systems is a Braille system. Those studies presented relatively simple patterns, such as a geometrical pattern or an outline figure, and mainly transformed letter information.

“However, my results imply that complex visual patterns, or faces, are also available in the optical-to-tactile substitution systems, if the technology for haptic displays is more improved. Considering that facial information facilitates social communication, the realization of such an optical-to-tactile substitution system might provide the way to enable wealthy communication with the visually impaired.”

No information on practical products for the visually impaired was available at this time.