The Internet, peer-reviewed

October 28, 2011 by Amara D. Angelica

It could be one of the most important innovations on the Internet since the browser.

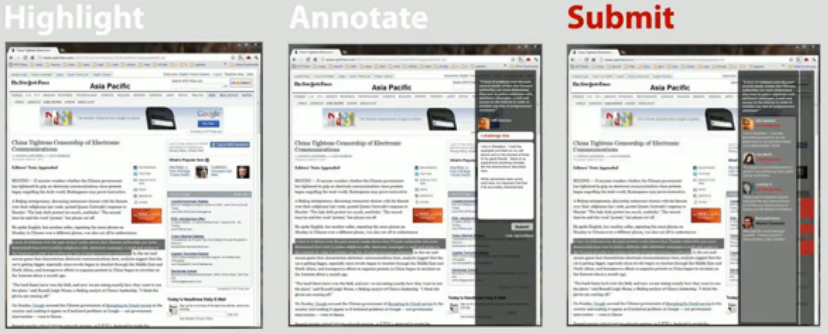

Imagine an open-source, crowd-sourced, community-moderated, distributed platform for sentence-level annotation of the Web. In other words, a way to cut through the babble and restore some sanity and trust.

That’s the idea behind Hypothes.is. It will work as an overlay on top of any stable content, including news, blogs, scientific articles, books, terms of service, ballot initiatives, legislation and regulations, software code and more — without requiring participation of the underlying site.

It’s based on a new draft standard for annotating digital documents, currently being developed by the Open Annotation Collaboration, a consortium that includes the Internet Archive, NISO (National Information Standards Organization), O’Reilly Media, Amazon, Barnes & Noble, and a number of academic institutions.

“If what is published is immediately fact and logic-checked, in a detailed and highly visible way, it will necessarily put pressure upstream to the point of authorship,” says the FAQ. “In order to accomplish this we need better feedback mechanisms. Standard comments just aren’t up to the task, and neither are newer systems such as Disqus, IntenseDebate, Facebook Comments or others. While interesting, none of them fundamentally change the comment model. It’s time for a new set of tools.”

Yes, Web annotation has been tried before, and it didn’t catch on. But given the solid concept and the names involved, I believe they can pull it off.

I just donated to their Kickstarter fund. I recommend you do the same. (You might also want to reserve your user name.)