Watson: supercharged search engine or prototype robot overlord?

February 17, 2011 by Ben Goertzel

My initial reaction to reading about IBM’s “Watson” supercomputer and software was a big fat ho-hum. “OK,” I figured, “a program that plays ‘Jeopardy!’ may be impressive to Joe Blow in the street, but I’m an AI guru so I know pretty much exactly what kind of specialized trickery they’re using under the hood. It’s not really a high-level mind, just a fancy database lookup system.”

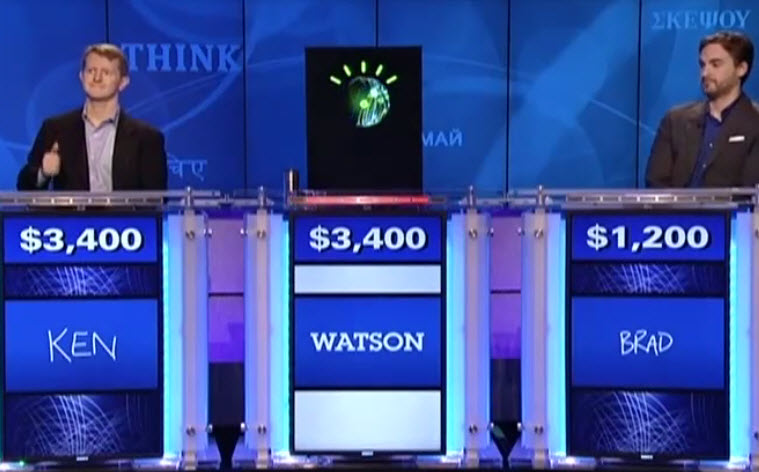

But while that cynical view is certainly technically accurate, I have to admit that when I actually watched Watson play “Jeopardy!” on TV — and beat its human opponents — I felt some real excitement … and even some pride for the field of AI. Sure, Watson is far from a human-level AI, and doesn’t have much general intelligence. But even so, it was pretty bloody cool to see it up there on stage, defeating humans in a battle of wits created purely by humans for humans — playing by the human rules and winning.

I found Watson’s occasional really dumb mistakes made it seem almost human. If the performance had been perfect there would have been no drama — but as it was, there was a bit of a charge in watching the computer come back from temporary defeats induced by the limitations of its AI. Even more so because I’m wholly confident that, 10 years from now, Watson’s descendants will be capable of doing the same thing without any stupid mistakes.

And in spite of its imperfections, by the end of its three-day competition against human “Jeopardy!” champs Ken Jennings and Brad Rutter, Watson had earned a total of $77,147, compared to $24,000 for Jennings and $21,600 for Rutter. When Jennings graciously conceded defeat, after briefly giving Watson a run for its money a few minutes earlier, he quoted the line “And I, for one, welcome our new robot overlords.”

In the final analysis, Watson didn’t seem human at all — its IBM overlords didn’t think to program it to sound excited or to celebrate its victory. While the audience cheered Watson, the champ itself remained impassive, precisely as befitting a specialized question-answering system without any emotion module.

What Does Watson Mean for AI?

But who is this impassive champion, really? A mere supercharged search engine, or a prototype robot overlord?

A lot closer to the former, for sure. Watson 2.0, if there is one, may make fewer dumb mistakes — but it’s not going to march out of the “Jeopardy!” TV studio and start taking over human jobs, winning Nobel Prizes, building femtofactories and spawning Singularities.

But even so, the technologies underlying Watson are likely to be part of the story when human-level and superhuman AGI robots finally do emerge.

“Jeopardy!” doesn’t have the iconic status of Chess or Go, but in some ways it cuts closer to the heart of human intelligence, focusing as it does on the ability to answer commonsense questions posed in human language. But still, succeeding at “Jeopardy!” requires a fairly narrow sort of natural language understanding — and understanding this is critical to understanding what Watson really is.

Watson is a triumph of the branch of AI called “natural language processing” (NLP), which combines statistical analysis of text and speech with hand-crafted linguistic rules to make judgments based on the syntactic and semantic structures implicit in language. Watson is not an intelligent autonomous agent like a human being, who reads information, incorporates it into a holistic world-view, and understands each piece of information in the context of its own self, its goals, and the world. Rather, it’s an NLP-based search system: a purpose-specific system that matches the syntactic and semantic structures in a question with comparable structures found in a database of documents, and in this way tries to find answers to the questions in those documents.

Looking at some concrete “Jeopardy!” questions may help make the matter clearer; here are some random examples I picked from an online archive:

- This -ology, part of sociology, uses the theory of differential association (i.e., hanging around with a bad crowd)

- “Whinese” is a language they use on long car trips

- The motto of this 1904-1914 engineering project was “The land divided, the world united”

- Built at a cost of more than $200 million, it stretches from Victoria, B.C. to St. John’s, Newfoundland

- Jay Leno on July 8, 2010: The “nominations were announced today… there’s no ‘me’ in” this award

(Answers: criminology, children, the Panama Canal, the Trans-Canada Highway, the Emmy Awards.)

It’s worth taking a moment to think about these in the context of NLP-based search technology.

Question 1 stumped human “Jeopardy!” contestants on the show, but I’d expect it to be easier for an NLP-based search system, which can look for the phrase “differential association” together with the morpheme “ology.”

Question 2 is going to be harder for an NLP-based search system than for a human … but maybe not as hard as one might think, since the top Google hit for “whine ‘long car trip’” is a page titled Entertain Kids on Car Trip, and the subsequent hits are similar. The incidence of “kids” and “children” in the search results seems high. So the challenge here is to recognize that “whinese” is a neologism and apply a stemming heuristic to isolate “whine.”

Questions 3 and 4 are probably easier for an NLP-based search system with a large knowledge base than for a human, as they contain some very specific search terms.

Question 5 is one that would be approached in a totally different way by an NLP-based search system than by a human. A human would probably use the phonological similarity between “me” and “Emmy” (at least that’s how I answered the question). The AI can simply search the key phrases, e.g. “Jay Leno July 8, 2010 award,” and then many pages about the Emmys come up.
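To make the “stemming heuristic” mentioned for Question 2 a little more concrete, here is a minimal Python sketch. It is only an illustration of the general idea, not a description of what Watson actually does: the tiny vocabulary, the suffix list, and the function name `guess_stem` are all my own assumptions.

```python
# Toy vocabulary standing in for a real lexicon (hypothetical).
KNOWN_WORDS = {"whine", "car", "trip", "language", "long"}

# Suffixes that often mark language names, as in "Chinese" or "Spanish".
# This short list is an assumption made for the example.
LANGUAGE_SUFFIXES = ("ese", "ish", "ian")


def guess_stem(word, vocabulary=KNOWN_WORDS):
    """Return a known word hiding inside an unknown one, or None."""
    word = word.lower()
    if word in vocabulary:
        return word
    for suffix in LANGUAGE_SUFFIXES:
        if word.endswith(suffix):
            base = word[: -len(suffix)]
            # Try the bare base, then the base with a restored final "e".
            for candidate in (base, base + "e"):
                if candidate in vocabulary:
                    return candidate
    return None


print(guess_stem("Whinese"))  # whine
```

Once “whine” has been isolated, the rest of the clue can be handled like any other keyword search.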

Now of course a human “Jeopardy!” contestant is not allowed to use a Web search engine while playing the show — this would be cheating! If this were allowed, it would constitute a very different kind of game show. The particular humans who do well at “Jeopardy!” are those with the capability to read a lot of text containing facts and remember the key data without needing to look it up again. However, an AI like Watson has a superhuman capability to ingest text from the Web or elsewhere and store it internally in a modified representation, without any chance of error or forgetting — just like you can copy a file from one computer to another without any mistakes, unless there’s an unusual hardware error like a file corruption.

So Watson can grab a load of “Jeopardy!”-relevant Web pages or similar documents in advance and store the key parts precisely in its memory, to use as the basis for question answering. And it can then do a rough (though somewhat more sophisticated) equivalent of searching in its memory for “whine ‘long car trip’” or “Jay Leno July 8, 2010 award” and finding the multiple results, and then statistically analyzing these multiple results to find the answer.
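A toy version of this “search the stored text, then analyze the hits statistically” loop might look like the following Python sketch. Everything in it is invented for illustration (the five stored documents, the candidate answers, the prefix-matching trick), and it is vastly cruder than whatever Watson really does; it only shows the shape of the approach.

```python
from collections import Counter
import re

# Tiny stand-in for the stored documents (invented for illustration).
DOCUMENTS = [
    "Entertain kids on a long car trip before the whining starts",
    "Children whine on any long car trip, so pack snacks",
    "How to stop kids whining during a car trip",
    "Dogs whine on a car trip too",
    "Long car trip checklist: oil, tires, maps",
]

# Hypothetical candidate answers and the surface forms that count as a mention.
CANDIDATES = {
    "children": {"kids", "children"},
    "dogs": {"dog", "dogs"},
}


def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def answer(query_stems, documents=DOCUMENTS, candidates=CANDIDATES):
    """Retrieve documents matching every query stem, then vote on answers."""
    votes = Counter()
    for doc in documents:
        tokens = tokenize(doc)
        # A document is a hit if each stem is a prefix of some token,
        # a crude substitute for real stemming ("whin" matches "whining").
        is_hit = all(
            any(tok.startswith(stem) for tok in tokens) for stem in query_stems
        )
        if not is_hit:
            continue
        for label, forms in candidates.items():
            if forms & set(tokens):
                votes[label] += 1
    if not votes:
        return None, 0.0
    best, count = votes.most_common(1)[0]
    # The share of votes serves as a rough confidence estimate.
    return best, count / sum(votes.values())


print(answer(["whin", "car", "trip"]))  # ('children', 0.75)
```

Note that nothing here “knows” what a child or a car trip is; the answer falls out of word counts over the stored text.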

Whereas a human is answering many of these questions based on much more abstract representations, rather than by consulting an internal index of precise words and phrases.

Knowing this, you can understand how Watson made the stupid mistakes it did — like its howler, on the second night, of thinking Toronto was a US city. In that instance, the Final “Jeopardy!” category was “U.S. Cities,” and the clue was: “Its largest airport is named for a World War II hero, its second largest for a World War II battle.” Watson produced the odd response: “What is Toronto??????” — the question marks indicating that it had very low confidence in the response.

How could it choose Toronto in a category named “U.S. Cities”? Because its statistical analysis of prior “Jeopardy!” games told it that the category name was sometimes misleading — and because there is in fact a small US city named “Toronto.”

Of course, any human intelligent enough to succeed at “Jeopardy!” at all would have the common sense to know that if some city has a “largest airport,” it’s not going to be a small town like Toronto, Ohio. But Watson doesn’t work by common sense; it works by brute-force lookup against a large knowledge repository.
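The Toronto slip is easy to reproduce in miniature. In the Python sketch below, the category is treated as one more piece of weighted evidence rather than as a hard rule. The scores are numbers I made up for the example, not Watson’s real ones, and the names `rank`, `respond` and `category_penalty` are hypothetical. (The correct response, for the record, was Chicago: O’Hare is named for a World War II pilot, Midway for the battle.)

```python
# Invented numbers for illustration only; these are not Watson's real scores.
CANDIDATES = [
    # (answer, text-evidence score, fits the "U.S. Cities" category?)
    ("Toronto", 0.30, False),
    ("Chicago", 0.25, True),
]


def rank(candidates, category_penalty):
    """Scale down the evidence of candidates that do not fit the category.

    category_penalty = 1.0 ignores the category entirely;
    category_penalty = 0.0 treats the category as a hard rule.
    """
    scored = []
    for name, evidence, fits_category in candidates:
        score = evidence if fits_category else evidence * category_penalty
        scored.append((score, name))
    scored.sort(reverse=True)
    return scored


def respond(candidates, category_penalty, low_confidence=0.5):
    """Phrase the top candidate as a response, flagging low confidence."""
    score, name = rank(candidates, category_penalty)[0]
    marks = "?????" if score < low_confidence else "?"
    return f"What is {name}{marks}"


print(respond(CANDIDATES, category_penalty=0.9))  # What is Toronto?????
print(respond(CANDIDATES, category_penalty=0.0))  # What is Chicago?????
```

With a soft penalty, a slightly stronger textual match outvotes the category; a human contestant effectively plays with the penalty set to zero.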

Both the Watson strategy and the human strategy are valid ways of playing “Jeopardy!” But the human strategy involves skills that are fairly generalizable to many other sorts of learning (for instance, learning to achieve diverse goals in the physical world), whereas the Watson strategy involves skills that are useful only in domains where the answers to one’s questions already lie in knowledge bases someone else has produced.

The difference is as significant as that between Deep Blue’s approach to chess and Garry Kasparov’s approach. Deep Blue and Watson are specialized and brittle; Kasparov, Jennings and Rutter are flexible, adaptive agents. If you change the rules of chess a bit (say, tweaking it to be Fischer random chess), Deep Blue has got to be reprogrammed a bit, but Kasparov can adapt. If you change the scope of “Jeopardy!” to include different categories of questions, Watson would need to be retrained and retuned on different data sources, but Jennings and Rutter could adapt.

And general intelligence in everyday human environments — or in contexts like doing novel science or engineering — is largely about adaptation, about creative improvisation in the face of the fundamentally unknown, not just about performing effectively within clearly-demarcated sets of rules.

Wolfram on Watson

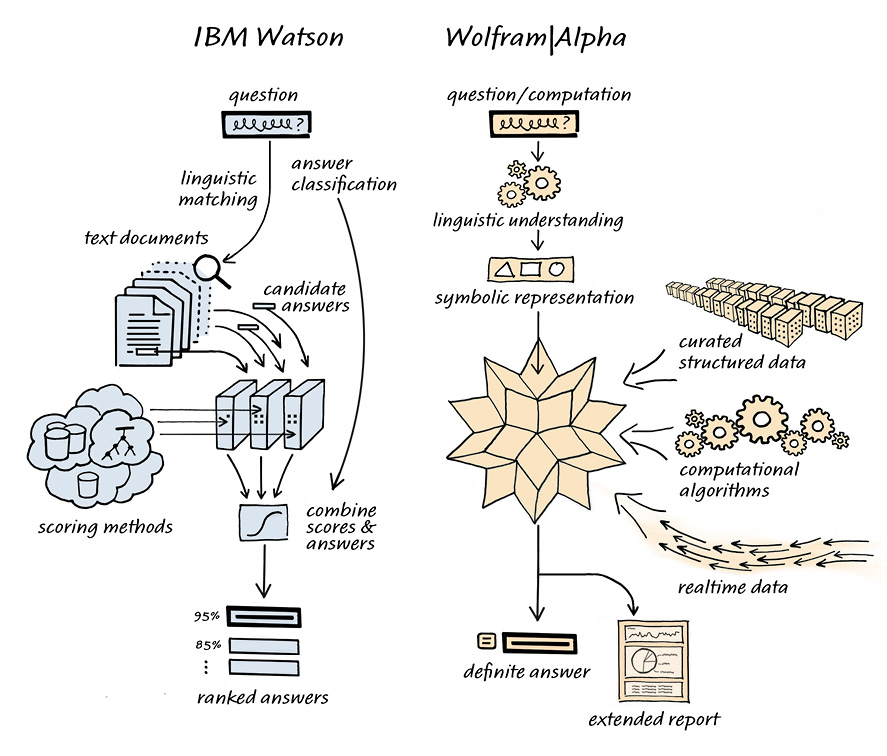

Stephen Wolfram, the inventor of Mathematica and Wolfram Alpha, wrote a very clear and explanatory blog post on Watson recently, containing an elegant diagram contrasting Watson with his own Wolfram Alpha system.

In his article he also gives some interesting statistics on search engines and “Jeopardy!,” showing that a considerable majority of the time, major search engines contain the answers to the “Jeopardy!” questions in the first few pages. Of course, this doesn’t make it trivial to extract the answers from these pages, but it nicely complements the qualitative analysis I gave above where I looked at 5 random “Jeopardy!” questions, and helps give a sense of what’s really going on here.

Neither Watson nor Alpha uses the sort of abstraction and creativity that the human mind does when approaching a game like “Jeopardy!” Both systems use pre-existing knowledge bases filled with precise pre-formulated answers to the questions they encounter. The main difference between these two systems, as Wolfram observes, is that Watson answers questions by matching them against a large database of text containing questions and answers in various phrasings and contexts, whereas Alpha deals with knowledge that has been imported into it in structured, non-textual form, coming from various databases, or explicitly entered by humans.

Kurzweil on Watson

Ray Kurzweil has written glowingly of Watson as an important technology milestone:

“Indeed no human can do what a search engine does, but computers have still not shown an ability to deal with the subtlety and complexity of language. Humans, on the other hand, have been unique in our ability to think in a hierarchical fashion, to understand the elaborate nested structures in language, to put symbols together to form an idea, and then to use a symbol for that idea in yet another such structure. This is what sets humans apart.

“That is, until now. Watson is a stunning example of the growing ability of computers to successfully invade this supposedly unique attribute of human intelligence.”

I understand where Kurzweil is coming from, but nevertheless, this is a fair bit stronger statement than I’d make. As an AI researcher myself I’m quite aware of all the subtlety that goes into “thinking in a hierarchical fashion,” “forming ideas,” and so forth. What Watson does is simply to match question text against large masses of possible answer text, and this is very different from what an AI system will need to do to display human-level general intelligence. Human intelligence has to do with the synergetic combination of many things, including linguistic intelligence but also formal non-linguistic abstraction, non-linguistic learning of habits and procedures, visual and other sensory imagination, creativity of new ideas only indirectly related to anything heard or read before, etc. An architecture like Watson barely scratches the surface!

But Ray Kurzweil knows all this about the subtlety and complexity of human general intelligence, and the limited nature of the “Jeopardy!” domain — so why does Watson excite him so much?

Although Watson is “just” an NLP-based search system, it’s still not a trivial construct. Watson doesn’t just compare query text to potential-answer text, it does some simple generalization and inference, so that it represents and matches text in a somewhat abstracted symbolic form. The technology for this sort of process has been around a long time and is widely used in academic AI projects and even a few commercial products — but, the Watson team seems to have done the detail work to get the extraction and comparison of semantic relations from certain kinds of text working extremely well. I can quite clearly envision how to make a Watson-type system based on the NLP and reasoning software currently working inside our OpenCog AI system — and I can also tell you that this would require a heck of a lot of work, and a fair bit of R&D creativity along the way.

Kurzweil is a master technology trendspotter, and he’s good at identifying which current developments are most indicative of future trends. The technologies underlying Watson aren’t new, and don’t constitute much direct progress toward the grand goals of the AI field. What they do indicate, however, is that the technology for extracting simple symbolic information from certain sorts of text, using a combination of statistics and rules, can currently be refined into something highly functional like Watson, within a reasonably bounded domain.

Granted, it took an IBM team 4 years to perfect this, and granted, “Jeopardy!” is a very narrow slice of life. But still, Watson does suggest that semantic information extraction technology has reached a certain level of maturity. And while Watson’s use of natural language understanding and symbol manipulation technology is extremely narrowly focused, the next similar project may be less so.

Today “Jeopardy!,” Tomorrow the World?

Am I as excited about Watson as Ray Kurzweil’s article suggests? In spite of the excitement I felt at watching Watson’s performance — no, not really. Watson is a fantastic technical achievement, and should also be a publicity milestone roughly comparable to Deep Blue’s chess victory over Kasparov. But question answering doesn’t require human-like general intelligence — unless getting the answers involves improvising in a conceptual space not immediately implied by the available information … which is of course not the case with the “Jeopardy!” questions.

Ray’s response does contain some important lessons, such as the value of paying attention to the maturity levels of technologies, and what the capabilities of existing applications imply about this, even if the applications themselves aren’t so interesting or have obvious limitations. But it’s important to remember the difference between the “Jeopardy!” challenge and other challenges that would be more reminiscent of human-level general intelligence, such as

- Holding a wide-ranging English conversation with an intelligent human for an hour or two

- Passing the third grade, via controlling a robot body attending a regular third grade class

- Getting an online university degree, via interacting with the e-learning software (including social interactions with the other students and teachers) just as a human would do

- Creating a new scientific project and publication, in a self-directed way from start to finish

What these other challenges have in common is that they require intelligent response to a host of situations that are unpredictable in their particulars — so they require adaptation and creative improvisation, to a degree that highly regimented AI architectures like Deep Blue or Watson will never be able to touch.

Some AI researchers believe that this sort of artificial general intelligence will eventually come out of incremental improvements to “narrow AI” systems like Deep Blue, Watson and so forth. Many of us, on the other hand, suspect that Artificial General Intelligence (AGI) is a vastly different animal (and if you want to get a dose of the latter perspective, show up at the AGI-11 conference on Google’s campus in Mountain View this August). In this AGI-focused view, technologies like those used in Watson may ultimately be part of a powerful AGI architecture, but only when harnessed within a framework specifically oriented toward autonomous, adaptive, integrative learning.

But … well … even so, it was pretty damn funky watching an audience full of normal-looking, non-AI-geek people sitting there cheering for the victorious intellectual accomplishments of a computer!

Reprinted with permission from H+ Magazine