When The Speed Of Light Is Too Slow: Trading at the Edge

November 11, 2010 by Thomas McCabe

Modern stock market trading computers have become so fast that the speed of light is now their key limiting factor. A new paper by a physicist and a mathematician explains how traders can take advantage of this ultimate speed limit.

Computers were originally introduced in trading because they respond to market signals faster than people can. A human trader might buy a million shares of Microsoft at $20 a share and sell them the next day at $21, making a million dollars in profit. But suppose a stock trades at $15.67 in New York and $15.68 in London at one moment, and a tenth of a second later the prices have moved to $15.70 in New York and $15.69 in London. No human could react quickly enough to buy the stock in New York and sell it in London before the price gap reversed.
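To make the arithmetic in these two examples concrete, here is a minimal Python sketch. The function name `arbitrage_profit` is hypothetical, and it computes gross profit only. It ignores commissions, fees, and the market impact of trading a million shares.

```python
from decimal import Decimal


def arbitrage_profit(buy_price: str, sell_price: str, shares: int) -> Decimal:
    """Gross profit from buying `shares` at buy_price and selling at sell_price.

    Hypothetical helper for illustration: ignores commissions, fees, and
    market impact. Prices are strings so Decimal keeps exact cents.
    """
    return (Decimal(sell_price) - Decimal(buy_price)) * shares


# The slow trade: buy a million shares at $20, sell the next day at $21.
print(arbitrage_profit("20", "21", 1_000_000))  # 1000000

# The fast trade: buy in New York at $15.67, sell in London at $15.68.
print(arbitrage_profit("15.67", "15.68", 1_000_000))  # 10000.00
```

A one-cent spread is worth $10,000 on a million shares, but only if both legs execute before the prices move, which is why reaction time matters so much.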

High-frequency trading

To solve this problem, traders over the last few years have been building automated high-frequency trading (HFT) systems that compete by making thousands of trades a minute to maximize profit.

But delays in communication over a network can throw a monkey wrench in this scheme. These delays can be caused by many factors, such as slow routing computers, excessive traffic, routes that go through many different computers, and so on. On ordinary networks, like the Internet, these factors add so many delays that the time it takes for a signal to physically traverse fiber-optic cables is only a small fraction of the total latency (delay).

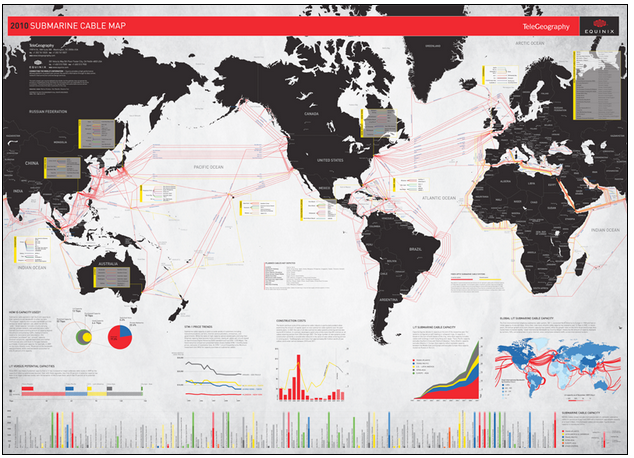

Currently, there are many projects to build faster fiber-optic cables for HFT, including one between Chicago and New York that will shave about 3 milliseconds off the communication time. (These ultra-fast cables are not built for public use; infrastructure companies design them specifically for HFT firms, which pay high prices for bandwidth on the fastest cables.) A number of these cables run underwater, because crossing an ocean directly is faster than making detours over land. For example, there's a project to install a new undersea cable between New York and London.


Because the profit from an HFT trading opportunity generally goes to the first firm to act on it, while other firms get nothing, trading firms are racing to beat one another to the punch with the fastest hardware and the fastest signal cables. Recently, the time it takes to execute these trades has fallen from milliseconds (thousandths of a second) to microseconds (millionths of a second).

Typically, the latency for HFT trades is now below 500 microseconds. Traders in the same city can achieve that by optimizing their computers, network hardware, and software for speed. But for trades between cities, or worse, between continents, the speed of light, not routing or traffic delays, becomes the limiting factor. (For example, light needs at least 66.8 milliseconds, more than 100 times longer than 500 microseconds, to travel along Earth's surface between two points on opposite sides of the planet. That figure assumes light's speed in a vacuum; it doesn't include delays from the electronics or the slower propagation of light through the fiber itself.)
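The 66.8-millisecond figure is half of Earth's circumference divided by the speed of light. The sketch below reproduces it, assuming a spherical Earth with a mean radius of 6,371 km. The fiber line uses an assumed refractive index of about 1.47, a typical value for silica fiber, to show roughly how much slower the same trip is through glass.

```python
import math

C_VACUUM_KM_S = 299_792.458     # speed of light in vacuum, km/s
EARTH_MEAN_RADIUS_KM = 6_371.0  # spherical-Earth assumption
FIBER_INDEX = 1.47              # assumed typical refractive index of silica fiber


def one_way_latency_ms(distance_km, refractive_index=1.0):
    """Minimum one-way propagation delay (ms) over distance_km in a medium
    with the given refractive index (1.0 means vacuum)."""
    return distance_km * refractive_index / C_VACUUM_KM_S * 1_000


# Antipodal points, measured along the surface, are half a great circle apart.
half_circumference_km = math.pi * EARTH_MEAN_RADIUS_KM

vacuum_ms = one_way_latency_ms(half_circumference_km)
fiber_ms = one_way_latency_ms(half_circumference_km, FIBER_INDEX)
hft_latency_ms = 0.5  # the 500-microsecond figure from the text

print(f"vacuum: {vacuum_ms:.1f} ms")  # vacuum: 66.8 ms
print(f"fiber:  {fiber_ms:.1f} ms")
print(f"vacuum delay / 500 us: {vacuum_ms / hft_latency_ms:.0f}x")
```

Real routes are longer than the great circle and add switching delays, so these numbers are lower bounds.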

Overcoming light-speed latency

As trading times go down, this light-speed delay can become important, even between closer pairs of cities, such as London and Paris, where the minimum delay is about 1 millisecond.
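The London-Paris figure can be checked the same way, using the haversine formula for great-circle distance. This is only a sketch: the coordinates are approximate city centers, not actual exchange or data-center locations, and it again assumes a spherical Earth and vacuum light speed.

```python
import math

C_VACUUM_KM_S = 299_792.458     # speed of light in vacuum, km/s
EARTH_MEAN_RADIUS_KM = 6_371.0  # spherical-Earth assumption


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine great-circle distance (km) between two lat/lon points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_MEAN_RADIUS_KM * math.asin(math.sqrt(a))


# Approximate city-center coordinates (illustrative assumption).
LONDON = (51.5074, -0.1278)
PARIS = (48.8566, 2.3522)

d_km = great_circle_km(*LONDON, *PARIS)
latency_ms = d_km / C_VACUUM_KM_S * 1_000
print(f"{d_km:.0f} km -> at least {latency_ms:.2f} ms one way")
```

The result is a little over one millisecond, which is small by human standards but a meaningful share of a sub-500-microsecond trading budget.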

In a paper published in Physical Review E, Dr. Alex Wissner-Gross, a Research Affiliate at the MIT Media Laboratory, and Dr. Cameron Freer, a Junior Researcher at the University of Hawaii, describe an econophysical mathematical model called “relativistic statistical arbitrage” that provides a strategy for this new class of light-speed-limited, long-distance trading. They advise traders to locate their computers at specific points between the two markets, with the locations of these points determined by how fast each market can send pricing information to the trading computers.

“Although there have always been light propagation delays, only in the last few years have transaction times been fast enough for our analysis to be relevant,” Freer explained in a phone call.

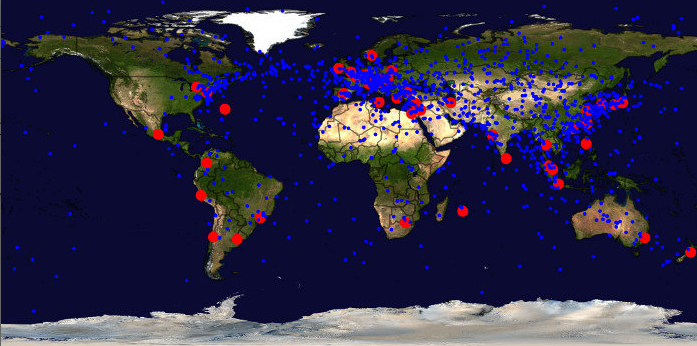

Optimal trading points (blue) between different markets (red). (Image credit: A. D. Wissner-Gross and C. E. Freer)

But simply switching trading to different existing computers is “only an approximation of an optimal scheme,” he added. “As trading times continue to decrease and competition heats up, the quest for maximum profitability may ultimately push trading firms to build new computers farther away from populated areas, to get closer to the exact, optimal points in between markets.”

Freer and Wissner-Gross examined which locations would maximize trading profit, taking these light-speed delays into account. In the simplest version of their analysis, they found that if the pricing information at the two exchanges incorporates new data equally quickly, the best place for a trading computer is at the midpoint between the two exchanges, along a geodesic (great circle).
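For this equal-speed case, the location can be computed directly. One standard way to find the midpoint of a great-circle arc is to add the two locations' 3-D unit vectors, which bisects the angle between them, and convert the sum back to latitude and longitude. The sketch below applies this to approximate coordinates for the New York Stock Exchange and the London Stock Exchange. The coordinates and function names are illustrative assumptions, and the sketch covers only the simplest case described above, not the paper's full model, in which the exchanges' differing information speeds shift the optimal point.

```python
import math

EARTH_MEAN_RADIUS_KM = 6_371.0  # spherical-Earth assumption

# Approximate exchange coordinates (latitude, longitude) in degrees; illustrative only.
NYSE = (40.7069, -74.0113)
LSE = (51.5145, -0.0994)


def to_unit_vector(lat_deg, lon_deg):
    """Convert latitude/longitude in degrees to a 3-D unit vector."""
    lat, lon = math.radians(lat_deg), math.radians(lon_deg)
    return (math.cos(lat) * math.cos(lon), math.cos(lat) * math.sin(lon), math.sin(lat))


def to_lat_lon(v):
    """Convert a (not necessarily unit-length) 3-D vector back to lat/lon in degrees."""
    x, y, z = v
    return math.degrees(math.atan2(z, math.hypot(x, y))), math.degrees(math.atan2(y, x))


def geodesic_midpoint(a, b):
    """Midpoint of the shorter great-circle arc between points a and b.

    Summing the two unit vectors gives a vector along the bisector of the
    angle between them; this fails only for antipodal points (zero sum).
    """
    va, vb = to_unit_vector(*a), to_unit_vector(*b)
    return to_lat_lon(tuple(p + q for p, q in zip(va, vb)))


def great_circle_km(a, b):
    """Great-circle distance (km) from the angle between the two unit vectors."""
    va, vb = to_unit_vector(*a), to_unit_vector(*b)
    dot = sum(p * q for p, q in zip(va, vb))
    return EARTH_MEAN_RADIUS_KM * math.acos(max(-1.0, min(1.0, dot)))


mid = geodesic_midpoint(NYSE, LSE)
print(f"midpoint: {mid[0]:.1f} lat, {mid[1]:.1f} lon")
print(f"to NYSE: {great_circle_km(mid, NYSE):.0f} km, to LSE: {great_circle_km(mid, LSE):.0f} km")
```

The computed point lands in the North Atlantic, equidistant from both exchanges, which matches the mid-ocean placement described in the article.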

However, the locations directly between major markets are often in remote places, such as the Arctic, the middle of an ocean, or hundreds of miles from the nearest major city. (The map above shows the locations of major international markets, in red, and the places where trading computers should be positioned to trade between these markets with maximum profitability, in blue.)

For instance, a trader wanting to exploit price differences between the New York Stock Exchange and the London Stock Exchange might want to place a computer in the middle of the Atlantic, because it could execute trades based on information from both exchanges more quickly than a computer based in either America or England.
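The advantage of the mid-Atlantic site can be seen in a simplified one-dimensional version of the space-time picture. Place New York at position 0 and London at distance D along the great circle, and suppose both exchanges publish a price update at the same instant. A computer at position x cannot act on both updates until light from the farther exchange arrives, at time max(x, D - x)/c. The sketch assumes D of about 5,570 km (an approximate New York-London great-circle distance) and vacuum light speed; real fiber links are slower and never follow the geodesic exactly. The function names are hypothetical.

```python
C_KM_PER_MS = 299_792.458 / 1_000  # light in vacuum travels about 299.8 km per millisecond
D_KM = 5_570.0  # assumed approximate New York-London great-circle distance, km

# One-dimensional space-time model: New York at x = 0, London at x = D_KM,
# positions in km along the great circle, times in milliseconds.


def in_past_light_cone(node_x, node_t, event_x, event_t):
    """True if a light-speed signal from (event_x, event_t) can reach node_x by node_t."""
    return node_t - event_t >= abs(node_x - event_x) / C_KM_PER_MS


def time_to_see_both(node_x, d_km=D_KM):
    """Earliest time (ms) a node at node_x can have received updates that
    both exchanges published at t = 0."""
    return max(abs(node_x), abs(d_km - node_x)) / C_KM_PER_MS


for label, x in [("New York", 0.0), ("midpoint", D_KM / 2), ("London", D_KM)]:
    print(f"{label:>9}: {time_to_see_both(x):.1f} ms")
# prints 18.6 ms for New York, 9.3 ms for the midpoint, 18.6 ms for London

# A node in New York still cannot see London's t = 0 update at t = 9.3 ms.
print(in_past_light_cone(0.0, 9.3, D_KM, 0.0))  # False

# Scanning 101 evenly spaced positions: the worst-case delay is smallest at the midpoint.
best_x = min((D_KM * k / 100 for k in range(101)), key=time_to_see_both)
print(best_x)  # 2785.0
```

In this toy model the midpoint halves the wait compared with either city, which is the intuition behind placing trading computers between exchanges.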

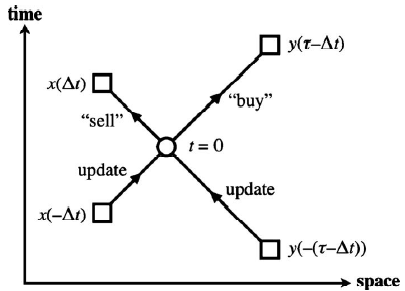

Space-time diagram of a geographically separated trade. (Image credit: A. D. Wissner-Gross and C. E. Freer/Phys. Rev. E)

Smart planet

“We’re currently in talks with financial firms about licensing our technique,” Wissner-Gross said. Initially, these firms will probably want to just switch some of their trading systems to computers that have already been set up in better locations, to take advantage of this new research as fast as possible, he notes. “The scheme we describe in this paper can, in principle, be executed today, without the deployment of any new hardware,” just by moving operations to different computers.

During the dot-com boom, such infrastructure would have been impractically expensive relative to its benefits, because light-speed delays were unimportant compared with other kinds of delay. Now, however, the importance of executing trades on millisecond timescales makes such a project increasingly commercially viable.

“Eventually, this may lead to the development of a truly global computing infrastructure, covering even the most remote locales,” Wissner-Gross adds. “I see this work as one possible justification for making the entire surface of the planet more computationally capable… and in effect, making the whole planet smarter.”
