A ‘visual Turing test’ of computer ‘understanding’ of images

March 12, 2015

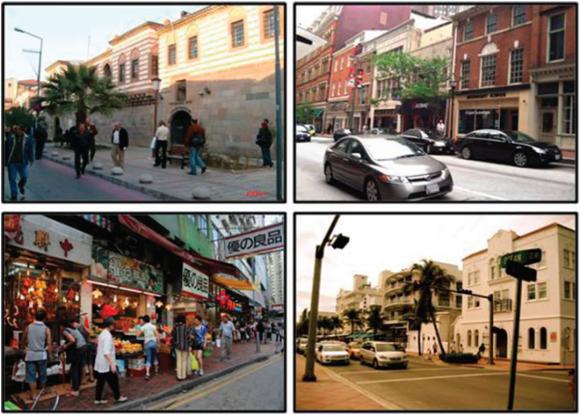

Athens, Baltimore, Hong Kong, Miami. What are those people doing? A new evaluation method measures a computer’s ability to decipher movements, relationships, and implied intent from images by asking questions (credit: Wikimedia Commons/Brown University)

Researchers from Brown and Johns Hopkins universities have devised a new way to evaluate how well computers can “understand” the relationships between objects in photographs, videos, and other images, and the activities those relationships imply, rather than merely recognize the objects. They describe it as a “visual Turing test.”

Traditional computer-vision benchmarks tend to measure how well an algorithm detects objects within an image (the image contains a tree, a car, or a person) or how well a system identifies an image’s global attributes (the scene is outdoors, or at night).

“We think it’s time to think about how to do something deeper — something more at the level of human understanding of an image,” said Stuart Geman, the James Manning Professor of Applied Mathematics at Brown.

For example, recognizing that an image shows two people walking together and having a conversation is a much deeper understanding than just recognizing the people.

The system Geman and his colleagues developed, described this week in the Proceedings of the National Academy of Sciences, is designed to test for such a contextual understanding of photos.

It works by generating a string of yes-or-no questions about an image, which are posed one at a time to the system being tested. Each question probes progressively deeper and is based on the correct answers to the questions that came before.

(credit: Wikimedia Commons/Brown University)

1. Q: Is there a person in the blue region? A: yes

2. Q: Is there a unique person in the blue region? A: yes

(Label this person 1)

3. Q: Is person 1 carrying something? A: yes

4. Q: Is person 1 female? A: yes

5. Q: Is person 1 walking on a sidewalk? A: yes

6. Q: Is person 1 interacting with any other object? A: no

For example, an initial question might ask whether there’s a person in a given region of a photo. If the answer is yes, the test might then ask whether there’s anything else in that region, perhaps another person. If there are two people, the test might ask: “Are person 1 and person 2 talking?”
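To make the question-and-answer protocol concrete, here is a minimal Python sketch of how a pre-truthed question sequence might be administered, following the order described in the paper’s abstract: pose a question, record the system’s yes/no answer, then reveal the correct answer before the next question. The names `administer_test`, `History`, and `System` are hypothetical, and scoring by simple accuracy is an assumption made for illustration, not the researchers’ metric.

```python
from typing import Callable, List, Tuple

# A revealed (question, correct answer) pair; the history is the list so far.
History = List[Tuple[str, bool]]
# A system under test (hypothetical interface): (question, history) -> yes/no.
System = Callable[[str, History], bool]


def administer_test(script: History, system: System) -> float:
    """Pose each pre-truthed question in order, record the system's answer,
    then reveal the correct answer before moving on. Returns accuracy
    (an illustrative scoring choice)."""
    history: History = []
    correct = 0
    for question, truth in script:
        answer = system(question, history)  # the system sees only earlier pairs
        correct += int(answer == truth)
        history.append((question, truth))   # reveal the correct answer
    return correct / len(script) if script else 0.0


# The six example questions and answers shown earlier in the article.
EXAMPLE_SCRIPT: History = [
    ("Is there a person in the blue region?", True),
    ("Is there a unique person in the blue region?", True),
    ("Is person 1 carrying something?", True),
    ("Is person 1 female?", True),
    ("Is person 1 walking on a sidewalk?", True),
    ("Is person 1 interacting with any other object?", False),
]

if __name__ == "__main__":
    def always_yes(question: str, history: History) -> bool:
        return True

    # 5 of the 6 answers are "yes", so this trivial system scores 5/6.
    print(administer_test(EXAMPLE_SCRIPT, always_yes))
```

Run against the six example questions, a system that always answers yes gets 5 out of 6 right without looking at the image at all, which illustrates why, according to the abstract, the query engine favors questions whose answers are close to a coin flip.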

As a group, the questions are geared toward gauging the computer’s understanding of the contextual “storyline” of the photo. “You can build this notion of a storyline about an image by the order in which the questions are explored,” Geman said.

Because the questions are computer-generated, the system is more objective than having a human simply quiz a computer about an image. A human operator still has a role, however: flagging questions that are unanswerable because the photo is ambiguous. For instance, whether a person in a photo is carrying something is unanswerable if most of the person’s body is hidden by another object, so the operator would reject that question as ambiguous.
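The operator’s role can be sketched as the loop the abstract calls “just-in-time truthing”: the query engine proposes a question, the operator either answers it or rejects it as ambiguous, and the engine proposes the next one. In this hypothetical Python version, the engine and operator are plain callables, `None` stands for “ambiguous,” and the toy candidate list and `first_unused` engine are illustrative stand-ins, not the researchers’ query engine.

```python
from typing import Callable, List, Optional, Set, Tuple

History = List[Tuple[str, bool]]
# Hypothetical query engine: proposes the next question given the truthed
# history and the questions already rejected, or returns None to stop.
Engine = Callable[[History, Set[str]], Optional[str]]
# Stand-in for the human operator: True/False, or None for "ambiguous".
Operator = Callable[[str], Optional[bool]]


def just_in_time_truthing(engine: Engine, operator: Operator,
                          max_questions: int,
                          max_proposals: int = 100) -> History:
    """Build a test script one question at a time. Rejected questions are
    remembered so the engine can avoid them; they never enter the script."""
    history: History = []
    rejected: Set[str] = set()
    for _ in range(max_proposals):           # hard cap on proposals
        if len(history) >= max_questions:
            break
        question = engine(history, rejected)
        if question is None:
            break
        answer = operator(question)
        if answer is None:
            rejected.add(question)            # flagged as ambiguous
        else:
            history.append((question, answer))
    return history


# Toy example: person 1 is mostly hidden, so "carrying" cannot be answered.
CANDIDATES = [
    "Is there a person in the region?",
    "Is person 1 carrying something?",
    "Is person 1 walking on a sidewalk?",
]
OPERATOR_ANSWERS = {CANDIDATES[0]: True, CANDIDATES[1]: None, CANDIDATES[2]: True}


def first_unused(history: History, rejected: Set[str]) -> Optional[str]:
    """Trivial engine: the first candidate neither asked nor rejected."""
    asked = {q for q, _ in history}
    return next((q for q in CANDIDATES if q not in asked and q not in rejected), None)


script = just_in_time_truthing(first_unused, OPERATOR_ANSWERS.get, max_questions=5)
# script == [(CANDIDATES[0], True), (CANDIDATES[2], True)]
```

The occluded-person question is rejected, so the resulting script contains only the two questions the operator could answer. The `max_proposals` cap is a defensive choice so that an engine that keeps re-proposing rejected questions cannot loop forever.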

The first version of the test was built from a set of photos depicting urban street scenes, but the researchers say the concept could conceivably be extended to all kinds of photos.

Geman and his colleagues hope this new test might spur computer vision researchers to explore new ways of teaching computers how to look at images. Most current computer vision algorithms are taught how to look at images using training sets in which objects are annotated by humans.

By looking at millions of annotated images, the algorithms eventually learn to identify objects. But it would be very difficult to build a training set in which every possible contextual attribute of a photo is annotated, so genuine contextual understanding may require new machine learning techniques.

“As researchers, we tend to ‘teach to the test,’” Geman said. “If there are certain contests that everybody’s entering and those are the measures of success, then that’s what we focus on. So it might be wise to change the test, to put it just out of reach of current vision systems.”

Abstract of Visual Turing test for computer vision systems

Today, computer vision systems are tested by their accuracy in detecting and localizing instances of objects. As an alternative, and motivated by the ability of humans to provide far richer descriptions and even tell a story about an image, we construct a “visual Turing test”: an operator-assisted device that produces a stochastic sequence of binary questions from a given test image. The query engine proposes a question; the operator either provides the correct answer or rejects the question as ambiguous; the engine proposes the next question (“just-in-time truthing”). The test is then administered to the computer-vision system, one question at a time. After the system’s answer is recorded, the system is provided the correct answer and the next question. Parsing is trivial and deterministic; the system being tested requires no natural language processing. The query engine employs statistical constraints, learned from a training set, to produce questions with essentially unpredictable answers—the answer to a question, given the history of questions and their correct answers, is nearly equally likely to be positive or negative. In this sense, the test is only about vision. The system is designed to produce streams of questions that follow natural story lines, from the instantiation of a unique object, through an exploration of its properties, and on to its relationships with other uniquely instantiated objects.
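The abstract’s key statistical idea, that each question’s answer should be nearly equally likely to be yes or no given the history so far, can be illustrated with a simple empirical stand-in. The Python sketch below assumes a hypothetical training set in which each image is annotated as a dictionary from question to answer. It estimates P(yes) from the annotated images that agree with the history, then picks at random among unasked questions whose estimate lies within a tolerance `tol` of 0.5. This frequency count is an assumption made for illustration, not the paper’s model.

```python
import random
from typing import Dict, List, Optional, Tuple

# Hypothetical training annotation: question -> correct answer for one image.
Annotation = Dict[str, bool]
History = List[Tuple[str, bool]]


def p_yes(question: str, history: History,
          training: List[Annotation]) -> Optional[float]:
    """Fraction of 'yes' answers to `question` among training images that
    are annotated for it and agree with every answer in `history`."""
    consistent = [
        a for a in training
        if question in a and all(a.get(q) == t for q, t in history)
    ]
    if not consistent:
        return None  # no evidence either way
    return sum(a[question] for a in consistent) / len(consistent)


def choose_question(candidates: List[str], history: History,
                    training: List[Annotation], tol: float = 0.1,
                    rng: Optional[random.Random] = None) -> Optional[str]:
    """Pick, at random, an unasked question whose estimated answer is
    close to a coin flip; return None if no candidate qualifies."""
    asked = {q for q, _ in history}
    pool = []
    for q in candidates:
        if q in asked:
            continue
        p = p_yes(q, history, training)
        if p is not None and abs(p - 0.5) <= tol:
            pool.append(q)
    if not pool:
        return None
    return (rng or random).choice(pool)


# Toy annotations for six training images.
TRAINING: List[Annotation] = [
    {"person": True, "carrying": True},
    {"person": True, "carrying": True},
    {"person": True, "carrying": False},
    {"person": False},
    {"person": False},
    {"person": False},
]

if __name__ == "__main__":
    print(choose_question(["person", "carrying"], [], TRAINING))  # person
```

With the toy annotations, “person” splits 3 to 3 and is selectable, while “carrying” is yes in 2 of the 3 relevant images and falls outside the default tolerance. A question whose answer is predictable from the history alone tells the test little about whether the system actually looked at the image.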