Bee model could be breakthrough for autonomous drone development

May 5, 2016

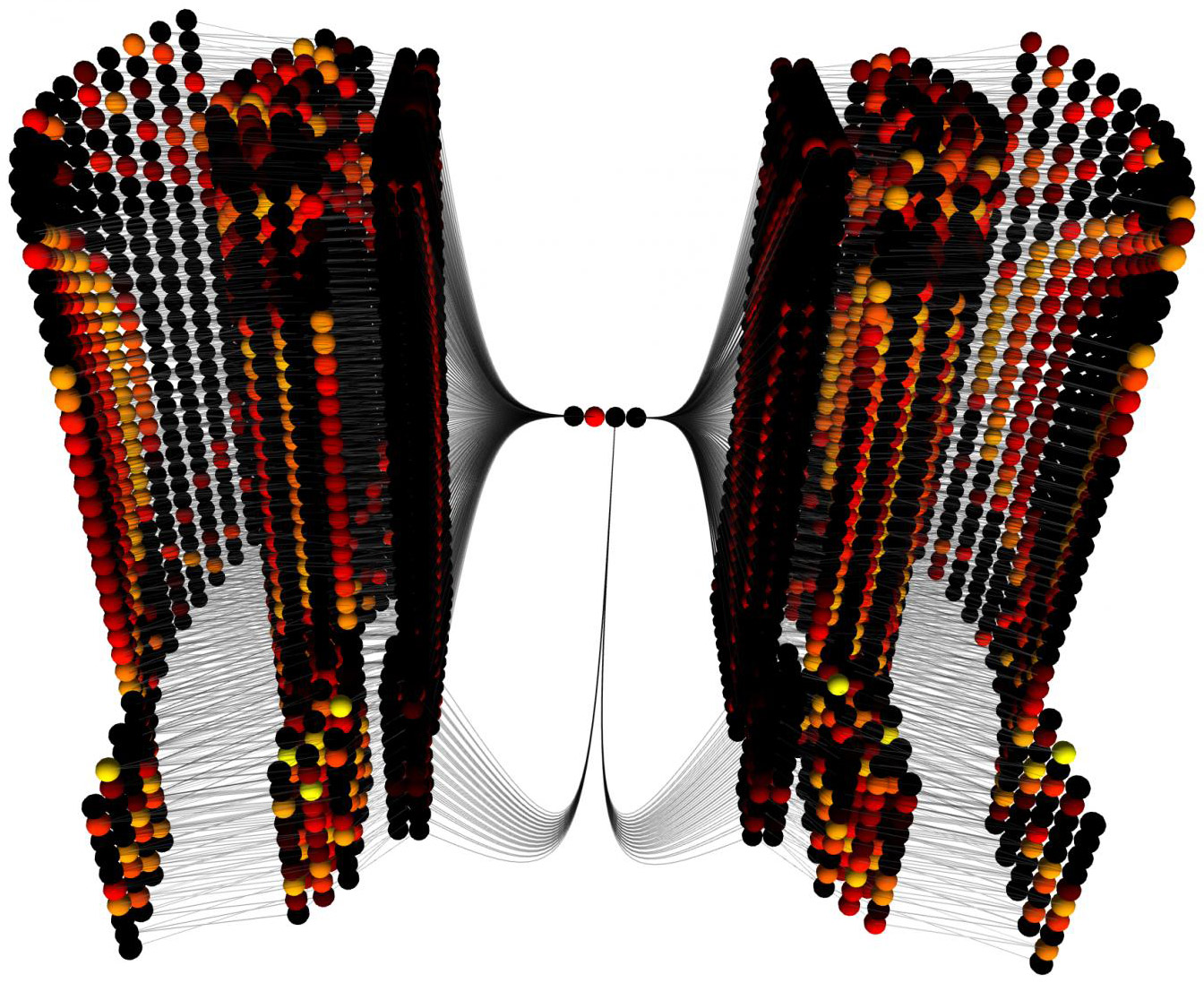

A visualization of the model taken at one time point while running. Each sphere represents a computational unit, with lines representing the connections between units. The colors represent the output of each unit. The left and right of the image are the inputs to the model and the center is the output, which is used to guide the virtual bee down a simulated corridor. (credit: The University of Sheffield)

A computer model of how bees use vision to avoid hitting walls could be a breakthrough in the development of autonomous drones.

Bees control their flight using the speed of motion (optic flow) of the visual world around them. A study by scientists at the University of Sheffield Department of Computer Science suggests how circuits that detect the direction of motion could be wired together to also detect the speed of motion, which is crucial for controlling bees’ flight.

“Honeybees are excellent navigators and explorers, using vision extensively in these tasks, despite having a brain of only one million neurons,” said Alex Cope, PhD, lead researcher on the paper. “Understanding how bees avoid walls, and what information they can use to navigate, moves us closer to the development of efficient algorithms for navigation and routing, which would greatly enhance the performance of autonomous flying robotics,” he added.

“Experimental evidence shows that they use an estimate of the speed that patterns move across their compound eyes (angular velocity) to control their behavior and avoid obstacles; however, the brain circuitry used to extract this information is not understood,” the researchers note. “We have created a model that uses a small number of assumptions to demonstrate a plausible set of circuitry. Since bees only extract an estimate of angular velocity, they show differences from the expected behavior for perfect angular velocity detection, and our model reproduces these differences.”

Their open-access paper is published in PLOS Computational Biology.

Abstract of A Model for an Angular Velocity-Tuned Motion Detector Accounting for Deviations in the Corridor-Centering Response of the Bee

We present a novel neurally based model for estimating angular velocity (AV) in the bee brain, capable of quantitatively reproducing experimental observations of visual odometry and corridor-centering in free-flying honeybees, including previously unaccounted for manipulations of behaviour. The model is fitted using electrophysiological data, and tested using behavioural data. Based on our model we suggest that the AV response can be considered as an evolutionary extension to the optomotor response. The detector is tested behaviourally in silico with the corridor-centering paradigm, where bees navigate down a corridor with gratings (square wave or sinusoidal) on the walls. When combined with an existing flight control algorithm the detector reproduces the invariance of the average flight path to the spatial frequency and contrast of the gratings, including deviations from perfect centering behaviour as found in the real bee’s behaviour. In addition, the summed response of the detector to a unit distance movement along the corridor is constant for a large range of grating spatial frequencies, demonstrating that the detector can be used as a visual odometer.
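
The corridor-centering idea in the abstract can be illustrated with a toy simulation. The sketch below is not the researchers' neural model or their flight control algorithm. It assumes an idealized detector that reports the true angular velocity of the wall directly beside the flyer (forward speed divided by lateral distance to the wall), and a simple proportional steering rule. The function names, corridor width, speed, and gain are all hypothetical values chosen for illustration.

```python
def angular_velocity(forward_speed, wall_distance):
    """Idealized angular velocity (rad/s) of a wall point directly beside the flyer.

    Assumption: a perfect detector. The real bee, and the published model,
    only estimate this quantity.
    """
    return forward_speed / wall_distance


def simulate_centering(width=0.2, start=0.05, speed=0.3,
                       gain=0.01, dt=0.01, steps=2000):
    """Return the final distance from the left wall after steering.

    The flyer moves away from whichever wall produces the faster image
    motion, which is the nearer wall, until the two sides balance.
    """
    y = start  # lateral distance from the left wall, in metres
    for _ in range(steps):
        left = angular_velocity(speed, y)
        right = angular_velocity(speed, width - y)
        # Faster motion on the left pushes the flyer to the right, and vice versa.
        y += gain * (left - right) * dt
        # Keep the flyer strictly inside the corridor.
        y = min(max(y, 1e-3), width - 1e-3)
    return y


if __name__ == "__main__":
    final = simulate_centering()
    print(f"final lateral position: {final:.4f} m (corridor midline at 0.1000 m)")
```

With a perfect detector the flyer settles on the midline regardless of wall pattern. The deviations from perfect centering that the paper reports arise because the bee's estimate of angular velocity is imperfect, which this sketch deliberately leaves out.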