A tactile glove provides subtle guidance to locate objects

October 11, 2012

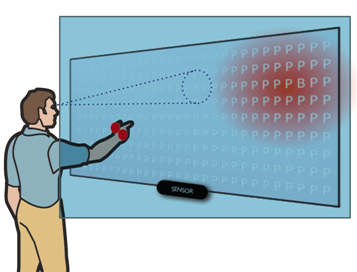

A user searches for an item in a large scene (here, the letter “B” among several “P”s). Vibrotactile stimulus “pulls” the hand toward a subregion (the red area) while the hand position (circle) is projected on the screen. (Credit: Ville Lehtinen et al., Helsinki Institute for Information Technology HIIT)

Researchers from the University of Helsinki Institute for Information Technology (HIIT) and the Max Planck Institute for Informatics have developed a prototype glove that uses vibration feedback to guide the user’s hand toward a predetermined target in 3D space.

The glove could help users in everyday visual search tasks in supermarkets, parking lots, warehouses, libraries, and similar settings.

Their study shows an almost three-fold advantage in finding objects in complex visual scenes, such as library or supermarket shelves.

Finding an object in a complex real-world scene is a common yet time-consuming and frustrating chore. What makes this task hard is that human pattern recognition degrades to a serial, one-by-one search when the items resemble each other.

“The advantage of steering a hand with tactile cues is that the user can easily interpret them in relation to the current field of view where the visual search is operating. This provides a very intuitive experience, like the hand being ‘pulled’ toward the target,” said chief researcher Ville Lehtinen of HIIT.

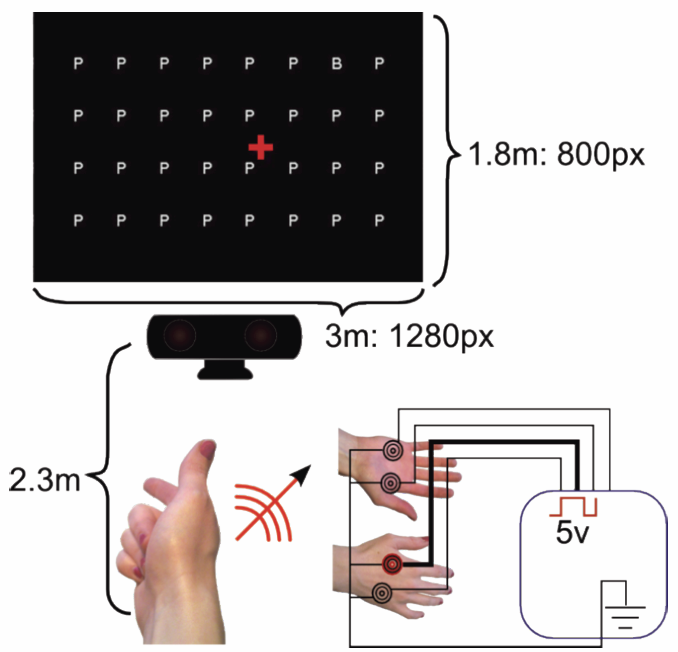

System overview. Left: Tracking the pointing arm with a user-facing computer vision sensor and projecting it as a cursor (+) on a wall-sized canvas. Right: Positioning of four vibrotactile actuators on the palmar and dorsal aspects of the hand. The radial actuator is active to direct the hand from the cursor position toward the upper right. (Credit: Ville Lehtinen et al., Helsinki Institute for Information Technology HIIT)

The solution builds on inexpensive off-the-shelf components such as four vibrotactile actuators on a simple glove and a Microsoft Kinect sensor for tracking the user’s hand. The researchers published a dynamic guidance algorithm that calculates effective actuation patterns based on distance and direction to the target.
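The article does not spell out the published algorithm, but the general idea of turning distance and direction into actuation can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the function name `guidance`, the one-actuator-per-screen-direction layout, the normalized 2D coordinates, and the rule that vibration strength grows with distance are hypothetical and are not taken from the researchers' work.

```python
import math

# Hypothetical layout: one actuator per screen direction, each with a unit
# "pull" vector. This is NOT the mapping used by the researchers.
ACTUATORS = {
    "right": (1.0, 0.0),
    "up": (0.0, 1.0),
    "left": (-1.0, 0.0),
    "down": (0.0, -1.0),
}


def guidance(cursor, target, arrive_radius=0.05, max_distance=1.0):
    """Return a vibration intensity in [0, 1] for each actuator.

    cursor, target: (x, y) positions in normalized screen coordinates.
    arrive_radius:  assumed distance below which all actuators are silent.
    max_distance:   assumed distance at which intensity saturates.
    """
    dx = target[0] - cursor[0]
    dy = target[1] - cursor[1]
    distance = math.hypot(dx, dy)

    # Close enough to the target: stop vibrating.
    if distance <= arrive_radius:
        return {name: 0.0 for name in ACTUATORS}

    # Unit vector pointing from the cursor toward the target.
    ux, uy = dx / distance, dy / distance

    # Assumed rule: stronger vibration when farther away, capped at 1.0.
    strength = min(distance, max_distance) / max_distance

    # Each actuator fires in proportion to how well its pull direction
    # aligns with the direction to the target (negative alignment -> off).
    return {
        name: strength * max(0.0, ux * ax + uy * ay)
        for name, (ax, ay) in ACTUATORS.items()
    }
```

In this sketch a target directly to the right drives only the `right` actuator, while a target toward the upper right splits the vibration between `right` and `up`. A real system would feed the cursor position from the hand tracker on every frame and would likely tune the distance rule empirically.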

In a controlled experiment, the complexity of the visual search task was increased by adding distractors to a scene. “In search tasks where there were hundreds of candidates but only one correct target, users wearing the glove were consistently faster, with up to three times faster performance than without the glove,” said Dr. Antti Oulasvirta of the Max Planck Institute for Informatics.

For instance, warehouse workers could have gloves that guide them to target shelves.