essays | celebrating: 15 year anniversary of the book the Singularity Is Near

January 1, 2020

— contents —

~ welcome

~ bibliography

~ summary

— welcome —

Dear readers,

Year 2020 celebrates the 15th anniversary of the acclaimed book the Singularity Is Near written by Ray Kurzweil — best-selling author, inventor, and futurist. The book was published September 2005.

In the time since its publication, society has witnessed a whirlwind of breakthroughs: genetic engineering, autonomous robots, extreme computation, renewable energy. Advanced sensor arrays and internet meshes are uniting all people + things in the connected habitats we live in, and with each other. Today’s massively scaled knowledge — and shared human experience — will take us to the future.

15 years later, this classic book is still relevant. Ray is currently completing his new book the Singularity Is Nearer — debuting in year 2022.

— library editor

bibliography | books by Ray Kurzweil

— non-fiction —

- no. 1 — year: 1990 • the Age of Intelligent Machines

- no. 2 — year: 1992 • 10% Solution for a Healthy Life — how to eliminate virtually all risk of heart disease + cancer

- no. 3 — year: 1999 • the Age of Spiritual Machines — when computers exceed human intelligence

- no. 4 — year: 2004 • Fantastic Voyage — live long enough to live forever, the science behind radical life extension

- no. 5 — year: 2005 • the Singularity Is Near — when humans transcend biology

- no. 6 — year: 2009 • Transcend — 9 steps to living well forever

- no. 7 — year: 2012 • How to Create a Mind — the secret of human thought revealed

— novel —

- no. 8 — year: 2019 • Danielle — Chronicles of a Superheroine

Includes 2 non-fiction companion books.


— summary —

The Singularity Is Near presents the next stage of Ray Kurzweil’s compelling view of the future — the merger of humans + machines. He refers to this period as a singularity, when the pace of technological change is so rapid, and its impact so deep, that human life transforms.

Kurzweil explains we’re already in the early stages of this transition. And within a few decades, life as we know it will be completely different. The book has sold 255,000 copies, and is printed in 17 languages — spotlighting a growing mainstream + international interest in humanity’s future.

Kurzweil writes:

“The singularity will be a merger of our bodies + minds with our technology. The world will still be human, but transcend our biology’s roots. There will be no distinction between human and machine — nor between physical and virtual reality. If you wonder what will remain unequivocally human, it’s this quality — our species inherently seeks to extend its physical and mental reach beyond current limitations.”

book title: the Singularity Is Near

deck: When humans transcend biology

author: by Ray Kurzweil

date: 2005

— special essay selection —

writings: 3 essays on the technological future

author: by Ray Kurzweil

occasion: 15 year anniversary

for the book: the Singularity Is Near

For the 15 year anniversary — author Ray Kurzweil selected 3 of his essays below, touching on these topics:

- How does the human brain + mind work?

- What will it take to probe its mysteries?

- Why do science + tech breakthroughs seem to come out of nowhere?

- How are people caught off guard by change?

The essay set is a journey through themes presented in the Singularity Is Near — for your interest + curiosity, and to spark conversation about humanity’s accelerating relationship with tech.

— table of contents —

1. essay | Who am I — What am I

2. essay | We are entering the singularity

3. essay | Exponential technological progress in the 21st century

4. supplement | math — from the law of accelerating returns

essay | no. 1

essay title: Who am I — What am I

author: by Ray Kurzweil

— introduction —

Perhaps I am this stuff here, that is, the ordered and chaotic collection of molecules that comprise my body + brain.

But there’s a problem. The specific set of particles that comprise my body and brain are completely different from the atoms and molecules that comprised me only a short while ago — on the order of weeks. We know that most of our cells are turned over in a matter of weeks. Even those that persist longer, like neurons, nonetheless change their component molecules in a matter of weeks.

I’m a completely different set of stuff than I was a month ago.

So I am a completely different set of stuff than I was a month ago. All that persists is the pattern of organization of that stuff. The pattern changes also, but slowly and in a continuum from my past self. From this perspective I am rather like the pattern that water makes in a stream as it rushes past the rocks in its path. The actual molecules (of water) change every millisecond, but the pattern persists for hours or even years.

So, perhaps we should say I am a pattern of matter and energy that persists in time.

But there is a problem here as well. We will ultimately be able to scan and copy this pattern in at least sufficient detail to replicate my body and brain to a sufficiently high degree of accuracy such that the copy is indistinguishable from the original — that is, the copy could pass a “Ray Kurzweil” Turing test. I won’t repeat all the arguments for this here, but I describe this scenario in a number of documents including the essay “the Law of Accelerating Returns.”

The copy, therefore, will share my pattern. One might counter that we may not get every detail correct. But if that is true, then such an attempt would not constitute a proper copy. As time goes on, our ability to create a neural and body copy will increase in resolution and accuracy at the same exponential pace that pertains to all information based technologies.

We ultimately will be able to capture and recreate my pattern of salient neural and physical details to any desired degree of accuracy. Although the copy shares my pattern, it would be hard to say that the copy is me — because I would, or could, still be here. You could even scan and copy me while I was sleeping.

If you come to me in the morning and say, “Good news, Ray, we’ve successfully re-instantiated you into a more durable substrate, so we won’t be needing your old body and brain anymore,” I may beg to differ.

If you do the thought experiment, it’s clear that the copy may look and act just like me, but it’s nonetheless not me because I may not even know that he was created. Although he would have all my memories and recall having been me, from the point in time of his creation, Ray 2 would have his own unique experiences and his reality would begin to diverge from mine.

Let’s pursue this train of thought a bit further.

Now let’s pursue this train of thought a bit further — and you will see where the dilemma comes in. If we copy me, and then destroy the original, then that’s the end of me because as we concluded above the copy is not me.

Since the copy will do a convincing job of impersonating me, no one may know the difference, but it’s nonetheless the end of me.

However, this scenario is entirely equivalent to one in which I am replaced gradually. In the case of gradual replacement, there is no simultaneous old me and new me.

But at the end of the gradual replacement process, you have the equivalent of the new me, and no old me. So gradual replacement also means the end of me.

However, as I pointed out at the beginning of this question, it is the case that I am in fact being continually replaced. And, by the way, it’s not so gradual, but a rather rapid process. As we concluded, all that persists is my pattern.

But the thought experiment above shows that gradual replacement means the end of me even if my pattern is preserved. So am I constantly being replaced by someone else who just seems a lot like me a few moments earlier?

So, again, who am I? It’s the ultimate ontological question. We often refer to this question as the issue of consciousness. I have consciously (no pun intended) phrased the issue entirely in the first person because that is the nature of the issue. It is not a third person question. So my question is not “Who is John Doe?” although John Doe may ask this question himself.

When people speak of consciousness, they often slip into issues of behavioral and neurological correlates of consciousness — like whether or not an entity can be self-reflective. But these are third person, objective issues, and do not represent what philosopher and cognitive scientist David Chalmers, PhD calls the “hard question” of consciousness. Chalmers specializes in the area of philosophy of mind and philosophy of language.

The question of whether or not an entity is conscious is only apparent to himself. The difference between neurological correlates of consciousness — that is, intelligent behavior — and the ontological reality of consciousness is the difference between objective (third person) and subjective (first person) reality. For this reason, we are unable to propose an objective consciousness detector that does not have philosophical assumptions built into it.

Well, you see the problem.

I do say that we humans will come to accept that non-biological entities are conscious because ultimately they will have all the subtle cues that humans currently possess that we associate with emotional and other subjective experiences. But that’s a political and psychological prediction, not an observation that we will be able to scientifically verify. We do assume that other humans are conscious, but this is an assumption, and not something we can objectively demonstrate.

I will acknowledge that people seem conscious to me, but I should not be too quick to accept this impression. Perhaps I am really living in a simulation, and other people are part of the simulation. Or, perhaps it’s only my memories that exist, and the actual experience never took place. Or maybe I am only now experiencing the sensation of recalling apparent memories of having met a person, but neither the experience nor the memories really exist. Well, you see the problem.

— end —


essay | no. 2

essay title: We are entering the singularity

author: by Ray Kurzweil

— introduction —

My interest in the future really stems from my interest in being an inventor. I had the idea of being an inventor when I was 5 years old, and I quickly realized you had to have a good idea of the future, if you’re going to succeed. It’s like surfing, you have to catch a wave at the right time.

I noticed that by the time you finally get something done, the world has become a different place than it was when you started. Most inventors fail not because they can’t get something to work, but because the market’s enabling forces are not all in place at the right time.

My interest in the future.

So I became a student of technology trends, and have developed mathematical models about how technology evolves in different areas like computers, electronics in general, communication storage devices, biological technologies like genetic scanning, reverse engineering of the human brain, miniaturization, the size of technology, and the pace of paradigm shifts. This helped guide me as an entrepreneur and as a technology creator so that I could catch the wave at the right time.

This interest in technology trends took on a life of its own, and I began to project some of them into future periods using what I call the law of accelerating returns, which I believe underlies technology evolution. I did that in a book I wrote in the 1980s, which had a road map of what the 1990s and the early 2000s would be like, and that worked out quite well. I’ve now refined these mathematical models, and have begun to really examine what the 21st century would be like.

It allows me to be inventive with the technologies of the 21st century, because I have a conception of what technology, communications, the size of technology, and our knowledge of the human brain will be like in 2010, 2020, or 2030. If I can come up with scenarios using those technologies, I can be inventive with the technologies of the future. I can’t actually create these technologies yet, but I can write about them.

One thing I’d say is that if anything the future will be more remarkable than any of us can imagine, because although any of us can only apply so much imagination, there’ll be thousands or millions of people using their imaginations to create new capabilities with these future technology powers. I’ve come to a view of the future that really doesn’t stem from a preconceived notion, but really falls out of these models, which I believe are valid both for theoretical reasons and because they also match the empirical data of the 20th century.

The pace of change itself has accelerated.

One thing that observers don’t fully recognize, and that a lot of otherwise thoughtful people fail to take into consideration adequately, is the fact that the pace of change itself has accelerated. Centuries ago people didn’t think that the world was changing at all. Their grandparents had the same lives that they did, and they expected their grandchildren would do the same, and that expectation was largely fulfilled.

Today it’s an axiom that life is changing and that technology is affecting the nature of society. But what’s not fully understood is that the pace of change is itself accelerating, and the last 20 years are not a good guide to the next 20 years. We’re doubling the paradigm shift rate, the rate of progress, every decade. This will actually match the amount of progress we made in the whole 20th century, because we’ve been accelerating up to this point.

The 20th century was like 25 years of change at today’s rate of change. In the next 25 years we’ll make four times the progress you saw in the 20th century. And we’ll make 20,000 years of progress in the 21st century, which is almost a thousand times more technical change than we saw in the 20th century.
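The arithmetic behind these comparisons can be sketched with a deliberately simple model. The code below assumes the rate of progress doubles smoothly every 10 years and takes the year 2000 as "today's rate"; both choices, and the names `DOUBLING_YEARS`, `BASE_YEAR`, and `progress`, are illustrative assumptions rather than the author's own model.

```python
import math

DOUBLING_YEARS = 10.0  # assumption: rate of progress doubles every decade
BASE_YEAR = 2000       # assumption: "today's rate" means the rate in 2000


def progress(start_year: float, end_year: float) -> float:
    """Progress between two years, in years of change at the BASE_YEAR rate.

    Integrates rate(t) = 2 ** ((t - BASE_YEAR) / DOUBLING_YEARS) in closed form.
    """
    k = math.log(2) / DOUBLING_YEARS
    return (math.exp(k * (end_year - BASE_YEAR))
            - math.exp(k * (start_year - BASE_YEAR))) / k


century_20 = progress(1900, 2000)
next_25 = progress(2000, 2025)
century_21 = progress(2000, 2100)

print(f"20th century: {century_20:.1f} base-rate years")
print(f"2000-2025 vs 20th century: {next_25 / century_20:.1f}x")
print(f"21st vs 20th century: {century_21 / century_20:.0f}x")
```

Under these assumptions the two ratios come out near 4.7 and about 1,000, in line with "four times" and "almost a thousand times" above. The absolute figure for the 20th century comes out lower than 25 base-rate years, so the model behind the numbers in the text likely differs in its details.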

Specifically, computation is growing exponentially. The one exponential trend that people are aware of is called Moore’s law. But Moore’s law itself is just one method for bringing exponential growth to computers. People are aware that we’re doubling the power of computation every 12 months, because we can put twice as many transistors on an integrated circuit every two years. But in fact, they run twice as fast, and double both the capacity and the speed, which means that the power quadruples.
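The step from "twice as many transistors every two years" to "doubling every 12 months" can be checked directly. This is a minimal sketch that treats computing power as capacity times speed; the function name `annual_growth` is hypothetical.

```python
def annual_growth(capacity_factor: float, speed_factor: float,
                  period_years: float) -> float:
    """Equivalent per-year growth factor when capacity and speed each
    grow by the given factor over `period_years`."""
    combined = capacity_factor * speed_factor  # power = capacity x speed
    return combined ** (1.0 / period_years)


# Twice the transistors and twice the speed every two years.
per_year = annual_growth(capacity_factor=2.0, speed_factor=2.0, period_years=2.0)
print(per_year)
```

A fourfold gain every two years is the same as a twofold gain every year, which is where the 12 month doubling figure comes from.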

What’s not fully realized is that Moore’s law was not the first but the fifth paradigm to bring exponential growth to computers. We had electro-mechanical calculators, relay based computers, vacuum tubes, and transistors. Every time one paradigm ran out of steam another took over. For a while there were shrinking vacuum tubes, and finally they couldn’t make them any smaller and still keep the vacuum, so a whole different method came along. They weren’t just tiny vacuum tubes, but transistors, which constitute a whole different approach. There’s been a lot of discussion about Moore’s law running out of steam in about 12 years because by that time the transistors will only be a few atoms in width and we won’t be able to shrink them any more. And that’s true, so that particular paradigm will run out of steam.

Computers will be based on biologically inspired models.

We’ll then go to the sixth paradigm, which is massively parallel computing in three dimensions. We live in a three-dimensional world, and our brains organize in three dimensions, so we might as well compute in three dimensions. The brain processes information using an electro-chemical method that’s ten million times slower than electronics. But it makes up for this by being three-dimensional. Every inter-neuronal connection computes simultaneously, so you have a hundred trillion things going on at the same time. And that’s the direction we’re going to go in. Right now, chips, even though they’re very dense, are flat. Fifteen or twenty years from now computers will be massively parallel and will be based on biologically inspired models, which we will devise largely by understanding how the brain works.

We’re already being significantly influenced by it. It’s generally recognized, or at least accepted by a lot of observers, that we’ll have the hardware to emulate human intelligence within a brief period of time — I’d say about twenty years. A thousand dollars of computation will equal the 20 million billion calculations per second of the human brain. What’s more controversial is whether or not we will have the software. People acknowledge that we’ll have very fast computers that could in theory emulate the human brain, but we don’t really know how the brain works, and we won’t have the software, the methods, or the knowledge to create a human level of intelligence. Without this you just have an extremely fast calculator.

The brain is not of infinite complexity.

But our knowledge of how the brain works is also growing exponentially. The brain is not of infinite complexity. We’re not going to achieve a total understanding through one simple breakthrough, but we’re further along in understanding the principles of operation of the human brain than most people realize.

The technology for scanning the human brain is growing exponentially, our ability to actually see the internal connection patterns is growing, and we’re developing more and more detailed models of biological neurons. We have intricate math models of several dozen brain regions and how they work — recreating their methodologies using conventional computation. The results of those re-engineered or re-implemented synthetic models of those brain regions match the human brain closely.

We’re also literally replacing sections of the brain that are degraded or don’t work any more because of disabilities or disease. There are neural implants for Parkinson’s disease and well known cochlear implants for deafness. There’s a new generation of those that are coming out now that provide a thousand points of frequency resolution and will allow deaf people to hear music for the first time. The Parkinson’s implant actually replaces the cortical neurons themselves that are destroyed by that disease. So we’ve shown that it’s feasible to understand regions of the human brain, and re-implement those regions in conventional electronics computation that will actually interact with the brain and perform those functions.

If you follow this work and work out the mathematics of it, it’s a conservative scenario to say that within 30 years — possibly much sooner — we will have a complete map of the human brain, we will have complete mathematical models of how each region works, and we will be able to re-implement the methods of the human brain, which are quite different than many of the methods used in contemporary artificial intelligence.

But these are actually similar to methods that I use in my own field — pattern recognition — which is the fundamental capability of the human brain. We can’t think fast enough to logically analyze situations very quickly, so we rely on our powers of pattern recognition. Within 30 years we’ll be able to create non-biological intelligence that’s comparable to human intelligence. Just like a biological system, we’ll have to provide it an education, but here we can bring to bear some of the advantages of machine intelligence: machines are much faster, and much more accurate. A $1,000 computer can remember billions of things accurately — we’re hard pressed to remember a handful of phone numbers.

Machines can share their knowledge with other machines.

Once they learn something, machines can also share their knowledge with other machines. We don’t have quick downloading ports at the level of our inter-neuronal connection patterns and our concentrations of neurotransmitters, so we can’t just download knowledge.

I can’t take my knowledge of French and download it to you, but machines can. So we can educate machines through a process that can be hundreds or thousands of times faster than the comparable process in humans.

We can provide a 20 year education to a human level machine in weeks or days, and these machines can then share their knowledge. The primary implication will be enhancing our own human intelligence.

We’re going to be putting these machines inside our own brains. We’re starting to do that now with people who have severe medical problems and disabilities, but ultimately we’ll all be doing this. Without surgery, we’ll be able to introduce calculating machines into the blood stream.

They will be able to pass through the capillaries of the brain. These intelligent, blood-cell-sized nanobots will actually be able to go to the brain and interact with biological neurons. The basic feasibility of this has already been demonstrated in animals.

One application of sending billions of nanobots into the brain is full-immersion virtual reality. If you want to be in real reality, the nanobots sit there and do nothing, but if you want to go into virtual reality, the nanobots shut down the signals coming from your real senses, replace them with the signals you would be receiving if you were in the virtual environment, and then your brain feels as if it’s in the virtual environment. And you can go there yourself — or, more interestingly, you can go there with other people — and you can have everything from sexual and sensual encounters to business negotiations, in full-immersion virtual reality environments that incorporate all of the senses.

People will beam their flow of sensory experiences.

People will beam their own flow of sensory experiences and the neurological correlates of their emotions out into the web, the way people now beam images from web cams in their living rooms and bedrooms.

This will enable you to plug in and actually experience what it’s like to be someone else, including their emotional reactions, a la the plot concept of the film Being John Malkovich. In virtual reality you don’t have to be the same person. You can be someone else, and can project yourself as a different person.

Most importantly, we’ll be able to enhance our biological intelligence with non-biological intelligence through intimate connections. This won’t mean just having one thin pipe between the brain and a non-biological system, but actually having non-biological intelligence in billions of different places in the brain.

I don’t know about you, but there are lots of books I’d like to read and websites I’d like to go to, and I find my bandwidth limiting. So instead of having a mere hundred trillion connections, we’ll have a hundred trillion times a million. We’ll be able to enhance our cognitive pattern recognition capabilities greatly, think faster, and download knowledge.

If you follow these trends further, you get to a point where change is happening so rapidly that there appears to be a rupture in the fabric of human history. Some people have referred to this as the “singularity.” There are many different definitions of the Singularity, a term borrowed from physics, which means an actual point of infinite density and energy that’s kind of a rupture in the fabric of space-time.

Here, that concept is applied by analogy to human history, where we see a point where this rate of technological progress will be so rapid that it appears to be a rupture in the fabric of human history. It’s impossible in physics to see beyond a singularity, which creates an event boundary, and some people have hypothesized that it will be impossible to characterize human life after the singularity.

My question is what human life will be like after the singularity, which I predict will occur somewhere right before the middle of the 21st century.

The book I wrote 10 years later, The Age of Spiritual Machines

A lot of the concepts we have of the nature of human life — such as longevity — suggest a limited capability as biological, thinking entities. All of these concepts are going to undergo significant change as we basically merge with our technology. It’s taken me a while to get my own mental arms around these issues. In the book I wrote in the 1980s, The Age of Intelligent Machines, I ended with the spectre of machines matching human intelligence somewhere between 2020 and 2050, and I basically have not changed my view on that time frame, although I left behind my view that this is a final spectre.

In the book I wrote ten years later, The Age of Spiritual Machines, I began to consider what life would be like past the point where machines could compete with us. Now I’m trying to consider what that will mean for human society.

One thing that we should keep in mind is that innate biological intelligence is fixed. We have 10^26 calculations per second in the whole human race and there are ten billion human minds. 50 years from now, the biological intelligence of humanity will still be at that same order of magnitude. On the other hand, machine intelligence is growing exponentially, and today it’s a million times less than that biological figure. So although it still seems that human intelligence is dominating, which it is, the crossover point is around 2030 and non-biological intelligence will continue its exponential rise.
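The figures in this paragraph fit together as a short calculation. The per-brain rate of 10^16 calculations per second is inferred here by dividing the stated total by ten billion minds; it is of the same order as the 20 million billion quoted earlier. The doubling times tried at the end are illustrative assumptions, since the text states only that growth is exponential.

```python
import math

CPS_PER_BRAIN = 10**16   # inferred: 10^26 total spread across 10^10 minds
HUMAN_MINDS = 10**10     # "ten billion human minds"
MACHINE_GAP = 10**6      # machines are "a million times less" today

biological_total = CPS_PER_BRAIN * HUMAN_MINDS
machine_total_today = biological_total // MACHINE_GAP
doublings_needed = math.log2(MACHINE_GAP)


def years_to_crossover(doubling_time_years: float) -> float:
    """Years until machine capacity matches the fixed biological total,
    for a hypothetical constant doubling time."""
    return doublings_needed * doubling_time_years


for doubling_time in (1.0, 1.5):  # illustrative values, not from the text
    print(doubling_time, round(years_to_crossover(doubling_time), 1))
```

Closing a millionfold gap takes about 20 doublings, so the crossover date depends almost entirely on the doubling time one assumes.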

Is knowledge tautological?

This leads some people to ask: how can we know if another species or entity is more intelligent than we are? Isn’t knowledge tautological? How can we know more than we do know? Who would know it, except us?

One response is not to want to be enhanced, not to have nano-bots. A lot of people say that they just want to stay a biological person.

But what will the singularity look like to people who want to remain biological? The answer is that they really won’t notice it, except for the fact that machine intelligence will appear to biological humanity to be their transcendent servants. It will appear that these machines are very friendly and are taking care of all of our needs.

But providing that service of meeting all of the material and emotional needs of biological humanity will comprise a very tiny fraction of the mental output of the non-biological component of our civilization. So there’s a lot that, in fact, biological humanity won’t actually notice.

There are two levels of consideration here. On the economic level, mental output will be the primary criterion. We’re already getting close to the point that the only thing that has value is information. Information has value to the extent that it really reflects knowledge, not just raw data.

There are a few products on this table — a clock, a camera, a tape recorder — that are physical objects, but really the value of them is in the information that went into their design: the design of their chips and the software that’s used to invent and manufacture them. The actual raw materials — a bunch of sand and some metals and so on — are worth a few pennies, but these products have value because of all the knowledge that went into creating them.

And the knowledge component of products and services is asymptoting towards 100 percent. By the time we get to 2030 it will be basically 100 percent. With a combination of nanotechnology and artificial intelligence, we’ll be able to create virtually any physical product and meet all of our material needs. When everything is software and information, it’ll be a matter of just downloading the right software, and we’re already getting pretty close to that.

We will have entities that seem to be conscious.

On a spiritual level, the issue of what is consciousness is another important aspect of this, because we will have entities by 2030 that seem to be conscious, and that will claim to have feelings.

We have entities today, like characters in your kids’ video games, that can make that claim, but they are not very convincing.

If you run into a character in a video game and it talks about its feelings, you know it’s just a machine simulation. You’re not convinced that it’s a real person there. This is because that entity, which is a software entity, is still a million times simpler than the human brain. In 2030, that won’t be the case.

Say you encounter another person in virtual reality that looks just like a human but there’s actually no biological human behind it — it’s completely an AI projecting a human-like figure in virtual reality, or even a human-like image in real reality using an android robotic technology.

These entities will seem human. They won’t be a million times simpler than humans. They’ll be as complex as humans. They’ll have all the subtle cues of being humans. They’ll be able to sit here and be interviewed and be just as convincing as a human, just as complex, just as interesting. And when they claim to have been angry or happy it’ll be just as convincing as when another human makes those claims.

At this point, it becomes a really deeply philosophical issue. Is that just a very clever simulation that’s good enough to trick you, or is it really conscious in the way that we assume other people are? In my view there’s no real way to test that scientifically. There’s no machine you can slide the entity into where a green light goes on and says okay, this entity’s conscious, but no, this one’s not. You could make a machine, but it will have philosophical assumptions built into it. Some philosophers will say that unless it’s squirting impulses through biological neurotransmitters, it’s not conscious, or that unless it’s a biological human with a biological mother and father it’s not conscious. But it becomes a matter of philosophical debate. It’s not scientifically resolvable.

There’s not going to be any clear boundary.

The next big revolution that’s going to affect us right away is biological technology, because we’ve merged biological knowledge with information processing. We are in the early stages of understanding life processes and disease processes by understanding the genome, and how the genome expresses itself in protein. And we’re going to find — and this has been apparent all along — that there’s a slippery slope and no clear definition of where life begins. Both sides of the abortion debate have been afraid to get off the edges of that debate: that life starts at conception on the one hand or it starts literally at birth on the other. They don’t want to get off those edges, because they realize it’s just a completely slippery slope from one end to the other.

But we’re going to make it even more slippery. We’ll be able to create stem cells without ever actually going through the fertilized egg. What’s the difference between a skin cell, which has all the genes, and a fertilized egg? The only differences are some proteins in the eggs and some signalling factors that we don’t fully understand, yet that are basically proteins. We will get to the point where we’ll be able to take some protein mix, which is just a bunch of chemicals and clearly not a human being, and add it to a skin cell to create a fertilized egg that we can then immediately differentiate into any cell of the body. When I brush off thousands of skin cells, I will be destroying thousands of potential people. There’s not going to be any clear boundary.

Science and tech find a way around the controversy.

This is also another way of saying that science and technology are going to find a way around the controversy. In the future, we’ll be able to do therapeutic cloning, which is a very important technology that completely avoids the concept of the fetus. We’ll be able to take skin cells and create, pretty directly without ever going through a fetus, all the cells we need.

We’re not that far away from being able to create new cells. For example, I’m 53 but with my DNA, I’ll be able to create the heart cells of a 25 year old man, and I can replace my heart with those cells without surgery just by sending them through my blood stream. They’ll take up residence in the heart, so at first I’ll have a heart that’s one percent young cells and 99 percent older ones.

But if I keep doing this every day, a year later, my heart is 99 percent young cells. With that kind of therapy we can ultimately replenish all the cell tissues and the organs in the body. This is not something that will happen tomorrow, but these are the kinds of revolutionary processes we’re on the verge of.

If you look at human longevity — which is another one of these exponential trends — you’ll notice that we added a few days every year to the human life expectancy in the 18th century. In the 19th century we added a few weeks every year, and now we’re adding over a hundred days a year, through all of these developments, which are going to continue to accelerate. Many knowledgeable observers, including myself, feel that within ten years we’ll be adding more than a year every year to life expectancy.

As we get older, human life expectancy will actually move out at a faster rate than we’re actually progressing in age, so if we can hang in there, our generation is right on the edge. We have to watch our health the old-fashioned way for a while longer so we’re not the last generation to die prematurely. But if you look at our kids, by the time they’re 20, 30, 40 years old, these technologies will be so advanced that human life expectancy will be pushed way out.

There is also the more fundamental issue of whether or not ethical debates are going to stop the developments that I’m talking about. It’s all very good to have these mathematical models and these trends, but the question is if they’re going to hit a wall because people, for one reason or another — through war or ethical debates such as the stem cell controversy — thwart this ongoing exponential development. I strongly believe that’s not the case.

You can’t stop the river of advances.

These ethical debates are like stones in a stream. The water runs around them. You haven’t seen any of these biological technologies held up for one week by any of these debates.

To some extent, they may have to find some other ways around some of the limitations, but there are so many developments going on.

There are dozens of very exciting ideas about how to use genomic information and proteomic information. Although the controversies may attach themselves to one idea here or there, there’s such a river of advances.

The concept of technological advance is so deeply ingrained in our society that it’s an enormous imperative. Bill Joy, activist and co-founder of Sun Microsystems, has gone around, correctly, talking about the dangers, and I agree that the dangers are there, but you can’t stop ongoing development.

The kinds of scenarios I’m talking about 20 or 30 years from now are not being developed because there’s one laboratory that’s sitting there creating a human level intelligence in a machine. They’re happening because it’s the inevitable end result of thousands of little steps.

Each little step is conservative, not radical, and makes perfect sense. Each one is just the next generation of some company’s products. If you take thousands of those little steps — which are getting faster and faster — you end up with some remarkable changes 10, 20, or 30 years from now. You don’t see Sun Microsystems saying the future implication of these technologies is so dangerous that they’re going to stop creating more intelligent networks and more powerful computers. Sun can’t do that. No company can do that because it would be out of business. There’s an enormous economic imperative.

There is also a tremendous moral imperative. We still have not millions but billions of people who are suffering from disease and poverty, and we have the opportunity to overcome those problems through these technological advances. You can’t tell the millions of people who are suffering from cancer that we’re really on the verge of great breakthroughs that will save millions of lives from cancer, but we’re cancelling all that because the terrorists might use that same knowledge to create a bioengineered pathogen.

This is a true and valid concern, but we’re not going to do that. There’s a tremendous belief in society in the benefits of continued economic and technological advance. Still, it does raise the question of the dangers of these technologies, and we can talk about that as well, because that’s also a valid concern.

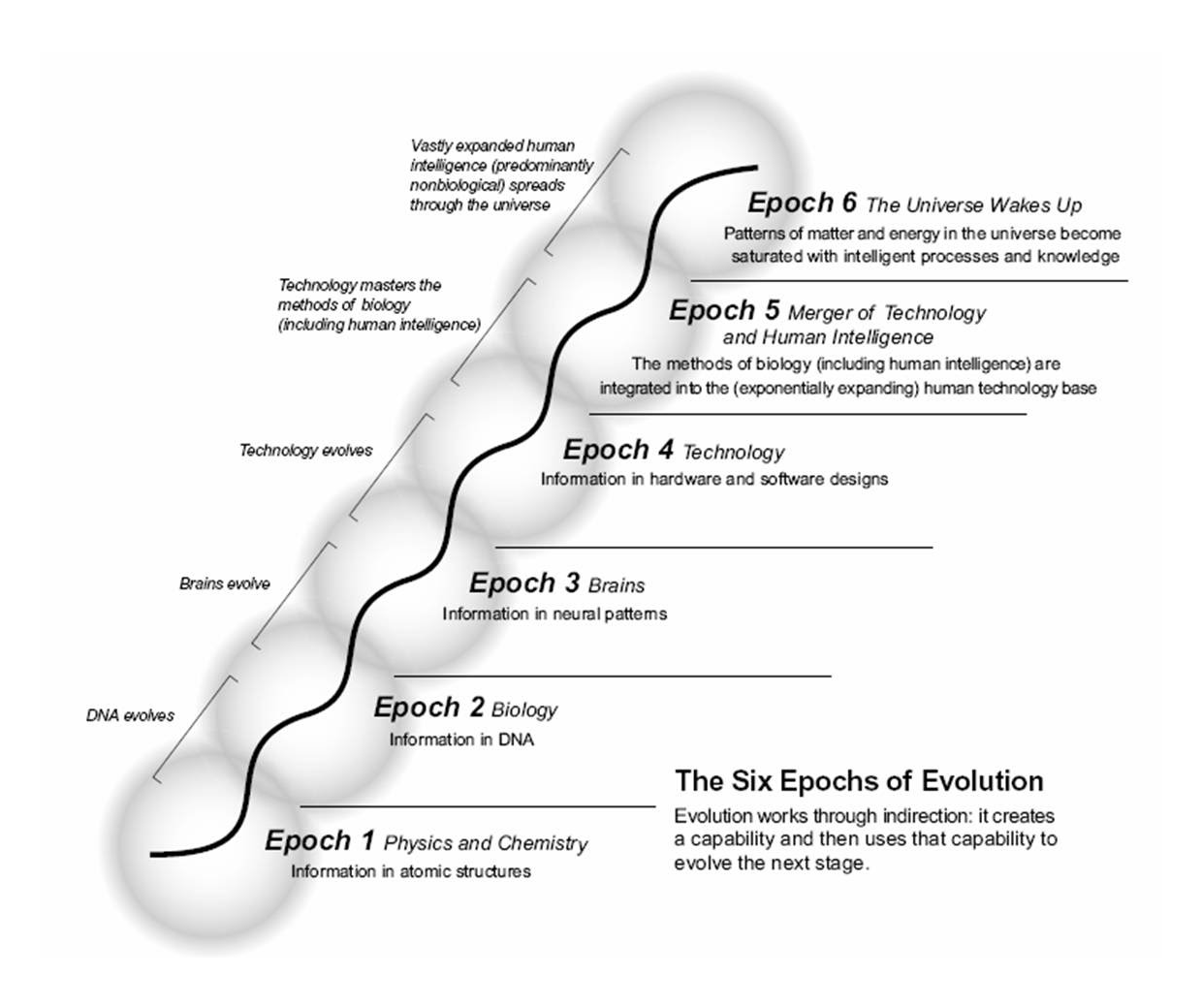

infographic | the 6 epochs of evolution

by Ray Kurzweil

The cutting edge of evolution on our planet.

Another aspect of all of these changes is that they force us to re-evaluate our concept of what it means to be human. There is a common viewpoint that reacts against the advance of technology and its implications for humanity. The objection goes like this: we’ll have very powerful computers but we haven’t solved the software problem. And because the software’s so incredibly complex, we can’t manage it.

I address this objection by saying that the software required to emulate human intelligence is actually not beyond our current capability. We have to use different techniques — different self-organizing methods — that are biologically inspired. The brain is complicated but it’s not that complicated. You have to keep in mind that it is characterized by a genome of only 23 million bytes.

The genome is six billion bits — that’s about eight hundred million bytes — and there are massive redundancies. One pretty long sequence called ALU is repeated 300 thousand times. If you use conventional data compression on the genome (at 23 million bytes, a small fraction of the size of Microsoft Word), it’s a level of complexity that we can handle. But we don’t have that information yet.
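The arithmetic behind these figures can be checked with a short sketch. The two constants are the numbers quoted in the text; the variable names are illustrative only. Dividing six billion bits by eight gives 750 million bytes, which the text rounds up to eight hundred million.

```python
# Figures quoted in the text; the names are illustrative, not from any library.
GENOME_BITS = 6_000_000_000      # "six billion bits"
COMPRESSED_BYTES = 23_000_000    # "23 million bytes" after compression

raw_bytes = GENOME_BITS // 8     # 8 bits per byte
compression_ratio = raw_bytes / COMPRESSED_BYTES

print(f"raw genome size: {raw_bytes:,} bytes")
print(f"implied compression ratio: about {compression_ratio:.1f} to 1")
```

On these numbers, the claim amounts to saying that redundancy lets the genome shrink by a factor of a little over thirty.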

You might wonder how something with 23 million bytes can create a human brain that’s a million times more complicated than itself. That’s not hard to understand. The genome creates a process of wiring a region of the human brain involving a lot of randomness. Then, when the fetus becomes a baby and interacts with a very complicated world, there’s an evolutionary process within the brain in which a lot of the connections die out, others get reinforced, and it self-organizes to represent knowledge about the brain. It’s a very clever system, and we don’t understand it yet, but we will, because it’s not a level of complexity beyond what we’re capable of engineering.

In my view there is something special about human beings that’s different from what we see in any of the other animals. By happenstance of evolution we were the first species to be able to create technology. Actually there were others, but we are the only one that survived in this ecological niche. But we combined a rational faculty, the ability to think logically, to create abstractions, to create models of the world in our own minds, and to manipulate the world. We have opposable thumbs so that we can create technology, but technology is not just tools. Other animals have used primitive tools, but the difference is actually a body of knowledge that changes and evolves itself from generation to generation. The knowledge that the human species has is another one of those exponential trends.

We use one stage of technology to create the next stage, which is why technology accelerates, why it grows in power. Today, for example, a computer designer has these tremendously powerful computer system design tools to create computers, so in a couple of days they can create a very complex system and it can all be worked out very quickly. The first computer designers had to actually draw them all out in pen on paper. Each generation of tools creates the power to create the next generation.

So technology itself is an exponential, evolutionary process that is a continuation of the biological evolution that created humanity in the first place. Biological evolution itself evolved in an exponential manner. Each stage created more powerful tools for the next, so when biological evolution created DNA it now had a means of keeping records of its experiments so evolution could proceed more quickly. Because of this, the Cambrian explosion only lasted a few tens of millions of years, whereas the first stage of creating DNA and primitive cells took billions of years.

Finally, biological evolution created a species that could manipulate its environment and had some rational faculties, and now the cutting edge of evolution actually changed from biological evolution into something carried out by one of its own creations, Homo sapiens, and is represented by technology. In the next epoch this species that ushered in its own evolutionary process — that is, its own cultural and technological evolution, as no other species has — will combine with its own creation and will merge with its technology. At some level that’s already happening, even if most of us don’t yet have the technology inside our bodies and brains: we’re very intimate with it, and it’s in our pockets. We’ve certainly expanded the power of the mind of the human civilization through the power of its technology.

We are entering a new era. I call it “the Singularity.” It’s a merger between human intelligence and machine intelligence that is going to create something bigger than itself. It’s the cutting edge of evolution on our planet. One can make a strong case that it’s actually the cutting edge of the evolution of intelligence in general, because there’s no indication that it’s occurred anywhere else.

To me, this is what civilization is all about.

To me, that is what human civilization is all about. It is part of our destiny and part of the destiny of evolution to continue to progress ever faster, and to grow the power of intelligence exponentially. To contemplate stopping that — to think human beings are fine the way they are — is a misplaced fond remembrance of what human beings used to be. What human beings are is a species that has undergone a cultural and technological evolution, and it’s the nature of evolution that it accelerates, and that its powers grow exponentially, and that’s what we’re talking about. The next stage of this will be to amplify our own intellectual powers with the results of our technology.

What is unique about human beings is our ability to create abstract models and to use these mental models to understand the world and do something about it. These mental models have become more and more sophisticated, and by becoming embedded in technology, they have become very elaborate and very powerful. Now we can actually understand our own minds. This ability to scale up the power of our own civilization is what’s unique about human beings.

Patterns are the fundamental ontological reality, because they are what persists, not anything physical. Take myself, Ray Kurzweil. What is Ray Kurzweil? Is it this stuff here? Well, this stuff changes very quickly. Some of our cells turn over in a matter of days. Even our skeleton, which you think probably lasts forever because we find skeletons that are centuries old, changes over within a year. Many of our neurons change over. But more importantly, the particles making up the cells change over even more quickly, so even if a particular cell is still there the particles are different. So I’m not the same stuff, the same collection of atoms and molecules that I was a year ago.

The pattern persists.

But what does persist is that pattern. The pattern evolves slowly, but the pattern persists. So we’re kind of like the pattern that water makes in a stream; you put a rock in there and you’ll see a little pattern. The water is changing every few milliseconds. If you come a second later, it’s completely different water molecules, but the pattern persists.

Patterns are what have resonance. Ideas are patterns, technology is patterns. Even our basic existence as people is nothing but a pattern. Pattern recognition is the heart of human intelligence. 99 percent of our intelligence is our ability to recognize patterns.

There’s been a sea change just in the last several years in the public understanding of the acceleration of change and the potential impact of all of these technologies — computer technology, communications, biological technology — on human society.

There’s really been tremendous change in popular public perception in the past three years because of the onslaught of stories and news developments that document and support this vision. There are now several stories every day that are significant developments and that show the escalating power of these technologies.

— end —

IMAGE

essay | no. 3

essay title: Exponential technological progress in the 21st century

author: by Ray Kurzweil

— introduction —

Evolution applies positive feedback in that the more capable methods resulting from one stage of evolutionary progress are used to create the next stage. Each epoch of evolution has progressed more rapidly by building on the products of the previous stage.

Evolution works through indirection: evolution created humans, humans created technology, humans are now working with increasingly advanced technology to create new generations of technology. As a result, the rate of progress of an evolutionary process increases exponentially over time.

Over time, the “order” of the information embedded in the evolutionary process — the measure of how well the information fits a purpose, which in evolution is survival — increases.

A comment on the nature of order

The concept of the “order” of information is important here, as it is not the same as the opposite of disorder. If disorder represents a random sequence of events, then the opposite of disorder should imply “not random.” Information is a sequence of data that is meaningful in a process, such as the DNA code of an organism, or the bits in a computer program. Noise, on the other hand, is a random sequence. Neither noise nor information is predictable. Noise is inherently unpredictable, but carries no information. Information, however, is also unpredictable. If we can predict future data from past data, then that future data stops being information. We might consider an alternating pattern (0101010…) to be orderly, but it carries no information — beyond the first couple of bits.

Thus orderliness does not constitute order because order requires information. However, order goes beyond mere information. A recording of radiation levels from space represents information, but if we double the size of this data file, we have increased the amount of data, but we have not achieved a deeper level of order.
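One rough way to see the distinction drawn above is to use a general-purpose compressor as a stand-in for predictability. This is an illustrative assumption, not a measure the essay itself proposes. The sketch compares the alternating pattern with random bytes using Python's standard `zlib` module; the sample size `N` and the helper `compressed_size` are hypothetical names chosen for this example.

```python
import os
import zlib

N = 10_000  # sample size in bytes, chosen arbitrarily

alternating = b"01" * (N // 2)   # the orderly 0101... pattern
noise = os.urandom(N)            # random bytes: unpredictable, but with no purpose

def compressed_size(data: bytes) -> int:
    """Size in bytes after zlib compression, a crude proxy for unpredictability."""
    return len(zlib.compress(data, 9))

print("alternating:", compressed_size(alternating), "of", N)
print("noise:      ", compressed_size(noise), "of", N)
```

The alternating pattern shrinks to a tiny fraction of its length, while the random bytes barely shrink at all. A compressor cannot tell meaningful data from noise, since both resist prediction, which is why the essay goes on to define order by fit to a purpose rather than by data size.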

Order is information that fits a purpose. The measure of order is the measure of how well the information fits the purpose. In the evolution of life-forms, the purpose is to survive. In an evolutionary algorithm (a computer program that simulates evolution to solve a problem) applied to, say, investing in the stock market, the purpose is to make money. Simply having more information does not necessarily result in a better fit. A superior solution for a purpose may very well involve less data.

The concept of “complexity” is often used to describe the nature of the information created by an evolutionary process. Complexity is a close fit to the concept of order that I am describing, but is also not sufficient. Sometimes, a deeper order — a better fit to a purpose — is achieved through simplification rather than further increases in complexity.

For example, a new theory that ties together apparently disparate ideas into one broader more coherent theory reduces complexity but nonetheless may increase the “order for a purpose” that I am describing. Indeed, achieving simpler theories is a driving force in science. Evolution has shown, however, that the general trend towards greater order does generally result in greater complexity.

Thus improving a solution to a problem — which may increase or decrease complexity — increases order. Now that just leaves the issue of defining the problem. Indeed, the key to an evolutionary algorithm (and to biological and technological evolution) is exactly this: defining the problem.
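A minimal sketch of an evolutionary algorithm makes the role of "defining the problem" concrete. Everything here is a toy assumption for illustration: the function names `evolve` and `count_ones`, the bit-string genome, and the parameter values are not taken from the essay. The only place the purpose enters is the `fitness` function passed in.

```python
import random

def evolve(fitness, genome_len=20, pop_size=30, generations=60, seed=0):
    """Toy evolutionary loop: keep the fitter half, refill with mutated copies."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(genome_len)] for _ in range(pop_size)]
    history = []  # best fitness seen at the start of each generation
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        history.append(fitness(pop[0]))
        survivors = pop[: pop_size // 2]
        children = []
        for parent in survivors:
            child = parent[:]
            child[rng.randrange(genome_len)] ^= 1  # mutate: flip one random bit
            children.append(child)
        pop = survivors + children
    pop.sort(key=fitness, reverse=True)
    history.append(fitness(pop[0]))
    return pop[0], history

def count_ones(genome):
    """The 'purpose' in this toy problem: as many 1 bits as possible."""
    return sum(genome)

best, history = evolve(count_ones)
print("best fitness:", history[0], "->", history[-1])
```

Because the survivors are carried over unchanged, the best fitness never decreases from one generation to the next. Swapping `count_ones` for a different function changes what counts as order without touching the loop itself.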

Innovations created by evolution encourage and enable faster evolution

We may note that this aspect of “the law of accelerating returns” appears to contradict the second law of thermodynamics, which implies that entropy — randomness in a closed system — cannot decrease, and, therefore, generally increases. However, the law of accelerating returns pertains to evolution, and evolution is not a closed system. It takes place amid great chaos, and indeed depends on the disorder in its midst, from which it draws its options for diversity.

And from these options, an evolutionary process continually prunes its choices to create ever greater order. Even crises, such as the periodic large asteroids that have crashed into the Earth, although they increase chaos temporarily, end up increasing — deepening — the order created by an evolutionary process.

The law of accelerating returns

• A primary reason that evolution — of life-forms or of technology — speeds up is that it builds on its own increasing order, with ever more sophisticated means of recording and manipulating information. Innovations created by evolution encourage and enable faster evolution. In the case of the evolution of life forms, the most notable early example is DNA, which provides a recorded and protected transcription of life’s design from which to launch further experiments.

In the case of the evolution of technology, ever improving human methods of recording information have fostered further technology. The first computers were designed on paper and assembled by hand. Today, they are designed on computer workstations with the computers themselves working out many details of the next generation’s design, and are then produced in fully-automated factories with human guidance but limited direct intervention.

• The evolutionary process of technology seeks to improve capabilities in an exponential fashion. Innovators seek to improve things by multiples. Innovation is multiplicative, not additive. Technology, like any evolutionary process, builds on itself. This aspect will continue to accelerate when the technology itself takes full control of its own progression.

• We can thus conclude the following with regard to the evolution of life-forms and of technology. The law of accelerating returns as applied to an evolutionary process: an evolutionary process is not a closed system; therefore, evolution draws upon the chaos in the larger system in which it takes place for its options for diversity; and evolution builds on its own increasing order. Therefore, in an evolutionary process, order increases exponentially.

• A correlate of the above observation is that the “returns” of an evolutionary process — that is: the speed, cost-effectiveness, or overall “power” of a process — increase exponentially over time. We see this in Moore’s law, in which each new generation of computer chip (now spaced about two years apart) provides twice as many components, each of which operates substantially faster — because of the smaller distances required for the electrons to travel, and other innovations. This exponential growth in the power and price-performance of information-based technologies — roughly doubling every year — is not limited to computers, but is true for a wide range of technologies, measured many different ways.

• In another positive feedback loop, as a particular evolutionary process (e.g., computation) becomes more effective (e.g., cost effective), greater resources are deployed towards the further progress of that process. This results in a second level of exponential growth (i.e., the rate of exponential growth itself grows exponentially). For example, it took three years to double the price-performance of computation at the beginning of the twentieth century, two years around 1950, and is now doubling about once a year. Not only is each chip doubling in power each year for the same unit cost, but the number of chips being manufactured is growing exponentially.

• Biological evolution is one such evolutionary process. Indeed it is the quintessential evolutionary process. It took place in a completely open system (as opposed to the artificial constraints in an evolutionary algorithm). Thus many levels of the system evolved at the same time.

• Technological evolution is another such evolutionary process. Indeed, the emergence of the first technology-creating species resulted in the new evolutionary process of technology. Therefore, technological evolution is an outgrowth of — and a continuation of — biological evolution. Early stages of humanoid-created technology were barely faster than the biological evolution that created our species. Homo sapiens evolved in a few hundred thousand years. Early stages of technology — the wheel, fire, stone tools — took tens of thousands of years to evolve and be widely deployed. A thousand years ago, a paradigm shift such as the printing press took on the order of a century to be widely deployed. Today, major paradigm shifts, such as cell phones and the world wide web, were widely adopted in only a few years’ time.

• A specific paradigm (a method or approach to solving a problem, e.g., shrinking transistors on an integrated circuit as an approach to making more powerful computers) provides exponential growth until the method exhausts its potential. When this happens, a paradigm shift (a fundamental change in the approach) occurs, which enables exponential growth to continue.

• Each paradigm follows an “S curve,” which consists of slow growth (the early phase of exponential growth), followed by rapid growth (the late, explosive phase of exponential growth), followed by a leveling off as the particular paradigm matures.

• During this third or maturing phase in the life cycle of a paradigm, pressure builds for the next paradigm shift, and research dollars are invested to create the next paradigm. We can see this in the enormous investments being made today in the next computing paradigm — three dimensional molecular computing — despite the fact that we still have at least a decade left for the paradigm of shrinking transistors on a flat integrated circuit using photo-lithography — Moore’s law.

Generally, by the time a paradigm approaches its asymptote (limit) in price-performance, the next technical paradigm is already working in niche applications. For example, engineers were shrinking vacuum tubes in the 1950s to provide greater price-performance for computers, and reached a point where it was no longer feasible to shrink tubes and maintain a vacuum. At this point, around 1960, transistors had already achieved a strong niche market in portable radios.
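The way successive paradigms, each following its own S curve, can add up to sustained exponential growth can be sketched numerically. This is a toy model under stated assumptions: each paradigm is a logistic curve, each new one saturates at ten times the level of the last, and they arrive at evenly spaced intervals. The functions `logistic` and `cascade` and all parameter values are hypothetical choices for illustration, not figures from the essay.

```python
import math

def logistic(t, ceiling, midpoint, steepness=1.0):
    """One S curve: slow start, rapid rise, then leveling off at `ceiling`."""
    return ceiling / (1.0 + math.exp(-steepness * (t - midpoint)))

def cascade(t, n_paradigms=5, spacing=10.0, gain=10.0):
    """Sum of S curves; paradigm k levels off at gain**k and is centered at k*spacing."""
    return sum(logistic(t, gain ** k, k * spacing) for k in range(n_paradigms))

for t in range(0, 41, 10):
    print(t, round(cascade(t), 2))
```

Under these assumptions the total grows by roughly a factor of ten per interval even though every individual curve flattens out. Growth stalls only after the last paradigm in the model matures, which is the situation a further paradigm shift would resolve.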

• When a paradigm shift occurs for a particular type of technology, the process begins a new S curve.

• Thus the acceleration of the overall evolutionary process proceeds as a sequence of S curves, and the overall exponential growth consists of this cascade of S curves.

• The resources underlying the exponential growth of an evolutionary process are relatively unbounded.

• One resource is the (ever growing) order of the evolutionary process itself. Each stage of evolution provides more powerful tools for the next. In biological evolution, the advent of DNA allowed more powerful and faster evolutionary “experiments.” Later, setting the “designs” of animal body plans during the Cambrian explosion allowed rapid evolutionary development of other body organs, such as the brain. Or to take a more recent example, the advent of computer-assisted design tools allows rapid development of the next generation of computers.

• The other required resource is the “chaos” of the environment in which the evolutionary process takes place and which provides the options for further diversity. In biological evolution, diversity enters the process in the form of mutations and ever-changing environmental conditions. In technological evolution, human ingenuity combined with ever-changing market conditions keeps the process of innovation going.

• If we apply these principles at the highest level of evolution on Earth, the first step, the creation of cells, introduced the paradigm of biology. The subsequent emergence of DNA provided a digital method to record the results of evolutionary experiments. Then, the evolution of a species that combined rational thought with an opposable appendage (the thumb) caused a fundamental paradigm shift from biology to technology. The upcoming primary paradigm shift will be from biological thinking to a hybrid combining biological and nonbiological thinking. This hybrid will include “biologically inspired” processes resulting from the reverse engineering of biological brains.

• If we examine the timing of these steps, we see that the process has continuously accelerated. The evolution of life forms required billions of years for the first steps (primitive cells); later on progress accelerated. During the Cambrian explosion, major paradigm shifts took only tens of millions of years. Later on, humanoids developed over a period of millions of years, and Homo sapiens over a period of only hundreds of thousands of years.

• With the advent of a technology-creating species, the exponential pace became too fast for evolution through DNA-guided protein synthesis and moved on to human-created technology. Technology goes beyond mere tool making; it is a process of creating ever more powerful technology using the tools from the previous round of innovation, and is, thereby, an evolutionary process.

As I noted, the first technological steps took tens of thousands of years. For people living in this era, there was little noticeable technological change in even a thousand years. By 1000 AD, progress was much faster and a paradigm shift required only a century or two. In the nineteenth century, we saw more technological change than in the nine centuries preceding it. Then in the first twenty years of the twentieth century, we saw more advancement than in all of the nineteenth century. Now, paradigm shifts occur in only a few years’ time.

• The paradigm shift rate — the overall rate of technical progress — is currently doubling (approximately) every decade. That is, paradigm shift times are halving every decade — and the rate of acceleration is itself growing exponentially. So, the technological progress in the 21st century will be equivalent to what would require (in the linear view) on the order of 200 centuries. In contrast, the 20th century saw only about 20 years of progress (again, at today’s rate of progress) since we have been speeding up to current rates. So the 21st century will see about a thousand times greater technological change than its predecessor.
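The order of magnitude of these figures can be checked with a simplified model. The sketch assumes only a rate of progress that doubles every ten years and equals one "year of progress per year" in 2000; it deliberately ignores the second level of acceleration mentioned above, so it is not the essay's own calculation. The function `progress` and its parameters are illustrative names.

```python
import math

DOUBLING_YEARS = 10.0  # "doubling (approximately) every decade"

def progress(start, end, doubling=DOUBLING_YEARS):
    """Integral of 2**(t/doubling) from start to end, with t in years from 2000.

    The result is measured in years of progress at the year-2000 rate.
    """
    k = math.log(2) / doubling
    return (math.exp(k * end) - math.exp(k * start)) / k

twentieth = progress(-100, 0)     # 1900 to 2000
twenty_first = progress(0, 100)   # 2000 to 2100

print(round(twentieth, 1), round(twenty_first), round(twenty_first / twentieth))
```

This simplified model gives roughly 14 years of progress for the 20th century and roughly 15,000 for the 21st, somewhat below the essay's 20 and 20,000 because the extra acceleration is left out. The ratio between the two centuries comes out at about a thousand, matching the essay's final claim.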

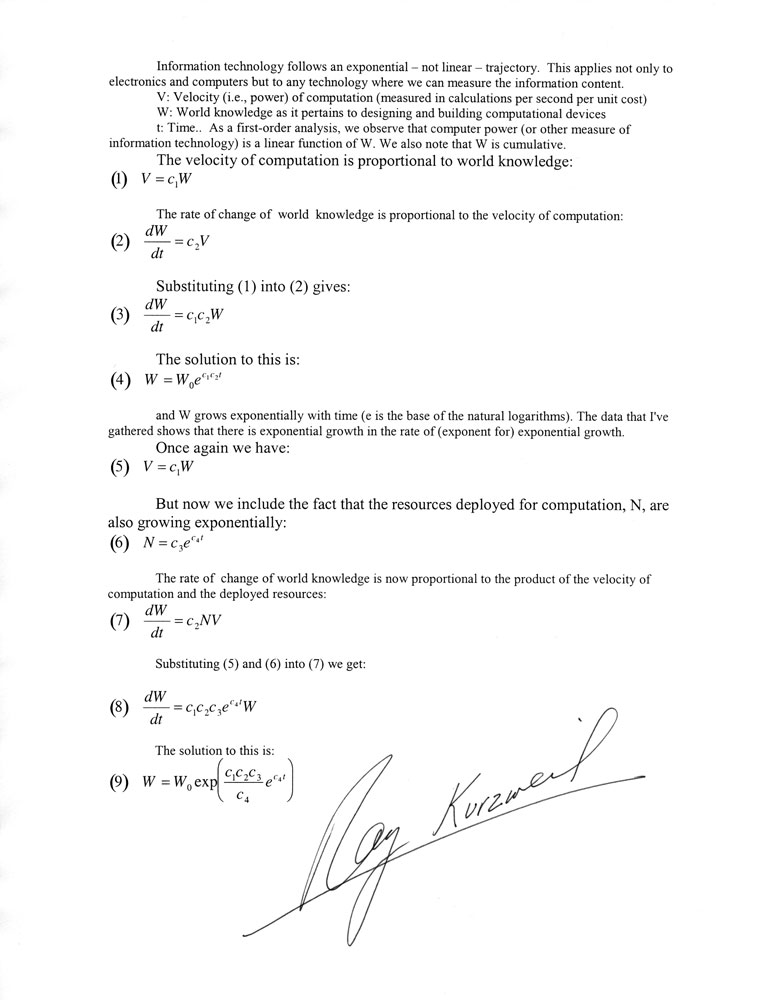

special supplement | no. 4

Math from the law of accelerating returns

by Ray Kurzweil

— end —