How to control robots with your mind

March 7, 2017

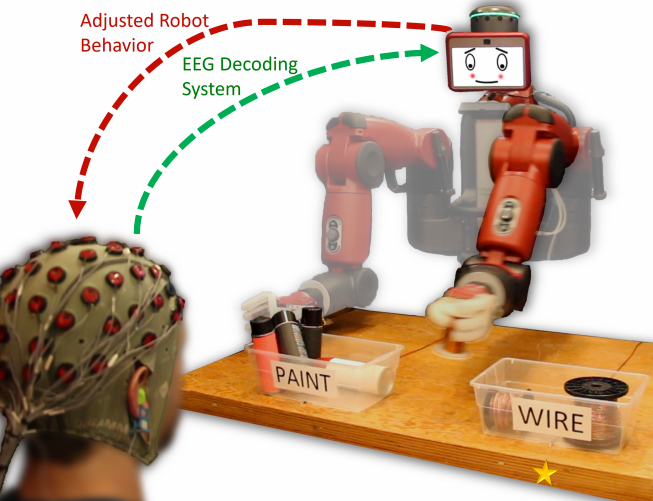

The robot is informed that its initial motion was incorrect based upon real-time decoding of the observer’s EEG signals, and it corrects its selection accordingly to properly sort an object (credit: Andres F. Salazar-Gomez et al./MIT, Boston University)

Two research teams are developing new ways to communicate with robots, with the aim of one day shaping them into the kind of productive workers featured in the current AMC TV show HUMANS (now in its second season).

Programming robots to function in a real-world environment is normally a complex process. But now a team from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and Boston University is creating a system that lets people correct robot mistakes instantly by simply thinking.

In the initial experiment, the system uses data from an electroencephalography (EEG) helmet to correct robot performance on an object-sorting task. Novel machine-learning algorithms enable the system to classify brain waves within 10 to 30 milliseconds.

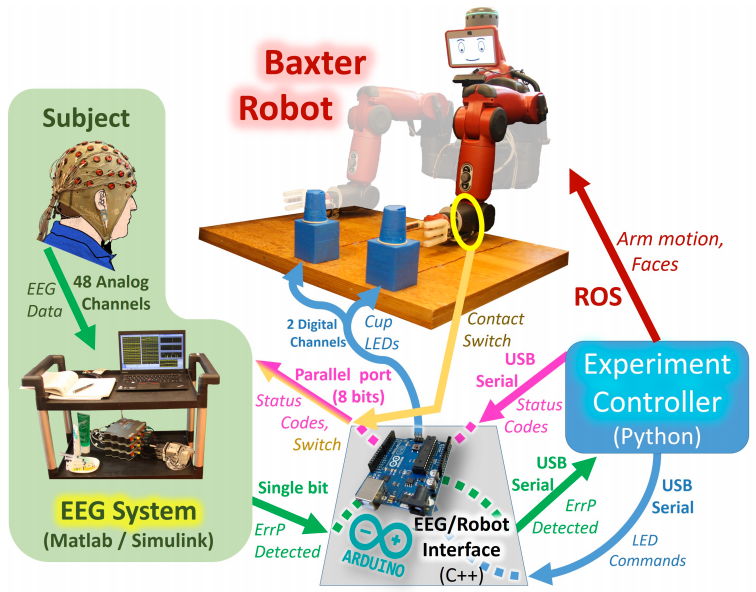

The system includes a main experiment controller, a Baxter robot, and an EEG acquisition and classification system. The goal is to make the robot pick up the cup that the experimenter is thinking about. An Arduino computer (bottom) relays messages between the EEG system and robot controller. A mechanical contact switch (yellow) detects robot arm motion initiation. (credit: Andres F. Salazar-Gomez et al./MIT, Boston University)

While the system currently handles only relatively simple binary-choice activities, we may one day be able to control robots in much more intuitive ways. “Imagine being able to instantaneously tell a robot to do a certain action, without needing to type a command, push a button, or even say a word,” says CSAIL Director Daniela Rus. “A streamlined approach like that would improve our abilities to supervise factory robots, driverless cars, and other technologies we haven’t even invented yet.”

The team used a humanoid robot named “Baxter” from Rethink Robotics, the company led by former CSAIL director and iRobot co-founder Rodney Brooks.

Intuitive human-robot interaction

The system detects brain signals called “error-related potentials” (generated whenever our brains notice a mistake) to determine if the human agrees with a robot’s decision.

“As you watch the robot, all you have to do is mentally agree or disagree with what it is doing,” says Rus. “You don’t have to train yourself to think in a certain way — the machine adapts to you, and not the other way around.” Or if the robot’s not sure about its decision, it can trigger a human response to get a more accurate answer.
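The agree/disagree loop can be illustrated with a minimal sketch for a two-option sorting task. The function name, the `errp_score` input (a hypothetical classifier probability that an error-related potential was present), and the 0.5 threshold are all assumptions made for illustration; the article does not describe the team's actual classifier or its interface.

```python
def corrected_choice(initial_choice: int, errp_score: float,
                     threshold: float = 0.5) -> int:
    """Return the robot's final pick in a two-option task.

    initial_choice: 0 or 1, the option the robot started moving toward.
    errp_score: hypothetical classifier output in [0, 1], the estimated
                probability that the observer's EEG showed an error signal.
    threshold: assumed decision cutoff, not a value from the article.
    """
    if initial_choice not in (0, 1):
        raise ValueError("initial_choice must be 0 or 1")
    error_detected = errp_score >= threshold
    # With only two options, a detected error means the other option is right.
    return 1 - initial_choice if error_detected else initial_choice


print(corrected_choice(0, 0.9))  # observer disagrees, robot switches
print(corrected_choice(0, 0.2))  # observer agrees, robot continues
```

Because the task is binary, a single "that was wrong" signal is enough to identify the correct target, which is why the experiment can get by with detecting only one kind of brain response.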

The team believes that future systems could extend to more complex multiple-choice tasks. The system could even be useful for people who can’t communicate verbally: the robot could be controlled via a series of several discrete binary choices, similar to how paralyzed locked-in patients spell out words with their minds.
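One way a series of binary choices could cover a larger menu is to halve the candidate list with each yes/no answer. The sketch below is only an illustration of that idea: the halving strategy, the function name, and the `is_in_first_half` callback (standing in for a decoded yes/no response) are assumptions, not something the researchers describe.

```python
from typing import Callable, List, Sequence, Tuple


def select_by_binary_choices(
    options: Sequence[str],
    is_in_first_half: Callable[[List[str]], bool],
) -> Tuple[str, int]:
    """Narrow a list of options to one item using only yes/no answers.

    is_in_first_half is a hypothetical callback that returns True when the
    user signals that the wanted option is in the group being shown.
    Returns the selected option and the number of questions asked.
    """
    candidates = list(options)
    if not candidates:
        raise ValueError("options must not be empty")
    questions = 0
    while len(candidates) > 1:
        mid = (len(candidates) + 1) // 2
        first_half = candidates[:mid]
        questions += 1
        candidates = first_half if is_in_first_half(first_half) else candidates[mid:]
    return candidates[0], questions


letters = list("ABCDEFGH")
wanted = "F"
choice, asked = select_by_binary_choices(letters, lambda group: wanted in group)
print(choice, asked)  # F 3
```

With eight options, three binary answers are enough; in general the number of questions grows with the base-2 logarithm of the number of options.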

The project was funded in part by Boeing and the National Science Foundation. An open-access paper will be presented at the IEEE International Conference on Robotics and Automation (ICRA) in Singapore this May.

Here, robot, Fetch!

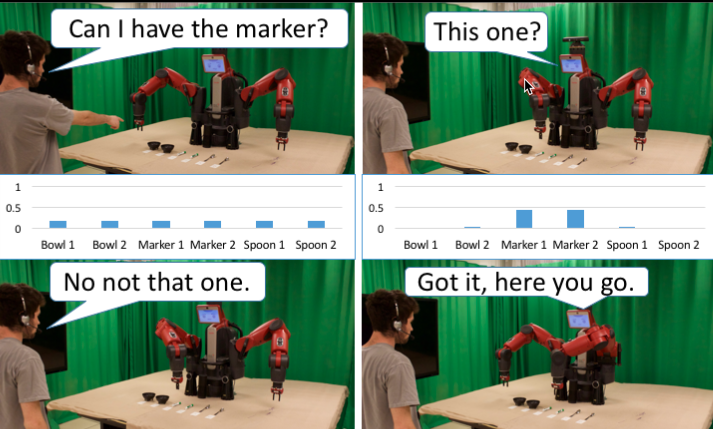

Robot asks questions, and based on a person’s language and gesture, infers what item to deliver. (credit: David Whitney/Brown University)

But what if the robot is still confused? Researchers in Brown University’s Humans to Robots Lab have an app for that.

“Fetching objects is an important task that we want collaborative robots to be able to do,” said computer science professor Stefanie Tellex. “But it’s easy for the robot to make errors, either by misunderstanding what we want, or by being in situations where commands are ambiguous. So what we wanted to do here was come up with a way for the robot to ask a question when it’s not sure.”

Tellex’s lab previously developed an algorithm that enables robots to receive speech commands as well as information from human gestures. But it ran into problems when there were lots of very similar objects in close proximity to each other. For example, on the table above, simply asking for “a marker” isn’t specific enough, and it might not be clear which one a person is pointing to if a number of markers are clustered close together.

“What we want in these situations is for the robot to be able to signal that it’s confused and ask a question rather than just fetching the wrong object,” Tellex explained.

The new algorithm does just that, enabling the robot to quantify how certain it is that it knows what a user wants. When its certainty is high, the robot will simply hand over the object as requested. When it’s not so certain, the robot makes its best guess about what the person wants, then asks for confirmation by hovering its gripper over the object and asking, “this one?”
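The hand-over-or-ask behavior can be sketched as a simple rule over the robot's belief about which object is wanted. This is a deliberate simplification: the paper formulates the problem as a POMDP rather than a fixed cutoff, and the function name, the belief dictionary, and the 0.8 threshold here are assumptions chosen only for illustration.

```python
from typing import Dict, Tuple


def decide_action(belief: Dict[str, float],
                  confidence_threshold: float = 0.8) -> Tuple[str, str]:
    """Choose between handing over an item and asking "this one?".

    belief maps each candidate item to the robot's estimated probability
    that it is the one the user wants (assumed to sum to 1).
    confidence_threshold is an illustrative value, not one from the paper.
    """
    if not belief:
        raise ValueError("belief must contain at least one item")
    best_item = max(belief, key=belief.get)
    if belief[best_item] >= confidence_threshold:
        return ("hand_over", best_item)
    # Not certain enough: hover over the best guess and ask for confirmation.
    return ("ask_this_one", best_item)


print(decide_action({"red marker": 0.9, "blue marker": 0.1}))
print(decide_action({"red marker": 0.55, "blue marker": 0.45}))
```

In the first call the robot is confident and hands over the red marker; in the second it still picks the red marker as its best guess but asks before acting.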

One of the important features of the system is that the robot doesn’t ask questions with every interaction; it asks intelligently.

And even though the system asks only a very simple question, it’s able to make important inferences based on the answer. For example, say a user asks for a marker and there are two markers on a table. If the user tells the robot that its first guess was wrong, the algorithm deduces that the other marker must be the one that the user wants, and will hand that one over without asking another question. Those kinds of inferences, known as “implicatures,” make the algorithm more efficient.
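The two-marker example amounts to updating a belief after a "no" answer. The sketch below assumes the user's answer is always reliable and simply rules out the rejected item and renormalizes; the function name and the probabilities are hypothetical, and the paper's actual model handles noisy observations within its POMDP.

```python
from typing import Dict


def update_after_no(belief: Dict[str, float],
                    rejected_item: str) -> Dict[str, float]:
    """Remove the rejected item from the belief and renormalize the rest.

    Assumes (for illustration only) that a "no" answer is never mistaken.
    """
    remaining = {item: p for item, p in belief.items() if item != rejected_item}
    total = sum(remaining.values())
    if total <= 0:
        raise ValueError("no remaining candidates with positive probability")
    return {item: p / total for item, p in remaining.items()}


belief = {"marker A": 0.6, "marker B": 0.4}
print(update_after_no(belief, "marker A"))  # {'marker B': 1.0}
```

With only two candidates, one "no" leaves a single item with probability 1, so the robot can hand it over without asking again.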

In future work, Tellex and her team would like to combine the algorithm with more robust speech recognition systems, which might further increase the system’s accuracy and speed. “Currently we do not consider the parse of the human’s speech. We would like the model to understand prepositional phrases (‘on the left,’ ‘nearest to me’). This would allow the robot to understand how items are spatially related to other items through language.”

Ultimately, Tellex hopes, systems like this will help robots become useful collaborators both at home and at work.

An open-access paper on the DARPA-funded research will also be presented at the International Conference on Robotics and Automation.

Abstract of Correcting Robot Mistakes in Real Time Using EEG Signals

Communication with a robot using brain activity from a human collaborator could provide a direct and fast feedback loop that is easy and natural for the human, thereby enabling a wide variety of intuitive interaction tasks. This paper explores the application of EEG-measured error-related potentials (ErrPs) to closed-loop robotic control. ErrP signals are particularly useful for robotics tasks because they are naturally occurring within the brain in response to an unexpected error. We decode ErrP signals from a human operator in real time to control a Rethink Robotics Baxter robot during a binary object selection task. We also show that utilizing a secondary interactive error-related potential signal generated during this closed-loop robot task can greatly improve classification performance, suggesting new ways in which robots can acquire human feedback. The design and implementation of the complete system is described, and results are presented for real-time closed-loop and open-loop experiments as well as offline analysis of both primary and secondary ErrP signals. These experiments are performed using general population subjects that have not been trained or screened. This work thereby demonstrates the potential for EEG-based feedback methods to facilitate seamless robotic control, and moves closer towards the goal of real-time intuitive interaction.

Abstract of Reducing Errors in Object-Fetching Interactions through Social Feedback

Fetching items is an important problem for a social robot. It requires a robot to interpret a person’s language and gesture and use these noisy observations to infer what item to deliver. If the robot could ask questions, it would help the robot be faster and more accurate in its task. Existing approaches either do not ask questions, or rely on fixed question-asking policies. To address this problem, we propose a model that makes assumptions about cooperation between agents to perform richer signal extraction from observations. This work defines a mathematical framework for an item-fetching domain that allows a robot to increase the speed and accuracy of its ability to interpret a person’s requests by reasoning about its own uncertainty as well as processing implicit information (implicatures). We formalize the item-delivery domain as a Partially Observable Markov Decision Process (POMDP), and approximately solve this POMDP in real time. Our model improves speed and accuracy of fetching tasks by asking relevant clarifying questions only when necessary. To measure our model’s improvements, we conducted a real world user study with 16 participants. Our method achieved greater accuracy and a faster interaction time compared to state-of-the-art baselines. Our model is 2.17 seconds faster (25% faster) than a state-of-the-art baseline, while being 2.1% more accurate.