How to read a mouse’s mind

February 21, 2013 by Amara D. Angelica

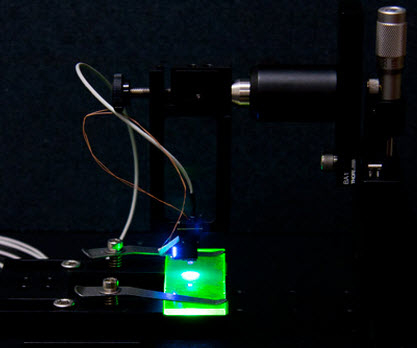

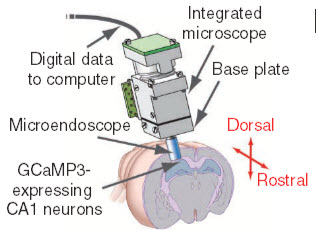

A tiny microscope equipped with a microendoscope images hippocampus CA1 pyramidal neurons expressing GCaMP3, a green fluorescent protein (credit: Yaniv Ziv et al./Nature Neuroscience)

Want to read a mouse’s mind — observing hundreds of neurons firing in the brain of a live mouse in real time — to see how it creates memories as it explores an environment?

You’ll just need some fluorescent protein and a tiny digital microscope implanted in the rodent’s head, Stanford University scientists say.

Here’s how:

1. First, you catch your mouse.

2. Light up some hippocampus (memory) neurons, specifically CA1 pyramidal cells. To do that, genetically engineer the neurons (using the viral vector AAV2/5-CaMKII-GCaMP3) to express a green fluorescent protein (GCaMP3), which is sensitive to the presence of calcium ions. (When a neuron fires, the cell naturally floods with calcium ions. Calcium stimulates the protein, causing the entire cell to fluoresce bright green.)

3. Implant a tiny microscope just above the mouse’s hippocampus, a part of the brain that is critical for spatial and episodic memory, to capture the light of about 700 neurons. The microscope is connected to a digital camera chip. (A Stanford spinoff called Inscopix has been set up to make and sell the device, called nVista HD. Inscopix’s Neuroscience Early Access Program (NEAP) is currently accepting applications from leading neuroscientists for access to this imaging technology.)

4. Connect the camera to a computer, which then displays near-real-time video of the mouse’s brain activity as the mouse runs around an arena (a small enclosure). Think Gladiator in miniature, with whiskers instead of swords.

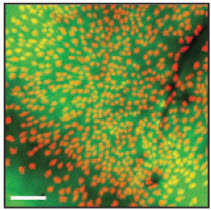

Calcium activity is shown here in red (the actual fluorescence is green); blood vessels appear as shadows. Scale bar: 100 microns (0.1 mm). (Credit: Yaniv Ziv et al./Nature Neuroscience)

5. Decode the image. The neuronal firings look like tiny green fireworks, randomly bursting against a black background. (Makes for a great light show too, although not as good as this one.)

Mark Schnitzer, an associate professor of biology and of applied physics and the senior author on the paper (in the journal Nature Neuroscience), explains:

“We can literally figure out where the mouse is in the arena by looking at these lights.” When a mouse is scratching at the wall in a certain area of the arena, a specific neuron will fire and flash green, he explains. When the mouse scampers to a different area, the light from the first neuron fades and a new cell sparks up.

“The hippocampus is very sensitive to where the animal is in its environment, and different cells respond to different parts of the arena. Imagine walking around your office. Some of the neurons in your hippocampus light up when you’re near your desk, and others fire when you’re near your chair. This is how your brain makes a representative map of a space.”
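To make the idea of reading position from "these lights" concrete, here is a minimal toy sketch in Python. It is not the method used in the paper. It assumes each cell has a single preferred location (a place field) whose center is already known, and it estimates the mouse's position as the activity-weighted average of those centers. The cell names, coordinates, and the `decode_position` function are all hypothetical, chosen only for illustration.

```python
from typing import Dict, Tuple

# Hypothetical place-field centers (x, y) in arena coordinates, in meters.
# In a real experiment these would be estimated from recordings, not hard-coded.
PLACE_FIELDS: Dict[str, Tuple[float, float]] = {
    "cell_a": (0.1, 0.1),
    "cell_b": (0.4, 0.1),
    "cell_c": (0.1, 0.4),
    "cell_d": (0.4, 0.4),
}


def decode_position(activity: Dict[str, float]) -> Tuple[float, float]:
    """Estimate position as the activity-weighted mean of place-field centers.

    `activity` maps a cell name to a non-negative fluorescence level for one
    video frame. Cells missing from the dict are treated as silent.
    """
    total = sum(activity.get(cell, 0.0) for cell in PLACE_FIELDS)
    if total <= 0:
        raise ValueError("no active place cells in this frame")
    x = sum(activity.get(c, 0.0) * PLACE_FIELDS[c][0] for c in PLACE_FIELDS) / total
    y = sum(activity.get(c, 0.0) * PLACE_FIELDS[c][1] for c in PLACE_FIELDS) / total
    return x, y


if __name__ == "__main__":
    # One frame in which cell_a and cell_b glow equally brightly.
    print(decode_position({"cell_a": 1.0, "cell_b": 1.0}))
```

In this toy model, a frame in which only `cell_a` is active decodes to that cell's place-field center, and a frame in which two cells are equally bright decodes to the midpoint between their centers. Real decoders work with hundreds of cells and noisy signals, so they are considerably more involved.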

6. Use this tool for studying new therapies for neurodegenerative diseases such as Alzheimer’s.

A mouse’s neurons fire in the same patterns, even when a month has passed between experiments, the researchers found. “The ability to come back and observe the same cells is very important for studying progressive brain diseases.”

For example, if a particular neuron in a test mouse stops functioning, as a result of normal neuronal death or a neurodegenerative disease, researchers could apply an experimental therapeutic agent and then expose the mouse to the same stimuli to see if the neuron’s function returns.

Although the technology can’t be used on humans, mouse models are a common starting point for new therapies for human neurodegenerative diseases, and Schnitzer believes the system could be a very useful tool in evaluating pre-clinical research for neurodegenerative diseases such as Alzheimer’s.