How to see around a corner without a mirror

June 19, 2014

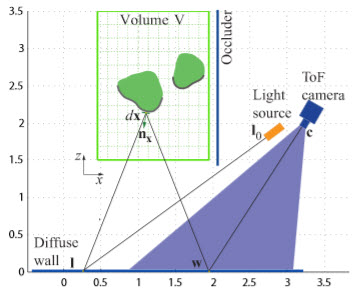

A laser projects a beam on a diffuse (rough) wall. The scattered light is reflected back to the wall and sensed by a “time of flight” camera, which correlates the time of arrival and brightness with the time sent to infer the shape and albedo (reflectance) of the object (credit: Felix Heide et al.)

A novel camera system that can detect objects hidden by obstructions — without using a mirror — has been developed by scientists at the University of Bonn and the University of British Columbia.

It uses diffusely reflected, time-coded light to reconstruct the shape of objects outside of the field of view.

Scattered light as a source of information

In the researchers’ prototype system, a laser dot on the wall is a source of scattered light, which serves as the crucial source of information. Some of this light, in a roundabout way, falls back onto the wall and finally into the camera.

“We are recording a kind of light echo, that is, time-resolved data, from which we can reconstruct the object,” explains research team leader Prof. Dr.-Ing. Matthias B. Hullin of the Institute of Computer Science II at the University of Bonn.

“Part of the light has also come into contact with the unknown object and it thus brings valuable information with it about its shape and appearance.”

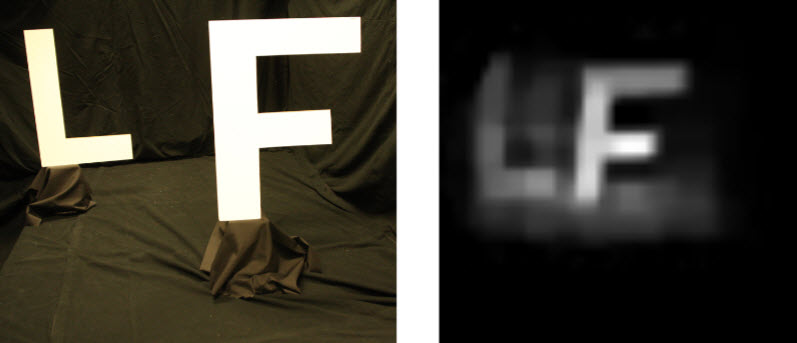

Reconstruction (right) of the projected image (left) from time-of-flight measurement and computation (credit: Felix Heide et al.)

Unlike a conventional camera, a time-of-flight camera records not only the direction from which light arrives but also how long the light took to travel from the source to the camera.
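As a rough illustration of why arrival time carries shape information, the following Python sketch computes how long light takes to travel from the laser spot on the wall to a hidden point and back to a second point on the wall. The function name `echo_delay`, the coordinates, and the single-point, straight-line geometry are assumptions made for this example; they are not taken from the researchers' setup.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s (air is close enough here)

def echo_delay(laser_spot, hidden_point, wall_point):
    """Travel time in seconds for: laser spot -> hidden point -> wall point.

    All arguments are (x, y, z) positions in meters (hypothetical values).
    """
    path_length = (math.dist(laser_spot, hidden_point)
                   + math.dist(hidden_point, wall_point))
    return path_length / C

# Two hidden points at different distances behind the corner (made-up values).
spot = (0.0, 0.0, 0.0)
wall_pixel = (0.5, 0.0, 0.0)
near = echo_delay(spot, (0.25, 0.0, 1.0), wall_pixel)
far = echo_delay(spot, (0.25, 0.0, 1.5), wall_pixel)
print(f"near: {near * 1e9:.2f} ns, far: {far * 1e9:.2f} ns")
```

A hidden point that is farther from the wall produces a later echo, so the delay seen at each wall position constrains where the hidden surface can be.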

Prof. Hullin explains how light echo makes the invisible visible (credit: Bonn University and UniBonn TV) (in German; YouTube closed-caption translation option suggested)

Dealing with multipath interference

However, the challenge is to decode the time-of-flight data. The problem is “multipath interference” — like talking in a room that reverberates so much you can’t sustain a conversation. “In principle, we are measuring nothing other than the sum of numerous light reflections which reached the camera through many different paths and which are superimposed on each other on the image sensor,” explains Hullin.
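The superposition Hullin describes can be sketched as a per-pixel time histogram in which every light path adds its intensity at its own delay. This is a toy model only: the function name `transient_pixel`, the bin width, and the listed paths are hypothetical values chosen for illustration.

```python
import math

def transient_pixel(paths, n_bins, bin_width_ns):
    """Sum many light paths into one time histogram for a single pixel.

    paths: iterable of (delay_ns, intensity) pairs, one per light path.
    Paths that arrive outside the recorded time window are ignored.
    """
    histogram = [0.0] * n_bins
    for delay_ns, intensity in paths:
        k = math.floor(delay_ns / bin_width_ns)
        if 0 <= k < n_bins:
            histogram[k] += intensity  # contributions simply add up
    return histogram

# One strong direct return plus three weak multipath echoes (made-up numbers).
paths = [(2.5, 1.0), (6.9, 0.05), (6.2, 0.03), (9.6, 0.02)]
hist = transient_pixel(paths, n_bins=12, bin_width_ns=1.0)
print(hist)
```

The sensor only sees the summed histogram. Two of the weak echoes land in the same time bin and can no longer be told apart directly, which is the decoding problem the reconstruction has to solve.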

Traditionally, one would try to remove the undesired multipath scatter and use only the direct portion of the signal, he says. However, using an advanced mathematical model, Hullin and his colleagues developed a method that extracts information from what would normally be discarded as noise, not just from the direct signal, since multipath light also originates from objects outside the field of view.

“The accuracy of our method has its limits, of course,” admits Hullin — the results are still limited to rough outlines. But based on the rapid development of technical components and mathematical models, better results can be achieved soon, he suggests.

Hullin hopes similar approaches can be used in applications such as telecommunications, remote sensing, medical imaging, rescue operations, and traffic safety (especially for self-driving cars).

Lower-cost options

Several systems have been developed for this kind of detection, including one from researchers at MIT, Harvard, the University of Wisconsin, and Rice University, as reported by KurzweilAI. “We draw inspiration from [the MIT et al. researchers], who were the first to demonstrate the use of multipath for shape reconstruction around the corner using ultrashort laser pulses and a streak camera,” Hullin explained to KurzweilAI in an email interview.

“Our contribution is to use computation to get rid of this extremely expensive (half a million dollars) and sensitive equipment (needs to be operated in total darkness) that can only image a single scanline at a time. Our reconstructions are based on data captured by an ordinary time-of-flight camera, which brings several advantages: three orders of magnitude cheaper, insensitive to ambient light, full-frame capture. The reconstruction process is based on a generative model (time-resolved radiosity), rather than [the MIT et al.] backprojection/filtering approach.

“The hardware for this system is already widely available in the form of time-of-flight cameras like the latest Microsoft Kinect. As we showed in earlier work, these types of sensors can be used to reconstruct transient images, or videos of light in flight, by solving a big linear system. Likewise, the step of making these systems look around the corner is software-only, so it could technically be deployed immediately.”

Another step in that direction: a small, cheap, power-efficient depth camera that uses time-of-flight measurements and could be incorporated into a future cellphone has already been developed at MIT’s Research Laboratory of Electronics, as KurzweilAI has reported.

The researchers will present their results at the Conference on Computer Vision and Pattern Recognition (CVPR), June 24–27 in Columbus, Ohio.

Abstract of IEEE Conference on Computer Vision and Pattern Recognition presentation

The functional difference between a diffuse wall and a mirror is well understood: one scatters back into all directions, and the other one preserves the directionality of reflected light. The temporal structure of the light, however, is left intact by both: assuming simple surface reflection, photons that arrive first are reflected first. In this paper, we exploit this insight to recover objects outside the line of sight from second-order diffuse reflections, effectively turning walls into mirrors. We formulate the reconstruction task as a linear inverse problem on the transient response of a scene, which we acquire using an affordable setup consisting of a modulated light source and a time-of-flight image sensor. By exploiting sparsity in the reconstruction domain, we achieve resolutions in the order of a few centimeters for object shape (depth and laterally) and albedo. Our method is robust to ambient light and works for large room-sized scenes. It is drastically faster and less expensive than previous approaches using femtosecond lasers and streak cameras, and does not require any moving parts.
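To give a feel for what a sparse linear inverse problem looks like, here is a minimal Python sketch. It is not the authors' solver: the tiny matrix `A` is a hypothetical stand-in for a light-transport model, and the iterative soft-thresholding algorithm (ISTA) is a generic textbook method swapped in for illustration. Each column of `A` is meant to represent the measurement that one candidate patch of the hidden scene would produce, and `b` is what the sensor recorded.

```python
def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def soft_threshold(v, t):
    """Shrink every entry toward zero by t; this is what promotes sparsity."""
    return [max(abs(x) - t, 0.0) * (1.0 if x > 0 else -1.0) for x in v]

def ista(A, b, lam, iters=2000):
    """Approximately minimize 0.5 * ||A x - b||^2 + lam * ||x||_1."""
    At = [list(col) for col in zip(*A)]
    # 1 / (squared Frobenius norm) is a conservative, always-safe step size.
    step = 1.0 / sum(a * a for row in A for a in row)
    x = [0.0] * len(A[0])
    for _ in range(iters):
        residual = [p - q for p, q in zip(matvec(A, x), b)]
        gradient = matvec(At, residual)
        x = soft_threshold([xi - step * gi for xi, gi in zip(x, gradient)],
                           lam * step)
    return x

# Hypothetical toy problem: 2 measurements, 3 candidate scene patches.
A = [[1.0, 0.0, 0.6],
     [0.0, 1.0, 0.8]]
b = [0.6, 0.8]  # produced by patch 3 alone, with albedo 1.0
x = ista(A, b, lam=0.05)
print([round(v, 3) for v in x])
```

There are fewer measurements than unknowns, so many different scenes would explain `b` equally well. The sparsity penalty favors the explanation that uses the fewest patches, and the estimate concentrates on the third patch, slightly shrunk by the penalty.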