How Watson works: a conversation with Eric Brown, IBM Research Manager

January 31, 2011 by Amara D. Angelica

For nearly two years IBM scientists have been working on a highly advanced Question Answering (QA) system, codenamed “Watson.” The scientists believe that the computing system will be able to understand complex questions and answer with enough precision, confidence, and speed to compete in the first-ever man vs. machine Jeopardy! competition, which will air on February 14, 15 and 16, 2011.

We had some questions, so we spoke with Dr. Eric Brown, a research manager at the IBM T.J. Watson Research Center who works in several areas on DeepQA, including designing and implementing the DeepQA architecture, developing and assessing hardware deployment models for DeepQA’s development and production cluster, and developing algorithms for special question processing. Eric has a background in Information Retrieval and Computer Systems Engineering.

Q: IBM’s Watson and Jeopardy! FAQ states that IBM expects Watson to “reach competitive levels of human performance.” Could you quantify that, and is there a range of dates?

Brown: So, competitive levels of human performance at the task of playing Jeopardy! is what we’re talking about there. Just to establish the context of what we’re talking about, what we’ve been working on for the last four years is this core technology that we call “DeepQA,” for “deep question-answering.” The first application of that technology is the system called Watson, which was built and tuned to play Jeopardy!

And we’re basically using Jeopardy! as a benchmark or a challenge problem to drive the development of that technology, and as a way to measure the progress of the technology. So, in this particular FAQ, when we’re talking about reaching competitive levels with human performance, here we’re talking specifically about Watson being able to compete in a real game of Jeopardy! — live, in real time — at the level of a human competitor. And, in fact, even better, at the level of a grand champion.

The way that we’ve been evaluating that is, this past fall, Watson has been competing in live sparring matches against Jeopardy! players that have actually won several games of Jeopardy! and gone on to compete in the Jeopardy! Tournament of Champions, so we’ll call them champion Jeopardy! players. We basically have played 55 different sparring games against these champion Jeopardy! players, and built up a record of performance there.

Then we have the Final Exhibition Match, where Watson is competing against Ken Jennings and Brad Rutter, and that’s the match that will actually air on television, on Jeopardy!, on February 14th, 15th and 16th. So, it’s the results of these various competitions that will allow us to evaluate this claim, of whether or not Watson has achieved human-level performance at the game of Jeopardy!.

So now, to speak more broadly is a little bit of a challenge, because Watson is the first application of this technology that we’ve fully developed. What we’ve started to do is look at other applications of the technology that are more aligned with IBM’s clients and business interests, and business problems that our clients are interested in solving. And the first one that we’re taking a look at is applying this technology in the medical domain — using it to do what we call differential diagnosis.

Now, using the capabilities of DeepQA to automatically generate hypotheses and gather evidence to support or refute those hypotheses, and then evaluate all of this evidence through an open, pluggable architecture of analytics, and then combine and weigh all those results to evaluate those hypotheses and make recommendations — that’s where we’re going with this technology. I think what you’re starting to get at is, in the broader context of artificial intelligence, are we making claims about DeepQA at that level? At this point, I’m not sure we’re ready to make any claims in a broader context.

Q: So a related question is: How well would Watson do on the Turing test at this point in time?

Brown: Whenever I get that question, my initial response is: If you were to rephrase the Turing test slightly, and couch it in terms of, if you had two players playing Jeopardy!, and you couldn’t tell which one was the computer and which one was the human, and that was the Turing test, then I think Watson would pass that very easily. But, of course, the Turing test is defined more broadly and open-ended, where you really have an open-ended dialogue, and Watson is not up to that task yet.

Within the constraints of the Jeopardy! game, and the way that those questions are phrased — again, using open-ended natural language, Watson is able to understand a very wide variety of these questions over a very broad domain, and answer them with an extremely high level of accuracy. But what we haven’t worked on yet is more of a dialogue system, where Watson starts to interact in an even more natural way.

I will say, though, that that is definitely a direction we want to take the technology in, especially as we apply it to other domains, where if you think of a decision support system or something that is assisting a professional in their information gathering, analysis, and decision-making processes, you would go to a more interactive dialogue scenario. But it’s still not up to the task of actually passing the Turing test.

Q: What are particularly good examples of questions that Watson gets correct, and that show its ability to deal with subtle human-thinking capabilities, like irony, riddles, or metaphor?

Brown: Well, there are a couple of commonly reoccurring, what we call puzzle-type questions in Jeopardy!. One kind of puzzle is what’s called “rhyme time,” where the clue actually breaks down into two parts, and the system has to recognize that there’s two parts to the clue and each sub-part has an answer, and then the constraint is that the answers to the two sub-parts have to rhyme. An example of one of those kinds of clues is the category, “edible rhyme time,” and the clue is: “A long, tiresome speech delivered by a frothy pie topping.”

So there, the system has to recognize that there are two parts to this clue. The first part is a long, tiresome speech, and the second part is a frothy pie topping. Then, it comes up with possible answers for each of those sub-parts. So a long, tiresome speech could be a diatribe, or in this case (which is the correct answer), a harangue. And then, a frothy pie topping could be something like meringue, which is, in fact, the answer we are looking for.

But there are other frothy pie toppings, like whipped cream or things like that, so then you’ve got possible answers for each sub-clue, and you have this rhyming constraint, where now you look for the two words that rhyme. In this case, it’s meringue and harangue, and the final answer, using typical rules of English, where you put the modifier before the noun, you then rewrite it to be meringue harangue. So, the correct answer to the clue would be a “meringue harangue.”
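The rhyme-time procedure Brown describes (generate candidates for each sub-clue, then keep only the pairs that rhyme) can be sketched in a few lines of Python. This is only an illustration, not Watson's implementation: the function names are hypothetical, the candidate lists are typed in by hand, and the rhyme test is a crude spelling comparison standing in for a real pronunciation-based check.

```python
from itertools import product


def crude_rhyme(a: str, b: str, tail: int = 4) -> bool:
    """Rough rhyme test: do the last words end in the same letters?

    Assumption: spelling approximates sound. A real system would
    compare pronunciations instead.
    """
    last_a = a.lower().split()[-1]
    last_b = b.lower().split()[-1]
    return last_a != last_b and last_a[-tail:] == last_b[-tail:]


def solve_rhyme_time(modifiers: list[str], nouns: list[str]) -> list[str]:
    """Return 'modifier noun' answers whose two parts rhyme."""
    return [
        f"{m} {n}"
        for m, n in product(modifiers, nouns)
        if crude_rhyme(m, n)
    ]


# Hand-written candidates for each sub-clue, taken from the interview.
toppings = ["whipped cream", "meringue"]   # "a frothy pie topping"
speeches = ["diatribe", "harangue"]        # "a long, tiresome speech"

answers = solve_rhyme_time(toppings, speeches)
print(answers)
```

The topping goes first because, as Brown notes, English puts the modifier before the noun.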

Q: OK, what about metaphor? That’s a little bit more of a challenge.

Brown: Off the top of my head, I would probably have to dig a little bit for a good example of something like that. You know, what we do often see is that the clues often have what I’ll call extraneous elements to them, that are there more for entertainment value than actually being required to answer the clue. What Watson seems to be particularly good at is, picking through all of that and zeroing in on the relevant part of the clue.

In fact, for me anyway (I’m a terrible Jeopardy! player), there are some clues that are so complex that I’m still trying to figure out what the clue even means by the time Watson has actually come up with the correct answer, just because there’s all of this additional wording in there that you kind of have to pick through to actually get at what’s really being asked.

The reason that being able to do that is so interesting is because you can easily imagine real business applications where the questions that are being expressed, or the scenario that you have to analyze and solve, is not worded very crisply or concisely. Especially, say, a tech-support problem where an end user is just doing a brain dump of everything that’s gone wrong, or maybe giving you details that are completely superfluous, and you need to be able to pick through that and identify the core question. Watson seems to be particularly good at doing that.

Smarter search engines?

Q: So would you see Watson moving toward serving as a back end to search engines in the future, and moving toward cloud computing?

Brown: Actually, it’s almost the reverse. Watson solves a different problem than a traditional Web search engine does. I think ultimately, both of these technologies will have a role in supporting humans in everyday information seeking, gathering and analysis tasks. Watson, or DeepQA, actually uses several search engines inside it to do some of its preliminary searching and candidate answer generation.

So, that’s why I say it’s almost the reverse — rather than a search engine using Watson, it’s already the case that Watson uses a number of different search engines in its initial stages of searching through all of its content to find possible candidate answers. These candidate answers then go through much deeper levels of scoring and analysis and gathering additional evidence, which is analyzed by a variety of analytics.

But the reason I want to make a distinction between the two high-level tasks of using a search engine, versus using something like Watson, is Watson or the underlying technology is really more general than “question in, answer out.” It’s really much more of a hypothesis generation system, with the ability to gather evidence that either supports or refutes these hypotheses, and then analyze and evaluate that evidence using a variety of deep analytics. For instance, natural language processing analytics that can understand grammar or syntax or semantics, as well as other, say, semantic-web type technologies that can compare entities and relationships and evaluate things at that level. All of this is used in more of a decision support system, so it’s not just straightforward search; it’s solving a different kind of problem.

Q: Would it be accurate to say that Watson’s capability is somewhat analogous to Wolfram Alpha, in terms of providing that capability?

Brown: I think that the Watson system that plays Jeopardy! has a lot of similarities with Wolfram Alpha at the interface level, in terms of a question in and a precise answer out. But, once you peel back the interface layer and actually look at the implementation, and the types of questions that each of those two systems can actually answer, things start to differ quite a bit. My understanding of Wolfram Alpha, which is not complete, is that it’s built largely on manually curated databases and a lot of human knowledge engineering, and then when it is able to understand the question, it can then precisely map that into searches or queries against its underlying data to come up with a precise answer.

Watson does not take an approach of trying to curate the underlying data or build databases or structured resources, especially in a manual fashion, but rather, it relies on unstructured data — documents, things like encyclopedias, web pages, dictionaries, unstructured content.

Then we apply a wide variety of text analysis processes, a whole processing pipeline, to analyze that content and automatically derive the structure and meaning from it. Similarly, when we get a question as input, we don’t try and map it into some known ontology or taxonomy, or into a structured query language.

Rather, we use natural language processing techniques to try and understand what the question is looking for, and then come up with candidate answers and score those. So in general, Watson is, I’ll say more robust for a broader range of questions and has a much broader way of expressing those questions.

Watson on an iPhone?

Q: Well, given that robustness, when do you expect it to be available to the general public, say, to provide what you get on Answers.com?

Brown: That is what we’re currently working on now, applying the underlying technology to a number of different business applications. I think there’s a couple of elements to that answer. The first question is: would IBM get into that kind of business? I don’t know if that kind of business model makes sense for IBM, so I don’t know if we have any plans there. Our focus, at least with this technology initially, is going to be looking at specific business applications, and as I said, right now, the first one that we’re interested in exploring is in the medical domain or in the healthcare domain. We really don’t have an estimate of when something like that would be available.

Q: Are you free to mention other possible applications?

Brown: The other applications that we’re thinking about would include things like help desk, tech support, and business intelligence applications … basically any place where you need to go beyond just document search, but you have deeper questions or scenarios that require gathering evidence and evaluating all of that evidence to come up with meaningful answers.

Q: Does Watson use speech recognition?

Brown: No, what it does use is speech synthesis, text-to-speech, to verbalize its responses and, when playing Jeopardy!, make selections. When we started this project, the core research problem was the question answering technology. When we started looking at applying it to Jeopardy!, I think very early on we decided that we did not want to introduce a potential layer of error by relying on speech recognition to understand the question.

If you look at the way Jeopardy! is played, that’s actually, I think, a fair requirement, because when a clue is revealed, the host reads the clue so that the contestants can hear it. But at the same time, the text of the clue is revealed to the players and they can read it instantaneously. Since the human players can read the clues, the full text of each clue is shown to the contestants on a large display when it is revealed, and that same text is sent to Watson in an ASCII file format.

Q: Simultaneously with the reading of the clue, right?

Brown: Exactly. Basically, the time that it takes the host to read the clue, is the amount of time that all of the players have to come up with their answer, and to decide whether they want to attempt to answer the clue. Each player has a signaling device, what we refer to as a buzzer, and the signaling device is actually not enabled until the host finishes reading the clue.

Then, at that point, if you think you know the answer and are confident enough to ring in, you can depress your signaling device, and if you’re the first one to ring in, you get to try and answer the clue. So, it’s basically the time it takes the host to read the clue, that’s the amount of time Watson has to come up with its answer and its confidence, and decide whether or not it’s going to try and ring in and answer the clue.
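The ring-in decision Brown outlines has two conditions: an answer has to be ready by the time the host finishes reading, and the confidence in it has to be high enough to risk buzzing. A minimal Python sketch follows. The `Candidate` type, the function name, and the 0.5 threshold are all assumptions made for illustration; the interview does not say what threshold Watson uses or how it is set.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    answer: str
    confidence: float  # estimated probability of being correct, 0..1


def should_ring_in(best: Candidate, elapsed_s: float, reading_time_s: float,
                   threshold: float = 0.5) -> bool:
    """Ring in only if the answer was ready in time and is confident enough.

    threshold is a hypothetical value chosen for this example.
    """
    ready_in_time = elapsed_s <= reading_time_s
    return ready_in_time and best.confidence >= threshold


best = Candidate("meringue harangue", confidence=0.9)
print(should_ring_in(best, elapsed_s=2.1, reading_time_s=3.0))
```

A real player would also have to weigh the game state (scores, clue value) when picking the threshold; this sketch ignores that.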

Q: Interesting. So is Watson actually working on it as the person is reading the clue?

Brown: Yes. As it receives the text of the clue at the very beginning, it immediately starts processing the clue and trying to come up with an answer. [The “answer” buttons are disabled until Alex Trebek finishes reading the entire question.]

Does Watson need a supercomputer?

Q: So that brings up a question: the IBM FAQ mentions the possibility of moving to Blue Gene. Are you going to stay with POWER7, or move to a supercomputer?

Brown: That is actually an old question in the FAQ; it probably should be updated, and I apologize for that. Earlier on in the project, we explored moving to Blue Gene, and it turns out that the computational model supported by Blue Gene is not ideal for what we need to do in Watson. Blue Gene works great for highly data-parallel processing problems, like doing protein folding or weather forecasting or doing these massive simulations, but the analytics that we run in Watson tend to be fairly expensive and heavyweight from a single-thread point of view, and actually run much better on a much more powerful processor.

If you look at the power of a single processor in a Blue Gene, at least the Blue Gene/P, those are something like an 850 MHz PowerPC processor, and that does not provide the single-threaded performance that most of the analytics that we run in Watson would require.

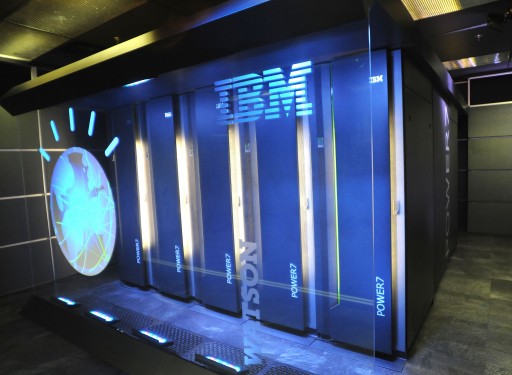

The POWER7, however, and in particular the Power 750 server that Watson is deployed on, does have that performance. In fact, it’s ideal as a workload-optimized system for Watson. Each server has 32 cores in it, and each one of these cores is a 3.55 GHz, full-blown POWER7 processor. And each server can have up to 256 GB of RAM, and our analytics — not only are they expensive to run, but they also use fairly large resources, and we typically want these loaded into main memory.

So what we can do with the core density of a Power 750 is run several copies of a particular analytic or collection of analytics that share some common big resources, and have that all loaded into this shared memory, which turns out to really be the ideal platform for running Watson. In our production system, I think we actually have a total of 90 of these Power 750 servers available to run Watson while it’s playing Jeopardy!

Q: Can you expand on the single-threading model?

Brown: Well, when I say single threading, the only point I’m making there is that we have some fairly heavyweight analytics that run on a single thread, so they are not internally parallelized; they’re basically running as sequential processes. So in order for them to run fast enough, they need a fairly powerful processor. When Watson is actually processing one of these clues, we have many copies of these analytics running in parallel, distributed across, essentially, a network of computers.

When you look at the processing of a clue, throughout the entire pipeline, at the beginning, we start with a single clue, we analyze the clue, and then we go through a candidate generation phase, which actually runs several different primary searches, which each produce on the order of 50 search results. Then, each search result can produce several candidate answers, and so by the time we’ve generated all of our candidate answers, we might have three to five hundred candidate answers for the clue.

Now, all of these candidate answers can be processed independently and in parallel, so now they fan out to answer-scoring analytics that are distributed across this cluster, and these answer-scoring analytics score the answers. Then, we run additional searches for the answers to gather more evidence, and then run deep analytics on each piece of evidence, so each candidate answer might go and generate 20 pieces of evidence to support that answer.
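To get a feel for the size of this fan-out, the figures Brown quotes can be multiplied out. The short Python calculation below uses only his numbers (three to five hundred candidates, about 20 pieces of evidence each) and treats them as rough orders of magnitude, not measurements.

```python
EVIDENCE_PER_CANDIDATE = 20      # "might go and generate 20 pieces of evidence"
CANDIDATE_RANGE = (300, 500)     # "three to five hundred candidate answers"


def evidence_items(candidates: int,
                   per_candidate: int = EVIDENCE_PER_CANDIDATE) -> int:
    """Number of evidence items to analyze for one clue."""
    return candidates * per_candidate


low, high = (evidence_items(c) for c in CANDIDATE_RANGE)
print(low, high)  # 6000 10000
```

So a single clue can turn into several thousand independent pieces of work, which is what makes a large cluster useful.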

Now, all of this evidence can be analyzed independently and in parallel, so that fans out again. Now you have evidence being deeply analyzed on the cluster, and then all of these analytics produce scores that ultimately get merged together, using a machine-learning framework to weight the scores and produce a final ranked order for the candidate answers, as well as a final confidence in them. Then, that’s what comes out in the end.

So, if you kind of visualize a fan-out going from the beginning of the process to the end, and then kind of collapsing back at the end, to the final ranked answers, it’s this fan out and generation of all of these intermediate candidate answers and pieces of evidence that can be scored independently that allow us to leverage a big parallel-processing cluster.
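The fan-out and collapse Brown describes can be mimicked on one machine with a thread pool. The Python sketch below is a toy under stated assumptions: the word-overlap "analytic", the two features, and the weights and bias are all invented for illustration (the interview says the real weights come from a machine-learning framework), and local threads stand in for a cluster only to show the shape of the computation.

```python
import math
from concurrent.futures import ThreadPoolExecutor

# Hypothetical weights; in the interview these are learned, not hand-set.
WEIGHTS = {"term_overlap": 4.0, "evidence_count": 0.5}
BIAS = -3.0


def score_evidence(clue: str, passage: str) -> float:
    """Toy analytic: fraction of clue words that appear in the passage."""
    clue_words = set(clue.lower().split())
    passage_words = set(passage.lower().split())
    return len(clue_words & passage_words) / len(clue_words)


def score_candidate(clue: str, answer: str,
                    evidence: list[str]) -> tuple[str, float]:
    """Merge per-evidence scores into one confidence via a logistic function."""
    overlaps = [score_evidence(clue, p) for p in evidence]
    features = {
        "term_overlap": max(overlaps, default=0.0),
        "evidence_count": float(len(evidence)),
    }
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return answer, 1.0 / (1.0 + math.exp(-z))


def rank_candidates(clue: str,
                    candidates: dict[str, list[str]]) -> list[tuple[str, float]]:
    # Fan out: each candidate is scored independently.
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(score_candidate, clue, answer, evidence)
                   for answer, evidence in candidates.items()]
        results = [f.result() for f in futures]
    # Collapse: one ranked list with a confidence per answer.
    return sorted(results, key=lambda pair: pair[1], reverse=True)


ranked = rank_candidates(
    "frothy pie topping",
    {
        "meringue": ["meringue is a frothy topping for pie", "lemon meringue pie"],
        "gravy": ["gravy is a sauce"],
    },
)
print(ranked[0][0])
```

Because every candidate is scored without reference to the others, the same structure could be spread across many machines, which is the property Brown is pointing at.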

Q: Why wouldn’t Blue Gene/P be capable of that?

Brown: The issue we ran into with the Blue Gene/P is that, to give you sort of a concrete but hypothetical example: let’s say we have an algorithm that, on a POWER7 processing core, takes 500 milliseconds to score all of the evidence for a particular candidate answer. Now, if we take that same algorithm and run it on a Blue Gene, on a single core of the Blue Gene, which is only 850 MHz, let’s say, it’s ten times slower. So, now it’s going to take five seconds, and that already exceeds the time budget for getting the entire clue answered, because on average you have about three seconds to come up with your answer and your confidence, and decide whether or not you’re going to ring in.

So what I mean by this single-threaded processing performance is that there are just certain analytics that the total time they take, if it exceeds a certain budget, is already too slow, and there’s no way to make it faster by doing things in parallel. Due to the sequential processing that particular analytic does, if the processor can’t do it fast enough, it’s going to be too slow. That’s the main reason why the POWER7 works much better with this particular application than Blue Gene.
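Brown's point is that adding cores shortens the queue of work but never shortens a single sequential task. The Python sketch below makes that concrete with his hypothetical numbers (500 milliseconds on POWER7, ten times slower on Blue Gene, a budget of about three seconds). The function name and the figure of 400 tasks are assumptions for the example, and the model is simplified: identical cores, independent tasks, no scheduling overhead.

```python
import math

BUDGET_MS = 3000.0  # "on average you have about three seconds"


def wall_clock_ms(tasks: int, cores: int, per_task_ms: float) -> float:
    """Time to finish independent sequential tasks on identical cores.

    Each task occupies one core from start to finish, so the result can
    never drop below per_task_ms, however many cores are available.
    """
    rounds = math.ceil(tasks / cores)
    return rounds * per_task_ms


POWER7_TASK_MS = 500.0                    # Brown's hypothetical figure
BLUE_GENE_TASK_MS = POWER7_TASK_MS * 10   # his "ten times slower" assumption

fast = wall_clock_ms(tasks=400, cores=2880, per_task_ms=POWER7_TASK_MS)
slow = wall_clock_ms(tasks=400, cores=4096, per_task_ms=BLUE_GENE_TASK_MS)
print(fast <= BUDGET_MS, slow <= BUDGET_MS)
```

Even with more cores than tasks, the slower core misses the budget, because the floor is set by the time one task takes on one core.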

It’s based on the power of a single core, but then also the ability to have a large number of cores in the whole cluster. Our entire POWER7 cluster that we’re running Watson on has 2,880 cores. One rack of Blue Gene/P actually has 4,096 cores, but these are 850 MHz cores, compared to the 3.55 GHz core on the POWER7.
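The core counts quoted in the interview can be checked with simple arithmetic. The Python snippet below uses only figures Brown gives. The clock ratio is shown for scale only: clock speed is not a direct measure of single-thread performance, and the "ten times slower" figure in his example is explicitly hypothetical.

```python
SERVERS = 90               # Power 750 servers in the production system
CORES_PER_SERVER = 32      # cores per Power 750
POWER7_GHZ = 3.55
BLUE_GENE_P_GHZ = 0.85     # 850 MHz
BLUE_GENE_P_RACK_CORES = 4096

power7_cores = SERVERS * CORES_PER_SERVER
clock_ratio = POWER7_GHZ / BLUE_GENE_P_GHZ

print(power7_cores)            # 2880
print(round(clock_ratio, 2))   # 4.18
```

So one Blue Gene/P rack has about 1.4 times as many cores as the whole Watson cluster, but each runs at less than a quarter of the clock rate.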