In the beginning was the code

March 15, 2013 by Jürgen Schmidhuber

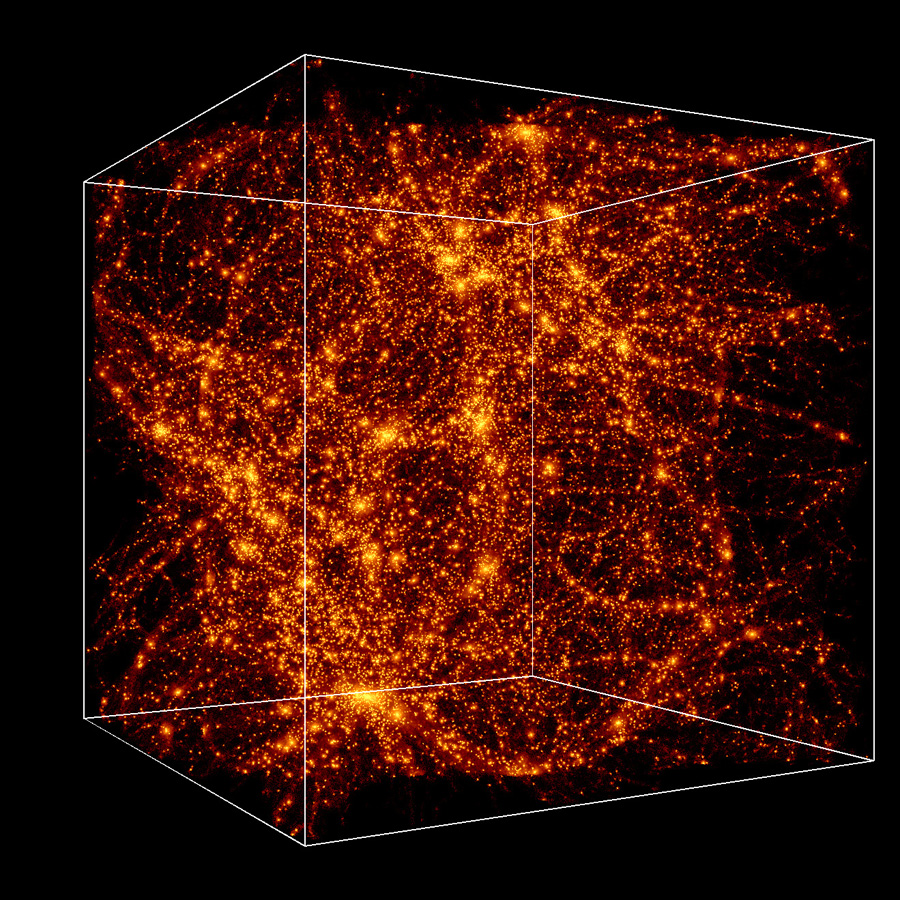

A supercomputer simulation of the evolution of the universe (credit: Andrey Kravtsov/University of Chicago)

There is a fastest, optimal, most efficient way of computing all logically possible universes, including ours, if ours is computable (and there is no evidence against this). Any God-like “Great Programmer” with any self-respect should use this optimal method to create and master all logically possible universes.

At any given time, most of the universes computed so far that contain yourself will be due to one of the shortest and fastest programs computing you. This insight allows for making non-trivial predictions about the future. We also obtain formal, mathematical answers to age-old questions of philosophy and theology.

Transcript of Jürgen Schmidhuber’s TEDx talk at UHasselt, Belgium, Nov. 10, 2012

I will talk about the simplest explanation of the universe. The universe is following strange rules. Einstein’s relativity. Planck’s quantum physics. But the universe may be even stranger than you think. And even simpler than you think.

Is the universe being created by a computer program?

Many scientists are now taking seriously the possibility that the entire universe is being computed by a computer program, as first suggested in 1967 by the legendary Konrad Zuse, who also built the world’s first working general computer between 1935 and 1941. [1]

Zuse’s 1969 book Calculating Space discusses how a particular computer, a cellular automaton, might compute all elementary particle interactions, and thus the entire universe.

The idea is that every electron behaves the same, because all electrons re-use the same subprogram over and over again.
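To make the idea of a cellular automaton concrete, here is a minimal sketch of a one-dimensional elementary cellular automaton. It is not Zuse's own construction. It simply shows a grid in which every cell re-uses the same tiny subprogram (a lookup of its left neighbor, itself, and its right neighbor) at every step. The rule numbering follows the common Wolfram convention, and the function name `step` and the wrap-around edges are illustrative choices.

```python
def step(cells: list[int], rule: int) -> list[int]:
    """One synchronous update: every cell applies the same local rule to
    (left neighbor, itself, right neighbor). Edges wrap around."""
    n = len(cells)
    return [
        # Encode the 3-cell neighborhood as a number 0..7 and read that bit of `rule`.
        (rule >> (cells[(i - 1) % n] << 2 | cells[i] << 1 | cells[(i + 1) % n])) & 1
        for i in range(n)
    ]


# Rule 90: each new cell is the XOR of its two neighbors.
cells = [0] * 31
cells[15] = 1  # a single "particle" in the middle
for _ in range(16):
    print("".join("#" if c else "." for c in cells))
    cells = step(cells, 90)
```

Starting from a single live cell, rule 90 grows a Sierpinski-triangle pattern. The structure comes entirely from one rule applied identically everywhere.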

First consider the virtual universe of a video game with a realistic 3D simulation. In your computer, the game is encoded as a program, a sequence of ones and zeroes. Looking at the program, you don’t see what it does. You have to run it to experience it.

Reality still has higher resolution than video games. But soon you won’t see a difference anymore: every decade, simulations are becoming 100–1000 times better, because computing power per Swiss franc is growing by a factor of 100–1000 per decade.

A few decades imply a factor of a billion. Soon, we’ll be able to simulate very convincing heavens and hells. It will seem quite plausible that the real world itself also is just a simulation.
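The arithmetic behind “a few decades imply a factor of a billion” can be checked in a few lines. This minimal sketch assumes a constant growth factor per decade taken from the talk’s 100–1000 range, which is a simplification. The function name is illustrative.

```python
import math


def years_to_factor(target: float, factor_per_decade: float) -> float:
    """Years of steady exponential growth needed to multiply computing power
    by `target`, assuming a constant improvement of `factor_per_decade` per ten years."""
    return 10 * math.log(target) / math.log(factor_per_decade)


for f in (100, 1000):
    print(f"{f}x per decade: {years_to_factor(1e9, f):.0f} years to a factor of a billion")
```

At 1000x per decade, a billion-fold improvement takes three decades. At 100x per decade it takes four and a half.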

To a man with a hammer, everything looks like a nail. To a man with a computer, everything looks like a computation.

Skeptics might say: What about quantum physics, and Heisenberg’s uncertainty principle, and Bell’s inequality? Don’t they imply that the universe cannot be produced by a deterministic program? Not at all. Bell himself knew well that deterministic universes including deterministically computable observers are fully compatible with all available physical observations.

The universe as the sum of all mathematics

When my brother Christof was a teenager in the early 1980s in Munich, he told me and others: the universe, or quantum multiverse, is the sum of all mathematics. I believe he is the reason why such ideas emerged in Munich.

He was younger than me. He still is. He also was smarter than me. He went on to become a physicist at Munich, Caltech, Princeton, and CERN, and he lived in Berne next door to where Einstein lived.

It took me a while to understand what my brother meant. In 1996, I formalized his idea in terms of computation. I generalized Everett’s many-worlds theory, pointing out that there is a very short and fast program that not only computes our own universe, or multiverse, but also all other logically possible universes, even those with different physical laws, for example, universes with anti-gravity.

In fact, there is a fastest, optimal, most efficient way of computing all logically possible universes, including ours, if ours is computable (and there is no evidence against this).

The optimal method can be programmed with only ten lines of code. I wrote it down for you — here it is! [Holds up a piece of paper.] [2]

Any God-like “Great Programmer” with some self-respect should use this optimal method to create and master all logically possible universes.

Suppose he runs it for a while. At some point, many of the executed programs will have computed universes that contain you! You, as you are sitting here and staring at me with incredulous eyes.

You could even become a “Great Programmer” yourself, using the optimal method [holds note up again] to simulate all possible universes in nested fashion. (But this would not necessarily help you figure out the future faster than by waiting for it to happen. The computer running this program would have to be built within our universe and, as a small part of it, would be unable to run as fast as the universe itself.)

Anyway, now it’s easy to see that due to the nature of the optimal method, at any given time, most of the universes computed so far that do contain yourself will be due to one of the shortest and fastest programs computing: YOU.
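The claim that the shortest and fastest programs dominate can be made concrete with a little bookkeeping. This is a minimal sketch under a simplified model. A program of l(p) bits needs a fixed number of steps T to reach the moment you appear. The FAST schedule gives it 2^(i−l(p)) fresh steps in every phase i ≥ l(p), because storage is reset between phases. The program lengths and step counts below are hypothetical.

```python
def first_phase(length: int, steps_needed: int) -> int:
    """First FAST phase i in which a program of `length` bits gets at least
    `steps_needed` steps; it gets 2**(i - length) steps in each phase i >= length."""
    i = length
    while 2 ** (i - length) < steps_needed:
        i += 1
    return i


def times_computed(length: int, steps_needed: int, phase: int) -> int:
    """How many phases up to `phase` have computed the moment you appear.
    Storage is reset every phase, so each later phase recomputes it once more."""
    return max(0, phase - first_phase(length, steps_needed) + 1)


# Hypothetical programs that all compute a universe containing you.
candidates = {
    "short and fast": (10, 2**20),
    "short but slow": (10, 2**30),
    "long but fast": (20, 2**20),
}
for name, (length, steps) in candidates.items():
    print(f"{name:15} first reaches you in phase {first_phase(length, steps)}, "
          f"and has done so {times_computed(length, steps, 45)} times by phase 45")
```

In this model, the first phase is l(p) + ⌈log2 T⌉. One extra bit of program length therefore costs exactly as much as doubling the running time. The short and fast program reaches you ten phases earlier than either rival and has computed you far more often by any given phase.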

Predictions

This insight allows for making non-trivial predictions about the future. There are many possible futures of your past so far. Which one is going to happen? Answer: given the probability distribution induced by the optimal method, most likely one of the few regular, non-random futures with a fast and short program.[3] (Because the weird futures where suddenly the rules change and everything dissolves into randomness are fundamentally harder to compute, even by the optimal method. Random stuff by definition does not have a short program.)
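The statement that random stuff has no short program can be illustrated with a compressor. The length of the shortest program for a string (its Kolmogorov complexity) is uncomputable, but a general-purpose compressor gives a crude, computable upper bound. This minimal sketch uses Python’s standard `zlib` module as that stand-in. It is not the optimal method or the Speed Prior, and the two example sequences are made up.

```python
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Bytes after zlib compression: a crude upper bound on description length."""
    return len(zlib.compress(data, 9))


n = 100_000
regular = b"01" * (n // 2)                           # a "lawful" future: one simple rule
rng = random.Random(42)                              # seeded, so the run is repeatable
noisy = bytes(rng.getrandbits(8) for _ in range(n))  # a future that "dissolves into randomness"

print("regular:", compressed_size(regular), "bytes")
print("random: ", compressed_size(noisy), "bytes")
```

The regular sequence shrinks to a few hundred bytes, while the noisy one does not shrink at all. There is a twist, though. The “random” bytes here are in fact produced by a short, seeded pseudo-random program that `zlib` cannot detect. A compressor only bounds description length from above.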

This implies that the decay of neutrons, widely believed to be random, most likely is not random but pseudo-random, like the decimal expansion of π, which looks random but isn’t, because it is computable by a short and fast program.

Why quantum computing may never scale

The optimal method also implies that quantum computation will never work well, essentially because it is consuming so many basic computational resources. I first made this prediction a dozen years ago. Since then, there has not been any progress in practical quantum computation, despite a lot of effort. (The biggest number factored into its prime factors by any existing quantum computer is still 15.) Quantum computation is sexy, but dead.

What about free will? Free will is overrated. In my group at the Swiss AI Lab IDSIA, we often program simulated worlds including simulated observers with simulated artificial brains. Through pseudo-random trial and error they even learn from experience to become smarter over time, acting as if they had free will. They have no idea that every thought in their artificial neural networks is computed by a deterministic program. (In a way, they do have free will — it’s just deterministically computed free will.)
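A toy version of such a learner shows how trial and error driven by a seeded pseudo-random number generator can look exploratory yet be completely reproducible. This is a minimal sketch. The reward function, the single parameter, and all names are hypothetical, and this is not code from IDSIA.

```python
import random


def reward(x: float) -> float:
    """Made-up reward: the best possible behavior is x = 3.0."""
    return -(x - 3.0) ** 2


def train_agent(seed: int, trials: int = 200) -> float:
    """Toy trial-and-error learner: try random tweaks to one parameter and keep
    a tweak only if it improves the reward."""
    rng = random.Random(seed)                    # pseudo-random, fully determined by seed
    x = 0.0
    for _ in range(trials):
        candidate = x + rng.uniform(-1.0, 1.0)   # an apparently "free" exploratory choice
        if reward(candidate) > reward(x):
            x = candidate                        # learn from experience
    return x


run1 = train_agent(seed=7)
run2 = train_agent(seed=7)
print(run1 == run2)  # True: same seed, same "choices", same learned behavior
```

From the inside, the agent appears to choose and improve. From the outside, rerunning it with the same seed reproduces every “decision” exactly.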

Computational theology

Nevertheless, computer science is now giving us formal, mathematical answers to old questions of philosophy and theology. One of the results of my Computational Theology is this: your own life must be very important in the grand scheme of things.

You may think that your life is insignificant, because you are so small, and the universe is so big. But given the Great Programmer’s optimal way of computing all universes, it is probably very hard to edit your life (or mine) out of our particular universe: Any program that produces a universe like ours, but without you, is probably much longer and slower (and thus less likely) than the original program that includes you.
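The phrase “longer and slower (and thus less likely)” can be turned into a rough number. This minimal sketch assumes a Levin-style cost, l(p) + log2 t(p) (program length plus the logarithm of runtime), and a weight of 2^(−cost) as a stand-in for the Speed Prior. The Speed Prior’s actual definition in Schmidhuber’s papers is more involved. Every number below is hypothetical.

```python
import math


def kt_cost(length_bits: int, steps: int) -> float:
    """Levin-style cost of a program: its length in bits plus log2 of its runtime."""
    return length_bits + math.log2(steps)


def relative_weight(orig_bits: int, orig_steps: int,
                    edited_bits: int, edited_steps: int) -> float:
    """Weight of the edited program relative to the original, using 2**(-cost)
    as a rough stand-in for a speed-based prior (an assumption, not the Speed Prior)."""
    return 2.0 ** (kt_cost(orig_bits, orig_steps) - kt_cost(edited_bits, edited_steps))


# Hypothetical: erasing you costs 200 extra bits of description and 4x the runtime.
ratio = relative_weight(1000, 2**60, 1200, 2**62)
print(f"edited universe is about 2^{math.log2(ratio):.0f} times as likely")
```

In this model, every extra bit needed to describe the edit halves the weight, and so does every doubling of runtime. Even a modest edit therefore makes the variant universe astronomically less likely.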

So with high probability, your life essentially has to be this way, with all of its ups and downs. Your life is not insignificant. It seems to be an indispensable part of the grand scheme of things.[4]

This is compatible with religions claiming that “all is one,” “everything is connected to everything.” May this thought lift you up in times of frustration.

Footnotes:

1. A recent KurzweilAI news article mentioned somewhat related ideas by Max Tegmark (1997/1998). How does Schmidhuber’s approach differ?

“My paper on all computable universes called ‘A computer scientist’s view of life, the universe, and everything’ got submitted/published in 1996/1997,” Schmidhuber told KurzweilAI.

“Back then, Max also was based in Munich (at LMU). He put forth this somewhat vague and not really formally well-defined notion of a mathematics-based ensemble of universes.

“He assumed a uniform prior distribution on this ensemble, which unfortunately cannot even exist, as there is no uniform distribution on countably infinite things. Over the years, Max and I had quite a few little chats about this :-) . I think a mathematical analysis of this type really must focus on the formally well-defined, limit-computable mathematical structures/universes.

“Max also completely ignores computation time, while the talk above is all about computation time, which makes a big difference between easy-to-compute and hard-to-compute universes, and greatly affects their probabilities, and thus the most likely futures of observers inhabiting them. I also addressed such differences in an additional 2000 paper on all formally describable universes (and also in the 2012 survey paper for H. Zenil’s book, A Computable Universe).

“I also wrote that I suspect my brother Christof Schmidhuber is the real reason why such ideas emerged in Munich. At the age of 17 he declared that the universe is the sum of all math, inhabited by observers who are mathematical substructures (private communication, Munich, 1981).

“As he went on to become a theoretical physicist at LMU Munich, Caltech, Princeton, and CERN, discussions with him about the relation between superstrings and bitstrings became a source of inspiration for writing both the first paper and later ones based on computational complexity theory, which seems to provide the natural setting for his more math-oriented ideas (private communication, Munich 1981-86; Caltech 1987-93; Princeton 1994-96; Berne/Geneva 1997–; compare his notion of ‘mathscape’).”
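The remark that there is no uniform distribution on a countably infinite set follows in one line from countable additivity. A short derivation, with the enumeration x_1, x_2, … of the set taken as given:

```latex
\begin{aligned}
&\text{Suppose } P(x_n) = c \text{ for all } n \in \mathbb{N}. \text{ Countable additivity gives}\\
&\sum_{n=1}^{\infty} P(x_n) = \lim_{N \to \infty} N c =
  \begin{cases} 0, & c = 0,\\ \infty, & c > 0, \end{cases}
  \qquad \text{so } \sum_{n} P(x_n) \neq 1.\\
&\text{A non-uniform choice such as } P(x_n) = 2^{-n} \text{ does work: }
  \sum_{n=1}^{\infty} 2^{-n} = 1.
\end{aligned}
```

This is why priors over programs or universes are typically non-uniform and shrink with description length.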

2. The preprint of a recent overview paper by Schmidhuber includes pseudocode (a simplified generic version) for the ten lines of code mentioned in the talk (see also slides):

FAST Algorithm

```
for i := 1, 2, ... do
    Run each program p with l(p) ≤ i for at most 2^(i−l(p)) steps and reset storage modified by p
end for
```

[here l(p) denotes the length of program p, a bitstring]

Schmidhuber explains: “This is essentially a variant of Leonid Levin’s universal search (1973), but without the search aspect. The code systematically lists and runs all possible programs in interleaving fashion. It can be shown that it computes each particular universe as quickly as this universe’s (typically unknown) fastest program, save for a constant factor that does not depend on the universe size.

“From this asymptotically optimal method, we can derive an a priori probability distribution on possible universes called the Speed Prior. It reflects the fastest way of describing objects, not necessarily the shortest. (BTW, note that any general search in program space for the solution to a sufficiently complex problem will create many inhabited universes as byproducts.)”
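The FAST schedule itself is easy to run on a toy machine. In this minimal sketch, the machine is hypothetical: a program simply outputs its own bits cyclically, one bit per step. A real implementation would enumerate programs for a universal machine. The empty program is excluded, and storage is “reset” by starting every run from scratch. The enumeration is exponential, so only tiny phases are practical.

```python
from itertools import product


def programs_of_length(n: int):
    """All bitstrings of length n, as strings such as '0110'."""
    return ("".join(bits) for bits in product("01", repeat=n))


def toy_run(program: str, steps: int) -> str:
    """Hypothetical toy machine: output the program's own bits cyclically,
    one bit per step, starting from fresh state on every call."""
    return "".join(program[s % len(program)] for s in range(steps))


def fast(max_phase: int):
    """Yield (phase, program, output) following the FAST schedule: in phase i,
    every program p with 1 <= l(p) <= i runs for 2**(i - l(p)) steps."""
    for i in range(1, max_phase + 1):
        for length in range(1, i + 1):
            for p in programs_of_length(length):
                yield i, p, toy_run(p, 2 ** (i - length))


for phase, program, output in fast(3):
    print(phase, program, output)
```

Each length class receives the same budget in a phase (2^l programs times 2^(i−l) steps each). Phase i therefore costs i·2^i steps in total, while any single program’s share doubles from one phase to the next.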

3. Assume you are running computations for all universes in parallel, says Schmidhuber. Some contain you at a given time. So among those universes computed so far that contain you, which are the most likely ones; that is, what’s your most likely future? In a Bayesian framework, the Speed Prior permits non-trivial answers to questions of this type.

4. “Because most likely the universe to which you owe your current existence has a high a priori probability,” explains Schmidhuber. “Other possible variants of your life are less likely because they are harder to compute, even by the optimal method.”

The insights mentioned in this talk were first published between 1996 and 2000, and further popularized in the new millennium. Detailed mathematical papers as well as popular high-level summaries can be downloaded from Schmidhuber’s overview site on all computable universes.

For information on a Master’s Degree in Artificial Intelligence through courses taught by Schmidhuber and colleagues, visit this site: Master’s Degree in Informatics with a Major in Intelligent Systems

For new jobs for postdocs and PhD students in Schmidhuber’s research group, visit this site: http://www.idsia.ch/~juergen/eu2013.html