Researchers decipher how faces are encoded in the brain

June 9, 2017

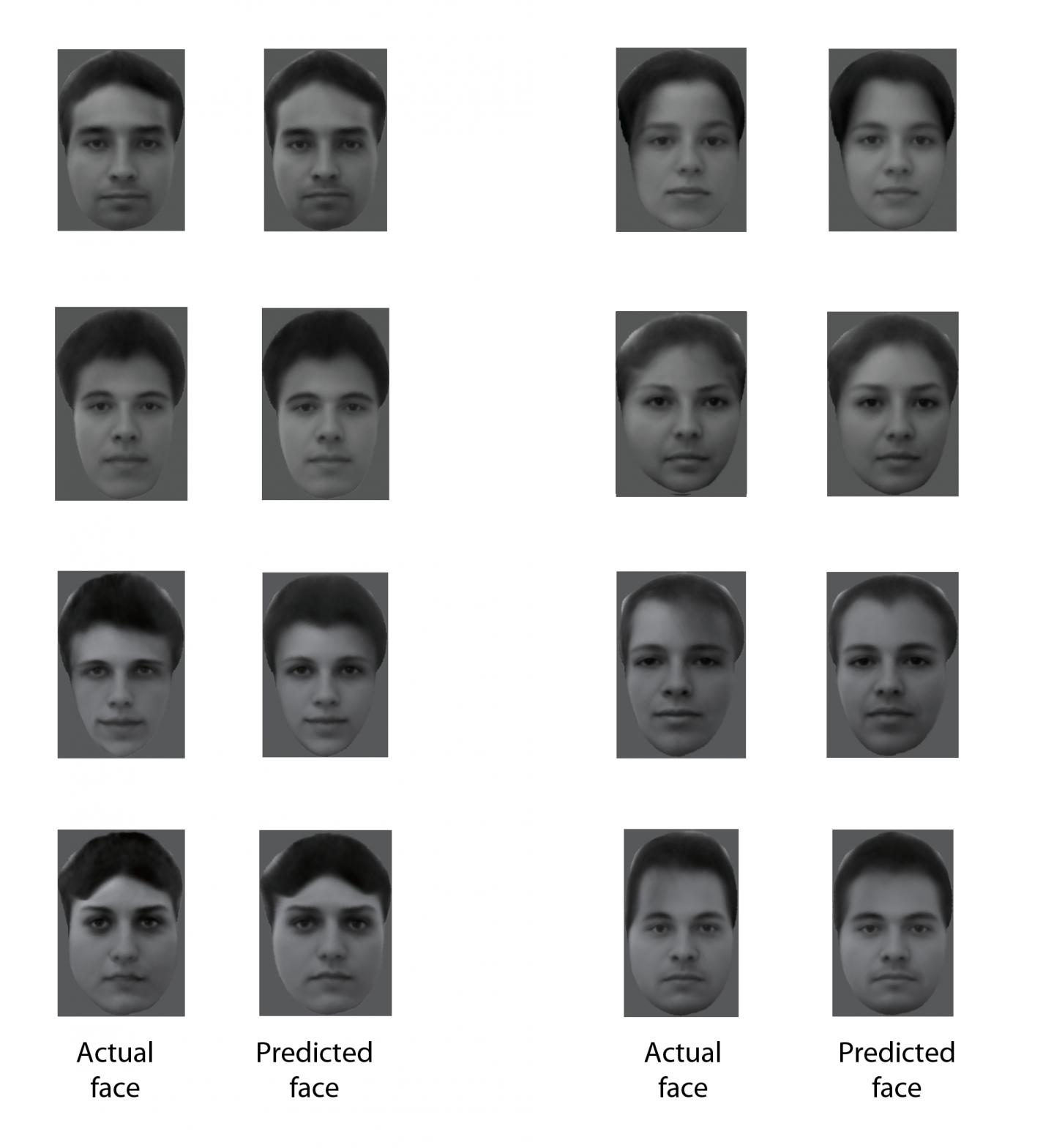

This figure shows eight different real faces that were presented to a monkey, together with reconstructions made by analyzing electrical activity from 205 neurons recorded while the monkey was viewing the faces. (credit: Doris Tsao)

In a paper published (open access) June 1 in the journal Cell, researchers report that they have cracked the code for facial identity in the primate brain.

“We’ve discovered that this code is extremely simple,” says senior author Doris Tsao, a professor of biology and biological engineering at the California Institute of Technology. “We can now reconstruct a face that a monkey is seeing by monitoring the electrical activity of only 205 neurons in the monkey’s brain. One can imagine applications in forensics where one could reconstruct the face of a criminal by analyzing a witness’s brain activity.”

The researchers previously identified the six “face patches,” general areas of the primate and human brain that are responsible for identifying faces, all located in the inferior temporal (IT) cortex. They also found that these areas are packed with specific nerve cells that fire action potentials much more strongly when the animal sees faces than when it sees other objects. They called these neurons “face cells.”

Previously, some experts in the field believed that each face cell (a.k.a. “grandmother cell”) in the brain represents a specific face, but this presented a paradox, says Tsao, who is also a Howard Hughes Medical Institute investigator. “You could potentially recognize 6 billion people, but you don’t have 6 billion face cells in the IT cortex. There had to be some other solution.”

The researchers found instead that each face cell represents not a specific identity but a specific axis within a multidimensional space, which they call the “face space.” These axes can combine in different ways to create every possible face. In other words, there is no “Jennifer Aniston” neuron.

The clinching piece of evidence: the researchers could create a large set of faces that looked extremely different, but which all caused the cell to fire in exactly the same way. “This was completely shocking to us — we had always thought face cells were more complex. But it turns out each face cell is just measuring distance along a single axis of face space, and is blind to other features,” Tsao says.
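The "different faces, same response" result is what a single-axis code predicts: any change to a face that is perpendicular to a cell's axis leaves the projection, and so the firing rate, unchanged. The sketch below illustrates this with NumPy under simple assumptions. The random axis, the `gain` and `baseline` parameters, and the function names are hypothetical and are not taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
N_DIMS = 50  # dimensionality of the face space described in the article

# Hypothetical preferred axis for one model face cell, scaled to unit length.
axis = rng.normal(size=N_DIMS)
axis /= np.linalg.norm(axis)

def response(face, axis, gain=1.0, baseline=0.0):
    """Model firing rate: proportional to the projection of the face onto the axis."""
    return gain * (face @ axis) + baseline

def same_response_variant(face, axis, rng, scale=3.0):
    """Return a face that differs from `face` only in directions orthogonal to `axis`."""
    delta = rng.normal(scale=scale, size=face.shape)
    delta -= (delta @ axis) * axis  # remove the component along the cell's axis
    return face + delta

face = rng.normal(size=N_DIMS)
variants = [same_response_variant(face, axis, rng) for _ in range(5)]

# The variants lie far from the original face, yet the model cell responds identically.
print([float(np.linalg.norm(v - face)) for v in variants])
print([float(response(v, axis)) for v in variants], float(response(face, axis)))
```

In this toy model the variants differ from the original in 49 of the 50 dimensions, which is why they can look very different while driving the cell equally.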

AI applications

“The way the brain processes this kind of information doesn’t have to be a black box,” first author Le Chang explains. “Although there are many steps of computations between the image we see and the responses of face cells, the code of these face cells turned out to be quite simple once we found the proper axes. This work suggests that other objects could be encoded with similarly simple coordinate systems.”

The research also has artificial intelligence applications. “This could inspire new machine learning algorithms for recognizing faces,” Tsao adds. “In addition, our approach could be used to figure out how units in deep networks encode other things, such as objects and sentences.”

This research was supported by the National Institutes of Health, the Howard Hughes Medical Institute, the Tianqiao and Chrissy Chen Institute for Neuroscience at Caltech, and the Swartz Foundation.

* The researchers started by creating a 50-dimensional space that could represent all faces. They assigned 25 dimensions to shape, such as the distance between eyes or the width of the hairline, and 25 dimensions to nonshape-related appearance features, such as skin tone and texture.

Using macaque monkeys as a model system, the researchers inserted electrodes into the brains that could record individual signals from single face cells within the face patches. They found that each face cell fired in proportion to the projection of a face onto a single axis in the 50-dimensional face space. Knowing these axes, the researchers then developed an algorithm that could decode additional faces from neural responses.

In other words, they could now show the monkey an arbitrary new face, and recreate the face that the monkey was seeing from electrical activity of face cells in the animal’s brain. When placed side by side, the photos that the monkeys were shown and the faces that were recreated using the algorithm were nearly identical. Face cells from only two of the face patches (106 cells in one patch and 99 cells in another) were enough to reconstruct the faces. “People always say a picture is worth a thousand words,” Tsao says. “But I like to say that a picture of a face is worth about 200 neurons.”
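The encoding and decoding steps described above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the procedure used in the paper: the faces are random 50-dimensional vectors, the 205 model cells have random axes and baselines, the noise level is arbitrary, and both fitting and decoding use ordinary least squares. All names (`encode`, `decode`, `est_axes`, and so on) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
N_DIMS, N_CELLS = 50, 205  # figures quoted in the article

# Hypothetical model population: one axis (row) and one baseline rate per cell.
axes = rng.normal(size=(N_CELLS, N_DIMS))
baselines = rng.normal(size=N_CELLS)

def encode(faces, noise_sd=0.1):
    """Simulated firing rates: projection onto each cell's axis, plus baseline and noise."""
    faces = np.atleast_2d(faces)
    rates = faces @ axes.T + baselines
    return rates + rng.normal(scale=noise_sd, size=rates.shape)

# Step 1: estimate each cell's axis from its responses to a training set of faces.
train_faces = rng.normal(size=(2000, N_DIMS))
train_rates = encode(train_faces)
# The extra column of ones lets the fit absorb each cell's baseline rate.
design = np.hstack([train_faces, np.ones((len(train_faces), 1))])
weights, *_ = np.linalg.lstsq(design, train_rates, rcond=None)
est_axes, est_baselines = weights[:-1].T, weights[-1]

# Step 2: decode a new face by solving the fitted linear model for its coordinates.
def decode(rates):
    coords, *_ = np.linalg.lstsq(est_axes, rates - est_baselines, rcond=None)
    return coords

new_face = rng.normal(size=N_DIMS)       # a face not used for fitting
decoded = decode(encode(new_face)[0])    # reconstruct it from 205 noisy rates
print(float(np.abs(decoded - new_face).max()))
```

Because 205 cells give more equations than the 50 unknown face coordinates, the least-squares solution is overdetermined, which is one way to see why a modest number of cells can suffice in a linear code of this kind.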


Abstract of The Code for Facial Identity in the Primate Brain

Primates recognize complex objects such as faces with remarkable speed and reliability. Here, we reveal the brain’s code for facial identity. Experiments in macaques demonstrate an extraordinarily simple transformation between faces and responses of cells in face patches. By formatting faces as points in a high-dimensional linear space, we discovered that each face cell’s firing rate is proportional to the projection of an incoming face stimulus onto a single axis in this space, allowing a face cell ensemble to encode the location of any face in the space. Using this code, we could precisely decode faces from neural population responses and predict neural firing rates to faces. Furthermore, this code disavows the long-standing assumption that face cells encode specific facial identities, confirmed by engineering faces with drastically different appearance that elicited identical responses in single face cells. Our work suggests that other objects could be encoded by analogous metric coordinate systems.