Where and when the brain recognizes, categorizes an object

January 28, 2014

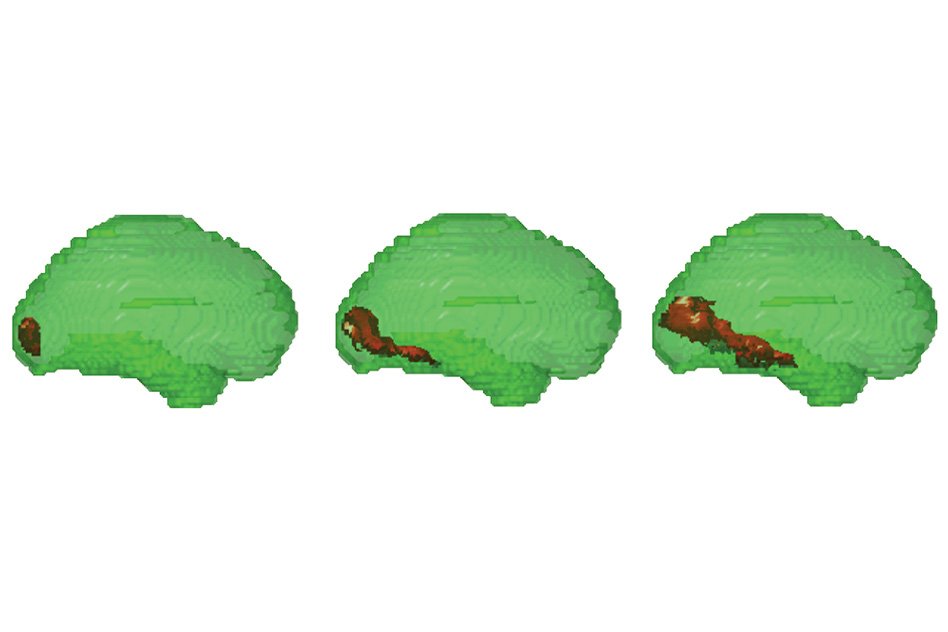

MIT researchers combined fMRI and MEG data to reveal which parts of the brain are active shortly after an image is seen. At around 60 milliseconds, only early visual cortex in the back of the brain was active (image at left). Then, activity spread to brain regions involved in later visual processing until the inferior temporal cortex was activated (image at right). This brain region represents complex shapes and categories of objects. (Credit: Radoslaw Martin Cichy, Dimitrios Pantazis, Aude Oliva)

MIT researchers scanned individuals’ brains as they looked at different images and were able to pinpoint, to the millisecond, when the brain recognizes and categorizes an object, and where these processes occur.

“This method gives you a visualization of ‘when’ and ‘where’ at the same time. It’s a window into processes happening at the millisecond and millimeter scale,” says Aude Oliva, a principal research scientist in MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL).

Oliva is the senior author of a paper describing the findings in the Jan. 26 issue of Nature Neuroscience. Lead author of the paper is CSAIL postdoc Radoslaw Cichy. Dimitrios Pantazis, a research scientist at MIT’s McGovern Institute for Brain Research, is also an author of the paper.

Combining fMRI (where) and MEG (when)

Until now, scientists have been able to observe the location or timing of human brain activity at high resolution, but not both, because different imaging techniques are not easily combined.

The most commonly used type of brain scan, functional magnetic resonance imaging (fMRI), measures changes in blood flow, revealing which parts of the brain are involved in a particular task. However, it works too slowly to keep up with the brain’s millisecond-by-millisecond dynamics.

Another imaging technique, known as magnetoencephalography (MEG), uses an array of hundreds of sensors encircling the head to measure magnetic fields produced by neuronal activity in the brain. These sensors offer a dynamic portrait of brain activity over time, down to the millisecond, but cannot pinpoint the precise location of the signals.

To combine the time and location information generated by these two scanners, the researchers used a computational technique called representational similarity analysis, which relies on the fact that two similar objects (such as two human faces) that provoke similar signals in fMRI will also produce similar signals in MEG. This method has been used before to link fMRI with recordings of neuronal electrical activity in monkeys, but the MIT researchers are the first to use it to link fMRI and MEG data from human subjects.
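The idea behind representational similarity analysis can be sketched in a few lines of Python. This is a minimal illustration on synthetic data, not the researchers' actual pipeline. The array shapes, the function names (`rdm`, `spearman`, `meg_fmri_similarity`, `simulate`), the use of 1 minus Pearson correlation as the dissimilarity measure, and the Spearman comparison are all assumptions made for the example. For each MEG time point, the sketch builds a table of pairwise dissimilarities between images and checks how well it matches the same table computed from fMRI voxels in one brain region.

```python
import numpy as np


def rdm(patterns):
    """Pairwise dissimilarities (1 - Pearson r) between image response patterns.

    patterns: array of shape (n_images, n_features).
    Returns the upper triangle of the dissimilarity matrix as a 1-D vector.
    """
    corr = np.corrcoef(patterns)
    upper = np.triu_indices(patterns.shape[0], k=1)
    return 1.0 - corr[upper]


def _ranks(x):
    """Ranks 0..n-1 (ties broken arbitrarily, fine for continuous data)."""
    order = np.argsort(x)
    ranks = np.empty(len(x), dtype=float)
    ranks[order] = np.arange(len(x))
    return ranks


def spearman(a, b):
    """Spearman rank correlation, computed as Pearson r on the ranks."""
    return np.corrcoef(_ranks(a), _ranks(b))[0, 1]


def meg_fmri_similarity(meg, fmri_roi):
    """Correlate the MEG dissimilarity structure at each time with one fMRI region.

    meg: (n_times, n_images, n_sensors); fmri_roi: (n_images, n_voxels).
    Returns an array of shape (n_times,).
    """
    fmri_rdm = rdm(fmri_roi)
    return np.array([spearman(rdm(meg_t), fmri_rdm) for meg_t in meg])


def simulate(seed=0, n_images=20, n_times=30, peak=12):
    """Synthetic data (hypothetical): a shared image structure that appears
    in the MEG sensors only around time index `peak`."""
    rng = np.random.default_rng(seed)
    latent = rng.standard_normal((n_images, 8))  # shared image structure
    fmri_roi = latent @ rng.standard_normal((8, 80))
    fmri_roi += rng.standard_normal(fmri_roi.shape)  # measurement noise
    to_sensors = rng.standard_normal((8, 60))
    gain = np.exp(-((np.arange(n_times) - peak) ** 2) / (2 * 1.5 ** 2))
    meg = gain[:, None, None] * (latent @ to_sensors)[None, :, :]
    meg = meg + rng.standard_normal(meg.shape)  # measurement noise
    return meg, fmri_roi, peak


if __name__ == "__main__":
    meg, fmri_roi, peak = simulate()
    similarity = meg_fmri_similarity(meg, fmri_roi)
    print("simulated peak index:", peak)
    print("time index of best MEG-fMRI match:", int(np.argmax(similarity)))
```

Because the comparison is made between dissimilarity tables rather than raw signals, the two measurements never need to share units or sensors. Repeating the calculation for several brain regions would give one time course per region, which is how a "when" signal can be attached to a "where" signal.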

In the study, the researchers scanned 16 human volunteers as they looked at a series of 92 images, including faces, animals, and natural and manmade objects. Each image was shown for half a second.

“We wanted to measure how visual information flows through the brain. It’s just pure automatic machinery that starts every time you open your eyes, and it’s incredibly fast,” Cichy says. “This is a very complex process, and we have not yet looked at higher cognitive processes that come later, such as recalling thoughts and memories when you are watching objects.”

Each subject underwent the test multiple times — twice in an fMRI scanner and twice in an MEG scanner — giving the researchers a huge set of data on the timing and location of brain activity. All of the scanning was done at the Athinoula A. Martinos Imaging Center at the McGovern Institute.

Millisecond timeline

By analyzing this data, the researchers produced a timeline of the brain’s object-recognition pathway that is very similar to results previously obtained by recording electrical signals in the visual cortex of monkeys, a technique that is extremely accurate but too invasive to use in humans.

About 50 milliseconds after subjects saw an image, visual information entered a part of the brain called the primary visual cortex, or V1, which recognizes basic elements of a shape, such as whether it is round or elongated. The information then flowed to the inferotemporal cortex, where the brain identified the object as early as 120 milliseconds. Within 160 milliseconds, all objects had been classified into categories such as plant or animal.

Mary Potter, an MIT professor of brain and cognitive sciences, previously found that the human brain can correctly identify images seen for as little as 13 milliseconds, as reported on KurzweilAI*. “Potter believes one reason for the subjects’ better performance in this study may be that they were able to practice fast detection as the images were presented progressively faster, even though each image was unfamiliar,” KurzweilAI noted. “The subjects also received feedback on their performance after each trial, allowing them to adapt to this incredibly fast presentation.”

The MIT team’s strategy “provides a rich new source of evidence on this highly dynamic process,” says Nikolaus Kriegeskorte, a principal investigator in cognition and brain sciences at Cambridge University.

“The combination of MEG and fMRI in humans is no surrogate for invasive animal studies with techniques that simultaneously have high spatial and temporal precision, but Cichy et al. come closer to characterizing the dynamic emergence of representational geometries across stages of processing in humans than any previous work. The approach will be useful for future studies elucidating other perceptual and cognitive processes,” says Kriegeskorte, who was not part of the research team.

The MIT researchers are now using representational similarity analysis to study the accuracy of computer models of vision by comparing brain scan data with the models’ predictions of how vision works.

Using this approach, scientists should also be able to study how the human brain analyzes other types of information such as motor, verbal, or sensory signals, the researchers say. It could also shed light on processes that underlie conditions such as memory disorders or dyslexia, and could benefit patients suffering from paralysis or neurodegenerative diseases.

“This is the first time that MEG and fMRI have been connected in this way, giving us a unique perspective,” Pantazis says. “We now have the tools to precisely map brain function both in space and time, opening up tremendous possibilities to study the human brain.”

The research was funded by the National Eye Institute, the National Science Foundation, and a Feodor Lynen Research Fellowship from the Humboldt Foundation.

* KurzweilAI has asked both Potter and Oliva to comment on the new finding.

Abstract of Nature Neuroscience paper

A comprehensive picture of object processing in the human brain requires combining both spatial and temporal information about brain activity. Here we acquired human magnetoencephalography (MEG) and functional magnetic resonance imaging (fMRI) responses to 92 object images. Multivariate pattern classification applied to MEG revealed the time course of object processing: whereas individual images were discriminated by visual representations early, ordinate and superordinate category levels emerged relatively late. Using representational similarity analysis, we combined human fMRI and MEG to show content-specific correspondence between early MEG responses and primary visual cortex (V1), and later MEG responses and inferior temporal (IT) cortex. We identified transient and persistent neural activities during object processing with sources in V1 and IT. Finally, we correlated human MEG signals to single-unit responses in monkey IT. Together, our findings provide an integrated space- and time-resolved view of human object categorization during the first few hundred milliseconds of vision.